Agent OS: the concept shaping the new stack layer

Updated: 2026-05-03

Agent OS is a label that has come up in more and more conversations since mid-2024. It describes not a specific product but a category taking shape: the layer that sits between agentic applications and language models to handle planning, context management, persistent memory, fault tolerance, and isolation. Three or four projects already claim the label with substance, several products are attempting it, and a research base is starting to formalize the concept. It is time to review what is real behind the term.

Key takeaways

- The AIOS paper (Rutgers, March 2024) coins the term with rigor: autonomous agents face the same classic problems an operating system had to solve for processes.

- An Agent OS consists of four pieces at varying maturity: request scheduler, context management, persistent memory, and isolation.

- Existing projects (AIOS 0.2, MemGPT/Letta, Swarm, LangGraph) cover subsets, not the full stack.

- Three unsolved problems slow consolidation: abstraction cost, lack of comparable benchmarks, and absence of a governance model.

- For teams building today: adopt pieces as needed, not a complete framework from day one.

Where the term comes from

The AIOS paper, published by Kai Mei and a Rutgers University group in March 2024, coins the term with rigor. Its thesis is that autonomous agents based on language models share the classic problems an operating system had to solve for processes:

- Resource contention.

- Task scheduling.

- Context isolation.

- Memory management.

- Access control.

The proposal is not a vague analogy but a concrete design with separate modules for each of those functions.

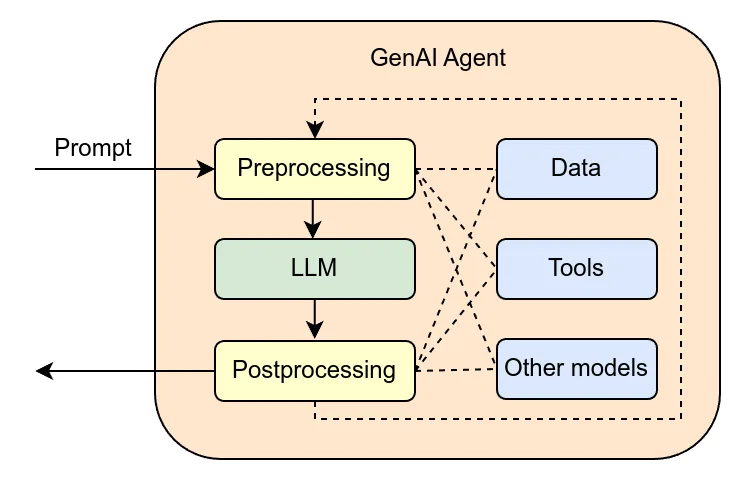

What is interesting in the paper is not the code, which is more academic than production-ready, but the ontology. Before AIOS, an agent platform meant something like LangChain: libraries to orchestrate model calls, tools, short-term memory, and little else. After AIOS, the conversation clearly separates the application layer from what we could call the agentic kernel: the part you do not rewrite per agent but share across agents.

That separation is useful because the application layer evolves quickly and the kernel should evolve slowly.

What pieces compose an Agent OS

The projects claiming the label today agree on four pieces:

- Request scheduler. When multiple agents share compute, you must decide order and priority: from simple FIFO to strategies that favor agents that are behind on their latency targets or penalize those consuming more tokens.

- Context management. A long-running agent can generate conversations that exceed the model's context window. More sophisticated systems keep structured state with pinned sections, rotating summaries, and on-demand retrieval from a vector store.

- Persistent memory. Unlike context, it survives multiple runs and lets an agent learn from past interactions. Implementing it well is hard: you must decide what is remembered, with what structure, how it is indexed, and how it is purged when information becomes stale.

- Isolation. If several agents share a process, a failure in one must not affect the others. If an agent runs tools that touch the file system or the network, it should do so in a bounded environment. This is where Firecracker, Kata Containers, or gVisor come in: strong isolation requires operating-system primitives, not just language-level ones.
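To make the scheduler piece concrete, here is a minimal sketch of a priority queue that pushes back agents that have consumed more tokens. It is not taken from AIOS or any other project: `TokenAwareScheduler`, `token_penalty`, and the scoring formula are all hypothetical names and choices made for illustration.

```python
import heapq
import itertools
from dataclasses import dataclass, field


@dataclass(order=True)
class _Entry:
    score: float                       # lower score is served first
    seq: int                           # tie-breaker: FIFO among equal scores
    agent_id: str = field(compare=False)
    prompt: str = field(compare=False)


class TokenAwareScheduler:
    """Hypothetical scheduler that penalizes agents by tokens already consumed."""

    def __init__(self, token_penalty: float = 0.001):
        self.token_penalty = token_penalty  # assumed weight, tune per workload
        self._heap: list[_Entry] = []
        self._seq = itertools.count()
        self._tokens_used: dict[str, int] = {}

    def record_usage(self, agent_id: str, tokens: int) -> None:
        """Accumulate the tokens an agent has consumed so far."""
        self._tokens_used[agent_id] = self._tokens_used.get(agent_id, 0) + tokens

    def submit(self, agent_id: str, prompt: str, base_priority: float = 0.0) -> None:
        """Queue a request; the score is fixed at submission time."""
        score = base_priority + self.token_penalty * self._tokens_used.get(agent_id, 0)
        heapq.heappush(self._heap, _Entry(score, next(self._seq), agent_id, prompt))

    def next_request(self):
        """Return (agent_id, prompt) for the best-scored request, or None if empty."""
        if not self._heap:
            return None
        entry = heapq.heappop(self._heap)
        return entry.agent_id, entry.prompt


scheduler = TokenAwareScheduler()
scheduler.record_usage("researcher", 5000)
scheduler.submit("researcher", "summarize paper")
scheduler.submit("coder", "fix failing test")
print(scheduler.next_request())  # ('coder', 'fix failing test')
```

With `token_penalty` set to zero the queue degrades to plain FIFO, which is the simple end of the range described above.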

The real projects that exist today

The original AIOS remains active and has released a 0.2 version in which the kernel is split from the SDK. The new architecture allows running the kernel as a remote service with applications connecting over RPC, which resembles a real operating system more closely than the initial library-packaged version did.

MemGPT has been formalizing long-term memory management since 2023 with a paging metaphor: the agent has a limited main memory that corresponds to the context window, and an unlimited secondary memory that acts as a persistent store and is paged in on demand. The project has since evolved into a full platform named Letta.
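The paging idea can be sketched in a few lines. This is not MemGPT's or Letta's API: `PagedMemory`, the whitespace token count, and the substring search are hypothetical stand-ins for a real tokenizer and a real persistent store.

```python
from collections import deque


class PagedMemory:
    """Hypothetical sketch: bounded main memory plus an unbounded archive."""

    def __init__(self, max_main_tokens: int):
        self.max_main_tokens = max_main_tokens
        self.main: deque[str] = deque()   # messages that fit in the context window
        self.archive: list[str] = []      # evicted messages; stands in for a persistent store

    @staticmethod
    def _tokens(text: str) -> int:
        # Crude whitespace count, an assumption in place of a real tokenizer.
        return len(text.split())

    def _main_tokens(self) -> int:
        return sum(self._tokens(m) for m in self.main)

    def add(self, message: str) -> None:
        """Append a message, paging out the oldest ones if the budget is exceeded."""
        self.main.append(message)
        while self._main_tokens() > self.max_main_tokens and len(self.main) > 1:
            self.archive.append(self.main.popleft())

    def recall(self, keyword: str, limit: int = 3) -> list[str]:
        """Return archived messages mentioning `keyword` so the caller can page them in."""
        hits = [m for m in self.archive if keyword.lower() in m.lower()]
        return hits[:limit]


memory = PagedMemory(max_main_tokens=8)
memory.add("user prefers metric units")
memory.add("deploy target is staging cluster")
memory.add("ticket closed")
print(list(memory.main))        # ['deploy target is staging cluster', 'ticket closed']
print(memory.recall("metric"))  # ['user prefers metric units']
```

A production system would replace the substring search with retrieval from an index and would let the agent itself decide when to call `recall`.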

OpenAI Swarm was introduced as an experimental framework for orchestrating agents with different specializations. Although it ships as a library, its control-transfer model and handoff pattern are effectively system primitives.
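The handoff pattern can be illustrated without any framework. The sketch below deliberately does not use Swarm's API: `Agent`, `run`, and `max_handoffs` are hypothetical names, and the handlers are plain functions standing in for model calls. A handler either returns a reply or returns another agent, in which case control transfers to it.

```python
from dataclasses import dataclass
from typing import Callable, Union


@dataclass
class Agent:
    name: str
    # Returns a reply string, or another Agent to hand control over to.
    handle: Callable[[str], Union[str, "Agent"]]


def run(agent: Agent, message: str, max_handoffs: int = 5):
    """Follow handoffs until some agent produces a reply."""
    for _ in range(max_handoffs + 1):
        result = agent.handle(message)
        if isinstance(result, Agent):
            agent = result  # control transfers to the returned agent
            continue
        return agent.name, result
    raise RuntimeError("too many handoffs")


# Stand-in handlers; a real system would call a model here.
billing = Agent("billing", lambda msg: "Refund issued.")
support = Agent("support", lambda msg: "Have you tried restarting?")
triage = Agent("triage", lambda msg: billing if "refund" in msg.lower() else support)

print(run(triage, "I want a refund"))  # ('billing', 'Refund issued.')
```

The `max_handoffs` cap is the kind of guard a kernel would enforce centrally, since two agents handing off to each other would otherwise loop forever.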

On the open-source side, LangGraph has developed a persistent state and checkpointing model that looks a lot like the memory piece of an Agent OS. The line between library and kernel is blurring.
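As a rough illustration of checkpointing in general, and not of LangGraph's actual API, the sketch below saves state to a JSON file after every step so that a crashed run resumes where it stopped. `JsonCheckpointer`, `run_steps`, and `thread_id` are hypothetical names chosen for this example.

```python
import json
import os
import tempfile


class JsonCheckpointer:
    """Hypothetical checkpoint store: one JSON file, one entry per thread."""

    def __init__(self, path: str):
        self.path = path

    def _load_all(self) -> dict:
        if not os.path.exists(self.path):
            return {}
        with open(self.path, encoding="utf-8") as f:
            return json.load(f)

    def load(self, thread_id: str):
        return self._load_all().get(thread_id)

    def save(self, thread_id: str, state: dict) -> None:
        data = self._load_all()
        data[thread_id] = state
        tmp = self.path + ".tmp"
        with open(tmp, "w", encoding="utf-8") as f:
            json.dump(data, f)
        os.replace(tmp, self.path)  # swap the file in one step to avoid partial writes


def run_steps(checkpointer: JsonCheckpointer, thread_id: str, steps: list) -> dict:
    """Run steps in order, checkpointing after each; completed steps are skipped on resume."""
    state = checkpointer.load(thread_id) or {"next_step": 0, "results": []}
    for i in range(state["next_step"], len(steps)):
        state["results"].append(steps[i]())
        state["next_step"] = i + 1
        checkpointer.save(thread_id, state)
    return state


path = os.path.join(tempfile.mkdtemp(), "checkpoints.json")
store = JsonCheckpointer(path)
print(run_steps(store, "demo", [lambda: "plan", lambda: "draft"]))
# {'next_step': 2, 'results': ['plan', 'draft']}
```

Calling `run_steps` again with the same `thread_id` does no work, because the saved `next_step` already points past the last step.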

The practical problems still unsolved

Every project acknowledges three problems, and none has clearly solved them:

- Abstraction cost. Every layer added between application and model adds latency and complexity. If your agent makes three model calls per response, going through a scheduler, a context manager, and a persistent memory store on each call can add 500 ms without visible value.

- Evaluation. Comparing one Agent OS to another is hard because there are no standard benchmarks, and existing ones measure different things: task completion rate, cost per task, instruction fidelity, robustness against tool failures.

- Governance. An Agent OS decides important things: which agent reaches which resource, how much compute it consumes, which data it touches. Those decisions have security, privacy, and compliance implications, and today they are treated ad hoc.
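On the abstraction-cost point, one way to check whether a layer is worth its latency is to measure it before adopting it. The sketch below is a minimal timing helper under stated assumptions: `timed` and `overhead_s` are hypothetical names, and the `time.sleep` calls are placeholders for real scheduler, context, and memory work.

```python
import time
from collections import defaultdict
from contextlib import contextmanager

# Accumulated wall-clock seconds spent in each layer (hypothetical layer names).
overhead_s: dict[str, float] = defaultdict(float)


@contextmanager
def timed(layer: str):
    """Add the time spent inside the `with` block to the given layer's total."""
    start = time.perf_counter()
    try:
        yield
    finally:
        overhead_s[layer] += time.perf_counter() - start


# Three model calls per response, each passing through three layers.
for _ in range(3):
    with timed("scheduler"):
        time.sleep(0.001)   # placeholder for queueing work
    with timed("context_manager"):
        time.sleep(0.002)   # placeholder for summarization or retrieval
    with timed("memory_store"):
        time.sleep(0.002)   # placeholder for a store round trip

for layer, seconds in overhead_s.items():
    print(f"{layer}: {seconds * 1000:.1f} ms")
```

Comparing these totals against the model call latency itself shows quickly whether a layer is noise or a real cost.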

What someone building today should look for

For a team starting to build serious agentic applications, the question is not whether it needs an Agent OS but which of its pieces it needs. Few use cases need all four components from day one. Most follow this progression:

- Start with decent context management.

- Add persistent memory when single sessions stop being enough.

- Add scheduling when parallel execution shows up.

- Consider isolation only when the threat model justifies it.

This incremental approach is healthier than adopting a full Agent OS from the start. Projects that pick very complete frameworks from day one often end up fighting with abstractions they do not understand for problems they do not yet have.

Conclusion

Thinking of Agent OS as an analytic lens, rather than a product to pick, helps organize the right questions: where is the scheduling, where is the memory, what isolation do I need, how do I manage context. For large teams with several agentic products, adopting a shared Agent OS can amortize effort; for a single product, it is better to start with small pieces and resist the temptation to import a full framework.