Enterprise agent governance: the controls that are no longer optional

Actualizado: 2026-05-15

The enterprise agent conversation stopped being aspirational about twelve months ago. In April 2025 most large deployments were pilots with a human supervisor; by April 2026 we have agents executing complete back-office process steps without human approval for each action. That jump has moved governance from the quarterly committee to the daily operational control, and it has left a set of practices that are no longer optional if you want to pass the next audit or explain an incident to leadership without improvising.

Key takeaways

- Governance has dropped into engineering territory: a policy stating what cannot be done is no longer sufficient, you need a technical chain that makes it hard.

- A serious audit in 2026 walks in with five questions: agent inventory, traceability, guardrail tests, failure procedure, and legal responsibility.

- The three controls absorbing most incidents: threshold-based human review, automatic circuit breaker, and persistent shadow mode.

- The EU AI Act in full application since August 2026 requires concrete names on paper for high-risk systems.

- Minimum viable: living inventory, per-agent budget, and separation of intent and execution for irreversible actions.

From Committee to Operational Control

Until recently, AI governance at the average enterprise was a policy layer written by a committee and a model register on Confluence. It worked while AI systems were classifiers with a human at the end of the process. With agents that book flights, adjust orders, answer tickets, or modify infrastructure configuration, that documentary layer stopped being enough.

The underlying shift is that agents make decisions with real side effects: payments, state changes in systems, emails sent, infrastructure deployed. Each of those effects is a record somebody will want to audit.

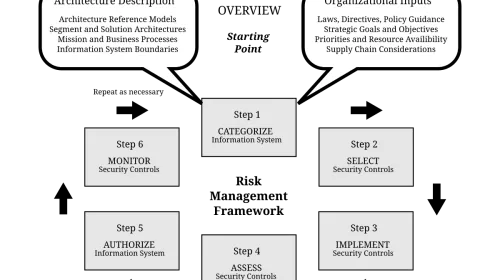

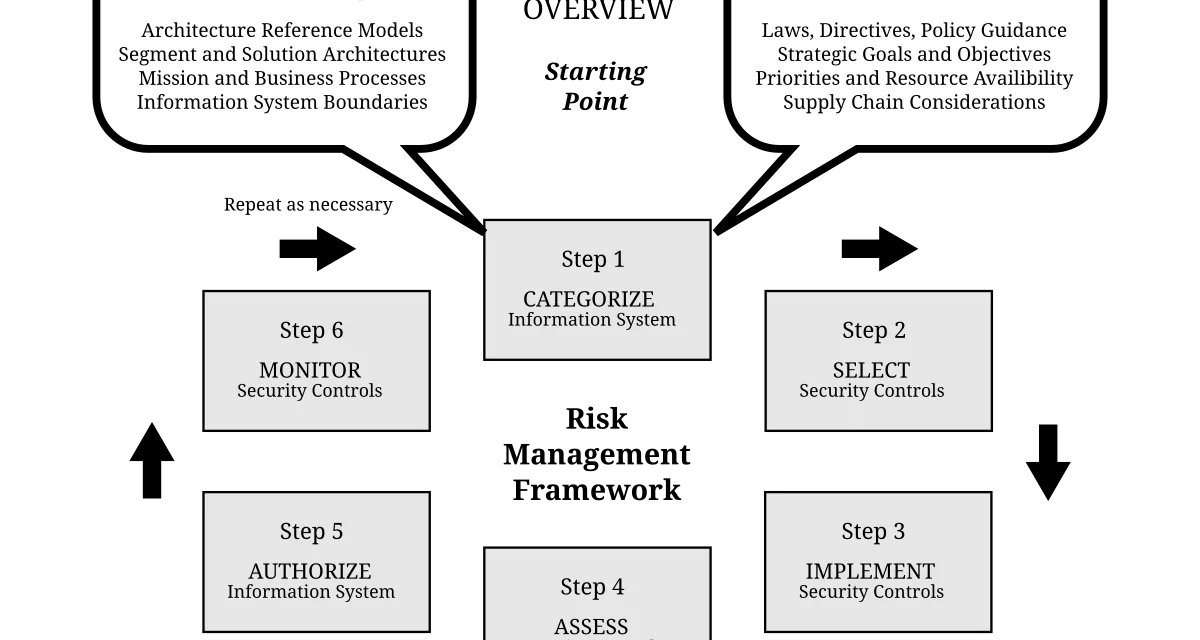

The practical consequence is that governance has dropped into engineering territory. A policy stating what cannot be done is no longer sufficient. You need a technical chain that makes forbidden actions impossible, or at least hard. Agents are, in practice, non-human identities with credentials, scope, and traceability, and the rigour we apply to service accounts must apply to them.

What a reasonable audit asks today

A serious internal audit in 2026 walks in with five questions:

- Which agents are in production, with what permissions, and who approved them? Answering this demands an agent inventory as strict as the service-account register.

- What has each agent done in the last ninety days? Granular traceability, not just the LLM call log but the full reasoning chain, invoked tools, parameters, result, and side effect, has become the most expensive deliverable to stand up and the one auditors ask about first.

- Which guardrails are active, and how do you demonstrate they work? Audits require evidence that controls are not decorative. That means periodic prompt-injection tests and review of false negatives caught manually.

- What happens when the agent fails? This is where many projects have no answer. An agent that loops, burns budget, produces toxic output, or executes an irreversible action needs a clear containment path.

- Who bears legal responsibility? The EU AI Act in full application since August 2026 requires concrete names on paper for high-risk systems. Model provider, enterprise deployer, compliance owner, and end user have distinct and separable responsibilities.

What Broke in 2025 and Left a Lesson

The past year produced a catalogue of incidents:

- Agents with corporate-email access executing instructions injected via incoming-message signatures.

- Support agents, badgered by a persistent user, escalating account permissions without real authorisation.

- Infrastructure agents, when facing ambiguity, choosing the destructive path that solved their task without preserving state.

From those incidents emerged defensive patterns now standard:

- Strict isolation between untrusted content and sensitive tools: any agent processing third-party email should not be able to move money or grant permissions in the same context.

- Temporal scope restriction: the agent holds broad permissions during its task window and drops to minimum afterwards.

- Separation of intent and execution: one chain proposes and another verifies before acting, mandatory for irreversible actions.

AI incident postmortems document these same patterns with concrete cases.

Agent inventory and minimum viable compliance

The minimum a responsible company runs today starts with a central register. Each production agent needs:

- Unique identifier and function description.

- Base model with version.

- Exposed tools and affected systems.

- Business owner and technical owner.

- Last security-review date.

Reasonable policies add an economic guardrail: every agent has a call budget and a monetary budget per unit of time. A looping agent in 2026 costs real money in tokens and in actions on third-party systems, and without a technical limit the risk is unbounded.

agent_id: finance-reconciliation-001

owner_business: finance.ap@company.com

owner_technical: ai-platform@company.com

model: claude-opus-4-7

scope:

tools: [ledger.read, sap.invoice.read, email.draft]

budget_calls_per_day: 2000

budget_usd_per_day: 40

systems: [sap-prod, exchange-corp]

controls:

human_review: actions > 1000 usd

circuit_breaker: errors > 5% in 10 min

audit_log: warehouse.agent_events

last_security_review: 2026-03-12Guardrails absorbing most incidents

After a year in production, three controls account for the bulk of saves:

- Threshold-based mandatory human review: any action above an economic, scope, or reversibility threshold requires explicit human approval regardless of the agent’s declared confidence.

- Automatic circuit breaker on error rate or anomalous behaviour: if the agent fails too often or drifts from its historical pattern, it suspends itself and alerts.

- Persistent shadow mode: most new agent changes spend weeks running in parallel with the human, without side effects, before being granted real autonomy.

Continuous evaluation has professionalised too. Regression tests on known cases, adversarial prompt-injection tests, and failure drills are part of the standard deployment cycle. For the evaluation framework detail, see production agent evaluations.

My Read

Agent governance in 2026 is not a new problem; it is the classic privileged-identity problem with an extra degree of unpredictability. Companies that had fewer incidents are the ones that treated their agents as service accounts with superpowers, not as human users and not as traditional applications.

If I had to pick three things to start with:

- A living inventory.

- Threshold-based human review.

- An anomaly circuit breaker.

Everything else builds on those pillars without surprises. Most of all, resist the temptation to treat governance as friction to reduce: it is the only layer that separates a useful agent from an incident waiting to happen.