Get more out of your Apple Silicon Mac with oMLX: local LLMs without NVIDIA

Table of contents

- Key takeaways

- Why Apple Silicon needs its own runtime

- What oMLX adds on top of mlx-lm

- Getting it running

- Pragmatic comparison (May 2026)

- Verdict by scenario

- Recommended setup by RAM tier (M4 and M5)

- Caveats to keep in mind

- Who this guide is for

- Full setup on an M5 Max 40-core Mac with 128 GB (Claude Code and multi-LLM)

- Install and first launch

- Change the default API key

- Server settings for 128 GB of unified memory

- Recommended model stack for multi-LLM

- Point Claude Code at the local endpoint

- Other integrations (Codex, OpenCode, OpenClaw)

- Benchmark the actual throughput

- End-to-end verification

Key takeaways

- oMLX is an LLM inference server built on MLX, the framework Apple shipped in December 2023 for Apple Silicon. It adds continuous batching, two-tier KV cache (RAM + SSD), and an OpenAI- and Anthropic-compatible API.

- Current release: 0.3.8 (30 April 2026), Apache 2.0 licensed, 14.3k GitHub stars, 71 releases. Young project, fast release cadence.

- On any M1-M5 Mac running macOS 15+, it beats Ollama as soon as you need real concurrency or want to point your app at the OpenAI endpoint without rewriting the client.

- When it is not for you: if you need Linux + NVIDIA portability, enterprise features (built-in auth, OTEL, multi-tenant), or already have a large GGUF collection, Ollama still wins.

Why Apple Silicon needs its own runtime

Unified memory is the material difference between an M-series Mac and a box with an NVIDIA GPU. On Apple Silicon, CPU, GPU and Neural Engine share the same DRAM; on an RTX 4090 you have 24 GB of separate VRAM and tensors travel over PCIe.

For an LLM server, that changes the question. It stops being “how many gigabytes fit in VRAM” and becomes “how do I make use of unified memory and the Apple Matrix coprocessors without writing Metal kernels by hand”.

CUDA does not apply. ROCm does not either. Apple shipped MLX as the official answer: a NumPy/PyTorch-style array framework that compiles down to Metal and is built around unified memory. On top of MLX, mlx-lm (maintained under Apple's ml-explore organization) runs LLM weights converted to the MLX format (Qwen, Llama, Mistral, GLM, DeepSeek), with 4-bit and 8-bit quantizations tuned for the M-series.

mlx-lm on its own is a library: one model, one request at a time, no real batching. For anything beyond chatting with yourself, the server layer is missing. That is where oMLX fits.

What oMLX adds on top of mlx-lm

oMLX describes itself as “LLM inference, optimized for your Mac — continuous batching and tiered KV caching, managed directly from your menu bar”. The pieces that matter day to day:

- Continuous batching. When a client requests tokens, oMLX does not wait for that request to finish before serving the next one: it interleaves requests at the token level. If three users chat with the same model at once, all three advance in parallel instead of queueing. Without this piece, an M4 Max feeding two terminals behaves like a phone taking turns.

- Two-tier KV cache (hot RAM + cold SSD) with prefix sharing. The KV cache is memory of tokens already processed. oMLX keeps recent ones in RAM and parks the colder ones compressed on SSD, sharing prefixes across requests that start the same way (the classic “You are a helpful assistant…”). In practice you can keep long context windows without blowing through available RAM.

- Multi-model with LRU eviction. Load Qwen3, Llama 3.3 and an OCR model at the same time, and oMLX decides which to evict when memory tightens. You can also unload manually from the dashboard if you want more control.

- OpenAI- and Anthropic-compatible API. Point an OpenAI SDK client at http://localhost:8000/v1 and everything works without rewriting anything. Same for tool calling and structured output.

- Menu bar app plus admin dashboard. A native macOS app in the top bar, alongside a web dashboard with chat, model downloads and a benchmarking tool at :8000/admin/chat. You do not need to live in the terminal.
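To make the token-level interleaving concrete, here is a toy scheduler in Python. It is a sketch of the general idea, not oMLX's implementation: the function names are invented for illustration, and it assumes a batched decode step costs the same as a single-request step, which real hardware only approximates.

```python
from collections import deque


def serve_sequential(requests):
    """Finish each request before starting the next; returns the finishing step per request."""
    step = 0
    finished = {}
    for name, n_tokens in requests:
        step += n_tokens  # one decode step per token, one request at a time
        finished[name] = step
    return finished


def serve_continuous(requests, max_batch=8):
    """Token-level interleaving: each step emits one token for every active request.

    Assumes every request asks for at least one token.
    """
    waiting = deque(requests)
    active = {}  # name -> tokens still to generate
    finished = {}
    step = 0
    while waiting or active:
        # Admit waiting requests as soon as a batch slot is free.
        while waiting and len(active) < max_batch:
            name, n_tokens = waiting.popleft()
            active[name] = n_tokens
        step += 1  # one batched decode step
        for name in list(active):
            active[name] -= 1
            if active[name] == 0:
                finished[name] = step
                del active[name]
    return finished


requests = [("chat", 200), ("agent", 50), ("editor", 20)]
print("sequential:", serve_sequential(requests))
print("continuous:", serve_continuous(requests))
```

In this idealized model the 20-token editor request finishes at step 20 instead of waiting until step 270 behind the other two.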

Vision models (Qwen3.5-VL, GLM-4V, Pixtral), OCR (DeepSeek-OCR, DOTS-OCR), embeddings (BGE-M3, ModernBERT) and rerankers all run in the same instance. For a RAG flow where generation, embeddings and rerank share a process, that single endpoint is genuinely useful.

Getting it running

Install via Homebrew, with a .dmg release and pip install -e . from source as alternatives:

    brew tap jundot/omlx https://github.com/jundot/omlx
    brew install omlx
    omlx serve --model-dir ~/models

Once it is up, you download models from the dashboard at http://localhost:8000/admin/chat and call into any SDK against http://localhost:8000/v1. The menu bar shows loaded models, active requests, and RAM and SSD usage.

Pragmatic comparison (May 2026)

Four axes that matter on a Mac:

Install and models

Ollama is the most comfortable: curl | sh, its own library, GGUF. LM Studio is the “I do not want a terminal” option, with a full GUI. mlx-lm is plain pip install and models pulled directly from Hugging Face in MLX format. oMLX installs via Homebrew or .dmg, downloads MLX models from the dashboard, and as of v0.3.x can import any MLX repo from Hugging Face by pasting a URL.

Real batching under concurrency

Here Ollama’s llama-server falls short: it serializes requests per model. LM Studio is the same. mlx-lm, one at a time. oMLX is the only one of the four that does vLLM-style continuous batching. If two people, an agent, and an editor will be talking to the same model simultaneously, oMLX changes the feel.

Model format

Ollama lives on GGUF (llama.cpp). MLX and oMLX live on the MLX format, which quantizes specifically for Metal and makes better use of the Apple Matrix coprocessors on M3 and later. For the same model, MLX quantized to 4-bit typically pushes 15 to 30% more tokens/s on M3 and M4 than the equivalent GGUF on Ollama, based on benchmarks circulating in mlx-community and Hacker News threads through 2025 and 2026. The gap is measurable, not a sales line.

API and tooling

Ollama has its own API plus partial OpenAI compatibility. LM Studio also exposes an OpenAI endpoint. mlx-lm is not a server. oMLX ships OpenAI- and Anthropic-compatible APIs, tool calling, structured output, and built-in benchmarking.

Verdict by scenario

- Daily Mac, one user, one chat: LM Studio or Ollama. You will not notice batching.

- Demo to a client: LM Studio. The GUI sells itself.

- OpenAI-style API for your own app or an agent you maintain: oMLX. Less friction and fewer surprises with tool calling.

- Evaluation under concurrency, or RAG with generation, embeddings and rerank on the same host: oMLX, no contest.

- Same flow but you want to move it to Linux tomorrow: Ollama. Portability wins.

Recommended setup by RAM tier (M4 and M5)

Before the numbers: oMLX shares unified RAM with macOS and everything else you have open. A useful rule of thumb is to subtract 10 to 14 GB from the total for Finder, Safari, the IDE and the rest before counting space for models and KV cache. Every size assumes MLX 4-bit quantizations from mlx-community on Hugging Face[1], a single user, and 4k context. Going from 4k to 32k can add 4 to 15 GB of KV cache depending on the model. oMLX’s cold SSD tier helps, but you still want headroom in hot RAM.
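The KV cache growth can be estimated with back-of-the-envelope arithmetic. The sketch below assumes an fp16 cache and the commonly cited shape of Llama-3.3-70B (80 layers, 8 KV heads, head dimension 128). Those values are assumptions for illustration: check the model's config.json, and keep in mind that a runtime may quantize or compress the cache.

```python
def kv_cache_bytes(tokens, layers, kv_heads, head_dim, bytes_per_value=2):
    """Keys and values (the factor 2) for every layer, KV head and head dimension."""
    return 2 * layers * kv_heads * head_dim * bytes_per_value * tokens


GIB = 1024 ** 3

# Assumed Llama-3.3-70B shape: 80 layers, 8 KV heads (GQA), head_dim 128, fp16 cache.
llama70b = dict(layers=80, kv_heads=8, head_dim=128)

at_4k = kv_cache_bytes(4096, **llama70b) / GIB
at_32k = kv_cache_bytes(32768, **llama70b) / GIB
print(f"4k: {at_4k:.2f} GiB, 32k: {at_32k:.2f} GiB, extra: {at_32k - at_4k:.2f} GiB")
# prints: 4k: 1.25 GiB, 32k: 10.00 GiB, extra: 8.75 GiB
```

For this shape the jump from 4k to 32k costs about 8.75 GiB per sequence, inside the 4 to 15 GB range quoted above.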

Chips at each tier:

- 24 GB: M4 base (mid config) and entry-level M4 Pro. When the M5 family ships, the base M5 will land here.

- 32 GB: M4 base (top option) or base M5. Few Pro configurations sit exactly at this tier.

- 64 GB: M4 Max (mid) and, expected through 2026, top M5 Pro and base M5 Max.

- 128 GB: top M4 Max and high M5 Max. The expected M5 Ultra should open up 192 and 256 GB.

24 GB — about 12-14 GB usable

The well-configured M4 Mac Mini and the entry-level M4 Pro MacBook Pro. You pick either a strong single model or a multi-model setup, not both at once.

For top quality, Mistral-Small-3.2-24B-Instruct-4bit (~13 GB) fits tight and leaves little air for long context. More comfortable: Qwen3-14B-Instruct-4bit (~8 GB), with reasonable quality and room for BGE-M3 (~1.2 GB) plus a small reranker. For pure speed, Llama-3.2-3B-Instruct-4bit or Gemma-3-4B-4bit (~2-3 GB) hit 80-120 tok/s on M4 Pro. For a vision model, Qwen2.5-VL-7B-4bit (~5 GB) is viable for occasional OCR.

32 GB — about 18-22 GB usable

Enough for a serious chat model plus embeddings and a reranker in parallel.

The quality pick is Qwen3-32B-Instruct-4bit (~17 GB). It is the sweet spot for this tier: punches close to a 70B on many tasks and leaves context headroom. If you want speed, dropping to Qwen3-14B-Instruct-4bit lands at 40-60 tok/s on M4 Pro. Realistic multi-model: 14B chat plus Pixtral-12B-4bit (~7 GB) for vision, embeddings and a reranker, with oMLX’s LRU policy moving the cold ones to SSD when memory tightens.

64 GB — about 50 GB usable

The tier where a Mac starts to look like a mid-range GPU box, without the fan noise that comes with one.

For quality, Llama-3.3-70B-Instruct-4bit (~40 GB) is the reference for writing and reasoning well. Mistral-Large-2-123B only fits at 3-bit and gets tight. If you want speed without sacrificing much quality, Qwen3-30B-A3B-Instruct-4bit (MoE: takes ~17 GB on disk but only activates ~3B parameters per token) sustains 50-60 tok/s on M4 Max. It is the model that most changes daily use at this tier. Realistic multi-model: 70B chat plus Qwen2.5-VL-32B-4bit (~18 GB), embeddings and a reranker, with LRU handling the peaks.

128 GB — about 110 GB usable

This is where things fit that do not fit on any reasonable consumer NVIDIA hardware. The “I bought a Mac instead of building a server” argument starts to stand on its own.

For top quality, Mistral-Large-2-123B-Instruct-4bit (~70 GB) is the clear pick for general reasoning. DeepSeek-V3-MoE fits in aggressive quantization (~80 GB). Qwen3-235B-A22B-4bit pushes 140 GB; 3-bit is more realistic at the cost of some quality. For speed, the same MoE pattern (30B-A3B or equivalent) if you have low-latency agent loops. Serious multi-model: 123B chat plus a 70B alternative under LRU plus Qwen2.5-VL-72B-4bit (~40 GB) for vision. Three large models loaded at once with SSD cache for the cold ones is realistic at this tier.

Expected throughput (M4 Max, single user, 4k context)

Order-of-magnitude reference for dense 4-bit models: 14B around 50-70 tok/s, 32B around 25-35, 70B around 10-15, 123B around 6-9. MoE architectures (30B-A3B, 235B-A22B) tend to run like a dense model of their active size (~3B and ~22B respectively), so a 30B-A3B sustains 50-60 tok/s. On M4 Pro, trim those numbers by 30 to 40% on memory bandwidth alone; on M4 base, nearly half. M5 is expected to land 10 to 25% faster at the same tier, and the gain is more visible on prefill than on decode.
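A quick sanity check on the dense-model figures: decode is mostly memory-bound, so a rough ceiling is memory bandwidth divided by the bytes of weights read per token. The sketch below assumes 546 GB/s for the top M4 Max configuration and reuses the approximate 4-bit weight sizes from the tiers above. It ignores KV cache reads and compute, so treat the result as an upper bound, not a prediction.

```python
def decode_ceiling_tok_s(weights_gb, bandwidth_gb_s):
    """Upper bound: every generated token streams the weights from memory once."""
    return bandwidth_gb_s / weights_gb


M4_MAX_BANDWIDTH = 546  # GB/s, assumed: top M4 Max configuration

# Approximate 4-bit weight sizes in GB, as quoted in the RAM tiers.
models = {"14B": 8, "32B": 17, "70B": 40, "123B": 70}

ceilings = {name: decode_ceiling_tok_s(gb, M4_MAX_BANDWIDTH) for name, gb in models.items()}
for name, tok_s in ceilings.items():
    print(f"{name}: ceiling of about {tok_s:.0f} tok/s")
```

The dense-model ranges quoted above sit close to these ceilings, which is why memory bandwidth is the first number to look at when comparing chips.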

Caveats to keep in mind

This is a young project: v0.3.8 is not v1.0. Beyond static API keys, the instance ships with no out-of-the-box auth (you leave it on localhost or front it with a reverse proxy and basic auth), no native OTEL integration for metrics and traces, and it is not designed for multi-tenant use. If you come from enterprise with compliance on top, those layers are on you.

Another point: GGUF. If your current collection is GGUF files downloaded over the last year, oMLX cannot eat them. The option is converting to MLX (there are upstream scripts for popular models) or staying on Ollama. Conversion is not trivial for exotic architectures or custom tokenizers, and it is worth checking the mlx-community repo on Hugging Face before converting anything by hand.

And, obviously, Mac. There is no Linux or Windows build. If your dev laptop is a Mac and your home server is a Linux box with NVIDIA, the oMLX binary will not serve the second one. You will end up running two different runtimes for the two worlds.

Who this guide is for

If you are a founder or CTO with an M3, M4 or M5 Mac and want to try local AI before committing to dedicated infrastructure, this guide is for you. It saves you setting up Docker, configuring Open WebUI and exposing it behind Traefik, which are the steps the Ollama + Llama 3.3 on Ubuntu tutorial covers once you have decided it is worth it. oMLX is step zero: check on your own laptop whether the open models are good enough before putting a GPU in production.

If it fits, the next step is wiring it to a real client: see how the Anthropic SDK agent tutorial reads when pointed at the local endpoint, or how it fits inside an MCP multi-vendor stack without paying for tokens every time.

Reference repositories: github.com/jundot/omlx[2], github.com/ml-explore/mlx[3], github.com/ml-explore/mlx-lm[4], huggingface.co/mlx-community[1].

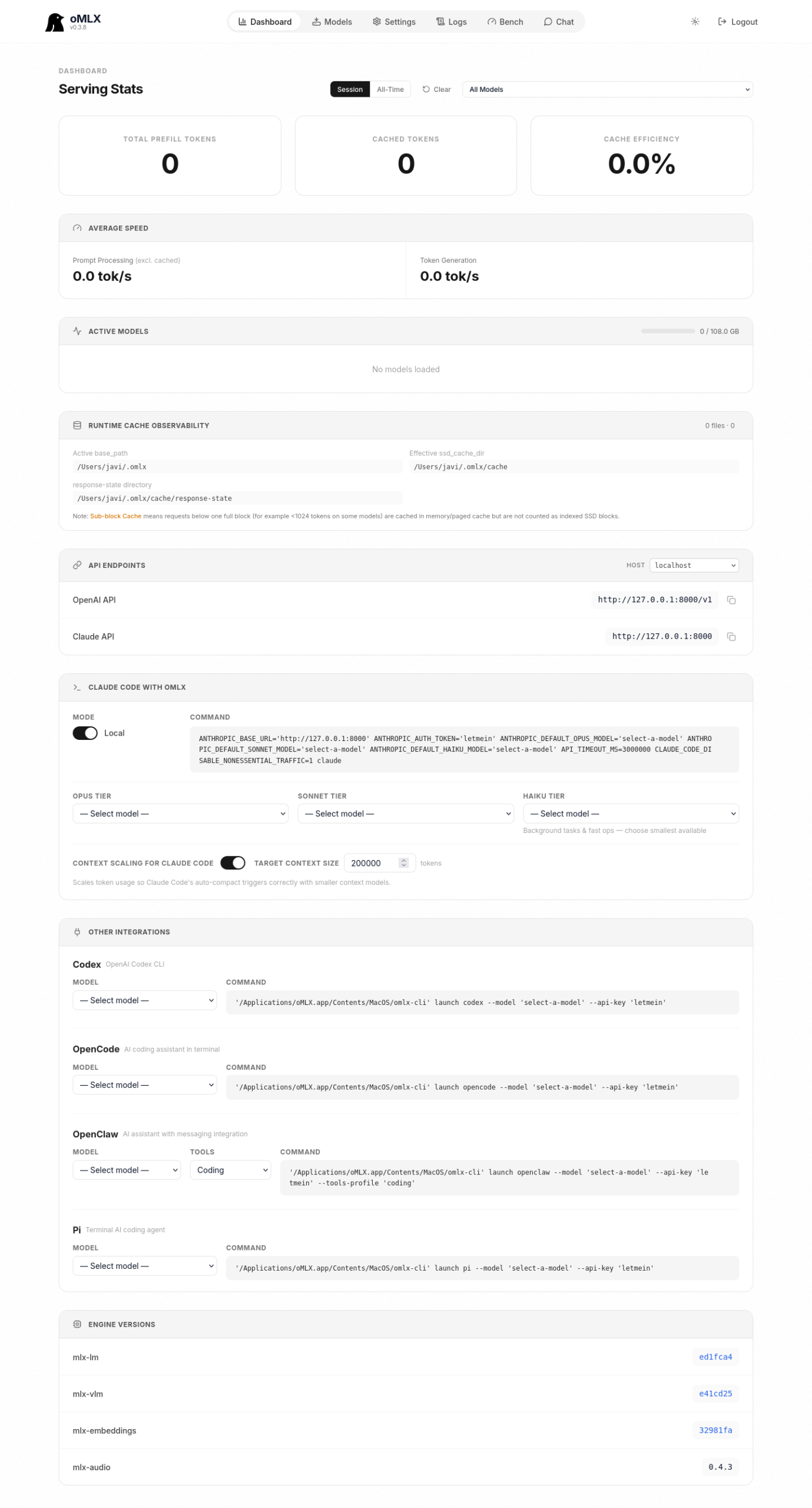

Full setup on an M5 Max 40-core Mac with 128 GB (Claude Code and multi-LLM)

This section documents a real oMLX install as the reference for the top configuration you will see in 2026: a Mac Studio or MacBook Pro with an M5 Max with 40 GPU cores and 128 GB of unified memory. The M5 family has not been officially announced as of May 2026; throughput numbers are extrapolated from published M4 Max benchmarks with a 15 to 25% uplift for the bandwidth gains expected on the new chip. The screenshots, by contrast, come from a live oMLX instance.

Install and first launch

Install via Homebrew, as on any Apple Silicon Mac:

    brew tap jundot/omlx https://github.com/jundot/omlx
    brew install omlx
    omlx serve --model-dir ~/.mlx/models

Alternatively, open the menu bar app (.dmg from Releases) and let it bring up the daemon in the background. The first hit to http://localhost:8000/admin asks for the API key that gates the instance.

Change the default API key

The install ships with letmein as a development API key. For local-only use without exposing to other machines on your network, that is fine; the moment the instance listens on anything other than 127.0.0.1 or you plan to share the endpoint, swap it in Settings → Auth & Info for a long random string. The section also accepts multiple keys at once if you want to give a colleague access without sharing the primary one.
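One way to produce that long random string is Python's standard `secrets` module. This is a minimal sketch: the helper name is invented, and any cryptographically secure generator would do just as well.

```python
import secrets


def new_api_key(n_bytes=32):
    """URL-safe random token from the OS CSPRNG; 32 bytes encode to 43 characters."""
    return secrets.token_urlsafe(n_bytes)


print(new_api_key())
```

Paste the printed value into Settings → Auth & Info and reuse it wherever a client expects the API key.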

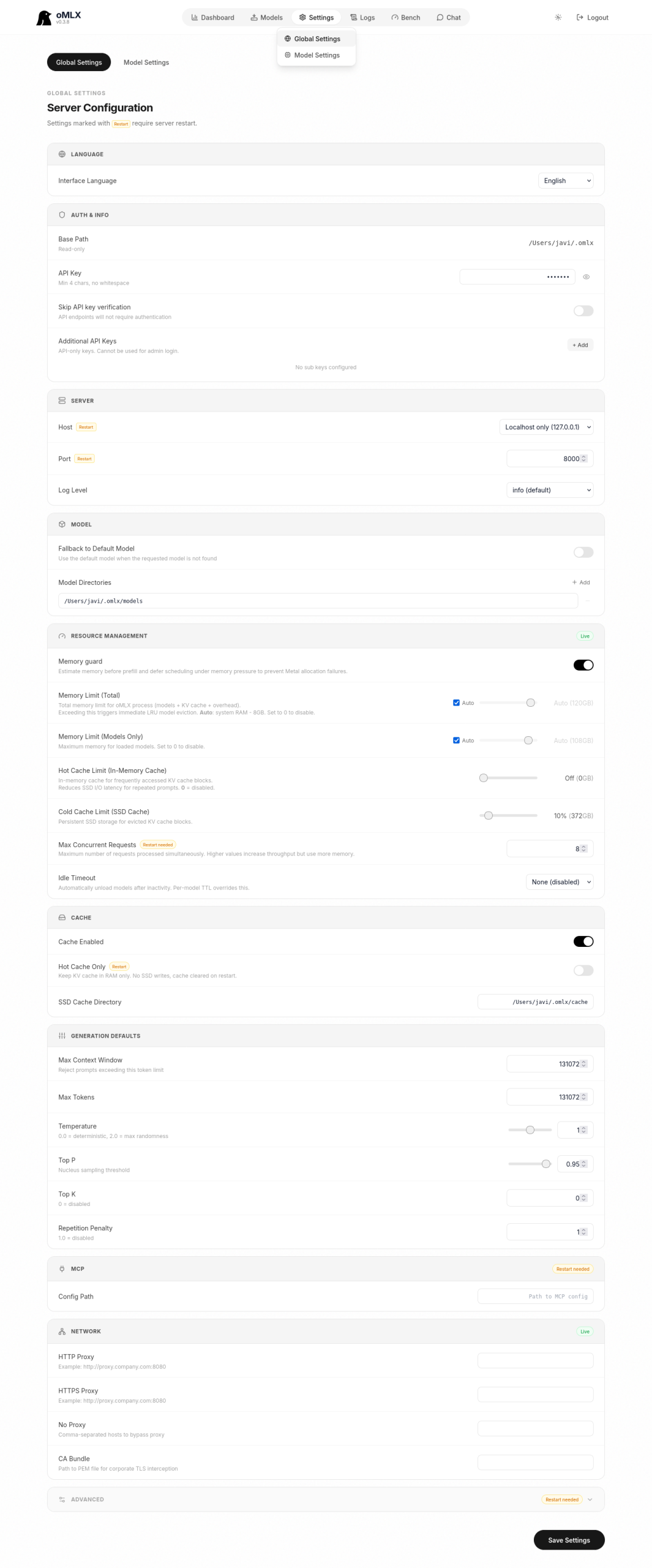

Server settings for 128 GB of unified memory

Settings → Global Settings holds the server configuration panel. For a 128 GB machine the sensible decisions are:

- Server → Host: Localhost only (127.0.0.1). If you want to expose to the LAN, switch to LAN and add real auth in front before doing so.

- Server → Port: 8000 by default.

- Resource Management → Memory Limit (Total): Auto. oMLX subtracts what macOS reserves; on 128 GB you end up around 110-114 GB available for inference.

- Resource Management → Memory Limit (Models Only): Auto. Keeps a percentage for activations, KV cache, and auxiliary processes.

- Resource Management → Hot Cache Limit: Off. With 128 GB you do not need the in-memory intermediate KV tier; simpler is better. Raise to 5-10% only if you run three or more large models concurrently with long contexts.

- Resource Management → Cold Cache Limit (SSD Cache): 10%. Sensible default. Reserves about 80 GB of SSD for cold tokens without saturating it.

- Resource Management → Max Concurrent Requests: 16. Comfortable for a single user with an agent, a chat session, and an IDE all pinging at once; raise to 32 if you share with a small team.

- Resource Management → Idle Timeout: None. Keeps models warm; first-token latency drops from several seconds to sub-second.

- Generation Defaults → Max Context Window: 32768 as the global default. Each model can override in Model Settings.

- Generation Defaults → Temperature: 1.0 for general use, 0.2-0.5 for code models.

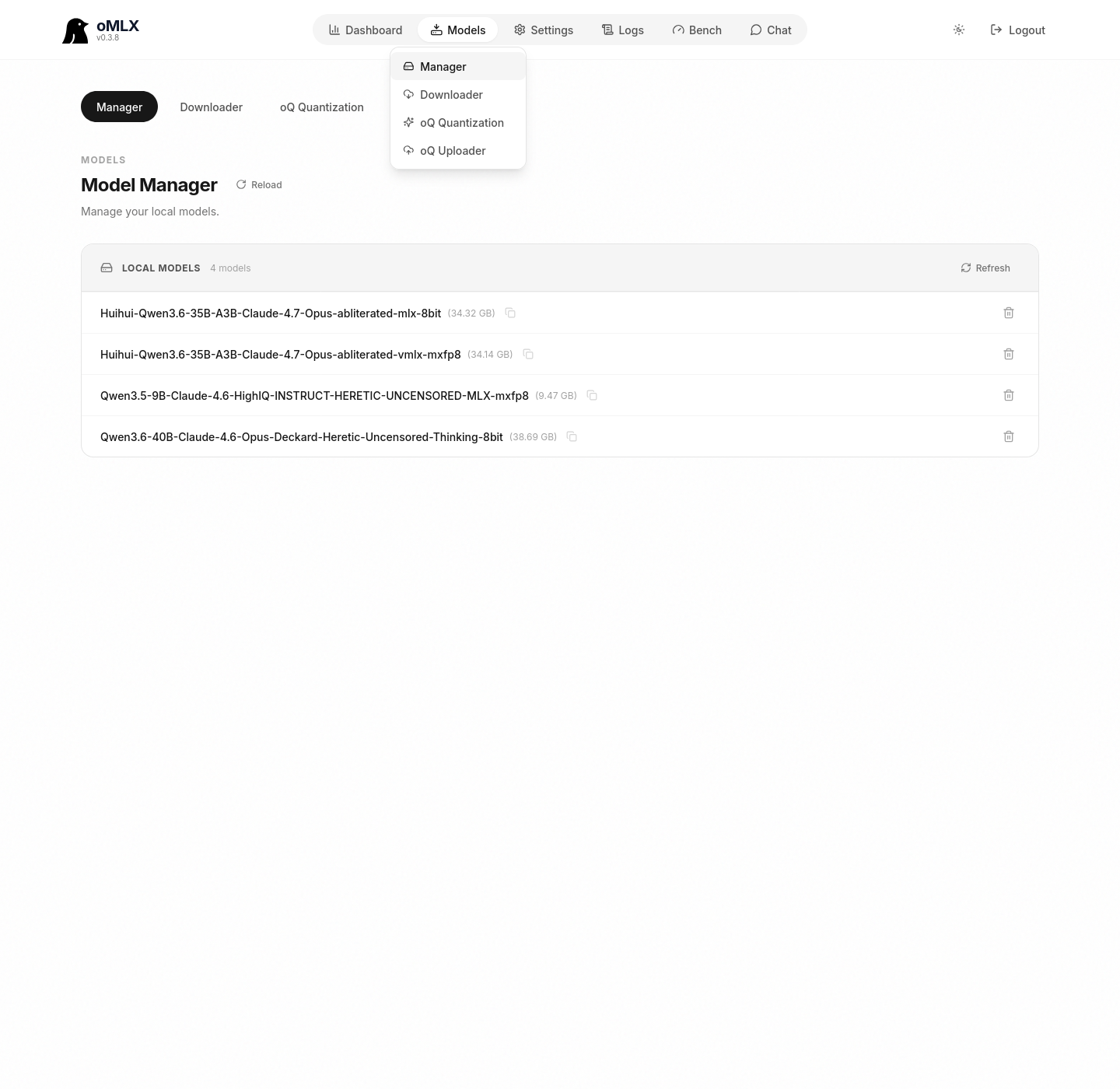

Recommended model stack for multi-LLM

With 128 GB you have room to load a code model, a general reasoning model, a fast helper, a VLM, embeddings, and a reranker concurrently. Suggested set, downloaded from Models → Downloader by pasting the Hugging Face repo URL:

- Primary code model: Qwen3-Coder-30B-A3B-Instruct-mlx-8bit (~32 GB). MoE architecture: takes that disk space but only activates ~3B parameters per token, so it runs faster than its size suggests.

- General reasoning: Llama-3.3-70B-Instruct-mlx-4bit (~40 GB) or Mistral-Large-2-123B-Instruct-mlx-4bit (~70 GB). Pick one depending on whether you want raw quality (Mistral Large) or response speed (Llama).

- Fast helper: Qwen3-14B-Instruct-mlx-4bit (~8 GB). For cheap tasks in agents, parsing, and summaries.

- Vision model: Qwen2.5-VL-32B-Instruct-mlx-4bit (~18 GB) covers OCR, image description, and multimodal reasoning.

- Embeddings: BGE-M3-mlx (~1.2 GB), dense + sparse + multi-vector in a single model.

- Reranker: ModernBERT-base-mlx (~150 MB) to close the loop on a decent RAG pipeline.

Comfortable concurrent load: around 95 GB. With LRU eviction enabled, you can register all six and let oMLX move things between hot RAM and the cold SSD cache when a model goes idle.
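To see what LRU eviction means for a stack like this, here is a toy model pool in Python. It illustrates the general least-recently-used policy under a memory budget and is not oMLX's code: the class, its method names, and the 110 GB budget are assumptions, while the sizes come from the list above.

```python
from collections import OrderedDict


class LruModelPool:
    """Toy LRU pool: tracks model sizes in GB and evicts the least recently used."""

    def __init__(self, budget_gb):
        self.budget_gb = budget_gb
        self.loaded = OrderedDict()  # name -> size_gb, least recently used first

    def use(self, name, size_gb):
        """Mark a model as used, loading it if needed; returns the evicted names."""
        if size_gb > self.budget_gb:
            raise ValueError(f"{name} does not fit in {self.budget_gb} GB")
        if name in self.loaded:
            self.loaded.move_to_end(name)  # already warm: just refresh recency
            return []
        evicted = []
        while sum(self.loaded.values()) + size_gb > self.budget_gb:
            victim, _ = self.loaded.popitem(last=False)  # drop the oldest entry
            evicted.append(victim)
        self.loaded[name] = size_gb
        return evicted


pool = LruModelPool(budget_gb=110)
pool.use("coder", 32)
pool.use("mistral-large", 70)
pool.use("helper", 8)            # 110 GB loaded, exactly at the budget
pool.use("coder", 32)            # touching the coder makes mistral-large the oldest
evicted = pool.use("vlm", 18)    # needs room, so the oldest model is unloaded
print(evicted, list(pool.loaded))
# prints: ['mistral-large'] ['helper', 'coder', 'vlm']
```

The practical takeaway is that the model you touched longest ago pays for the next load, so an idle 123B model is the first to go when a vision request arrives.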

Point Claude Code at the local endpoint

oMLX exposes an Anthropic-compatible API at http://127.0.0.1:8000 (root, not /v1). Claude Code respects the ANTHROPIC_BASE_URL variable, so pointing the CLI at your Mac is a matter of exporting environment variables:

export ANTHROPIC_BASE_URL=http://127.0.0.1:8000

export ANTHROPIC_AUTH_TOKEN=<your_api_key>

export ANTHROPIC_DEFAULT_OPUS_MODEL=Qwen3-Coder-30B-A3B-Instruct-mlx-8bit

export ANTHROPIC_DEFAULT_SONNET_MODEL=Qwen3-Coder-30B-A3B-Instruct-mlx-8bit

export ANTHROPIC_DEFAULT_HAIKU_MODEL=Qwen3-14B-Instruct-mlx-4bit

export ANTHROPIC_DEFAULT_MODEL=Qwen3-Coder-30B-A3B-Instruct-mlx-8bit

export API_TIMEOUT_MS=600000

export CLAUDE_CODE_USE_BEDROCK=0

export DISABLE_NONESSENTIAL_TRAFFIC=1

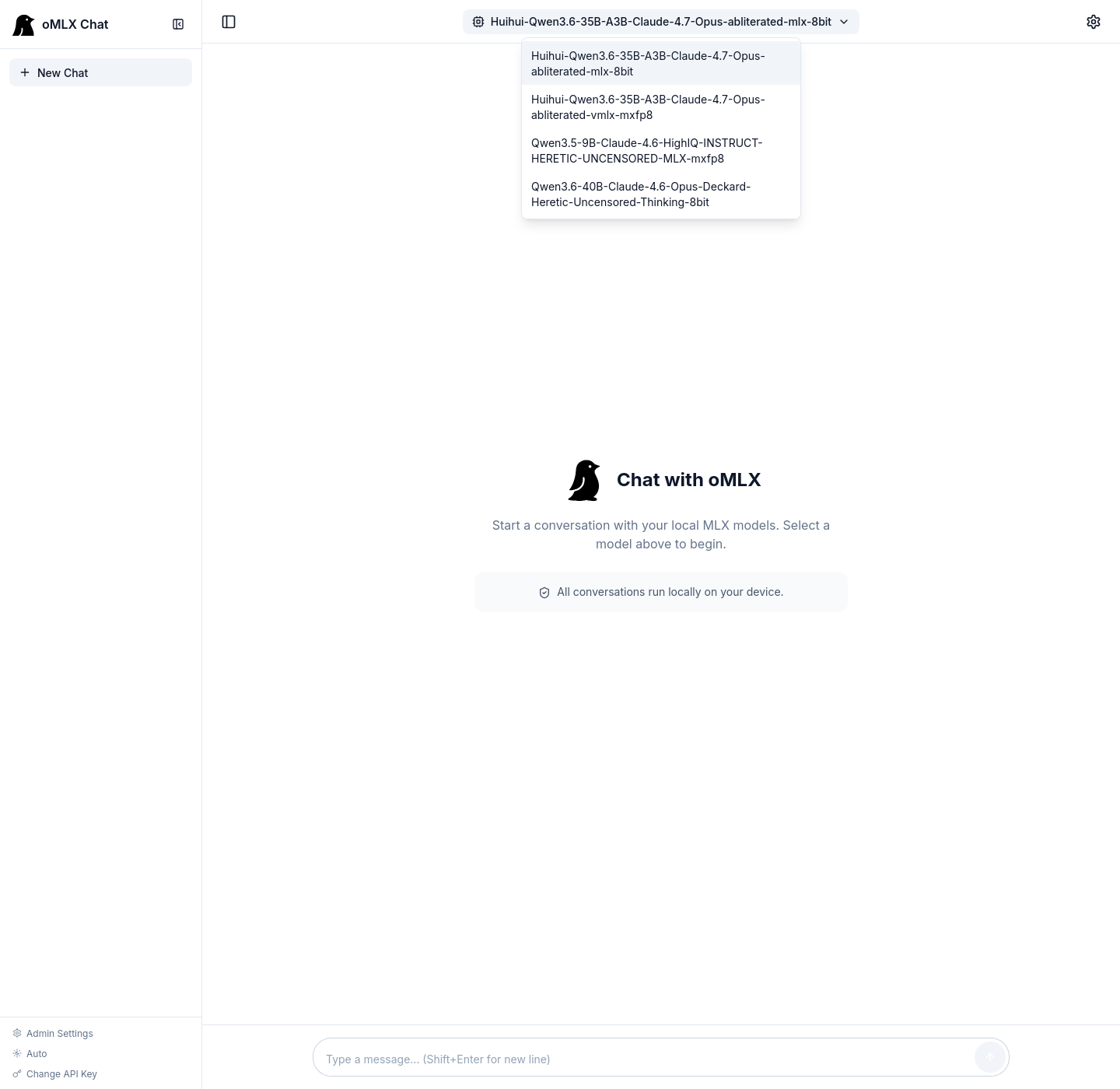

claudeThe oMLX dashboard builds this command for you in the Claude Code with oMLX section: you pick Opus, Sonnet, and Haiku from three dropdowns and copy the ready-to-paste command. The Context scaling for Claude Code option scales context to the model’s practical maximum (Claude Code asks for 200k tokens by default; the Qwen3 family handles 128k comfortably, and the option adapts the value per request).
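If you would rather keep these variables out of your shell profile, a small Python wrapper can scope them to a single Claude Code process. This is a sketch under assumptions: the helper names are invented, the variable names are copied from this section as-is, and `launch` assumes a `claude` binary on your PATH.

```python
import os
import shlex
import subprocess


def claude_code_env(base_url, api_key, opus, sonnet, haiku):
    """The variables from this section as a plain dict (names copied verbatim)."""
    return {
        "ANTHROPIC_BASE_URL": base_url,
        "ANTHROPIC_AUTH_TOKEN": api_key,
        "ANTHROPIC_DEFAULT_OPUS_MODEL": opus,
        "ANTHROPIC_DEFAULT_SONNET_MODEL": sonnet,
        "ANTHROPIC_DEFAULT_HAIKU_MODEL": haiku,
        "ANTHROPIC_DEFAULT_MODEL": opus,
        "API_TIMEOUT_MS": "600000",
        "CLAUDE_CODE_USE_BEDROCK": "0",
        "DISABLE_NONESSENTIAL_TRAFFIC": "1",
    }


def as_exports(env):
    """Render the dict as shell export lines, quoting values where needed."""
    return "\n".join(f"export {key}={shlex.quote(value)}" for key, value in env.items())


def launch(env):
    """Start Claude Code with the overrides applied to this one process only."""
    return subprocess.run(["claude"], env={**os.environ, **env}, check=False)


env = claude_code_env(
    "http://127.0.0.1:8000",
    "<your_api_key>",
    opus="Qwen3-Coder-30B-A3B-Instruct-mlx-8bit",
    sonnet="Qwen3-Coder-30B-A3B-Instruct-mlx-8bit",
    haiku="Qwen3-14B-Instruct-mlx-4bit",
)
print(as_exports(env))
# With oMLX running and Claude Code installed: launch(env)
```

Scoping the overrides to one process means a plain `claude` in another terminal still talks to the hosted API.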

A real caveat: Claude Code is tuned to Claude’s tool-use format and response patterns. A non-Claude model behind ANTHROPIC_BASE_URL works for autocomplete and reasoning, but you will see drops in tool-call reliability and in agentic loops. Use this for offline work, sensitive data that should not leave the Mac, or as a fallback when api.anthropic.com is rate-limiting you. It is not a 1:1 substitute for Claude Opus 4.7.

Other integrations (Codex, OpenCode, OpenClaw)

The same dashboard ships one-click launchers for external clients: Codex (OpenAI-style CLI), OpenCode (open-source coding agent), and OpenClaw (Claude-style wrapper). Each has its own model dropdown and, for OpenCode, a Tools selector that decides which standard tools to expose to the agent. The idea is that you arrive, pick a model, and copy the command.

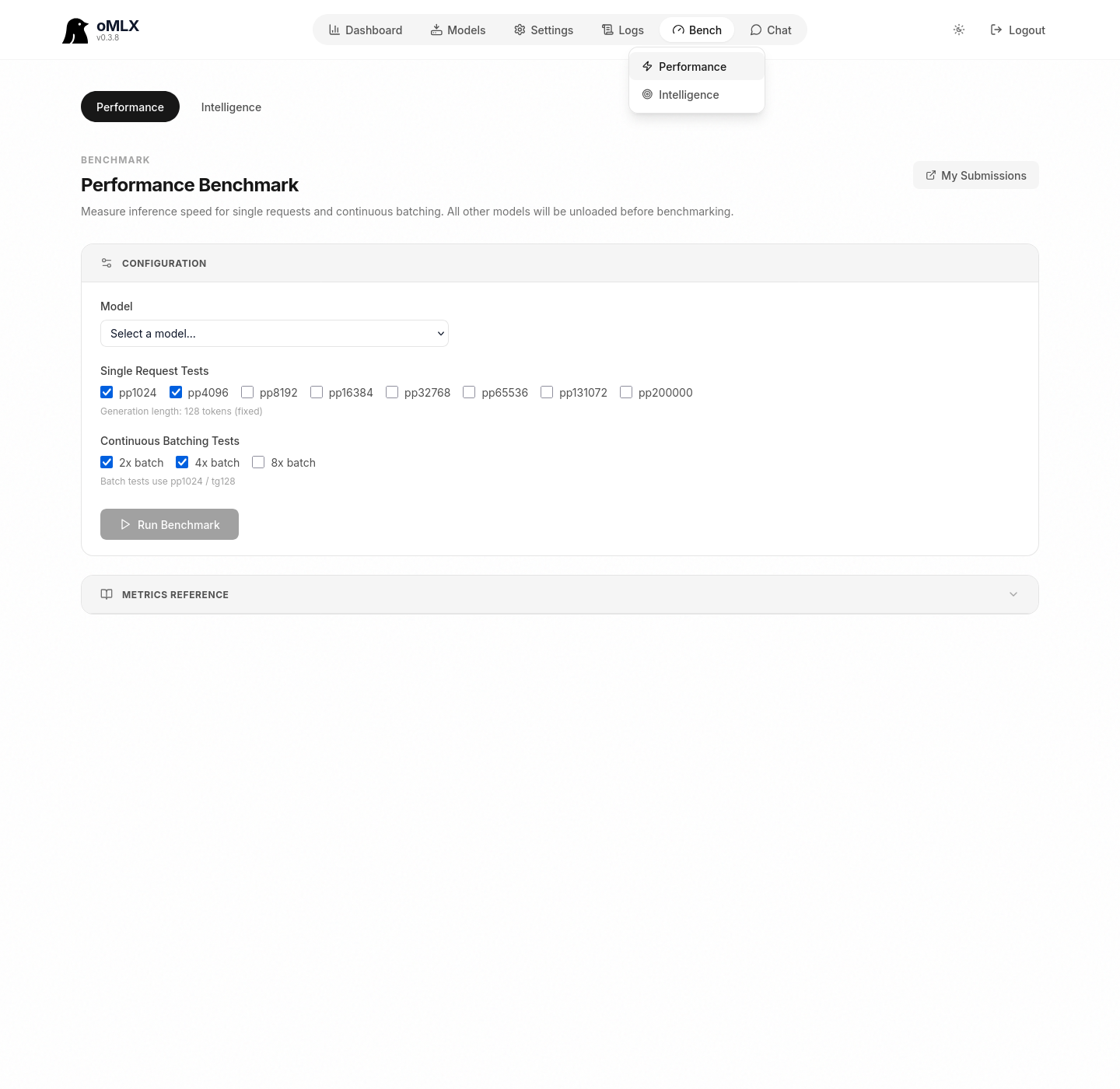

Benchmark the actual throughput

Bench → Performance runs tests on your real hardware. The panel covers prefill at several prompt sizes (pp512, pp1k, pp2k, pp4k, pp16k, pp32k) and continuous batching at 2x, 4x, and 8x concurrency. You can submit your results to the public oMLX leaderboard from My Submissions.

Expected numbers for an M5 Max 40-core with 128 GB, extrapolated from published M4 Max figures with a 15 to 25% uplift:

- Qwen3-Coder-30B-A3B 8-bit: around 70-90 tok/s on decode, 1,000-1,500 tok/s on prefill.

- Llama 3.3 70B 4-bit: around 15-20 tok/s on decode.

- Mistral Large 2 123B 4-bit: around 8-12 tok/s on decode.

When the real hardware lands, replace these estimates with what your own Bench → Performance reports: the MoE architectures are the ones that benefit most from the updated Apple Matrix coprocessors on M5.

End-to-end verification

A curl call to the OpenAI-compatible API to confirm the server is alive and a model responds:

    curl http://127.0.0.1:8000/v1/chat/completions \
      -H "Authorization: Bearer <your_api_key>" \
      -H "Content-Type: application/json" \
      -d '{
        "model": "Qwen3-Coder-30B-A3B-Instruct-mlx-8bit",
        "messages": [{"role":"user","content":"Hello from oMLX"}]
      }'

And from Claude Code, once you have exported the variables from the previous section:

    claude --print "Are you running locally?"

The reply should come back from local without touching api.anthropic.com. To confirm at the network level, open Activity Monitor → Network, filter by the claude process, and check that the outbound connection is against 127.0.0.1:8000.
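The same check can be scripted from Python with only the standard library. The sketch below mirrors the curl call. The helper names are invented, and the response parsing assumes oMLX returns the usual OpenAI chat-completions shape (`choices[0].message.content`), which is worth confirming against your instance.

```python
import json
import urllib.request


def build_chat_request(base_url, api_key, model, prompt):
    """Build (but do not send) a POST to the OpenAI-style chat completions route."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        url=f"{base_url}/v1/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def ask(request, timeout=600):
    """Send the request and return the first choice's text (assumed response shape)."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        payload = json.load(response)
    return payload["choices"][0]["message"]["content"]


request = build_chat_request(
    "http://127.0.0.1:8000",
    "<your_api_key>",
    "Qwen3-Coder-30B-A3B-Instruct-mlx-8bit",
    "Hello from oMLX",
)
print(request.get_method(), request.full_url)
# With the server running: print(ask(request))
```

Keeping request construction separate from sending makes it easy to inspect the payload before anything leaves the process.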