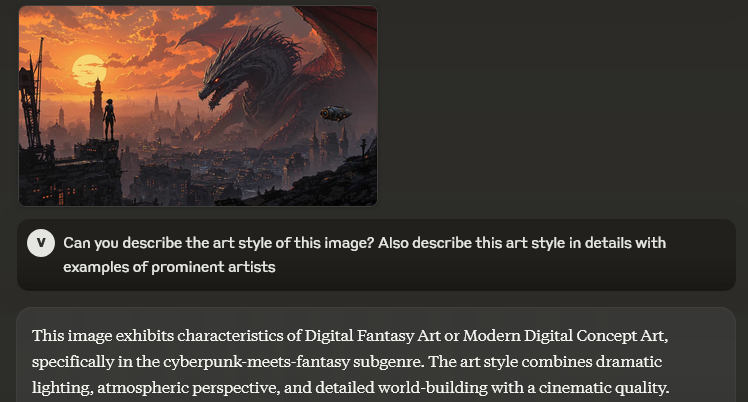

Claude 3 Family: Haiku, Sonnet and Opus Compared

Updated: 2026-05-03

Anthropic launched the Claude 3 family on March 4, 2024: three models (Haiku, Sonnet, and Opus) released together, each with a different cost/performance trade-off. A month in, the positioning was clear: Claude 3 Opus competes head-to-head with GPT-4 Turbo on many benchmarks, and Haiku is one of the cheapest models with decent quality.

Key takeaways

- The three tiers cover clear trade-offs: Haiku for cheap high volume, Sonnet as pragmatic default, Opus for complex reasoning where a cheaper model’s error is expensive.

- All three have 200k token context — a concrete advantage over GPT-4 Turbo’s 128k.

- Multimodal vision (images in prompts) is available across all three models.

- Tiered routing (Haiku → Sonnet → Opus as needed) minimises total cost without sacrificing quality where it matters.

- Available on Amazon Bedrock and Google Cloud Vertex AI, opening European compliance options.

The Three Tiers

Haiku — fastest and cheapest:

- Price: $0.25 / 1M input tokens, $1.25 / 1M output.

- Context: 200k tokens.

- Ideal use: classification, simple extraction, tier-1 support chat, content moderation.

Sonnet — balanced:

- Price: $3 / 1M input, $15 / 1M output.

- Context: 200k tokens.

- Quality: near GPT-4 on many tasks.

- Ideal use: enterprise RAG, agents with tools, long-document analysis.

Opus — most capable:

- Price: $15 / 1M input, $75 / 1M output.

- Context: 200k tokens.

- Quality: competitive with GPT-4 Turbo.

- Ideal use: complex multi-step reasoning, research, advanced coding, legal/medical analysis.
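To make the price gap between tiers concrete, here is a minimal cost sketch using the per-million-token prices listed above. The workload (100,000 requests of 2,000 input and 500 output tokens each) is an assumption chosen only for illustration.

```python
# Prices in USD per 1M tokens, copied from the tier list above.
PRICES = {
    "haiku": {"input": 0.25, "output": 1.25},
    "sonnet": {"input": 3.00, "output": 15.00},
    "opus": {"input": 15.00, "output": 75.00},
}


def request_cost(tier: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of a single request on the given tier."""
    price = PRICES[tier]
    return (input_tokens * price["input"] + output_tokens * price["output"]) / 1_000_000


# Hypothetical workload: 100,000 requests, each 2,000 input and 500 output tokens.
for tier in PRICES:
    total = 100_000 * request_cost(tier, 2_000, 500)
    print(f"{tier}: ${total:,.2f}")
```

Under that assumed workload the totals come to about $112.50 on Haiku, $1,350 on Sonnet and $6,750 on Opus, which is why the choice of default tier matters more than small prompt optimisations.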

Published Benchmarks

| Benchmark | Opus | Sonnet | Haiku | GPT-4 Turbo |
|---|---|---|---|---|
| MMLU | 86.8 | 79.0 | 75.2 | 86.4 |
| GSM8K (math) | 95.0 | 92.3 | 88.9 | 92.0 |
| HumanEval (code) | 84.9 | 73.0 | 75.9 | 85.4 |
| HellaSwag | 95.4 | 89.0 | 85.9 | 95.3 |

Opus is in GPT-4 Turbo’s class. Sonnet lands a step above GPT-3.5 at one-fifth of Opus’s price. Haiku is the surprise: very competitive for its price, at one-twelfth the cost of Sonnet.
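One way to read the table is to set the score gap between two tiers against their price multiple. The sketch below does that arithmetic with the published scores and input prices quoted in this article; the helper names are illustrative, not part of any library.

```python
# Scores copied from the benchmark table; input prices (USD per 1M tokens) from the tier list.
SCORES = {
    "MMLU": {"opus": 86.8, "sonnet": 79.0, "haiku": 75.2},
    "GSM8K": {"opus": 95.0, "sonnet": 92.3, "haiku": 88.9},
    "HumanEval": {"opus": 84.9, "sonnet": 73.0, "haiku": 75.9},
    "HellaSwag": {"opus": 95.4, "sonnet": 89.0, "haiku": 85.9},
}
INPUT_PRICE = {"opus": 15.00, "sonnet": 3.00, "haiku": 0.25}


def price_multiple(expensive: str, cheap: str) -> float:
    """How many times more the expensive tier costs per input token."""
    return INPUT_PRICE[expensive] / INPUT_PRICE[cheap]


def score_gap(benchmark: str, stronger: str, weaker: str) -> float:
    """Score difference in points, rounded to one decimal."""
    return round(SCORES[benchmark][stronger] - SCORES[benchmark][weaker], 1)


print(f"Sonnet costs {price_multiple('sonnet', 'haiku'):.0f}x Haiku")
for benchmark in SCORES:
    print(f"{benchmark}: Sonnet minus Haiku = {score_gap(benchmark, 'sonnet', 'haiku')}")
```

On these numbers Sonnet leads Haiku by roughly 3 to 4 points on MMLU, GSM8K and HellaSwag, while Haiku is 2.9 points ahead on HumanEval, all at a 12x price difference.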

The 200k Context: The Real Differentiator

Cases where 200k suffices without needing Gemini 1.5:

- Books of ~150 pages or ~75k words.

- Mid-size codebases.

- Hour-long audio transcripts.

- Extensive technical reports.
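A quick way to check whether a document is likely to fit is to estimate tokens from its word count. The sketch below assumes roughly 0.75 English words per token, a common rule of thumb rather than a tokenizer measurement, and reserves an assumed 4,096 tokens for the reply; both values are assumptions to adjust for your own data.

```python
import math

CONTEXT_WINDOW = 200_000  # tokens, as stated for all three Claude 3 models
WORDS_PER_TOKEN = 0.75    # assumption: rough heuristic for English prose


def estimate_tokens(word_count: int) -> int:
    """Estimate the token count of a text from its word count."""
    return math.ceil(word_count / WORDS_PER_TOKEN)


def fits_in_context(word_count: int, reserved_for_output: int = 4_096) -> bool:
    """Check whether the text plus an assumed output budget fits in the window."""
    return estimate_tokens(word_count) + reserved_for_output <= CONTEXT_WINDOW


print(estimate_tokens(75_000))   # a ~75k-word book
print(fits_in_context(75_000))
print(fits_in_context(160_000))  # a much longer manuscript
```

Under this heuristic a 75k-word book comes to about 100k tokens, so it fits with room to spare for instructions and follow-up questions. For anything close to the limit, count tokens with the provider's own tooling instead of estimating.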

Multi-Tier Usage Strategy

The most cost-efficient pattern in production:

- Classify intent with Haiku (cheap).

- Process simple cases with Sonnet (default).

- Escalate to Opus only if Sonnet fails or the task requires high precision.

This tiered routing with LiteLLM or custom logic minimises total cost without sacrificing quality where it matters.
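As a minimal sketch of the custom-logic variant, the router below takes the model client as a parameter so that it runs without any network access. The `call_model(tier, prompt)` interface, the `ROUTINE` / `HIGH_PRECISION` labels and the `is_acceptable` check are all hypothetical; in a real system they would wrap LiteLLM or a provider SDK and your own quality check.

```python
from typing import Callable

# Hypothetical interface: call_model(tier, prompt) returns the model's text reply.
ModelCall = Callable[[str, str], str]

CLASSIFY_PROMPT = (
    "Label the request as ROUTINE or HIGH_PRECISION. "
    "Reply with the label only.\n\nRequest: "
)


def route(prompt: str, call_model: ModelCall,
          is_acceptable: Callable[[str], bool]) -> tuple[str, str]:
    """Return (tier_used, answer) following the Haiku -> Sonnet -> Opus pattern."""
    # Step 1: cheap intent classification with Haiku.
    label = call_model("haiku", CLASSIFY_PROMPT + prompt).strip().upper()
    # Requests flagged as high precision go straight to Opus.
    if label == "HIGH_PRECISION":
        return "opus", call_model("opus", prompt)
    # Step 2: Sonnet is the default worker.
    answer = call_model("sonnet", prompt)
    if is_acceptable(answer):
        return "sonnet", answer
    # Step 3: escalate only when the Sonnet answer fails the check.
    return "opus", call_model("opus", prompt)


def fake_model(tier: str, prompt: str) -> str:
    """Stand-in for a real client so the sketch runs offline."""
    if tier == "haiku":
        return "HIGH_PRECISION" if "contract" in prompt else "ROUTINE"
    return f"[{tier}] answer"


print(route("Summarise this ticket", fake_model, lambda a: len(a) > 0))
print(route("Review this contract clause", fake_model, lambda a: len(a) > 0))
```

Injecting the client keeps the routing policy testable on its own. The acceptance check is where most of the real design work sits: a schema validation, a confidence threshold, or a second cheap model call are all plausible choices.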

Honest Limitations

- Rate limits are aggressive when you first start on the Pro plan.

- Data residency: US by default (Bedrock has regional options).

- No fine-tuning: Anthropic doesn’t offer custom fine-tuning except via enterprise contracts.
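The rate-limit point is usually handled client-side with retries. Below is a minimal sketch of exponential backoff with jitter; `RateLimited` is a hypothetical stand-in for whatever error your client raises on an HTTP 429, and the delay values are assumptions to tune.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RateLimited(Exception):
    """Hypothetical stand-in for the rate-limit error raised by your client."""


def with_backoff(fn: Callable[[], T], max_attempts: int = 5,
                 base_delay: float = 1.0,
                 sleep: Callable[[float], None] = time.sleep) -> T:
    """Call fn, retrying on RateLimited with exponentially growing delays."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return fn()
        except RateLimited:
            # Give up and re-raise once the last attempt has failed.
            if attempt == max_attempts - 1:
                raise
            # Delays of 1s, 2s, 4s, ... plus up to 25% random jitter.
            delay = base_delay * 2 ** attempt
            sleep(delay + random.uniform(0, delay * 0.25))
    raise AssertionError("loop always returns or raises")
```

A call would then look like `with_backoff(lambda: client_call(prompt))`, where `client_call` is your own wrapper that raises `RateLimited`. The `sleep` parameter exists so the delay can be replaced in tests.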

Conclusion

The Claude 3 family closes the gap Anthropic had vs OpenAI in top-tier capability. Opus is a real option for frontier tasks. Sonnet is the pragmatic default. Haiku opens high-volume cases with decent quality. 200k context across all three is a concrete advantage vs GPT-4 Turbo. The Anthropic-vs-OpenAI choice is no longer “who has the best” but “which fits your case and provider preference”. Worth having both in your strategy, not just one.