DataFrames and Pipelines in Spark: Data Processing Optimisation

Updated: 2026-05-03

Apache Spark is one of the most widely used distributed data processing engines in the industry. Its two fundamental abstractions, DataFrames and pipelines, make it possible to transform, analyse, and model large data volumes with expressive code that the Catalyst engine optimises automatically and that runs in parallel across cluster nodes.

Key takeaways

- Spark DataFrames are distributed, schematised tables that can be queried with SQL or the Python/Scala functional API.

- Transformations in Spark are lazy: they are not executed until an action is needed, allowing the engine to optimise the execution plan.

- Pipelines organise transformations and models into a reproducible chain, essential for ML in production.

- Partitioning and caching are the two most direct performance optimisation levers.

- Spark scales horizontally: adding nodes to the cluster increases capacity without rewriting code.

DataFrames: Spark’s central abstraction

A Spark DataFrame is a distributed collection of data organised into named, typed columns — conceptually similar to a relational database table or a pandas DataFrame, but distributed across cluster nodes.

Spark DataFrames can be created from multiple sources:

- CSV, JSON, Parquet, and ORC files.

- Relational databases via JDBC.

- Storage systems like S3, HDFS, or Azure Data Lake.

- Real-time streams (Spark Structured Streaming).

The most common operations are:

- Filtering (filter, where): selecting rows that meet a condition.

- Transformation (select, withColumn): adding or modifying columns with expressions.

- Aggregation (groupBy, agg): computing metrics per group.

- Join: combining two DataFrames by one or more common keys.

A fundamental property is immutability: each transformation creates a new DataFrame; it does not modify the existing one. This makes pipelines reproducible and facilitates reasoning about data flow.

Lazy execution and the Catalyst optimiser

Spark does not execute transformations immediately. When you chain .filter(), .groupBy(), and .select(), Spark only builds a logical plan of the query. Only when you call an action (.show(), .collect(), or a write such as .write.parquet()) does Spark hand that plan to the Catalyst optimiser, which:

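- Analyses the logical plan and checks schemas.

- Optimises the logical plan with rule-based rewrites such as predicate pushdown and column pruning.

- Generates multiple alternative physical plans.

- Selects the one with the lowest estimated cost (based on data statistics).

- Generates JVM bytecode for the selected plan through Tungsten's whole-stage code generation.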

This deferred execution is what allows Spark to optimise sequences of transformations that, if executed step by step, would be inefficient. It is analogous to a database query optimiser, but for distributed code.

Pipelines: reproducibility in production

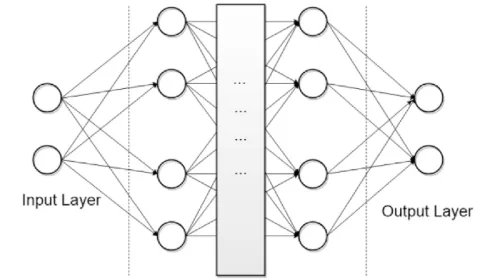

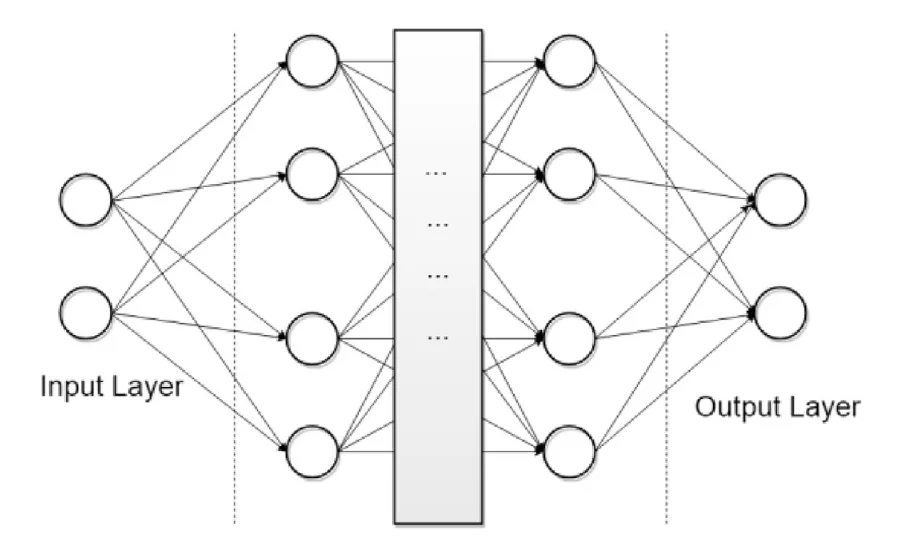

In Spark MLlib, a pipeline is an ordered sequence of stages. Each stage is either a transformer, which maps an input DataFrame to an output DataFrame, or an estimator, which is fitted on a DataFrame to produce a transformer:

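```python
from pyspark.ml import Pipeline
from pyspark.ml.feature import VectorAssembler, StandardScaler
from pyspark.ml.classification import RandomForestClassifier

# train_df and test_df are existing DataFrames with feature1, feature2, and label columns

# Combine the raw feature columns into a single vector column
assembler = VectorAssembler(inputCols=["feature1", "feature2"], outputCol="features")
# Scale the assembled feature vector
scaler = StandardScaler(inputCol="features", outputCol="scaled_features")
# Classifier trained on the scaled features
rf = RandomForestClassifier(featuresCol="scaled_features", labelCol="label")

pipeline = Pipeline(stages=[assembler, scaler, rf])
model = pipeline.fit(train_df)            # fits each stage in order
predictions = model.transform(test_df)    # applies the fitted stages to new data
```

The advantages of pipelines are: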

- Reproducibility: the same Pipeline object applied to new data produces exactly the same transformation flow.

- Serialisation: trained models can be saved to disk and loaded without retraining.

- Cross-validation integration: CrossValidator and TrainValidationSplit in Spark MLlib work natively with pipelines for hyperparameter selection.

Optimisation: partitioning and caching

Two techniques have the greatest impact on real performance:

Partitioning. Spark divides data into partitions processed in parallel. Inadequate partitioning creates bottlenecks:

- Too few partitions: underutilises the cluster; nodes are idle.

- Too many small partitions: coordination overhead outweighs the parallelism benefit.

- Skew: if one partition concentrates 80% of the data (e.g., a very frequent value in the join key), that node becomes the bottleneck.

repartition(n) redistributes data with a full shuffle and can either increase or decrease the partition count; coalesce(n) only reduces it, merging existing partitions without a full shuffle, which is useful just before writing the result.

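Data caching. When a DataFrame is used multiple times in the same flow (e.g., as the basis for several aggregations), persist it with .cache() or .persist(). Without caching, Spark recomputes the DataFrame from source for every action that references it. Caching is itself lazy: the data is stored when the first action runs, and later actions reuse it, so the read cost is paid only once. Release the cache with .unpersist() when the DataFrame is no longer needed.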

For use cases where Spark feeds broader machine learning systems, the LazyPredict library for rapid model comparison is a natural complement once the dataset is prepared. The same preparation work also supports big data use in decision-making.

Conclusion

Spark DataFrames and pipelines are a standard foundation for processing data at scale in production environments. The key to performance is not the tool itself but designing the partitioning correctly, using caching strategically, and letting the Catalyst optimiser do its work. A well-built Spark pipeline is code that scales from a laptop to a cluster of hundreds of nodes without structural changes.