Text Embeddings: Turning Words Into Useful Vectors

Table of contents

- Key takeaways

- What an Embedding Really Is

- Relevant Models

- OpenAI Embeddings (text-embedding-ada-002)

- Sentence Transformers (all-MiniLM, all-mpnet, etc.)

- BGE Models (BAAI General Embedding)

- How to Choose Between Them

- Use Cases Where They Add Value

- Cases Where They Aren’t the Best Option

- The Chunking Detail

- Conclusion

Updated: 2026-05-03

Embeddings are numerical representations of text that let AI models “understand” semantic similarity. Semantic search, RAG systems, modern text classification, and almost every current NLP product rely on them. We unpack exactly what they are, how to choose a model, and the cases where they add real value over simpler alternatives.

Key takeaways

- An embedding is a vector of N dimensions (typically 384, 768, or 1536) in which semantically similar texts produce vectors that are close together, as measured by cosine distance.

- Three model families dominate in practice: OpenAI ada-002 (managed, simple), Sentence Transformers (open source, private), and BGE (open source, top quality in English).

- Chunking matters more than the model chosen: bad chunking is the number-one cause of underperforming RAG.

- Embeddings aren’t the right tool for exact search or metadata filtering — for that there’s keyword search and SQL.

- Corpus quality and chunking outweigh the difference between top-3 models in practical impact.

What an Embedding Really Is

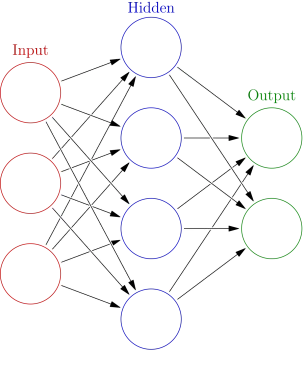

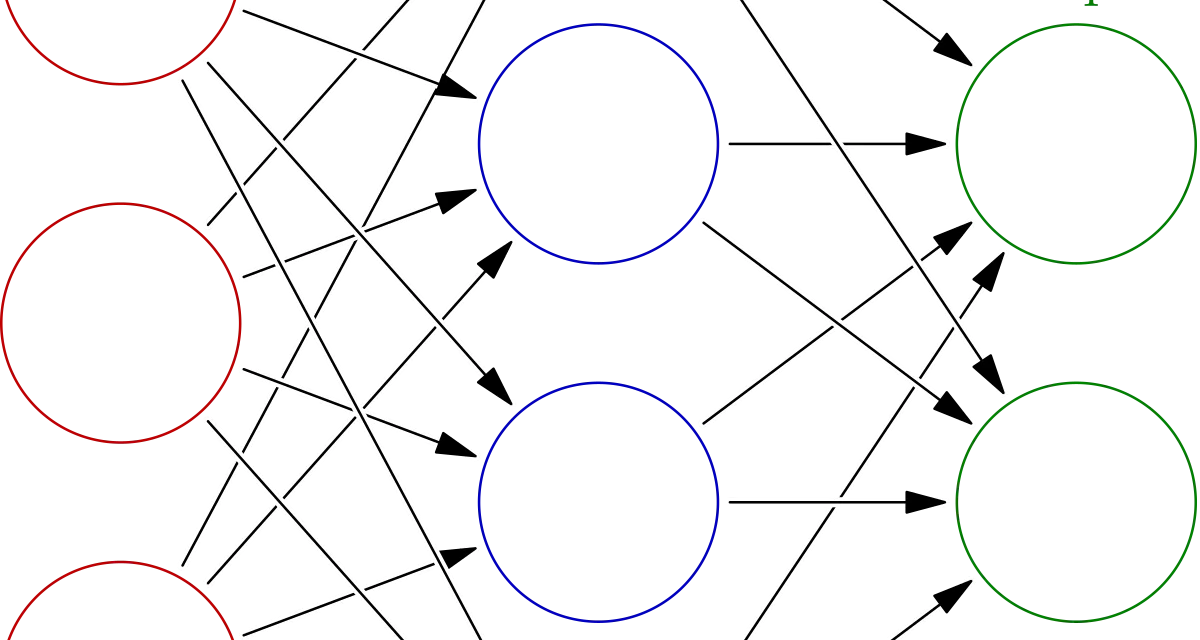

An embedding is a vector of N dimensions (typically 384, 768, or 1536) representing the meaning of a text. The key property: texts with similar meaning produce vectors that are close together, as measured by cosine distance.

Conceptual example:

"dog" → [0.21, -0.43, 0.88, ...]

"hound" → [0.19, -0.41, 0.86, ...] # close

"car" → [-0.55, 0.71, 0.04, ...] # far

Models that generate embeddings are neural networks trained on large corpora so that this property holds. Today there are dozens of them, with different trade-offs in quality, dimension, speed, and cost.
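To make “close together” concrete: cosine similarity is the dot product of two vectors divided by the product of their lengths, and cosine distance is 1 minus that value. Below is a minimal plain-Python sketch that uses only the first three components of the conceptual vectors above as toy inputs. Real embeddings have hundreds of dimensions, and these values are illustrative, not real model output.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Return cos(theta) between a and b: 1.0 = same direction, 0.0 = orthogonal.

    Assumes both vectors are non-zero.
    """
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy vectors: only the first three components of the conceptual example.
dog = [0.21, -0.43, 0.88]
hound = [0.19, -0.41, 0.86]
car = [-0.55, 0.71, 0.04]

print(f"dog vs hound: {cosine_similarity(dog, hound):.3f}")
print(f"dog vs car:   {cosine_similarity(dog, car):.3f}")
```

With these toy inputs, “dog” and “hound” score close to 1 while “dog” and “car” come out negative. Many pipelines L2-normalise vectors up front so that a plain dot product equals cosine similarity.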

Relevant Models

Three families dominate in practice:

OpenAI Embeddings (text-embedding-ada-002)

- Dimension: 1536.

- Managed API: simple, no infrastructure, pay per token.

- Cost: around $0.10 per million tokens — very low.

- Quality: good for general English use and reasonable for multilingual text.

- Lock-in: can’t run locally; data passes through OpenAI.

It’s the “reasonable default” if you don’t want to think about infrastructure or models. For many small and medium-sized projects, it’s the sensible choice.
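Below is a minimal sketch of embedding a list of texts with the official `openai` Python package (v1 and later). In that package, `client.embeddings.create(model=..., input=[...])` returns a response whose `data` items each carry an `embedding` and an `index`. For this sketch, the function takes the client as a parameter so it can be exercised without network access. The batch size of 100 is an arbitrary assumption, not an API limit.

```python
from typing import Any, List, Sequence


def embed_texts(
    client: Any,
    texts: Sequence[str],
    model: str = "text-embedding-ada-002",
    batch_size: int = 100,  # assumption: tune to your payload sizes
) -> List[List[float]]:
    """Embed texts in batches; the output keeps the order of the input."""
    vectors: List[List[float]] = []
    for start in range(0, len(texts), batch_size):
        batch = list(texts[start:start + batch_size])
        # One request embeds a whole batch of inputs.
        response = client.embeddings.create(model=model, input=batch)
        # Sort by .index so each vector lines up with its input text.
        for item in sorted(response.data, key=lambda d: d.index):
            vectors.append(item.embedding)
    return vectors
```

With a real client (`from openai import OpenAI; client = OpenAI()`, which reads `OPENAI_API_KEY` from the environment), each returned ada-002 vector has 1536 floats. At the roughly $0.10 per million tokens quoted above, embedding 10,000 chunks of 500 tokens each (5 million tokens) would cost about $0.50.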

Sentence Transformers (all-MiniLM, all-mpnet, etc.)

- Open source, downloadable from Hugging Face[1].

- Dimension: typically 384 (MiniLM) or 768 (mpnet).

- Speed: MiniLM is very fast; mpnet is slower but gives better quality.

- Cost: inference runs locally, so you pay only for compute (a CPU works; a GPU speeds it up).

- Privacy: nothing leaves your infra.

Excellent when privacy matters or when you want to avoid depending on a third party.

BGE Models (BAAI General Embedding)

- bge-large-en-v1.5 and bge-base-en-v1.5, published by the Beijing Academy of Artificial Intelligence (BAAI).

- Ranked at or near the top of the English retrieval benchmarks on MTEB when released.

- Open source, locally runnable.

- Dimension: 1024 (large) or 768 (base).

If you care about maximum retrieval quality and can run 1-2 GB models locally, BGE is probably the best free option.

How to Choose Between Them

A reasonable decision tree:

- Privacy is critical or API cost is a problem → Sentence Transformers or BGE, run locally.

- English-only, maximum quality → BGE or E5.

- Multilingual (ES, EN, FR…) and simplicity → OpenAI ada-002 or multilingual-e5-base.

- Speed above all → MiniLM (runs acceptably even on CPU).

- Don’t want to think about infra → OpenAI ada-002.

Quality differences among the top three are noticeable in benchmarks but smaller than expected in real applications: corpus quality and chunking usually matter more than the gap between top-tier models.

Use Cases Where They Add Value

Cases where embeddings are the right tool:

- Semantic search. “Find documents talking about X” where X can be expressed many ways. Much better than keyword search when users don’t use the exact corpus terms.

- RAG. Retrieve relevant context for an LLM before it generates an answer. This is the central piece of most applied LLM products. See also Chroma and pgvector as storage options.

- Zero-shot or few-shot classification. Categorise texts without training a classic classifier: embed the text and pick the label (or labelled examples) whose embedding is most similar.

- Duplicate or near-duplicate detection. Find similar content (product catalogue entries, blog posts, FAQs).

- Content-based recommendation. “Other related articles” based on text similarity, not click history.

- Textual anomaly detection. Identify text that strays from the corpus’s typical pattern.
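Once vectors exist, several of these use cases reduce to a few lines of code. The plain-Python sketch below covers top-k retrieval (semantic search and the retrieval step of RAG), zero-shot labelling, and near-duplicate detection. It uses hand-made 3-dimensional vectors in place of real model output. The document IDs, labels, and the 0.95 near-duplicate threshold are all hypothetical.

```python
import math
from itertools import combinations
from typing import Dict, List, Sequence, Tuple

Vector = Sequence[float]


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity; assumes non-zero vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def top_k(query: Vector, docs: Dict[str, Vector], k: int = 2) -> List[Tuple[str, float]]:
    """Semantic search / RAG retrieval: the k documents most similar to the query."""
    scored = [(doc_id, cosine(query, vec)) for doc_id, vec in docs.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]


def zero_shot_label(text_vec: Vector, label_vecs: Dict[str, Vector]) -> str:
    """Zero-shot classification: pick the label whose embedding is closest."""
    return max(label_vecs, key=lambda label: cosine(text_vec, label_vecs[label]))


def near_duplicates(docs: Dict[str, Vector], threshold: float = 0.95) -> List[Tuple[str, str]]:
    """Pairs of documents whose similarity is at or above the (assumed) threshold."""
    return [
        (a, b)
        for a, b in combinations(sorted(docs), 2)
        if cosine(docs[a], docs[b]) >= threshold
    ]


# Hypothetical 3-d vectors; a real model would return 384-1536 dimensions.
docs = {
    "refund-policy": [0.9, 0.1, 0.0],
    "returns-faq": [0.88, 0.12, 0.02],
    "shipping-times": [0.1, 0.9, 0.1],
}
labels = {"billing": [1.0, 0.0, 0.0], "logistics": [0.0, 1.0, 0.0]}
query = [0.85, 0.2, 0.0]  # stands in for e.g. "how do I get my money back?"

print(top_k(query, docs))
print(zero_shot_label(docs["shipping-times"], labels))
print(near_duplicates(docs))
```

In production, a vector store such as Chroma or pgvector replaces the linear scan in `top_k` with an index. The near-duplicate threshold usually needs tuning per model and corpus, because similarity scores are not distributed the same way across models.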

Cases Where They Aren’t the Best Option

Sometimes embeddings get applied by default when something else works better:

- Exact-match search. “I want documents with this exact word” → keyword search (Elasticsearch BM25, Postgres FTS) is better.

- Strict metadata filtering. “Posts by author X published in 2022” → SQL or a traditional index.

- Small corpus (<200 documents) → you can simply put them all in the LLM prompt (if they fit) and skip the vector DB.

- Hybrid search with complex filters → use a vector DB with native filtering (Weaviate, Qdrant) rather than a homemade system.
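The metadata and hybrid cases often combine. Conceptually, a hybrid query applies an exact metadata filter and then ranks only the remaining candidates by similarity. Below is a minimal in-memory sketch of that idea. The `Doc` fields and sample records are hypothetical. A real vector DB applies the filter inside its index rather than by scanning a list like this.

```python
import math
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class Doc:
    doc_id: str
    author: str
    year: int
    vector: Sequence[float]


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; assumes non-zero vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def filtered_search(query: Sequence[float], docs: List[Doc],
                    author: str, year: int, k: int = 3) -> List[str]:
    # Step 1: exact metadata filter (the part SQL or a traditional index does well).
    candidates = [d for d in docs if d.author == author and d.year == year]
    # Step 2: rank only the surviving candidates by semantic similarity.
    candidates.sort(key=lambda d: cosine(query, d.vector), reverse=True)
    return [d.doc_id for d in candidates[:k]]


docs = [
    Doc("post-1", "ana", 2022, [0.9, 0.1]),
    Doc("post-2", "ana", 2022, [0.2, 0.8]),
    Doc("post-3", "ana", 2021, [0.95, 0.05]),  # right topic, wrong year
    Doc("post-4", "luis", 2022, [0.9, 0.1]),   # right topic, wrong author
]
print(filtered_search([1.0, 0.0], docs, author="ana", year=2022))
```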

The Chunking Detail

For long-document embeddings, you don’t embed the whole document — you split it into chunks. Decisions that matter:

- Size: 200-500 tokens per chunk is a typical range. Too small loses context; too large dilutes signal.

- Overlap: 10-20% of tokens shared between consecutive chunks to avoid cutting concepts in half.

- Structure: respect headers, paragraphs, and tables if possible. Cutting mid-table generates nonsensical chunks.
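The size and overlap rules above amount to a sliding window. Below is a minimal sketch. It assumes whitespace-separated words as a stand-in for model tokens; real pipelines count tokens with the embedding model's tokenizer. It also ignores document structure. The 300-token size and 15% overlap are simply picked from the ranges above.

```python
from typing import List


def chunk_tokens(text: str, chunk_size: int = 300, overlap_ratio: float = 0.15) -> List[str]:
    """Split text into windows of chunk_size words; consecutive windows share
    floor(chunk_size * overlap_ratio) words."""
    if chunk_size <= 0 or not 0 <= overlap_ratio < 1:
        raise ValueError("need chunk_size > 0 and 0 <= overlap_ratio < 1")
    tokens = text.split()  # assumption: words approximate tokens
    overlap = int(chunk_size * overlap_ratio)  # floor, so step is always >= 1
    step = chunk_size - overlap
    chunks: List[str] = []
    for start in range(0, len(tokens), step):
        window = tokens[start:start + chunk_size]
        chunks.append(" ".join(window))
        if start + chunk_size >= len(tokens):
            break  # this window already reaches the end of the text
    return chunks
```

Structure-aware splitting would first cut on headers, paragraphs, and table boundaries. It would fall back to a window like this only for oversized sections, which is roughly the idea behind recursive splitters.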

Bad chunking is the number-one cause of underperforming RAG — more than the choice of embedding model. This is why frameworks like LangChain include configurable splitters like RecursiveCharacterTextSplitter.

Conclusion

Embeddings are the most versatile piece of the modern NLP toolkit. In many cases, model choice matters less than it seems. What has the most impact is how you process text beforehand (chunking, normalisation) and what you do with the vectors afterwards (search, ranking, filters). Start with the simplest option that meets your privacy and cost constraints, measure, and migrate only when needed.