FinOps for AI workloads in 2026: the real pain

Updated: 2026-05-03

The first time a CFO asked me why their company’s AI bill had gone up six hundred percent in six months, I was as surprised as they were: frontier-model prices had dropped, and the team claimed everything was under control. The investigation turned up the usual mix: RAG without caching, a misconfigured agent that kept calling itself recursively, and an evaluation loop that used the most expensive available model to validate the cheapest one’s answers. A sum of small errors produced a very large bill. In 2026 this kind of situation is the rule, not the exception.

Key takeaways

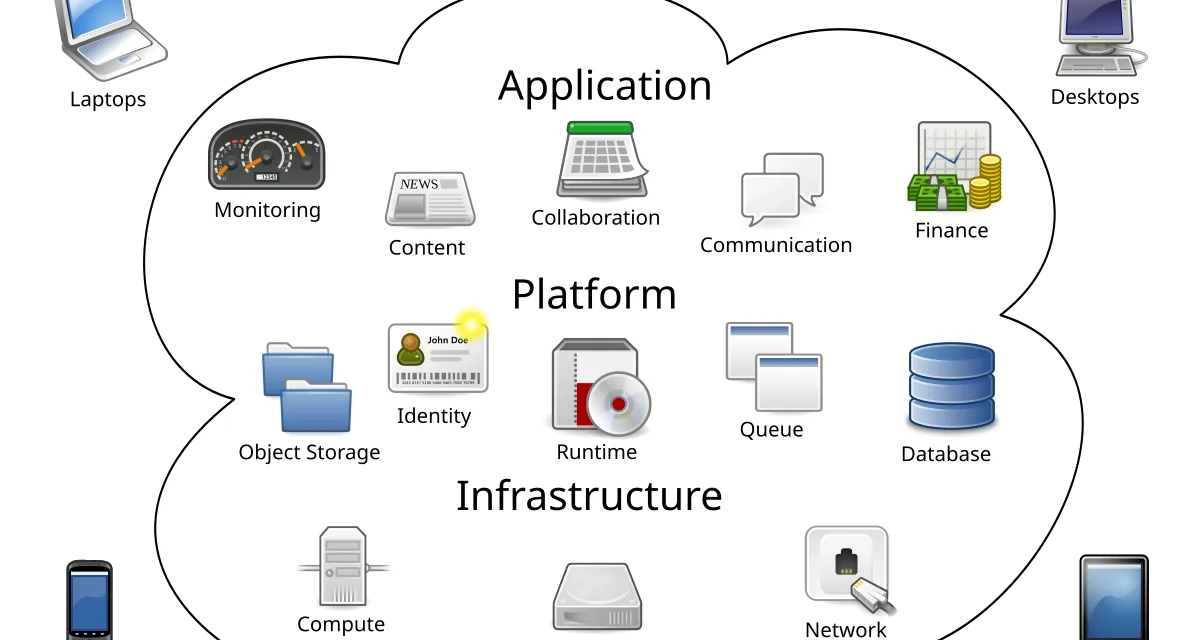

- Classic FinOps looks at cost per physical resource; for AI, the units are tokens, calls, computed embeddings, and GPU time, and spend scales nonlinearly with use.

- The most expensive mistake is using frontier models for tasks that don’t need them: audits typically find that 40-70% of large-model calls could go to medium models without perceptible quality loss.

- Tagging calls by feature with billing metadata is the prerequisite for any cost analysis.

- Per-feature budgets with an alert at 70% of the ceiling prevent billing incidents; they are trivial to implement and pay off immediately.

- For owned GPUs: measure real utilization per minute, consolidate workloads, and use the spot market for interruption-tolerant loads.

Why classic FinOps isn’t enough

Traditional FinOps looks at cost per resource: EC2 instances, S3 storage, data transfer. It works well when consumption is relatively stable and units are physical. For AI, units are tokens, calls, computed embeddings, and GPU time in mixed workloads. A single badly designed agent can spend in a day what an instance costs in a month, and classic cost-per-service dashboards don’t capture the cause because they lump everything into one API spend line.

The added difficulty is that AI spend tends to scale nonlinearly with use. An app with a thousand daily users consumes tokens fairly predictably. Add agents that reason over several steps and give them tools that chain calls, and you can multiply spend by ten without changing the user count.
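
One common mechanism behind this nonlinearity is context accumulation. The sketch below assumes a stateless chat API in which each agent step resends the full history (system prompt plus every earlier tool result), and it uses made-up token counts; it counts billed input tokens only and ignores output tokens and prompt caching.

```python
def agent_input_tokens(steps: int, system_prompt: int, tokens_per_step: int) -> int:
    """Billed input tokens for an agent run that resends its whole history each step.

    Assumes a stateless API: step k sends the system prompt plus the output of
    all k-1 earlier steps. Output tokens and prompt caching are ignored.
    """
    total = 0
    context = system_prompt
    for _ in range(steps):
        total += context            # the full context is billed again on every call
        context += tokens_per_step  # reasoning and tool results are appended
    return total


if __name__ == "__main__":
    # Hypothetical sizes: 2,000-token system prompt, 1,000 tokens added per step.
    for steps in (1, 5, 10):
        print(steps, agent_input_tokens(steps, system_prompt=2_000, tokens_per_step=1_000))
    # 1 2000
    # 5 20000
    # 10 65000
```

Under these assumptions five steps already cost ten times a single call, and ten steps cost more than thirty times as much, because the total grows with the square of the number of steps.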

The most common expensive mistakes

Using frontier models for everything. Claude Opus and GPT-4o cost ten to thirty times more per token than their small siblings. A typical audit finds that 40-70% of frontier-model calls could have used a medium or small model with no perceptible quality loss: basic classification, short summaries, structured extraction, binary validation.
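
A quick way to size this opportunity: if a share of frontier calls moves to a model that is `price_ratio` times cheaper per token, the saving on that spend is `share * (1 - 1 / price_ratio)`. The sketch assumes downgraded calls consume the same number of tokens and ignores the cost of deciding which calls to downgrade; the inputs simply reuse the ranges quoted above.

```python
def routing_savings(downgradable_share: float, price_ratio: float) -> float:
    """Fraction of frontier-model spend saved by moving calls to a cheaper model.

    downgradable_share: fraction of frontier calls that can use the smaller model.
    price_ratio: how many times more the frontier model costs per token.
    Assumes the downgraded calls use the same number of tokens as before.
    """
    if not 0.0 <= downgradable_share <= 1.0:
        raise ValueError("downgradable_share must be between 0 and 1")
    if price_ratio < 1.0:
        raise ValueError("price_ratio must be at least 1")
    return downgradable_share * (1.0 - 1.0 / price_ratio)


if __name__ == "__main__":
    # Low end: 40% of calls, 10x price gap. High end: 70% of calls, 30x gap.
    print(f"{routing_savings(0.40, 10):.0%}")  # 36%
    print(f"{routing_savings(0.70, 30):.0%}")  # 68%
```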

No cache in RAG. A retrieval-augmented generation app without an embedding cache, a response cache for repeated queries, or prompt caching pays several times for the same work. Query distributions are usually heavily skewed, often with the top 20% of queries producing around 80% of traffic; caching those reduces the bill dramatically.
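
Of the three caches, the response cache is the easiest to illustrate in application code. The version below is a sketch under stated assumptions: an in-memory, exact-match cache keyed on the model name and a normalized query, with a time-to-live; `call_model` is a hypothetical stand-in for whatever client the application uses. An embedding cache follows the same pattern, while prompt caching is offered by the model providers themselves.

```python
import hashlib
import time
from typing import Callable


class ResponseCache:
    """Exact-match response cache with a time-to-live (in-memory sketch).

    A shared deployment would more likely use an external store and include the
    prompt or template version in the key so that prompt changes invalidate entries.
    """

    def __init__(self, ttl_seconds: float = 3600.0) -> None:
        self.ttl_seconds = ttl_seconds
        self._entries: dict[str, tuple[float, str]] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(model: str, query: str) -> str:
        # Case and whitespace differences should not cause a paid cache miss.
        normalized = " ".join(query.lower().split())
        return hashlib.sha256(f"{model}\n{normalized}".encode("utf-8")).hexdigest()

    def get_or_call(self, model: str, query: str, call_model: Callable[[str, str], str]) -> str:
        """Return a cached answer if still fresh, otherwise pay for one model call."""
        key = self._key(model, query)
        now = time.monotonic()
        cached = self._entries.get(key)
        if cached is not None and now - cached[0] < self.ttl_seconds:
            self.hits += 1
            return cached[1]
        self.misses += 1
        answer = call_model(model, query)  # hypothetical client function
        self._entries[key] = (now, answer)
        return answer
```

Exact matching only catches literal repeats after normalization; semantic caching (matching by embedding similarity) catches more, at the cost of occasionally returning an answer to a slightly different question.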

The evaluation loop with an expensive model. Using a cheap model for the primary response and an expensive one to validate it seems reasonable until you notice that every response now generates two calls, one of them at frontier prices. Validation based on simple rules or a small binary-classification model works almost as well at roughly five percent of the cost.
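
For structured outputs, many answers can be checked with plain rules before any model is involved. The sketch below assumes a hypothetical invoice-extraction feature whose answer must be a JSON object with `vendor`, `total`, and `currency`; the field names and allowed currencies are illustrative. Only answers that fail these checks would need escalation to a larger model or a human.

```python
import json
from typing import Any

# Hypothetical output contract for an invoice-extraction feature.
REQUIRED_FIELDS = ("vendor", "total", "currency")
ALLOWED_CURRENCIES = {"USD", "EUR", "GBP"}


def _is_number(value: Any) -> bool:
    # bool is a subclass of int in Python, so exclude it explicitly.
    return isinstance(value, (int, float)) and not isinstance(value, bool)


def validate_extraction(raw: str) -> list[str]:
    """Return the list of rule violations; an empty list means the answer passes."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value must be an object"]

    problems = [f"missing field: {name}" for name in REQUIRED_FIELDS if name not in data]
    if "vendor" in data and not (isinstance(data["vendor"], str) and data["vendor"].strip()):
        problems.append("vendor must be a non-empty string")
    if "total" in data and not (_is_number(data["total"]) and data["total"] >= 0):
        problems.append("total must be a non-negative number")
    if "currency" in data and not (
        isinstance(data["currency"], str) and data["currency"] in ALLOWED_CURRENCIES
    ):
        problems.append("unknown currency")
    return problems
```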

Controls that actually move the bill

Tag calls by feature. Every model call should carry metadata on which product area fires it, which user consumes it, and which flow it belongs to. Without this, there is no way to tell whether a bill spike comes from a product change, a traffic spike, or a bug. Tools like Helicone, LangSmith, or Langfuse aggregate this well for later analysis.
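
Independently of the observability tool, the idea fits in a few lines: record each call with its tags and token counts, then aggregate cost along any tag. The model names and per-million-token prices below are hypothetical placeholders, not real rate cards.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical (input, output) prices in USD per million tokens.
PRICE_PER_MTOK = {
    "small-model": (0.15, 0.60),
    "frontier-model": (5.00, 25.00),
}


@dataclass(frozen=True)
class CallRecord:
    feature: str        # product area that fired the call
    user_id: str        # who consumed it
    flow: str           # which flow the call belongs to
    model: str
    input_tokens: int
    output_tokens: int

    @property
    def cost_usd(self) -> float:
        price_in, price_out = PRICE_PER_MTOK[self.model]
        return (self.input_tokens * price_in + self.output_tokens * price_out) / 1_000_000


def cost_by(records: list[CallRecord], dimension: str) -> dict[str, float]:
    """Sum cost along one tag dimension: 'feature', 'user_id' or 'flow'."""
    totals: dict[str, float] = defaultdict(float)
    for record in records:
        totals[getattr(record, dimension)] += record.cost_usd
    return dict(totals)
```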

Per-feature budget with automatic alerts. Every component consuming AI should have a monthly ceiling and an alert when spend passes 70% of it. When a misconfigured agent starts calling itself recursively, you find out in twenty minutes, not when the bill arrives. Setup takes a few hours; the first incident it prevents costs much more.
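
A per-feature budget with a single 70% alert might look like the sketch below. It is an in-memory illustration: `notify` is a hypothetical hook (a chat webhook or pager in practice), and the monthly reset and persistence a real system would need are left out.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class FeatureBudget:
    feature: str
    monthly_limit_usd: float
    alert_ratio: float = 0.70               # alert at 70% of the ceiling
    notify: Callable[[str], None] = print   # hypothetical hook: webhook, pager, etc.
    spent_usd: float = 0.0
    alerted: bool = False

    def record(self, cost_usd: float) -> None:
        """Add the cost of one call and alert once when the threshold is crossed."""
        self.spent_usd += cost_usd
        threshold = self.alert_ratio * self.monthly_limit_usd
        if not self.alerted and self.spent_usd >= threshold:
            self.alerted = True
            share = self.spent_usd / self.monthly_limit_usd
            self.notify(
                f"[budget] {self.feature}: ${self.spent_usd:.2f} of "
                f"${self.monthly_limit_usd:.2f} spent ({share:.0%})"
            )

    def exhausted(self) -> bool:
        """True once the monthly ceiling is reached; callers can block or degrade."""
        return self.spent_usd >= self.monthly_limit_usd
```

Alerting once per threshold keeps a runaway agent from flooding the channel; a second, hard threshold at 100% (`exhausted()`) is where a team might choose to block calls or fall back to a cheaper model.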

Complexity-based model router. Classify each request by difficulty before sending it to a model: trivial queries to the small model, medium-complexity ones to the mid-sized model, multi-step reasoning to the large one. A simple heuristic based on length and query type eliminates most overspend without hurting perceived quality. Tools such as Martian, RouteLLM, or LiteLLM can automate much of this with little configuration.
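
A minimal version of such a heuristic is sketched below. The tier names, keyword list, task types, and word-count thresholds are illustrative assumptions to be tuned against real traffic and quality evaluations; this is not how Martian, RouteLLM, or LiteLLM work internally.

```python
import re

# Hypothetical model identifiers for the three tiers.
SMALL, MEDIUM, LARGE = "small-model", "medium-model", "large-model"

# Words that often signal multi-step reasoning (illustrative, not exhaustive).
REASONING_HINTS = re.compile(
    r"\b(why|compare|plan|design|prove|step by step|trade-?offs?)\b", re.IGNORECASE
)
# Task types the text lists as safe for small models.
SIMPLE_TASKS = {"classify", "summarize", "extract", "validate"}


def route(query: str, task_type: str) -> str:
    """Pick a model tier from query length and task type (heuristic sketch)."""
    words = len(query.split())
    if task_type in SIMPLE_TASKS and words < 200:
        return SMALL
    if REASONING_HINTS.search(query) or words > 800:
        return LARGE
    return MEDIUM
```

Logging the chosen tier alongside the feature tags makes it possible to check later whether downgraded requests actually kept their quality.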

GPUs: the other front

For teams operating their own models, GPU management is the second FinOps front. Reserved H200s and B200s at CoreWeave or Lambda cost between three and seven dollars per GPU-hour. If average utilization sits below 50%, you are systematically paying for idle hardware.
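
The arithmetic behind that 50% figure is simple: idle time still bills, so the effective price of a busy GPU-hour is the list price divided by average utilization. The sketch uses a hypothetical $5 per GPU-hour, inside the range quoted above, and assumes about 730 hours in a month.

```python
HOURS_PER_MONTH = 730.0  # average month length in hours (8,760 / 12)


def cost_per_busy_hour(price_per_gpu_hour: float, avg_utilization: float) -> float:
    """Effective price of one hour of GPU time that actually does work."""
    if not 0.0 < avg_utilization <= 1.0:
        raise ValueError("avg_utilization must be in (0, 1]")
    return price_per_gpu_hour / avg_utilization


def monthly_idle_spend(gpus: int, price_per_gpu_hour: float, avg_utilization: float) -> float:
    """Dollars per month paid for GPU time that sits idle."""
    return gpus * price_per_gpu_hour * HOURS_PER_MONTH * (1.0 - avg_utilization)


if __name__ == "__main__":
    for util in (0.4, 0.8):
        print(f"{util:.0%}: ${cost_per_busy_hour(5.0, util):.2f} per busy hour, "
              f"${monthly_idle_spend(8, 5.0, util):,.0f} idle per month on 8 GPUs")
```

At 40% utilization each busy hour effectively costs $12.50, twice what it costs at 80%.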

- Measure utilization per minute, not just at peaks: average utilization is often much lower than peak.

- Consolidate workloads onto fewer, better-used GPUs instead of splitting them across many small instances with slack.

- Use the spot market for interruption-tolerant loads: training jobs with checkpointing, latency-insensitive batch inference, periodic embedding generation. Spot prices are often 40-80% lower than reserved rates.
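
The first two practices can be sketched together. Per-minute samples can be collected with `nvidia-smi --query-gpu=utilization.gpu --format=csv,noheader,nounits -l 60`; note that this metric reports the share of time any kernel was running, so it can overstate how busy the GPU really is. The consolidation step below is a simple first-fit-decreasing packing by average utilization with an assumed 80% headroom cap; it ignores GPU memory limits and workloads that peak at the same time, both of which a real plan must check. Workload names are hypothetical.

```python
import statistics


def utilization_summary(samples: list[float]) -> dict[str, float]:
    """Summarize per-minute utilization samples (percent, 0-100)."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    p95 = ordered[min(len(ordered) - 1, int(0.95 * len(ordered)))]
    return {"mean": statistics.fmean(samples), "p95": p95, "peak": ordered[-1]}


def consolidate(avg_loads: dict[str, float], capacity: float = 80.0) -> list[list[str]]:
    """First-fit decreasing: pack workloads by average % utilization onto as few
    GPUs as possible, keeping each GPU's summed average at or below `capacity`."""
    gpus: list[tuple[float, list[str]]] = []
    for name, load in sorted(avg_loads.items(), key=lambda kv: kv[1], reverse=True):
        for i, (used, names) in enumerate(gpus):
            if used + load <= capacity:
                gpus[i] = (used + load, names + [name])
                break
        else:
            # No existing GPU has room (or the workload alone exceeds capacity).
            gpus.append((load, [name]))
    return [names for _, names in gpus]


if __name__ == "__main__":
    samples = [10.0] * 50 + [90.0] * 10  # one hypothetical hour of per-minute samples
    print(utilization_summary(samples))
    print(consolidate({"chat-api": 35, "rerank": 30, "embeddings": 25, "summarize": 20, "eval": 10}))
```

In the example, the hour averages about 23% despite a 90% peak, and five workloads averaging 10-35% each fit on two GPUs instead of five.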

Conclusion

FinOps for AI in 2026 is not especially complicated; it mostly requires applying the usual controls that people skip. Tag calls, budget per feature, alert before disaster, route by complexity, measure GPU utilization, and use spot capacity for interruption-tolerant work. With those six controls, most teams cut their bill by thirty to sixty percent without quality loss.

The usual resistance is that these controls look like bureaucracy that slows development, and in a culture where AI is perceived as cheap magic, nobody wants to be the one pulling the brake. But the bill arrives every month, and the first time it comes in two or three times over budget, the whole company suddenly discovers it needed FinOps. Putting light controls in place from the start is far less painful than implementing them in crisis mode.

Last reviewed: 2026-04-19.