Firecracker: microVMs for multi-tenant services

Updated: 2026-05-03

Firecracker was open-sourced by AWS in 2018 and has since been the isolation layer behind millions of AWS Lambda invocations and Fargate containers. For years it was a mostly-AWS project. In 2025 that changed: a growing number of third-party code-execution platforms are adopting it outside AWS.

Key takeaways

- Firecracker is a VMM written in Rust, based on KVM, with a narrow goal: boot microVMs in under 125 ms with under 5 MB of overhead per VM.

- It doesn’t replace Docker: it replaces the container runtime when stronger isolation than a shared kernel is needed.

- Three factors drive adoption: proliferation of untrusted-code execution platforms, security pressure on multi-tenant platforms, and ecosystem maturity (Kata Containers, Fly.io, Koyeb).

- Versus gVisor: Firecracker offers better I/O performance and a KVM-based isolation boundary that is more heavily audited, in exchange for slightly slower startup and higher memory overhead.

- The three remaining rough edges are networking (tap devices, bridges), storage (rootfs conversion) and VMM observability.

What it is and isn’t

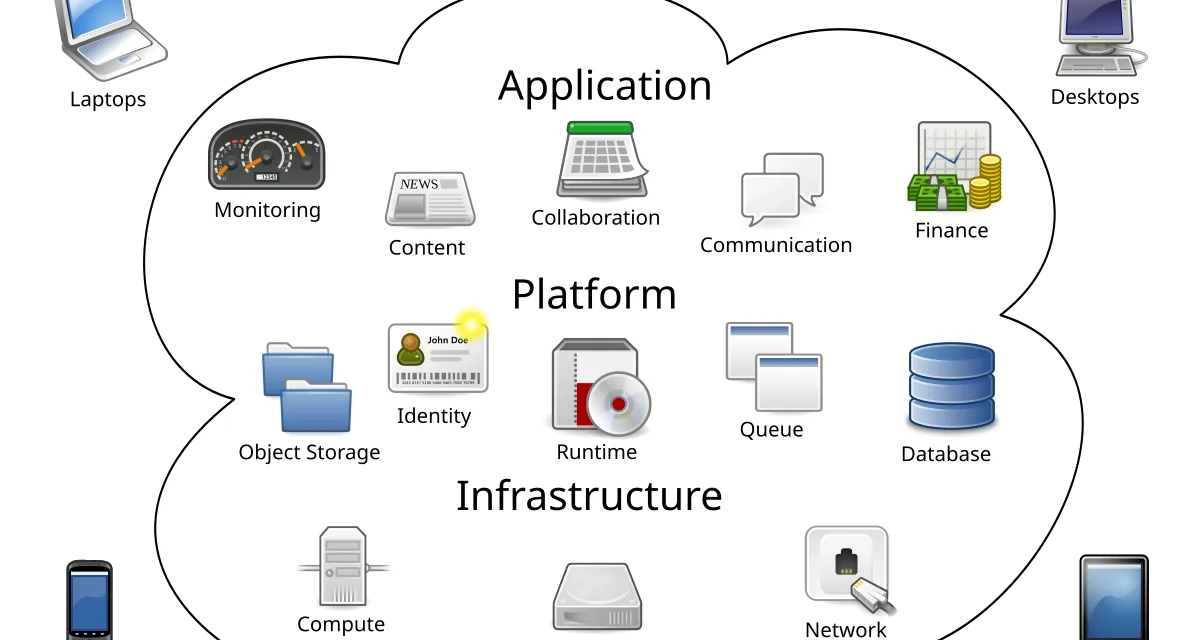

Firecracker is a virtual machine monitor (VMM) written in Rust, based on KVM, with a very narrow goal: boot microVMs in under 125 milliseconds, use under 5 MB of memory overhead per VM, and expose a minimalist control API. It’s not a general-purpose hypervisor like QEMU; it deliberately omits legacy devices (USB, graphics, audio) that make no sense for serverless workloads.
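To make the "minimalist control API" concrete, here is a sketch of the request sequence that configures and starts one microVM over Firecracker's REST API, which listens on a Unix socket. The endpoints (`/boot-source`, `/drives/{id}`, `/machine-config`, `/actions`) follow Firecracker's published API; the kernel and rootfs paths, vCPU count and memory size are placeholders, and field sets can vary between releases, so check the API specification for the version you run.

```python
import http.client
import json
import socket


class UnixHTTPConnection(http.client.HTTPConnection):
    """HTTPConnection that talks to a Unix domain socket instead of TCP."""

    def __init__(self, socket_path: str):
        super().__init__("localhost")  # host only fills the Host header
        self.socket_path = socket_path

    def connect(self) -> None:
        sock = socket.socket(socket.AF_UNIX, socket.SOCK_STREAM)
        sock.connect(self.socket_path)
        self.sock = sock


def boot_plan(kernel: str, rootfs: str, vcpus: int = 1, mem_mib: int = 128):
    """Return the (method, path, body) calls that configure and start a microVM."""
    return [
        ("PUT", "/boot-source", {
            "kernel_image_path": kernel,
            "boot_args": "console=ttyS0 reboot=k panic=1",
        }),
        ("PUT", "/drives/rootfs", {
            "drive_id": "rootfs",
            "path_on_host": rootfs,
            "is_root_device": True,
            "is_read_only": False,
        }),
        ("PUT", "/machine-config", {"vcpu_count": vcpus, "mem_size_mib": mem_mib}),
        # The final action boots the guest with the configuration above.
        ("PUT", "/actions", {"action_type": "InstanceStart"}),
    ]


def apply_plan(socket_path: str, plan) -> None:
    """Send each call in order; Firecracker replies with a 2xx (typically 204) on success."""
    for method, path, body in plan:
        conn = UnixHTTPConnection(socket_path)
        conn.request(method, path, body=json.dumps(body),
                     headers={"Content-Type": "application/json"})
        resp = conn.getresponse()
        detail = resp.read().decode()
        conn.close()
        if resp.status >= 300:
            raise RuntimeError(f"{method} {path} -> {resp.status}: {detail}")
```

In use, you would start the VMM with `firecracker --api-sock /tmp/firecracker.socket` and then call `apply_plan("/tmp/firecracker.socket", boot_plan("vmlinux", "rootfs.ext4"))`. Keeping the plan as plain data makes it easy to log or diff per tenant before anything touches the socket.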

The architectural position matters: Firecracker doesn’t replace Docker. It replaces the container runtime (runc, crun) when you need stronger isolation than a shared kernel can offer. Application code still ships as an OCI image; what changes is that, instead of running in a host-kernel namespace, it runs inside a microVM with its own minimalist Linux kernel.
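With Kata Containers on Kubernetes, that runtime swap shows up as a single field: the image stays a normal OCI image and only `runtimeClassName` changes. The sketch below builds both variants of a Pod manifest as Python dicts. The RuntimeClass name `kata-fc` is an assumption (it is the name kata-deploy commonly registers for its Firecracker configuration, but your cluster may use another), and the image reference is a placeholder.

```python
import json
from typing import Optional


def pod_manifest(name: str, image: str, runtime_class: Optional[str] = None) -> dict:
    """Build a minimal Pod manifest; runtime_class=None keeps the default runc path."""
    spec: dict = {"containers": [{"name": name, "image": image}]}
    if runtime_class is not None:
        # Only this field changes: containerd or CRI-O maps the RuntimeClass
        # to the Kata shim, which runs the Pod inside a microVM.
        spec["runtimeClassName"] = runtime_class
    return {"apiVersion": "v1", "kind": "Pod",
            "metadata": {"name": name}, "spec": spec}


# Same OCI image, two isolation levels.
plain = pod_manifest("job", "registry.example.com/job:1.0")
isolated = pod_manifest("job", "registry.example.com/job:1.0", runtime_class="kata-fc")
print(json.dumps(isolated, indent=2))
```

The point of the comparison is that the build pipeline and registry are untouched; the decision about isolation moves to cluster configuration.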

Why interest is returning

Three factors converge to explain the 2025 adoption:

- Proliferation of untrusted-code execution platforms. In the context of LLM agents, more products run model-generated code in an environment isolated from the main service. Firecracker provides that isolation with startup fast enough for human interactivity (hundreds of milliseconds, not seconds). This ties directly to prompt-injection defense: when an agent executes code, microVM isolation is the last barrier.

- Security pressure on multi-tenant function platforms. Documented container escapes have led even mid-sized security teams to rule out namespace-only isolation. A CVE in runc or containerd can compromise the host; a comparable bug inside a microVM stays contained in the guest, because the attacker still has to break out through KVM and the VMM.

- Ecosystem maturity. Firecracker now has stable integrations with Kata Containers (compatible with Kubernetes' CRI interface), with Fly.io as a public platform, with Koyeb, and with several code-execution sandbox providers for agents. Two years ago, choosing Firecracker meant building all the plumbing; now there are off-the-shelf options.

Versus gVisor: a concrete trade-off

The natural alternative in the reinforced-isolation space is gVisor, Google’s syscall-interception sandbox. Both solve the same problem but with different mechanisms:

gVisor:

- Fast startup, comparable to a container.

- Very low memory overhead.

- Transparent to the application: there is no guest kernel to build or maintain.

- I/O-heavy workloads take a performance hit.

- The attack surface is the gVisor engine itself (its user-space kernel); a bug there can open a path to the host.

Firecracker:

- Startup slightly slower than gVisor, but still fast.

- Slightly higher memory overhead.

- The guest needs its own kernel.

- I/O performance close to native.

- The attack surface is KVM plus Firecracker's small set of emulated devices; KVM is far more audited than gVisor.

My practical rule: if the workload is short-lived (milliseconds to seconds) and tolerates syscall overhead, gVisor is operationally simpler. If the workload is longer or I/O-intensive (agent code execution, file processing, ML inference), Firecracker performs better and offers isolation most auditors accept without discussion.
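The rule of thumb above can be written down as a small decision function, mostly to make its inputs explicit. It also folds in the closing recommendation that code your team controls stays on standard containers. `choose_sandbox` and `Workload` are hypothetical helpers, and the 10-second cut-off is an illustrative assumption: the text says "milliseconds to seconds" without a hard threshold.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    expected_runtime_s: float  # typical wall-clock lifetime of one execution
    io_intensive: bool         # heavy file, network or device I/O
    untrusted_code: bool       # code your team does not control


def choose_sandbox(w: Workload, short_lived_limit_s: float = 10.0) -> str:
    """Encode the practical rule; the threshold is a hypothetical default to tune."""
    if not w.untrusted_code:
        return "container"    # runc/crun is enough for code you control
    if w.expected_runtime_s <= short_lived_limit_s and not w.io_intensive:
        return "gvisor"       # short-lived and tolerant of syscall overhead
    return "firecracker"      # longer or I/O-heavy: near-native I/O, KVM boundary
```

For example, a 500 ms snippet evaluator maps to gVisor, while an agent that processes uploaded files for minutes maps to Firecracker.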

Rough edges still there

Firecracker in 2025 is mature but not friction-free. Three areas need attention:

- Networking. Network plumbing requires tap devices, bridges, and typically a daemon managing address assignment and routes. Tools like firecracker-containerd and the firecracker CNI plugin ease some of the work, but it remains more complex than standard Docker networking.

- Storage. Firecracker attaches raw disk image files (typically ext4) as virtio block devices; it does not support qcow2. Preparing a bootable image from an OCI image isn't automatic: you convert the rootfs into a filesystem image, add an init if the image lacks one, and build or pick a minimal guest kernel.

- Observability. Inside the microVM the guest is a normal Linux system. But observing Firecracker itself (active VMs, VMM memory use, per-VM boot time) requires polling the control API or collecting the metrics each VMM writes to a configured file or named pipe. Without that, diagnosing a production incident is hard; it calls for the same discipline as continuous eBPF profiling.
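For the networking and storage items, the sketch below generates the host-side commands for one microVM: a tap device, a raw ext4 root filesystem built from an exported OCI image, and the matching `PUT /network-interfaces/{id}` body for the Firecracker API. The commands (`ip tuntap`, `docker export`, `truncate`, `mkfs.ext4 -d`) are standard Linux and Docker tools; the tap name, addresses, MAC and image size are placeholders. It only returns command lists instead of running them, because they need root and differ per host; bridging, NAT and cleanup of the temporary container are left out.

```python
import shlex


def tap_commands(tap: str, host_cidr: str) -> list:
    """Create a tap device for one microVM and give the host side an address."""
    return [
        ["ip", "tuntap", "add", "dev", tap, "mode", "tap"],
        ["ip", "addr", "add", host_cidr, "dev", tap],
        ["ip", "link", "set", "dev", tap, "up"],
    ]


def rootfs_commands(image: str, workdir: str, out: str, size: str = "1G") -> list:
    """Flatten an OCI image into a directory, then pack it into a raw ext4 file."""
    tree = f"{workdir}/rootfs"
    return [
        ["mkdir", "-p", tree],
        # docker export streams a container's filesystem as a tar archive.
        ["sh", "-c",
         f"docker export $(docker create {shlex.quote(image)}) | tar -x -C {shlex.quote(tree)}"],
        ["truncate", "-s", size, out],
        # mkfs.ext4 -d populates the new filesystem from a directory
        # (e2fsprogs 1.43+). OCI images usually lack an init: add one first.
        ["mkfs.ext4", "-F", "-d", tree, out],
    ]


def network_interface_body(iface_id: str, tap: str, mac: str) -> dict:
    """Body for PUT /network-interfaces/{iface_id}, pointing the guest NIC at the tap."""
    return {"iface_id": iface_id, "host_dev_name": tap, "guest_mac": mac}
```

Returning plain argument lists keeps the plan reviewable and lets a provisioning daemon run it with `subprocess.run(cmd, check=True)` under whatever privilege model the host uses.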
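On the observability side, Firecracker can write its own metrics as JSON objects, one per flush, to a file or named pipe registered through `PUT /metrics` with a `metrics_path`; a flush can also be forced with the `FlushMetrics` action. A minimal collector only needs to read those lines and pull out the fields you chart. The metric names used below are illustrative assumptions; the schema changes between releases, so take real names from your version's output.

```python
import json
from typing import Any, Iterable, Iterator


def parse_metrics(lines: Iterable[str]) -> Iterator[dict]:
    """Yield one dict per non-empty JSON line written by the VMM."""
    for line in lines:
        line = line.strip()
        if line:
            yield json.loads(line)


def get_path(snapshot: dict, dotted: str, default: Any = None) -> Any:
    """Look up a nested field by a dotted path such as 'group.counter'."""
    node: Any = snapshot
    for key in dotted.split("."):
        if not isinstance(node, dict) or key not in node:
            return default
        node = node[key]
    return node


def series(lines: Iterable[str], dotted: str) -> list:
    """Extract one metric across all flushes, skipping snapshots that lack it."""
    return [v for s in parse_metrics(lines)
            if (v := get_path(s, dotted)) is not None]
```

Feeding `series` from each VMM's metrics pipe and tagging the result with the VM identifier is enough to answer "which microVM is misbehaving" during an incident.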

My read

Firecracker moved from AWS internal curiosity to a real piece of the multi-tenant stack. For the concrete case of running untrusted user code (LLM agents, function platforms, cloud IDE sandboxes), the choice is no longer obviously containers; it’s between gVisor and Firecracker, with pure containers ruled out by the security team.

What’s notable is the window for intermediate platforms. Three years ago, only AWS could afford operating Firecracker at scale. Today, with tools like Kata Containers, Fly Machines or firecracker-containerd, a small team can assemble a multi-tenant platform with serious isolation in weeks. That shifts entry barriers for products that used to need a hyperscaler behind them.

My recommendation if you’re evaluating: if the case is running code your team doesn’t control (end users, agents, plugins), Firecracker deserves a proof of concept this week. If the case is isolating services your team does control, stay with standard containers and resist the temptation to complicate the stack for fears that don’t materialise in your threat model.