Enterprise GraphRAG: patterns after a year of adoption

Updated: 2026-05-03

When Microsoft published the GraphRAG paper in 2024, the promise was clear: where classical vector RAG failed, on questions demanding synthesis across documents or transitive relationships between entities, a graph built ahead of time by the LLM itself would give better answers. By April 2026, enough real deployments have accumulated to separate myth from pattern. The short answer: GraphRAG works where information has dense relational structure and fails expensively where there are only loose documents.

Key takeaways

- The entry rule: if you cannot explain to a human what the five most important entity types in your corpus are, your corpus probably isn’t ready for GraphRAG.

- LLM ingestion costs ten to thirty times more in tokens than vectorising the same corpus.

- Surviving deployments use small models for extraction and frontier models only for querying.

- The three architectural patterns that survived: vector-plus-graph hybrid, layered extraction, and federated graph.

- For mid-size graphs (under a million entities), PostgreSQL with well-designed indexes beats dedicated graph databases on operating economics.

What GraphRAG Actually Is in Practice

Classical vector RAG retrieves text fragments by semantic similarity to the question. It works well for pointed questions where the answer lives literally in a fragment. It fails when questions require:

- Transitive relations: “which customers are affected by last week’s policy change?”

- Global synthesis: “what are the recurring themes in complaints this quarter?”

No fragment contains the answer; the corpus has to be navigated.
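To make the first failure mode concrete, here is a toy example in Python. The entity IDs, relation labels, and the `affected_customers` helper are hypothetical. Each fact could come from a different document (a policy memo, a product catalogue, a CRM export), so no retrieved fragment answers the question alone; the answer only appears after a two-hop traversal.

```python
# Hypothetical relations extracted from separate documents.
# No single document links the policy change to any customer.
triples = [
    ("policy:refund-2026-04", "changes_terms_of", "product:premium-plan"),
    ("customer:acme", "subscribes_to", "product:premium-plan"),
    ("customer:globex", "subscribes_to", "product:basic-plan"),
    ("customer:initech", "subscribes_to", "product:premium-plan"),
]


def affected_customers(policy, triples):
    """Two hops: policy -> products it changes <- customers subscribed to them."""
    products = {o for s, p, o in triples if s == policy and p == "changes_terms_of"}
    return sorted(s for s, p, o in triples if p == "subscribes_to" and o in products)


print(affected_customers("policy:refund-2026-04", triples))
# ['customer:acme', 'customer:initech']
```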

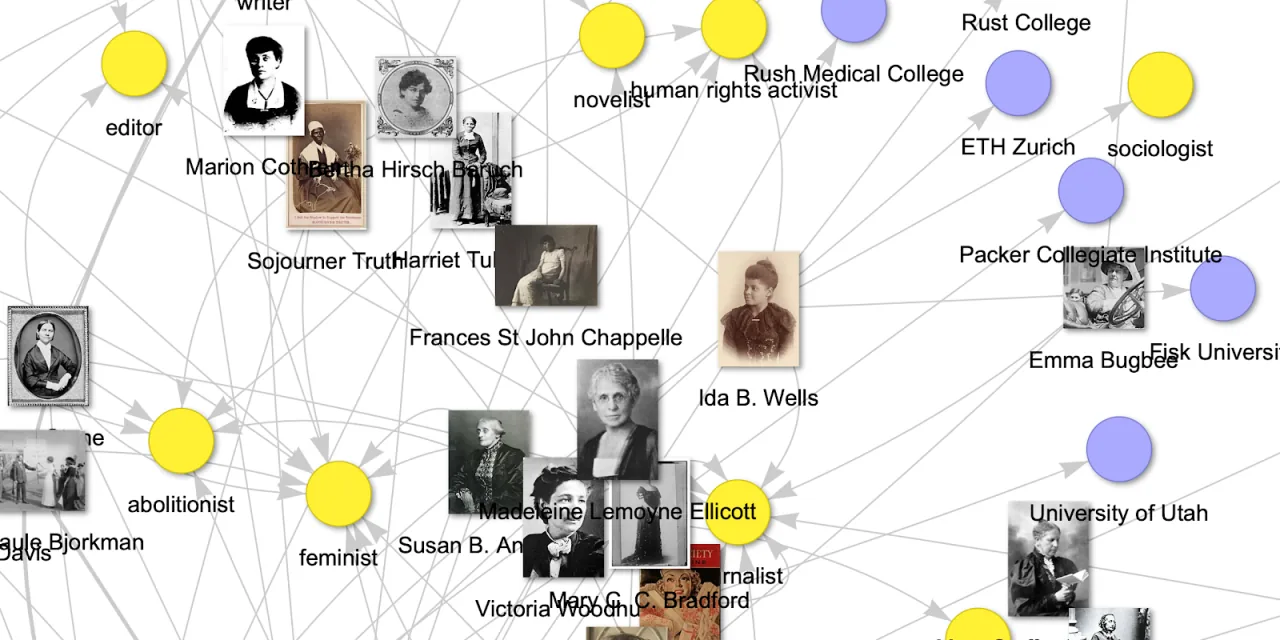

GraphRAG addresses this by building, at ingestion time, a graph of entities and relations extracted from documents by an LLM, plus a hierarchy of communities (clusters of densely connected entities) computed over the graph, each summarised by the LLM. At query time, the system decides whether the question is local (uses vector retrieval plus subgraph context) or global (aggregates community summaries for synthesis). The result is noticeably better on broad-scope questions.
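A minimal sketch of the two query paths, assuming the graph has already been extracted. Connected components stand in for real community detection (Microsoft's implementation uses hierarchical Leiden clustering), and the formatted strings stand in for LLM-written community summaries; every name here is illustrative.

```python
from collections import defaultdict

# Hypothetical extracted graph (undirected entity-to-entity links).
edges = [
    ("module:billing", "customer:acme"),
    ("module:billing", "module:invoicing"),
    ("module:auth", "customer:globex"),
]

adjacency = defaultdict(set)
for a, b in edges:
    adjacency[a].add(b)
    adjacency[b].add(a)


def detect_communities(adjacency):
    """Stand-in for community detection: connected components via DFS."""
    seen, communities = set(), []
    for start in sorted(adjacency):
        if start in seen:
            continue
        component, stack = set(), [start]
        while stack:
            node = stack.pop()
            if node not in component:
                component.add(node)
                stack.extend(adjacency[node] - component)
        seen |= component
        communities.append(component)
    return communities


def local_context(entity):
    """Local question: the entity plus its one-hop neighbourhood."""
    return {entity} | adjacency.get(entity, set())


def global_context(communities):
    """Global question: aggregate one summary per community.

    The f-string is a placeholder for an LLM-written community summary.
    """
    summaries = [f"{len(c)} entities: {', '.join(sorted(c))}" for c in communities]
    return "\n".join(summaries)


communities = detect_communities(adjacency)
print(local_context("module:billing"))
print(global_context(communities))
```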

What Works, What Doesn’t, With a Year of Data

Surviving deployments share a clear profile. Corpora with identifiable, recurring entities — customers, products, contracts, processes — where relationships are stable and nameable:

- Corporate helpdesks where entities are systems, modules, and customers.

- Legal areas where they are contracts, clauses, and parties.

- Sales support where they are accounts, opportunities, and products.

Here GraphRAG moves from novelty to operational tool and the return is visible.

Abandoned or downsized deployments share a different profile. Corpora of loose documents without clear hierarchy: unstructured meeting notes, personal notes, mixed corporate email, tickets without taxonomy. Here the LLM-extracted graph becomes noise dressed as signal: duplicate entities with spelling variants, relationships invented by hallucination, communities without semantic cohesion.

The emerging rule is simple: if you cannot explain to a human what the five most important entity types in your corpus are, your corpus probably isn’t ready for GraphRAG. This is not a defect of the technique; it is an entry requirement that many pilots skipped.

The Real Cost of Ingestion

The part demos don’t mention is the cost of building the graph. Extracting entities, relations, and communities with an LLM costs, in tokens, between ten and thirty times what it costs to vectorise the same corpus. For a corpus of a million medium-sized documents, that is thousands of euros just for the first ingestion.
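The arithmetic behind that estimate is easy to reproduce. This sketch assumes about 2,000 tokens per medium-sized document and uses placeholder prices per million tokens for a small model and a frontier model; none of these figures come from a real price list, so substitute your provider's current rates.

```python
def ingestion_cost(n_docs, tokens_per_doc, token_multiplier, eur_per_million_tokens):
    """Rough token cost of one full pass over a corpus.

    token_multiplier is about 1 for embedding (each token read once) and,
    per the article, 10 to 30 for LLM extraction.
    """
    total_tokens = n_docs * tokens_per_doc * token_multiplier
    return total_tokens / 1_000_000 * eur_per_million_tokens


DOCS, TOKENS_PER_DOC = 1_000_000, 2_000  # assumed corpus shape
SMALL_MODEL_PRICE = 0.15                 # hypothetical EUR per million tokens
FRONTIER_PRICE = 3.00                    # hypothetical EUR per million tokens

for multiplier in (10, 30):
    small = ingestion_cost(DOCS, TOKENS_PER_DOC, multiplier, SMALL_MODEL_PRICE)
    frontier = ingestion_cost(DOCS, TOKENS_PER_DOC, multiplier, FRONTIER_PRICE)
    print(f"{multiplier}x: small model ~EUR {small:,.0f}, frontier ~EUR {frontier:,.0f}")
# 10x: small model ~EUR 3,000, frontier ~EUR 60,000
# 30x: small model ~EUR 9,000, frontier ~EUR 180,000
```

With these placeholder prices the small model is twenty times cheaper for the same tokens, which is the lever behind the first optimisation below.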

Emerging optimisations are pragmatic:

- Using small, cheap models for extraction and frontier models only for querying. The saving can be an order of magnitude.

- Incremental ingestion: only new or modified documents are processed and their graph merged into the existing one. This separates prototypes from production systems.

- Entity deduplication using semantic embeddings of entity names plus explicit fusion rules, rather than purely LLM-based approaches.
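A minimal sketch of the embedding-plus-rules approach to deduplication. Character-trigram vectors stand in for a real semantic name embedding, and both the 0.6 similarity threshold and the single fusion rule (never merge across entity types) are illustrative assumptions, not tuned values. Union-find makes merges transitive, so chains of spelling variants collapse into one group.

```python
import math
import re
from collections import Counter


def name_vector(name):
    """Stand-in for a semantic name embedding: character trigram counts."""
    padded = f"  {re.sub(r'[^a-z0-9 ]', '', name.lower())} "
    return Counter(padded[i:i + 3] for i in range(len(padded) - 2))


def cosine(a, b):
    dot = sum(count * b[gram] for gram, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def deduplicate(entities, threshold=0.6):
    """Group (name, type) pairs that refer to the same real-world entity."""
    parent = list(range(len(entities)))

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving
            i = parent[i]
        return i

    vectors = [name_vector(name) for name, _ in entities]
    for i in range(len(entities)):
        for j in range(i + 1, len(entities)):
            same_type = entities[i][1] == entities[j][1]  # fusion rule
            if same_type and cosine(vectors[i], vectors[j]) >= threshold:
                parent[find(j)] = find(i)

    groups = {}
    for i, entity in enumerate(entities):
        groups.setdefault(find(i), []).append(entity)
    return list(groups.values())


entities = [
    ("Acme Corp", "customer"),
    ("ACME Corp.", "customer"),
    ("Acme Corporation", "customer"),
    ("Acme Corp", "product"),  # same name, different type: kept apart
    ("Globex", "customer"),
]
for group in deduplicate(entities):
    print(group)
```

Swapping in a real embedding model only changes `name_vector`. The pairwise loop is quadratic, so at corpus scale candidates would normally come from a nearest-neighbour index rather than an all-pairs comparison.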

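```python
# Incremental GraphRAG ingestion pattern, April 2026
def ingest(doc, llm_small, graph_store, entity_resolver):
    """Merge one new or modified document into the existing graph."""
    # A small, cheap model extracts candidate entities and relations
    extracted = llm_small.extract_entities_and_relations(doc)
    # Map extracted mentions onto entities already in the graph (deduplication)
    resolved = entity_resolver.resolve(extracted, graph_store)
    # Upserts update existing nodes and edges instead of duplicating them
    graph_store.upsert_nodes(resolved.nodes)
    graph_store.upsert_edges(resolved.edges)
    # Only communities touched by this document lose their cached summary
    affected_communities = graph_store.communities_touching(resolved.nodes)
    for community in affected_communities:
        # summaries regenerate on demand, not at ingest
        community.invalidate_summary()
```

Maintenance: What Nobody Warns You About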

A corporate graph is not immutable. Customers merge, products get renamed, departments reorganise. A graph built six months ago around entities that have since merged, split, or been renamed starts returning structurally stale information even when the documents are fresh. Teams that have avoided these issues run periodic reconciliation: entities are merged, split, or renamed to follow business changes, and communities are recomputed once modifications cross a threshold.
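One reconciliation pass can be sketched as follows, assuming business changes arrive as an alias map from old entity IDs to current ones; renames and merges both fit that shape. Splits are not covered, since deciding which edges belong to which successor usually needs the source documents. The 5% recompute threshold and all IDs are placeholders.

```python
def canonical(entity, aliases):
    """Follow rename/merge chains (A -> B -> C) to the current entity ID."""
    seen = set()
    while entity in aliases and entity not in seen:  # guard against cycles
        seen.add(entity)
        entity = aliases[entity]
    return entity


def reconcile(edges, aliases, recompute_fraction=0.05):
    """Rewrite edges after business changes and flag community recompute.

    Returns (new_edges, changed_count, needs_recompute).
    """
    new_edges, changed = set(), 0
    for src, rel, dst in edges:
        updated = (canonical(src, aliases), rel, canonical(dst, aliases))
        if updated != (src, rel, dst):
            changed += 1
        new_edges.add(updated)  # a merge can collapse duplicate edges
    needs_recompute = changed / max(len(edges), 1) > recompute_fraction
    return new_edges, changed, needs_recompute


edges = [
    ("customer:acme", "uses", "product:alpha"),
    ("customer:acme-eu", "uses", "product:alpha"),
    ("customer:globex", "uses", "product:beta"),
]
aliases = {
    "customer:acme-eu": "customer:acme",   # merge
    "product:alpha": "product:alpha-pro",  # rename
}
new_edges, changed, needs_recompute = reconcile(edges, aliases)
print(sorted(new_edges), changed, needs_recompute)
```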

Traceability is the other uncomfortable piece. When a human asks why the system produced a given global answer, the graph offers a useful skeleton: entities involved, relations queried, communities aggregated, source documents for each edge. But that reasoning chain is only reviewable if it is stored from the start. For traceability patterns in broader agentic systems, see lessons from agents in production.
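Storing the chain from the start can be as simple as writing one record per answer. The sketch below is a hypothetical schema whose fields mirror the elements listed above: entities involved, relations queried with the documents behind each edge, and communities aggregated. All IDs and document names are invented.

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass, field


@dataclass
class EdgeEvidence:
    source: str
    relation: str
    target: str
    documents: list[str]  # documents the edge was extracted from


@dataclass
class AnswerTrace:
    question: str
    entities: list[str] = field(default_factory=list)
    edges: list[EdgeEvidence] = field(default_factory=list)
    communities: list[str] = field(default_factory=list)

    def source_documents(self) -> list[str]:
        """Every document that contributed an edge to this answer."""
        return sorted({doc for edge in self.edges for doc in edge.documents})

    def to_json(self) -> str:
        """Serialise the whole chain, nested edges included, for storage."""
        return json.dumps(asdict(self), sort_keys=True)


trace = AnswerTrace(
    question="Which customers are affected by last week's policy change?",
    entities=["policy:refund-2026-04", "product:premium-plan", "customer:acme"],
    edges=[
        EdgeEvidence("policy:refund-2026-04", "changes_terms_of",
                     "product:premium-plan", ["memo-0412.pdf"]),
        EdgeEvidence("customer:acme", "subscribes_to",
                     "product:premium-plan", ["crm-export-03.csv", "memo-0412.pdf"]),
    ],
    communities=["community:billing-policies"],
)
print(trace.source_documents())
print(trace.to_json())
```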

Architectures That Survived

Three patterns recur in productive deployments:

- Hybrid vector-plus-graph: the retriever decides per query whether to activate the graph or stay in vectors. Most common and most defensible.

- Layered extraction: a small LLM extracts entities and a big one consolidates and summarises communities on demand. The stable-cost pattern.

- Federated graph: each corporate domain maintains its own graph with its own schema and an upper graph of common entities bridges them. The pattern that survives reorganisations without full re-ingestion.
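The per-query decision in the hybrid pattern can start out very simple. The following is a keyword heuristic assumed purely for illustration; the cue lists, route names, and naive substring entity matching are all hypothetical, and a production router would more likely be a small classifier or an LLM call evaluated on labelled queries.

```python
from __future__ import annotations

# Hypothetical cue lists, not taken from any real system.
GLOBAL_CUES = ("recurring", "themes", "trends", "overall", "across all")
RELATIONAL_CUES = ("affected by", "depends on", "related to", "connected to")


def route(question: str, known_entities: set[str]) -> str:
    """Pick a retrieval path for one query."""
    q = question.lower()
    if any(cue in q for cue in GLOBAL_CUES):
        return "graph-global"  # aggregate community summaries
    # Naive substring match; real systems link entities properly.
    mentioned = {e for e in known_entities if e.lower() in q}
    if len(mentioned) >= 2 or any(cue in q for cue in RELATIONAL_CUES):
        return "graph-local"  # vector hits plus subgraph context
    return "vector"  # plain similarity retrieval, the cheap default


known = {"Billing", "Invoicing", "Auth"}
for question in (
    "What are the recurring themes in complaints this quarter?",
    "Which customers are affected by last week's policy change?",
    "Does Billing call Invoicing synchronously?",
    "How do I reset my password?",
):
    print(route(question, known), "<-", question)
```

The design point is the default: anything that does not clearly need the graph stays on the cheaper vector path.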

Storage has converged less than expected. Neo4j is still the most common choice, out of inertia and because of its tooling, but PostgreSQL, either with Apache AGE or with plain edge tables, has gained ground because many teams prefer not to add another database to the inventory. For mid-size graphs, under a million entities, PostgreSQL with well-designed indexes is economically sensible and operationally familiar.
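A minimal sketch of the plain edge-table option. It uses Python's built-in `sqlite3` so it runs without a server; the schema, the index on the reverse direction, and the `WITH RECURSIVE` traversal are standard SQL with the same shape in PostgreSQL, though placeholder syntax differs by driver. Table names and data are illustrative, and Apache AGE (which adds openCypher queries) is not shown.

```python
import sqlite3

# SQLite stands in for PostgreSQL so the sketch runs anywhere.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE nodes (id TEXT PRIMARY KEY, type TEXT NOT NULL);
CREATE TABLE edges (
    src TEXT NOT NULL REFERENCES nodes (id),
    rel TEXT NOT NULL,
    dst TEXT NOT NULL REFERENCES nodes (id),
    PRIMARY KEY (src, rel, dst)  -- serves outbound lookups by src
);
CREATE INDEX edges_by_dst ON edges (dst, rel);  -- serves inbound lookups
""")
conn.executemany("INSERT INTO nodes VALUES (?, ?)", [
    ("customer:acme", "customer"), ("product:premium", "product"),
    ("module:billing", "module"), ("team:payments", "team"),
])
conn.executemany("INSERT INTO edges VALUES (?, ?, ?)", [
    ("customer:acme", "uses", "product:premium"),
    ("product:premium", "depends_on", "module:billing"),
    ("module:billing", "owned_by", "team:payments"),
])

# Bounded outbound traversal; the depth limit also stops cycles.
REACHABLE = """
WITH RECURSIVE walk (id, depth) AS (
    SELECT ?, 0
    UNION
    SELECT e.dst, w.depth + 1
    FROM walk AS w JOIN edges AS e ON e.src = w.id
    WHERE w.depth < ?
)
SELECT DISTINCT id FROM walk WHERE depth > 0 ORDER BY id
"""


def reachable(start, max_hops):
    """Entities reachable from start in at most max_hops outbound edges."""
    return [row[0] for row in conn.execute(REACHABLE, (start, max_hops))]


print(reachable("customer:acme", 2))  # ['module:billing', 'product:premium']
```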

When It’s Worth It

GraphRAG is neither a silver bullet nor an academic experiment. It is a tool with a clear application profile: if your corpus has explicit relational structure and questions demanding synthesis, it earns its cost. If not, a well-tuned vector RAG with reranking and better chunking remains enough for 80% of cases and far cheaper to operate.

The decision that most separates success from failure is the initial scope. Teams that started with a bounded domain — contracts, tickets for a specific product, a narrow legal area — learned the real cost, refined the extraction pipeline, and expanded from a proven base. Those who started aiming to unify all corporate knowledge from day one spent a lot and concluded little.

The lesson is classical and transferable: with GraphRAG, success is built by amplification from a working core, not by ambition from the full map.