gVisor: sandboxing for multi-tenant containers

Updated: 2026-05-03

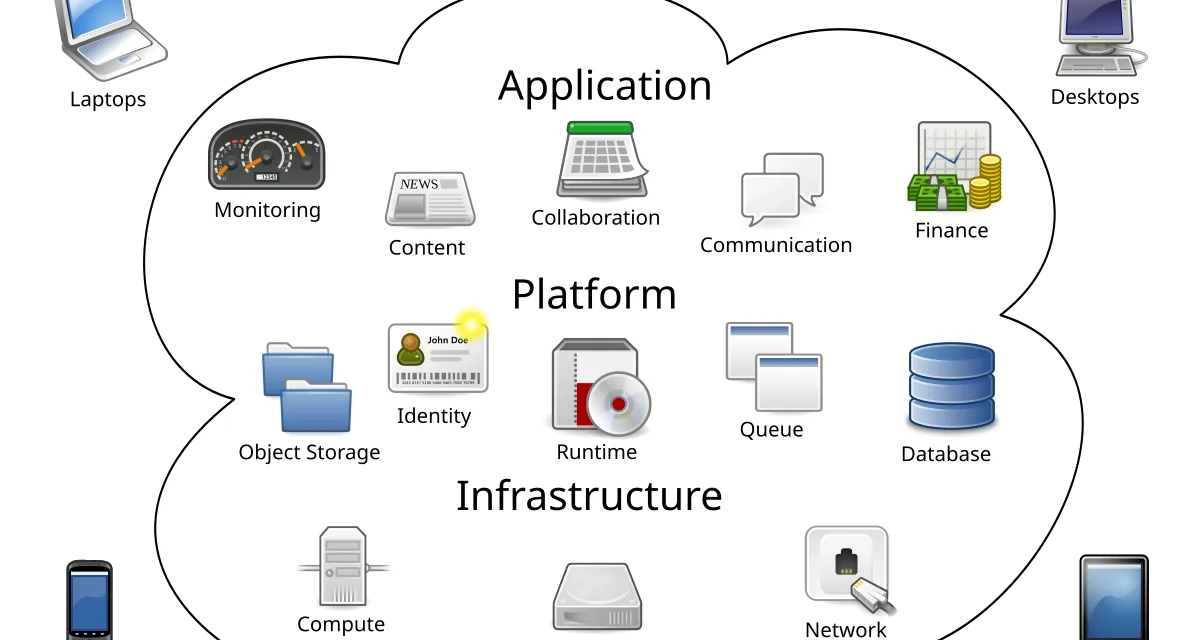

Containers share the host kernel. That property explains much of their lightness, but it also sets their ceiling in multi-tenant environments where trust between workloads is low. gVisor was born inside Google precisely to raise that ceiling: it inserts a kernel written in Go between the container process and the real host kernel, which reduces the exposed system-call surface and offers an intermediate form of isolation between the traditional container and the lightweight virtual machine.

Key takeaways

- gVisor implements the OCI-compatible runsc runtime, which replaces runc by interposing a user-space kernel called Sentry.

- The systrap mode (available since 2024) is the recommended default: good performance, portable, no special hardware requirements.

- For CPU workloads the cost is 5–10 %; for I/O-intensive workloads the impact can reach 20–50 %.

- Integrating gVisor into an existing Kubernetes cluster requires installing runsc and declaring a RuntimeClass; other pods keep using runc.

- Makes sense for multi-tenant workloads, serverless functions, and untrusted-code execution; not for heavy databases or high-IOPS loads.

What gVisor is and why it was built

gVisor implements an OCI-compatible runtime called runsc that replaces runc. The decisive difference is that runsc doesn’t let the container process talk to the host kernel directly. Instead, a component called Sentry, a Linux kernel reimplemented in Go in user space, intercepts those calls and responds to most of them itself, only talking to the host when unavoidable and always through a narrow perimeter.

The result is that a kernel exploit that would normally escalate from container to host has to traverse Sentry first, which is much smaller and written in a memory-safe language. Google open-sourced the code in 2018 and uses it in production to run customer code in App Engine, Cloud Run, and Cloud Functions.

Architecture: Sentry, Gofer and platform modes

When a container starts with runsc, the runtime creates two main host processes:

- Sentry: the user-space kernel that runs the container code.

- Gofer: a separate process that mediates filesystem access.

The separation is deliberate: even if an attacker compromises Sentry, they still have to cross Gofer to touch disk, and neither has privileged capabilities beyond what’s strictly needed.

System-call interception is handled by a platform, and there are three main modes:

- ptrace: portable but slow, rarely used in production.

- KVM: leverages hardware virtualization extensions for notably better performance, but requires /dev/kvm.

- systrap (recommended since 2024): uses seccomp filters that trap system calls (SECCOMP_RET_TRAP, which delivers SIGSYS to the calling thread) so Sentry can handle them without depending on KVM or ptrace. Good performance, portable, no special hardware requirements.
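To make the trade-off between modes concrete, here is a minimal sketch of how a deployment script might pick a value for runsc's --platform flag. The function name choose_platform and its parameters are hypothetical, invented for illustration; only the platform names and the /dev/kvm requirement come from the description above.

```python
import os


def choose_platform(prefer_kvm: bool = False, kvm_device: str = "/dev/kvm") -> str:
    """Return a platform name to pass to runsc's --platform flag.

    Hypothetical helper: systrap is the fallback because it needs no
    special hardware; kvm is chosen only when explicitly preferred and
    the device node is readable and writable by the current user.
    """
    if prefer_kvm and os.access(kvm_device, os.R_OK | os.W_OK):
        return "kvm"
    return "systrap"


if __name__ == "__main__":
    print(f"--platform={choose_platform(prefer_kvm=True)}")
```

ptrace is deliberately left out of the sketch, since it is rarely used in production.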

Important detail: Sentry doesn’t implement every Linux system call. It covers most of what a typical program needs, but obscure calls will fail if the container tries them. This is deliberate: each implemented call is potential attack surface.
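The idea of a deliberately incomplete system-call table can be illustrated with a toy model. This is not gVisor code: the class ToySentry, its two handlers, and the choice to fail with ENOSYS are assumptions made for the example, and the real Sentry is far more nuanced.

```python
import errno


class ToySentry:
    """Toy model of a user-space kernel with a small syscall table."""

    def __init__(self):
        self._pid = 1  # illustrative: the sandboxed process sees its own PID
        # Only the calls listed here exist; nothing else is reachable.
        self._handlers = {
            "getpid": self._getpid,
            "uname": self._uname,
        }

    def _getpid(self):
        return self._pid

    def _uname(self):
        return "Linux (emulated)"

    def syscall(self, name, *args):
        handler = self._handlers.get(name)
        if handler is None:
            # Unimplemented call: fail instead of forwarding it to the host.
            raise OSError(errno.ENOSYS, f"{name}: function not implemented")
        return handler(*args)


if __name__ == "__main__":
    sentry = ToySentry()
    print(sentry.syscall("getpid"))
    try:
        sentry.syscall("some_obscure_call")
    except OSError as exc:
        print("rejected:", exc.strerror)
```

Every entry added to the handler table is one more piece of code an attacker can reach, which is the trade-off the paragraph above describes.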

Performance: where it wins and where it loses

For CPU-heavy workloads with little kernel contact, gVisor is very close to a native container. Differences are on the order of 5–10 % for pure compute loads on systrap.

The story changes with I/O-heavy loads:

- Redis suffers little because its operations barely touch the filesystem.

- Postgres or MySQL with constant writes show 20–50 % penalties in transactions per second.

- Networking also has impact: gVisor ships its own Go TCP stack that doesn’t match the Linux kernel in raw throughput.

The operational lesson: gVisor is a good choice for HTTP APIs, short-running serverless functions, batch jobs, and untrusted user code execution. It’s a bad choice for heavy databases, distributed filesystems, or any workload whose main metric is IOPS.
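For capacity planning, the ranges above translate into simple arithmetic. The sketch below is only that: the function throughput_range is hypothetical, the 10,000 baseline is made up, and the penalty figures are the ones quoted in this section, not fresh measurements.

```python
def throughput_range(baseline: float, penalty_low: float, penalty_high: float):
    """Return (worst, best) expected throughput for a fractional penalty range."""
    if not 0 <= penalty_low <= penalty_high < 1:
        raise ValueError("penalties must satisfy 0 <= low <= high < 1")
    worst = baseline * (1 - penalty_high)
    best = baseline * (1 - penalty_low)
    return worst, best


if __name__ == "__main__":
    # Hypothetical baseline of 10,000 operations per second under runc.
    cpu_worst, cpu_best = throughput_range(10_000, 0.05, 0.10)
    db_worst, db_best = throughput_range(10_000, 0.20, 0.50)
    print(f"CPU-bound service: {cpu_worst:.0f} to {cpu_best:.0f} ops/s")
    print(f"Write-heavy database: {db_worst:.0f} to {db_best:.0f} ops/s")
```

With those assumptions, a write-heavy database could lose up to half its throughput, while a CPU-bound service stays within a tenth of its baseline.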

Comparison with Kata Containers and microVMs

The obvious comparison is with Kata Containers, which also aims for stronger container isolation but gets there by starting a small virtual machine with a hypervisor such as Firecracker or QEMU. The threat models are different:

- Kata bets on the hardware barrier of the hypervisor.

- gVisor bets on surface reduction in user space.

Kata tends to offer better compatibility with I/O-heavy workloads because a complete Linux kernel runs inside the VM. gVisor tends to start faster and consume less fixed memory per container because there’s no full hypervisor to load. On Cloud Run, where cold start matters, choosing gVisor makes sense.

Firecracker on its own is a different building block. Hypervisor separation gives it a strong threat model, but operating bare Firecracker requires much more orchestrator integration than runsc, which plugs into containerd with a handful of config lines.

Operation and deployment

Integrating gVisor into an existing cluster is relatively straightforward. Install runsc, configure containerd to recognize it as an alternative runtime, and use a Kubernetes RuntimeClass to mark which pods should start under it. Marked pods run with Sentry and Gofer; the rest keep using runc. This lets you apply gVisor only to workloads that need it without imposing its I/O cost on the whole cluster. The same mixed-runtime pattern appears when running Wasm under containerd, where multiple runtimes coexist on the same node.
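The Kubernetes side of that setup can be sketched as two manifests, generated here as JSON. The RuntimeClass name "gvisor", the pod name, and the image are assumptions chosen for illustration. The handler value must match whatever runtime name was registered in the containerd configuration; "runsc" is assumed here.

```python
import json

RUNTIME_CLASS_NAME = "gvisor"  # assumed name; any valid object name works
HANDLER = "runsc"              # assumed to match the runtime name in containerd


def runtime_class() -> dict:
    """RuntimeClass that maps a cluster-visible name to the runsc handler."""
    return {
        "apiVersion": "node.k8s.io/v1",
        "kind": "RuntimeClass",
        "metadata": {"name": RUNTIME_CLASS_NAME},
        "handler": HANDLER,
    }


def sandboxed_pod(name: str, image: str) -> dict:
    """Pod that opts in to the sandbox via spec.runtimeClassName."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "runtimeClassName": RUNTIME_CLASS_NAME,
            "containers": [{"name": name, "image": image}],
        },
    }


def manifest_list(*items: dict) -> dict:
    """Wrap several objects in a single List so they can be applied together."""
    return {"apiVersion": "v1", "kind": "List", "items": list(items)}


if __name__ == "__main__":
    bundle = manifest_list(runtime_class(), sandboxed_pod("untrusted-job", "nginx"))
    print(json.dumps(bundle, indent=2))
```

The output can be piped to kubectl apply -f -. Pods that omit runtimeClassName are unaffected and keep running under the default runtime.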

runsc exports Prometheus-format metrics with CPU and memory usage, and logs integrate with the usual logging stack. Diagnosis when something fails is trickier than with runc because messages can come from Sentry, but project documentation has improved a lot and there’s an active community.

When it pays off

gVisor has a clear niche: multi-tenant workloads where isolation matters and the usage pattern is CPU-heavy and I/O-light.

- Platforms running third-party code.

- Serverless functions.

- Test environments where different users share nodes.

- Educational clusters and malware analysis labs.

In all those cases the attack-surface reduction easily offsets the 5–10 % performance loss. Many operators use gVisor for part of the cluster, Kata for another, and runc for the rest, picking the barrier that best matches each workload’s trust level and performance profile. That heterogeneity is today’s mature answer to container isolation in multi-tenant environments. Where gVisor doesn’t pay off is in first-party workloads from an organization that trusts its own code: if all pods come from the same team through the same pipeline, the extra sandbox rarely justifies the operational cost.
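The selection logic in this article boils down to two questions: is the code trusted, and is the workload I/O-heavy? The sketch below encodes that as a hypothetical helper. The function pick_runtime is not part of any tool, and sending untrusted I/O-heavy workloads to Kata is an inference from the comparison section rather than a rule stated by either project.

```python
def pick_runtime(trusted: bool, io_heavy: bool) -> str:
    """Suggest a runtime from the workload's trust level and I/O profile."""
    if trusted:
        # First-party code from a trusted pipeline: the extra sandbox
        # rarely justifies its operational cost.
        return "runc"
    if io_heavy:
        # Untrusted and I/O-bound: a full guest kernel copes better with I/O.
        return "kata"
    # Untrusted, CPU-heavy, I/O-light: the niche described above.
    return "gvisor"


if __name__ == "__main__":
    workloads = [
        ("serverless function", False, False),
        ("tenant database", False, True),
        ("internal API", True, False),
    ]
    for label, trusted, io_heavy in workloads:
        print(f"{label}: {pick_runtime(trusted, io_heavy)}")
```

A real policy would weigh more factors, such as cold-start budget and memory per container, but the two-question version captures the main split.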