Kata Containers: strong isolation with a Docker-like experience

Updated: 2026-05-03

Kata Containers is one of those projects that has been part of the conversation for years without ever becoming the default option. In October 2025 the story has a new nuance: the project is approaching version 3.10 under the OpenInfra Foundation, with a concrete list of improvements that answer the most common criticisms of recent years. After testing it seriously on a lab cluster, I have a clearer opinion than after earlier attempts.

Key takeaways

- Kata runs each container inside a lightweight microVM with its own kernel, keeping the OCI interface for Kubernetes and containerd.

- The move to Rust (the guest agent in 2.0, the runtime in the 3.x series) halved the agent memory footprint and cut start times to under three seconds in most cases.

- Confidential container support (AMD SEV-SNP, Intel TDX) makes it one of the few viable options without rewriting the application.

- A Kata pod consumes around 450 MB versus 200 MB for runc; CPU penalty on CPU-bound workloads is 2 to 5 percent.

- The most promising 2025 use case is not the classic production cluster but AI agent code-execution platforms.

The underlying idea that has never changed

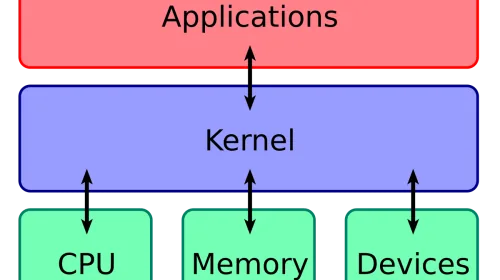

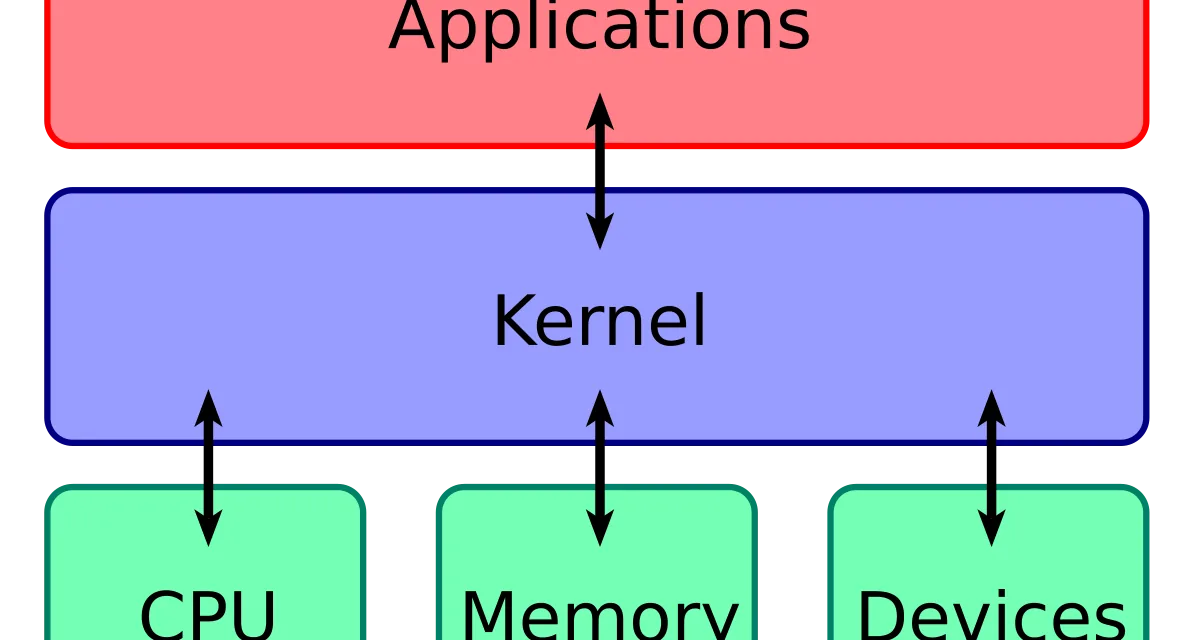

Kata Containers solves a specific problem and always solves it the same way: runc containers share the kernel with the host, and that is an unacceptable risk vector for certain use cases. The Kata answer is to run each container inside a lightweight virtual machine with its own guest kernel, while keeping the OCI interface so Kubernetes, containerd, and the rest of the ecosystem can use it as if it were runc.

The trick lies in combining three pieces:

- A fast hypervisor (trimmed QEMU, Firecracker, or Cloud Hypervisor depending on configuration).

- A minimal guest kernel compiled to boot in milliseconds.

- An agent inside the guest that receives requests from the host runtime and translates them into local calls.

The result is a container that starts almost as fast as a regular one, consumes slightly more memory, and has isolation equivalent to a VM.

What changes in the 3.x series

The most visible jump in Kata 3.0, released in October 2022, was runtime-rs, a Rust rewrite of the runtime. The guest agent, originally written in Go, had already moved to Rust in Kata 2.0. Those changes are not cosmetic: the Rust agent halves the agent memory footprint and removes several classes of intermittent failures that affected containers with heavy I/O. Startup times dropped from six to eight seconds to under three in most cases.

The second major improvement is confidential container support, which lets workloads run inside hardware trusted execution environments (TEEs) such as AMD SEV-SNP or Intel TDX, where the memory of the whole guest VM is protected from the host. For anyone who needs to demonstrate that not even the cluster operator can read container memory, that support makes Kata one of the few viable options without rewriting the application.

The third is hypervisor work. Cloud Hypervisor has become the default choice in many scenarios because it starts faster than QEMU and is more complete than Firecracker when a workload needs additional devices. Having three interchangeable hypervisors behind the same interface lets you pick one per workload.

How it compares to gVisor and runc

The obligatory comparison is with gVisor, Google’s alternative for strong container isolation. gVisor intercepts system calls in user space through a kernel implemented in Go, without needing a hypervisor. It is lighter than Kata but the isolation model differs: it depends on its own user-space kernel being free of flaws, and compatibility with real workloads is narrower because it doesn’t support all syscalls.

Kata, by using a standard Linux guest kernel, has near-total compatibility with any application that runs in a container. The price is more memory and slightly higher latency on network and storage operations. For typical web application or job queue workloads the difference is small. For latency-sensitive or I/O-heavy workloads it shows.

Against runc the trade-off is the usual one: Kata isolates more and consumes more. In a production cluster where every container is trusted, runc is enough and cheaper. In a multi-tenant cluster where you run third-party code or confidential workloads, Kata earns its overhead.

The operational model in Kubernetes

The most mature part is the Kubernetes integration. The Kata Containers operator covers the happy path: deploy the operator, create a RuntimeClass named kata, and any pod selecting it via spec.runtimeClassName runs isolated.
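As a minimal sketch of that happy path, the Python snippet below builds the two objects as plain dictionaries and prints them as a JSON `List`, which `kubectl apply -f -` accepts. It assumes the containerd runtime handler on the nodes is registered under the name `kata`; installations often expose other handler names (for example one per hypervisor), so check the node configuration. The pod name and image are illustrative placeholders.

```python
import json

# Assumption: containerd on each node exposes a runtime handler named "kata".
# The RuntimeClass "handler" must match the name in the node's containerd config.
KATA_HANDLER = "kata"


def kata_runtime_class(name: str = "kata", handler: str = KATA_HANDLER) -> dict:
    """Return a node.k8s.io/v1 RuntimeClass that maps `name` to a CRI handler."""
    return {
        "apiVersion": "node.k8s.io/v1",
        "kind": "RuntimeClass",
        "metadata": {"name": name},
        "handler": handler,
    }


def isolated_pod(name: str, image: str, runtime_class: str = "kata") -> dict:
    """Return a Pod that opts into the Kata runtime via spec.runtimeClassName."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            # Pods without this field keep the node's default runtime (typically runc).
            "runtimeClassName": runtime_class,
            "containers": [{"name": name, "image": image}],
        },
    }


if __name__ == "__main__":
    # Hypothetical pod name and image, for illustration only.
    manifests = {
        "apiVersion": "v1",
        "kind": "List",
        "items": [kata_runtime_class(), isolated_pod("sandbox", "nginx:1.27")],
    }
    print(json.dumps(manifests, indent=2))
```

Only the one field in the pod spec changes; everything else about the pod stays identical to a runc pod, which is what makes the mixed-cluster pattern practical.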

This enables a pattern that was theory three years ago: mixed clusters where most pods run on runc and only pods marked sensitive use Kata. The operator ensures nodes have the hypervisor, guest kernel, and agent ready; the Kubernetes scheduler places sensitive pods on labeled nodes.
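One way to express that placement in Kubernetes itself is the `scheduling` field of a RuntimeClass: its `nodeSelector` is merged into every pod that selects the class at admission time, and a pod whose own selector conflicts with it is rejected. The sketch below assumes a hypothetical node label `isolation.example.com/kata=true` on the Kata-ready nodes (real installations may use a different label) and a containerd handler named `kata`. The second function is only an approximation of the merge step, written out to show the behavior, not the admission controller's actual code.

```python
import json

# Hypothetical label placed on nodes that have the hypervisor, guest kernel and agent.
KATA_NODE_LABEL = {"isolation.example.com/kata": "true"}


def kata_runtime_class_with_scheduling(handler: str = "kata") -> dict:
    """RuntimeClass whose scheduling.nodeSelector steers its pods to labeled nodes."""
    return {
        "apiVersion": "node.k8s.io/v1",
        "kind": "RuntimeClass",
        "metadata": {"name": "kata"},
        "handler": handler,  # assumption: must match the containerd handler name
        "scheduling": {"nodeSelector": dict(KATA_NODE_LABEL)},
    }


def effective_node_selector(pod_selector: dict, runtime_class: dict) -> dict:
    """Approximate the RuntimeClass admission merge: combine both selectors,
    raising if the same key carries conflicting values."""
    merged = dict(pod_selector)
    rc_selector = runtime_class.get("scheduling", {}).get("nodeSelector", {})
    for key, value in rc_selector.items():
        if key in merged and merged[key] != value:
            raise ValueError(f"conflicting nodeSelector for {key!r}")
        merged[key] = value
    return merged


if __name__ == "__main__":
    rc = kata_runtime_class_with_scheduling()
    print(json.dumps(rc, indent=2))
    # A sensitive pod that also pins a zone keeps both constraints.
    print(effective_node_selector({"topology.kubernetes.io/zone": "zone-a"}, rc))
```

Putting the selector on the RuntimeClass rather than on each pod means teams only have to set `runtimeClassName`; the node constraint follows automatically.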

In practice, three recurring problems appear:

- Persistent storage over NFS or host-mounted CSI volumes (requires care with virtiofs).

- Workloads needing GPU or FPGA (passthrough works but increases boot cost).

- Observability tools expecting to see container processes on the host, which doesn’t happen when the container runs in a guest VM.

Real memory and performance cost

Numbers matter because this is where the most wishful thinking lives. On a lab cluster with 32 GB nodes:

- A runc pod consumes about 200 MB above the application.

- The same pod on Kata consumes around 450 MB.

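On a heavy Java application using 2 GB, the extra 250 MB is about 12 percent of the application's own memory. On a small Node application using 100 MB, the pod grows from about 300 MB to about 550 MB, nearly doubling its total footprint.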
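The arithmetic behind those two examples can be reproduced from the lab figures above (about 200 MB of per-pod overhead on runc, about 450 MB on Kata). This sketch treats both overheads as fixed constants, which is a simplification: real overhead varies with hypervisor, guest kernel and configuration. It also estimates naive pod density on a 32 GB node, ignoring memory reserved for the system and the kubelet.

```python
# Per-pod overhead measured on the lab cluster described above, in MB.
RUNC_OVERHEAD_MB = 200
KATA_OVERHEAD_MB = 450
NODE_MEMORY_MB = 32 * 1024  # 32 GB node; ignores system/kubelet reservations


def footprint(app_mb: int) -> dict:
    """Compare total per-pod memory on runc vs Kata for an app using app_mb."""
    runc_total = app_mb + RUNC_OVERHEAD_MB
    kata_total = app_mb + KATA_OVERHEAD_MB
    delta = kata_total - runc_total
    return {
        "runc_total_mb": runc_total,
        "kata_total_mb": kata_total,
        # Extra memory Kata costs, relative to the application's own usage.
        "delta_vs_app_pct": round(100 * delta / app_mb, 1),
        # How much larger the whole pod gets when switching from runc to Kata.
        "total_ratio": round(kata_total / runc_total, 2),
        # Naive pod density per node, with memory as the only constraint.
        "runc_pods_per_node": NODE_MEMORY_MB // runc_total,
        "kata_pods_per_node": NODE_MEMORY_MB // kata_total,
    }


for label, app_mb in [("Java, 2 GB", 2048), ("Node, 100 MB", 100)]:
    print(label, footprint(app_mb))
```

For the Java case the delta works out to 12.2 percent of the application and the node holds 13 Kata pods instead of 14. For the small Node application the whole pod grows by a factor of about 1.83, and density drops from 109 to 59 pods per node, which is where the overhead really hurts.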

On CPU, the penalty for CPU-bound workloads is minimal, around 2 to 5 percent. On network workloads with many short connections the penalty climbs to 10 or 15 percent because every packet traverses host, hypervisor, and guest kernel.

My take

Kata Containers has never been in better shape, and it still occupies a niche. The combination of Rust, a mature Kubernetes operator, and TEE support makes it a tool worth studying in three scenarios: multi-tenant clusters, confidential workloads, and environments with strong regulatory requirements. For everything else it remains an opt-in choice rather than the default.

Where it is most interesting now is in platforms for autonomous agents and code execution by language models. Those use cases combine untrusted code with the need to run it quickly and tear it down just as quickly. Kata fits there almost by design, and several sandbox execution providers have spent months building on it.

Conclusion

A reader operating small clusters with no strict isolation requirements can ignore Kata and miss nothing. Someone operating a platform where external customers deploy code, or where there is regulatory separation between teams, should evaluate it seriously. The investment in learning the operational model pays off quickly because the alternative is running separate clusters, which is more expensive and more complex.