LangGraph: State Graphs for More Robust Agents

Updated: 2026-05-03

The enthusiasm around LLM agents has hit an uncomfortable wall: the classic loop pattern (invoke the model, check if it requests a tool, execute it, repeat) works in demos and crumbles the moment the task has three steps and a condition. Agents lose context, get stuck in infinite loops, and can’t be resumed after a failure. When something goes wrong, the operator stares at a flat log wondering why the model decided what it decided. LangGraph[1] tackles that problem by treating the agent as an explicit state graph rather than a conversational black box.

Key takeaways

- LangGraph replaces the ReAct loop with nodes, edges, and explicitly typed state.

- Checkpointing on SQLite, Postgres, or Redis enables long-running agents and failure recovery.

- Per-step streaming lets UIs show real-time progress without waiting for the final result.

- Explicit loops with exit conditions prevent the infinite recursion that kills naive agents.

- Not worth it for single-turn chatbots; worth it for flows with non-trivial conditional logic or run times over a minute.

Why “simple” agents break

The hand-rolled ReAct-style agent is always the same template: a loop calling the LLM with message history, checking if the response contains a tool call, executing it, appending the result, and starting again. It has four problems that scale badly:

- No natural bound: if the model fixates on the same tool, you spin indefinitely until tokens run out.

- No persistence: a network blip, a timeout, or a container restart sends you back to square one.

- No observability: the “step” you’re on is implicit, living only in the length of the message history.

- Impossible to unit-test: all the logic sits inside the same loop body.
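To make the template concrete, here is a minimal sketch of such a loop in plain Python. Everything in it is a hypothetical stand-in: `stubborn_model` fakes an LLM that always requests the same tool, `TOOLS` is a made-up registry, and `max_steps` is a safety cap added only so the demo terminates.

```python
from typing import Callable

# Hypothetical stand-in for an LLM call: it always asks for the same tool,
# which reproduces the "fixation" failure mode described above.
def stubborn_model(history: list) -> dict:
    return {"role": "assistant",
            "tool_call": {"name": "search", "args": {"q": "weather"}}}

# Hypothetical tool registry.
TOOLS: dict[str, Callable[..., str]] = {
    "search": lambda q: f"no results for {q!r}",
}

def naive_agent(model, user_input: str, max_steps: int = 5) -> list:
    history = [{"role": "user", "content": user_input}]
    # max_steps is bolted on for this demo; the bare template is
    # `while True`, with nothing to stop a fixated model.
    for _ in range(max_steps):
        reply = model(history)
        history.append(reply)
        call = reply.get("tool_call")
        if call is None:
            break  # the model produced a final answer
        result = TOOLS[call["name"]](**call["args"])
        history.append({"role": "tool", "content": result})
    return history

history = naive_agent(stubborn_model, "What's the weather?")
# The only record of "which step are we on" is the length of the history.
print(len(history))
```

Flow control, tool execution, and state all live in the same loop body, which is why there is nothing smaller than the whole agent to test or checkpoint.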

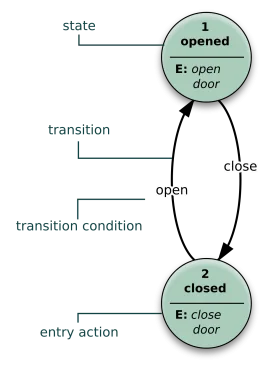

The LangGraph mental model

LangGraph changes the unit of composition. Instead of a loop, you define four things: a typed state dictionary, nodes as pure functions returning partial state updates, edges connecting nodes conditionally or unconditionally, and the graph as the compiled executable object.
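As a sketch of the first two ingredients, the state and node functions might look like the following. The names match the graph-building snippet that follows, but the field names and the toy logic inside each function are assumptions made for illustration; a real agent would call a model or a retriever instead.

```python
from typing import TypedDict

class AgentState(TypedDict, total=False):
    question: str          # user input
    needs_search: bool     # written by classify
    documents: list        # written by search
    answer: str            # written by respond

def classify(state: AgentState) -> dict:
    # Toy heuristic standing in for an LLM classification call.
    return {"needs_search": "latest" in state["question"].lower()}

def search(state: AgentState) -> dict:
    # Placeholder retrieval; returns only the keys it changes.
    return {"documents": [f"stub result for: {state['question']}"]}

def respond(state: AgentState) -> dict:
    docs = state.get("documents", [])
    source = f"{len(docs)} document(s)" if docs else "model knowledge only"
    return {"answer": f"Answer to {state['question']!r} using {source}"}

def route_from_classify(state: AgentState) -> str:
    # Router for the conditional edge: returns the name of the next node.
    return "search" if state["needs_search"] else "respond"

# Each node can be exercised on its own, without a model or a graph.
state: AgentState = {"question": "What is the latest release?"}
state.update(classify(state))
print(route_from_classify(state))
```

Because each node takes a state and returns a partial update, it can be unit-tested in isolation, which the single-loop version does not allow.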

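```python
from langgraph.graph import StateGraph, END

# AgentState, the node functions (classify, search, respond), and the
# router route_from_classify are assumed to be defined beforehand.
graph = StateGraph(AgentState)

# Register each node under the name that edges will refer to.
graph.add_node("classify", classify)
graph.add_node("search", search)
graph.add_node("respond", respond)

# Execution starts at "classify"; the router picks the next node.
graph.set_entry_point("classify")
graph.add_conditional_edges("classify", route_from_classify)

# "search" always feeds "respond", which ends the run.
graph.add_edge("search", "respond")
graph.add_edge("respond", END)

# Compile the definition into the executable graph object.
app = graph.compile()
```

The three features that do the work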

Checkpointing: LangGraph persists graph state at every transition into SQLite, Postgres, or Redis. This enables long-running agents measured in hours or days, real cross-session memory, recovery from process failures, and the human-in-the-loop pattern where the graph pauses on an approval node while an operator decides.
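The idea behind checkpointing can be sketched with the standard library alone. The code below is not LangGraph's checkpointer API; it is a toy runner, with a hypothetical table layout and node names, that saves the state and the next node after every transition and resumes from the latest row after a simulated crash.

```python
import json
import sqlite3
from typing import Optional

conn = sqlite3.connect(":memory:")  # a file path would survive a restart
conn.execute("CREATE TABLE checkpoints "
             "(thread_id TEXT, step INTEGER, next_node TEXT, state TEXT)")

executed = []  # records which nodes actually ran, across both attempts

def fetch(state: dict) -> dict:
    executed.append("fetch")
    return {"data": [1, 2, 3]}

def summarise(state: dict) -> dict:
    executed.append("summarise")
    return {"total": sum(state["data"])}

NODES = {"fetch": fetch, "summarise": summarise}
ORDER = ["fetch", "summarise"]  # hypothetical linear flow

def load_latest(thread_id: str):
    row = conn.execute(
        "SELECT step, next_node, state FROM checkpoints "
        "WHERE thread_id = ? ORDER BY step DESC LIMIT 1", (thread_id,)
    ).fetchone()
    return None if row is None else (row[0], row[1], json.loads(row[2]))

def run(thread_id: str, crash_before: Optional[str] = None) -> dict:
    # Resume from the last checkpoint if one exists, else start fresh.
    step, next_node, state = load_latest(thread_id) or (0, ORDER[0], {})
    while next_node != "END":
        if next_node == crash_before:
            raise RuntimeError("simulated crash")
        state = {**state, **NODES[next_node](state)}
        idx = ORDER.index(next_node)
        next_node = ORDER[idx + 1] if idx + 1 < len(ORDER) else "END"
        step += 1
        # Persist after every transition.
        conn.execute("INSERT INTO checkpoints VALUES (?, ?, ?, ?)",
                     (thread_id, step, next_node, json.dumps(state)))
        conn.commit()
    return state

try:
    run("job-1", crash_before="summarise")
except RuntimeError:
    pass
result = run("job-1")  # resumes at "summarise"; "fetch" is not re-run
print(result, executed)
```

The same mechanism covers human-in-the-loop pauses: stopping before an approval node is just a resumable interruption that someone ends deliberately.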

Per-step streaming: the stream method emits one event per executed node with the partial state update. UIs can show the user what the agent is doing in real time.
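A generator is enough to illustrate the shape of per-step streaming. This sketch is a linear toy runner rather than LangGraph's implementation, and both the pipeline and the `{node_name: update}` event shape are choices made for the example.

```python
from typing import Callable, Iterator

def stream(nodes: list, state: dict) -> Iterator[dict]:
    """Run nodes in order, yielding one event per executed node."""
    for name, fn in nodes:
        update = fn(state)             # partial update from this node
        state = {**state, **update}    # merge before the next node runs
        yield {name: update}

# Hypothetical three-step pipeline with canned outputs.
pipeline = [
    ("classify", lambda s: {"topic": "billing"}),
    ("search", lambda s: {"documents": ["invoice-faq"]}),
    ("respond", lambda s: {
        "answer": f"Found {len(s['documents'])} doc about {s['topic']}"}),
]

events = []
for event in stream(pipeline, {"question": "Why was I charged twice?"}):
    events.append(event)
    print(event)  # a UI would render a progress line here
```

Since each event arrives as soon as its node finishes, the consumer never has to wait for the final answer to show that work is happening.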

Explicit loops with exit conditions: a node can point back to itself through a conditional edge distinguishing between “continue” and “terminate”. It’s the same ReAct pattern, but with the stopping condition declared in one place rather than buried inside the loop body.
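The following sketch shows the stopping condition pulled out into a single router function. It is plain Python rather than LangGraph code, and the `draft` node, the scoring rule, and the `MAX_ATTEMPTS` budget are all hypothetical.

```python
MAX_ATTEMPTS = 3  # hypothetical retry budget

def draft(state: dict) -> dict:
    attempts = state.get("attempts", 0) + 1
    # Toy quality score standing in for a model or validator call.
    return {"attempts": attempts, "score": attempts * 0.2}

def should_continue(state: dict) -> str:
    # The one place where the loop's exit rules are declared.
    if state["score"] >= 0.9:
        return "terminate"   # good enough
    if state["attempts"] >= MAX_ATTEMPTS:
        return "terminate"   # budget exhausted
    return "continue"

def run_loop(state: dict) -> dict:
    # Stand-in for a node whose conditional edge points back to itself.
    while True:
        state = {**state, **draft(state)}
        if should_continue(state) == "terminate":
            return state

final = run_loop({})
print(final["attempts"])
```

In LangGraph, a router like `should_continue` could be attached with `add_conditional_edges`, mapping `"continue"` back to the node and `"terminate"` to `END`, so the budget is visible in the graph definition instead of hidden in a loop body.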

When it pays off and when it doesn’t

LangGraph pays its cost when the flow has non-trivial conditional logic, agent duration exceeds a minute, human intervention is needed at intermediate steps, or production observability is a hard requirement. It does not pay for a single-turn chatbot, a one-shot tool call, or exploratory prototypes where building the graph is more ceremony than product.

Conclusion

LangGraph is one of those libraries that feel like a detour until you face your second serious production agent, and then it’s hard to go back. The underlying argument is organisational: the hand-rolled ReAct loop forces flow logic, error handling, persistence, and observability to coexist in the same code body. A state graph separates those concerns almost by design, and that value shows in every week of maintenance.