Mature LLM-as-judge: when to trust and when not

Updated: 2026-04-30

LLM-as-judge became a standard technique by late 2024 and remains, in 2026, the only scalable way to evaluate qualitative quality in LLM systems. The question that distinguishes mature teams isn’t whether to use it, but when to trust the number it produces.

Key takeaways

- A dimensional rubric (six dimensions, each scored 1-5 with a justification) produces more stable results than a single global score.

- Judge-human correlation must exceed 0.7 on a 30-case sample before the judge can be considered usable.

- The judge systematically fails on subjective criteria without a rubric, on comparison against exact ground truth, and on self-evaluation.

- Run a full calibration quarterly or whenever the judge model changes; run a quick 5-case check on any anomaly.

- If the criterion can be checked with deterministic logic, don’t use an LLM judge.

What an LLM judge does well

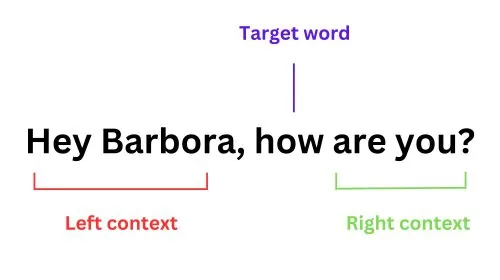

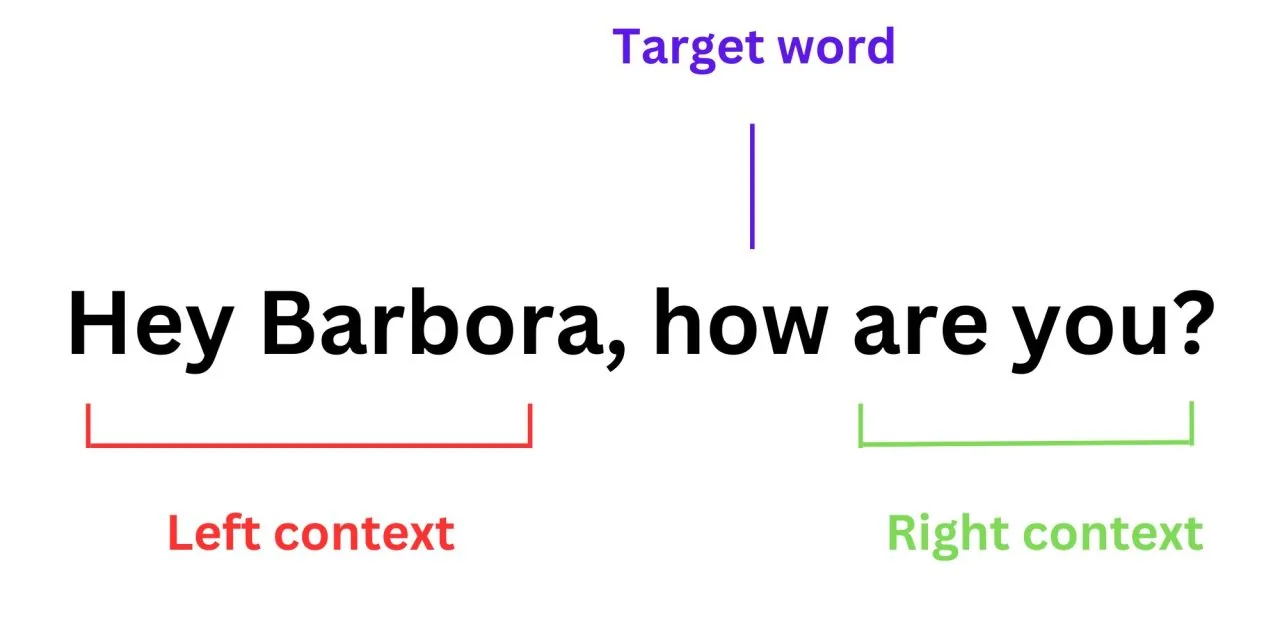

Dimensional rubric evaluation, on dimensions such as coherence, relevance, format, absence of fabricated information, and style adherence. Asking the judge for a 1-5 score on each dimension, each with a one-sentence justification, produces results that correlate reasonably well with human ratings when the judge is well calibrated.

The key is decomposition:

- Asking for a “global score” produces high inconsistency between runs.

- Asking for six dimensional scores and aggregating them afterwards produces stable results.
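A minimal Python sketch of the decomposed approach, assuming the judge is instructed to reply in JSON. The dimension names (the ones listed above), the prompt wording, and the helpers `build_judge_prompt`, `parse_scores` and `aggregate` are hypothetical; add or rename dimensions to match your own rubric. The actual call to the judge model is left out because it depends on your provider.

```python
import json

# Hypothetical dimension set, taken from the examples in the text; adjust to your rubric.
DIMENSIONS = [
    "coherence",
    "relevance",
    "format",
    "no_fabricated_info",
    "style_adherence",
]


def build_judge_prompt(question: str, answer: str) -> str:
    """Ask for one 1-5 score plus a one-sentence justification per dimension."""
    dims = "\n".join(f"- {d}" for d in DIMENSIONS)
    return (
        "Evaluate the answer on each dimension below. For each one, give an "
        "integer score from 1 to 5 and a one-sentence justification.\n"
        f"{dims}\n"
        'Reply with JSON only: {"<dimension>": {"score": <1-5>, '
        '"justification": "<one sentence>"}, ...}\n\n'
        f"Question:\n{question}\n\nAnswer:\n{answer}"
    )


def parse_scores(raw: str) -> dict[str, int]:
    """Validate the judge's JSON reply and return {dimension: score}."""
    data = json.loads(raw)
    scores = {}
    for d in DIMENSIONS:
        entry = data[d]  # KeyError if the judge skipped a dimension
        score = entry["score"]
        if type(score) is not int or not 1 <= score <= 5:
            raise ValueError(f"{d}: score {score!r} is not an integer in 1-5")
        if not str(entry.get("justification", "")).strip():
            raise ValueError(f"{d}: missing justification")
        scores[d] = score
    return scores


def aggregate(scores: dict[str, int]) -> float:
    """Aggregate after the fact; an unweighted mean is one simple choice."""
    return sum(scores.values()) / len(scores)
```

Validating each dimension separately also makes malformed judge replies easy to detect and retry, instead of silently folding them into one number.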

What an LLM judge does poorly

Three patterns where it consistently fails:

- Subjective criteria without rubric: “is this answer useful?” without defining what counts as useful produces noise, not signal.

- Comparison against exact ground truth: the judge accepts correct paraphrases but also incorrect ones that sound similar.

- Self-evaluation, where the judge is the same model being evaluated: it systematically overrates outputs from its own model family.
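These three failure patterns can be turned into a pre-flight check that runs before any judge call. This is a sketch under assumptions: the `JudgeSetup` fields, the model-family strings, and the rule that a rubric must describe every score from 1 to 5 are hypothetical choices, not part of any library.

```python
from dataclasses import dataclass


@dataclass
class JudgeSetup:
    judge_family: str          # hypothetical label for the judge's model family
    candidate_family: str      # model family whose outputs are being judged
    criterion: str             # e.g. "usefulness"
    rubric: dict[int, str]     # score -> what that score means for this criterion
    exact_ground_truth: bool   # True if the check is "does it match the reference?"


def preflight(setup: JudgeSetup) -> list[str]:
    """Return one problem message per failure pattern that applies."""
    problems = []
    # Subjective criterion without a rubric: require a description for every score.
    if set(setup.rubric) != {1, 2, 3, 4, 5}:
        problems.append(f"{setup.criterion}: no full 1-5 rubric, judge output will be noise")
    # Exact ground truth: the judge may accept wrong but similar-sounding answers.
    if setup.exact_ground_truth:
        problems.append(f"{setup.criterion}: exact ground-truth match, compare deterministically")
    # Self-evaluation: same family tends to be overrated.
    if setup.judge_family.lower() == setup.candidate_family.lower():
        problems.append("judge and evaluated model share a family, expect self-preference bias")
    return problems
```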

Human calibration

The verification you can’t work without is calibrating the judge against humans on a small subset:

- Humans score 30 cases.

- The judge scores the same 30.

- The correlation between the two sets of scores should be above 0.7 for the judge to be considered usable.

- Below 0.7: adjust the judge’s prompt or change model.
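A minimal sketch of the calibration step in Python. The text does not say which correlation to use; Pearson's r is assumed here, and Spearman's rank correlation is a reasonable alternative for ordinal 1-5 scores. The function names are illustrative; the 30-case minimum and the 0.7 threshold come from the steps above.

```python
import math
from typing import Sequence

MIN_CASES = 30   # human-scored sample size from the text
THRESHOLD = 0.7  # minimum judge-human correlation from the text


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equal-length score lists."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length lists with at least 2 items")
    mx = sum(xs) / len(xs)
    my = sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    if vx == 0 or vy == 0:
        raise ValueError("correlation undefined: one list is constant")
    return cov / math.sqrt(vx * vy)


def calibrate(human: Sequence[float], judge: Sequence[float]) -> tuple[float, bool]:
    """Return (correlation, usable) for one human-vs-judge calibration run."""
    if len(human) < MIN_CASES:
        raise ValueError(f"need at least {MIN_CASES} human-scored cases")
    r = pearson(human, judge)
    return r, r > THRESHOLD
```

Typical use: `r, usable = calibrate(human_scores, judge_scores)`; if `usable` is false, adjust the judge prompt or switch models and rerun on the same cases.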

Recommended frequency:

- Full calibration quarterly, or when changing the judge model.

- Quick check (~5 cases) whenever anomalous behaviour appears in metrics.
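The schedule can be encoded as a small decision function, for example in a nightly job. This sketch assumes "quarterly" means 91 days and that the judge model is identified by a version string; `calibration_needed` is a hypothetical helper.

```python
from datetime import date, timedelta

FULL_INTERVAL = timedelta(days=91)  # "quarterly", approximated as 91 days (assumption)


def calibration_needed(last_full: date, today: date,
                       judge_model_at_last_full: str, judge_model_now: str,
                       anomaly_flagged: bool) -> str:
    """Return 'full', 'quick' or 'none' following the recommended frequency."""
    model_changed = judge_model_now != judge_model_at_last_full
    if model_changed or today - last_full >= FULL_INTERVAL:
        return "full"   # re-run the 30-case human comparison
    if anomaly_flagged:
        return "quick"  # spot-check about 5 cases against human judgement
    return "none"
```

A full calibration takes priority over a quick check, since it covers the same ground with a larger sample.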

When NOT to use an LLM judge

When verification can be automated with deterministic logic:

- Valid schema.

- Resolvable URL.

- Correct type.

- Value within expected range.
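A sketch of those four checks using only the Python standard library. The output contract (`SCHEMA`), the score range, and the HEAD-request approach to "resolvable" are assumptions for illustration; some servers reject HEAD, so a fallback GET may be needed in practice.

```python
import urllib.request

# Hypothetical output contract: field -> exact expected type.
SCHEMA = {"title": str, "url": str, "score": int}
SCORE_RANGE = (1, 5)  # hypothetical valid range for "score"


def url_resolves(url: str, timeout: float = 5.0) -> bool:
    """True if a HEAD request returns a non-error status (needs network)."""
    try:
        req = urllib.request.Request(url, method="HEAD")  # ValueError on malformed URL
        with urllib.request.urlopen(req, timeout=timeout) as resp:
            return resp.status < 400
    except (ValueError, OSError):  # URLError, HTTPError and timeouts are OSError subclasses
        return False


def deterministic_checks(obj: dict, check_url: bool = True) -> list[str]:
    """Schema, type, range and URL checks: no LLM, no calibration."""
    errors = []
    for field, expected in SCHEMA.items():
        if field not in obj:
            errors.append(f"missing field: {field}")
        elif type(obj[field]) is not expected:  # strict, so True is not accepted as an int
            errors.append(f"{field}: expected {expected.__name__}")
    if not errors:
        lo, hi = SCORE_RANGE
        if not lo <= obj["score"] <= hi:
            errors.append(f"score {obj['score']} outside {lo}-{hi}")
        if check_url and not url_resolves(obj["url"]):
            errors.append(f"url does not resolve: {obj['url']}")
    return errors
```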

Use that logic instead: it’s cheaper, more reliable, and needs no calibration. Reserve the LLM judge for criteria that are genuinely qualitative.

Conclusion

LLM-as-judge in 2026 is a mature technique with known limits. Used with a dimensional rubric, periodic calibration, and as a complement (not a substitute) to deterministic metrics, it produces useful signal for CI regression testing and drift detection. Used with blind trust, it becomes a mirror that reflects the judge’s biases without anyone noticing.