Agent-to-agent protocols v1: what we have in hand

Updated: 2026-05-03

In December 2025 I wrote about the Agent2Agent (A2A) protocol, which Google had donated to the Linux Foundation mid-year and which looked set to become MCP’s natural counterpart for communication between agents. Six weeks later, with version 1 formally published, several reference implementations running, and the first real deployments in enterprise products, it’s time to update that assessment.

Key takeaways

- A2A v1 stabilizes the agent-card format, the stateful-task model, and the authentication scheme based on OAuth 2.1 + JWT.

- MCP and A2A are complementary, not rivals: MCP covers agent-tool, A2A covers agent-agent.

- Official Python and TypeScript SDKs are in production; Go is the most mature community implementation.

- V1 leaves out temporary identities, real-time multi-agent conversations, and long-term relationships.

- Adopting now makes sense if you already have multiple agents with custom integrations between them.

What version 1 formalizes

Version 1 of the A2A protocol, formally published by the Linux Foundation in January 2026, consolidates several design decisions that went through rapid iteration during 2025. The technical core is:

- HTTP-based model with extensions for event streaming.

- Strict JSON schema for agent cards describing capabilities.

- Standardized verb set to initiate tasks, receive partial progress, deliver results, and cancel conversations.
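To make the verb set concrete, the sketch below builds the request envelopes a client might send. It assumes JSON-RPC 2.0 framing over HTTP, which earlier A2A drafts used; the method names such as `tasks/start` and the `taskId` parameter are hypothetical placeholders, so check the published v1 specification for the real ones.

```python
import itertools
import json
from enum import Enum
from typing import Any


class Verb(str, Enum):
    # Placeholder method names for the four operations listed above;
    # the names in the published v1 spec may differ.
    START = "tasks/start"          # initiate a task
    SUBSCRIBE = "tasks/subscribe"  # stream partial progress events
    GET = "tasks/get"              # fetch current state and final result
    CANCEL = "tasks/cancel"        # cancel a task that is still running


# Monotonic request ids so responses can be matched to requests.
_request_ids = itertools.count(1)


def make_request(verb: Verb, params: dict[str, Any]) -> str:
    """Serialize one verb as a JSON-RPC 2.0 request envelope."""
    envelope = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": verb.value,
        "params": params,
    }
    return json.dumps(envelope)
```

For example, `make_request(Verb.CANCEL, {"taskId": "task-123"})` yields a request whose `method` is `"tasks/cancel"`. The HTTP transport and the streaming extension are left out on purpose.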

The most relevant piece of v1 is the stabilization of the agent-card format. A card describes what the agent can do, what kind of input it expects, what its output format is, and what metadata a client needs to decide whether this agent is a candidate for a task. Without a standard card there’s no real interoperability.
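A minimal sketch of a card and of the client-side decision the paragraph describes. The field names (`input_modes`, `output_modes`, `skills`, `tags`) are loosely modeled on public A2A drafts but are assumptions here, not the normative v1 schema; the example agent and its URL are hypothetical.

```python
import json
from dataclasses import dataclass, field


@dataclass
class Skill:
    id: str
    description: str
    tags: list[str] = field(default_factory=list)


@dataclass
class AgentCard:
    # Illustrative fields only; the v1 JSON schema defines the real ones.
    name: str
    url: str
    version: str
    input_modes: list[str]   # MIME types the agent accepts
    output_modes: list[str]  # MIME types the agent can return
    skills: list[Skill]

    @classmethod
    def from_json(cls, raw: str) -> "AgentCard":
        data = json.loads(raw)
        skills = [Skill(**s) for s in data.pop("skills")]
        return cls(skills=skills, **data)


def is_candidate(card: AgentCard, tag: str, input_mime: str, output_mime: str) -> bool:
    """Decide from card metadata alone whether the agent could take a task."""
    has_skill = any(tag in skill.tags for skill in card.skills)
    return has_skill and input_mime in card.input_modes and output_mime in card.output_modes


# A hypothetical card, as a client might fetch it from the remote agent.
EXAMPLE_CARD = """{
  "name": "invoice-reviewer",
  "url": "https://agents.example.com/invoices",
  "version": "1.0.0",
  "input_modes": ["application/pdf", "text/plain"],
  "output_modes": ["application/json"],
  "skills": [{"id": "review", "description": "Flag anomalous invoices",
              "tags": ["finance", "audit"]}]
}"""
```

`is_candidate(AgentCard.from_json(EXAMPLE_CARD), "finance", "application/pdf", "application/json")` returns `True`, while asking for `text/html` output returns `False`: the client can reject the agent before opening any conversation.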

The second stabilized piece is the stateful-task model. When an agent asks another to do something, the task has an identifier, state, accumulated event list, and optional final result. This model lets a conversation between agents be durable, resumable after disconnections, observable for audit, and cancelable if circumstances change.
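The task model maps naturally onto a small state machine with an append-only event log. The sketch below uses a hypothetical set of states and transitions (`submitted`, `working`, `completed`, `failed`, `canceled`) purely for illustration; the v1 spec defines its own states, and a real server would persist the log rather than keep it in memory.

```python
import uuid
from dataclasses import dataclass, field
from enum import Enum
from typing import Any


class State(Enum):
    SUBMITTED = "submitted"
    WORKING = "working"
    COMPLETED = "completed"
    FAILED = "failed"
    CANCELED = "canceled"


# Allowed transitions (an assumption for illustration, not the v1 state chart).
# Terminal states have no outgoing edges, so they are simply absent here.
TRANSITIONS = {
    State.SUBMITTED: {State.WORKING, State.FAILED, State.CANCELED},
    State.WORKING: {State.COMPLETED, State.FAILED, State.CANCELED},
}


@dataclass
class Task:
    id: str = field(default_factory=lambda: str(uuid.uuid4()))
    state: State = State.SUBMITTED
    events: list[dict[str, Any]] = field(default_factory=list)
    result: Any = None

    def _log(self, **payload: Any) -> None:
        # Append-only event log: this is what makes the task auditable
        # and lets a reconnecting client replay what it missed.
        self.events.append({"seq": len(self.events), "state": self.state.value, **payload})

    def _move(self, new: State, **payload: Any) -> None:
        if new not in TRANSITIONS.get(self.state, set()):
            raise ValueError(f"illegal transition {self.state.value} -> {new.value}")
        self.state = new
        self._log(**payload)

    def start(self) -> None:
        self._move(State.WORKING)

    def progress(self, note: str) -> None:
        # Partial progress is recorded without changing state.
        if self.state is not State.WORKING:
            raise ValueError("progress is only valid while working")
        self._log(note=note)

    def complete(self, result: Any) -> None:
        self._move(State.COMPLETED)
        self.result = result

    def cancel(self, reason: str) -> None:
        self._move(State.CANCELED, reason=reason)

    def events_since(self, seq: int) -> list[dict[str, Any]]:
        """Resume after a disconnection by fetching only unseen events."""
        return [event for event in self.events if event["seq"] >= seq]
```

A client that last saw the event with `seq=0` calls `events_since(1)` after reconnecting. Once the task reaches a terminal state, `cancel()` raises instead of silently succeeding.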

The third piece is the authentication and authorization scheme, which builds on OAuth 2.1 and JWT-compatible asymmetric signatures so you can reuse existing identity infrastructure.
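The sketch below shows only the receiving agent’s claim checks on a bearer token, using the standard JWT registered claims (`iss`, `aud`, `exp`) and an OAuth-style space-delimited `scope`. It deliberately does not verify the asymmetric signature: that step is assumed to happen first, with a JOSE library and the issuer’s public keys, and a token must never be accepted on these checks alone. The scope name `a2a:tasks` is hypothetical.

```python
import base64
import json
import time
from typing import Any, Optional


def _b64url_decode(segment: str) -> bytes:
    # JWT segments are unpadded base64url; restore padding before decoding.
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def check_claims(token: str, issuer: str, audience: str, required_scope: str,
                 now: Optional[float] = None) -> dict[str, Any]:
    """Check registered claims of a JWT whose signature was ALREADY verified.

    This function does not verify the signature; do that first with a JOSE
    library and the issuer's published public keys.
    """
    _header_b64, payload_b64, _signature_b64 = token.split(".")
    claims = json.loads(_b64url_decode(payload_b64))
    now = time.time() if now is None else now

    if claims.get("iss") != issuer:
        raise PermissionError("unexpected issuer")
    aud = claims.get("aud")
    audiences = aud if isinstance(aud, list) else [aud]
    if audience not in audiences:
        raise PermissionError("token was not issued for this agent")
    if claims.get("exp", 0) <= now:
        raise PermissionError("token expired")
    # OAuth scopes are conventionally a space-delimited string.
    if required_scope not in claims.get("scope", "").split():
        raise PermissionError(f"missing scope {required_scope!r}")
    return claims
```

Keeping the audience check strict matters in agent-to-agent setups: a token minted for one agent should not be replayable against another.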

Implementations available

Six months after the Linux Foundation donation, reference implementations have matured enough for real use:

- Official Python SDK: covers the full v1 spec and is in production in several Alphabet internal systems.

- TypeScript SDK: functional parity with Python but fewer production hours.

- C# (Microsoft): integrated with Copilot and Azure AI SDKs, works well in that environment but isn’t the standard path outside the Microsoft stack.

- Go: the most advanced open-community implementation, used within Anthropic’s ecosystem for Claude-to-third-party-agent interoperability.

- Rust and Java: in validation phase, cover basic cases but not yet recommended for production.

The most visible deployments are Google Agentspace, Salesforce Agentforce, and the A2A connectors in n8n and Zapier. A2A isn’t ubiquitous yet, but it’s out of demo territory.

How it fits with MCP

MCP and A2A are complementary, and that clarity is one of the best pieces of news from v1:

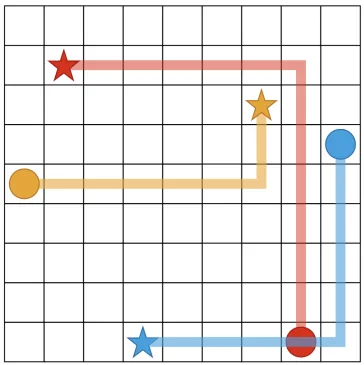

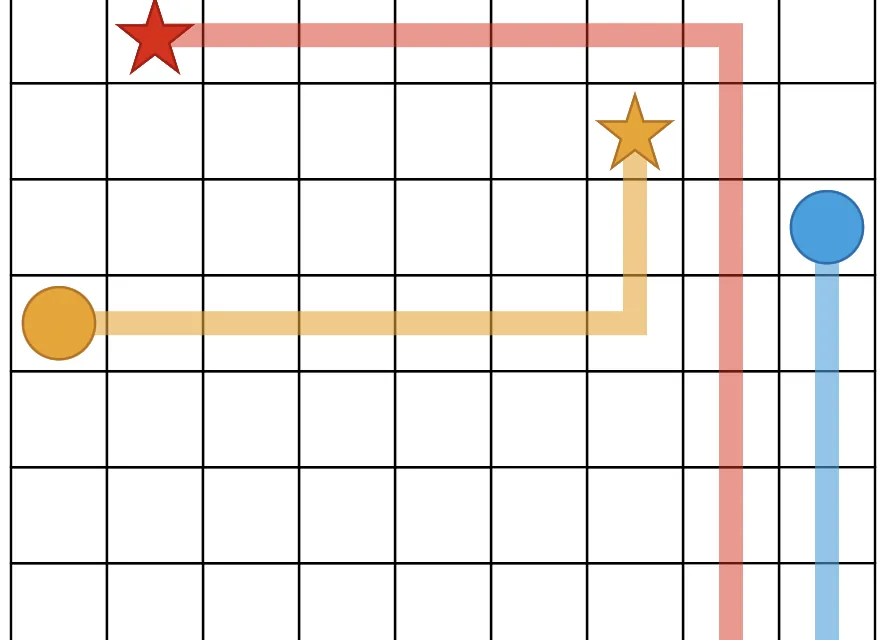

- MCP solves the agent-tool relationship: a client agent talks to servers exposing static capabilities. The communication is asymmetric: the server has no judgment of its own.

- A2A solves the agent-agent relationship: two systems with their own intelligence collaborate on a task with negotiation and shared state.

In practice, a typical system combines both. A main agent with access to tools via MCP discovers it needs to consult another agent with domain knowledge. It starts an A2A conversation; that agent may in turn use its own MCP tools internally. Mixing up the two cases when designing an integration usually produces ugly solutions.
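A minimal sketch of that division of labor. `ToolClient` and `PeerAgentClient` are hypothetical interfaces standing in for an MCP client and an A2A client; they are not the APIs of either official SDK, and the `fetch_document` tool and the legal-review agent are invented for the example.

```python
import asyncio
from typing import Any, Protocol


class ToolClient(Protocol):
    """Stand-in for an MCP client session: static capabilities, no judgment."""
    async def call_tool(self, name: str, args: dict[str, Any]) -> str: ...


class PeerAgentClient(Protocol):
    """Stand-in for an A2A client: hands a whole task to another agent."""
    async def send_task(self, request: str) -> str: ...


class MainAgent:
    def __init__(self, tools: ToolClient, legal_agent: PeerAgentClient) -> None:
        self.tools = tools
        self.legal_agent = legal_agent

    async def assess_contract(self, contract_id: str) -> str:
        # Agent-tool (MCP side): fetch data from a server with no judgment of its own.
        text = await self.tools.call_tool("fetch_document", {"id": contract_id})
        # Agent-agent (A2A side): ask a domain expert to reason about it. That agent
        # may use its own MCP tools internally; the caller never sees them.
        return await self.legal_agent.send_task(f"Assess liability risk:\n{text}")


# In-memory fakes so the sketch runs without any network or SDK.
class FakeTools:
    async def call_tool(self, name: str, args: dict[str, Any]) -> str:
        return f"<contract {args['id']}>"


class FakeLegalAgent:
    def __init__(self) -> None:
        self.requests: list[str] = []

    async def send_task(self, request: str) -> str:
        self.requests.append(request)
        return "low risk" if "<contract" in request else "cannot assess"
```

Running `asyncio.run(MainAgent(FakeTools(), FakeLegalAgent()).assess_contract("c-42"))` returns `"low risk"`. Keeping the two clients behind separate interfaces makes the tool/agent boundary explicit in the code, which is where conflating them tends to go wrong.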

Where v1 doesn’t yet reach

V1 leaves out several areas v2 will have to address:

- Temporary identities: the model assumes each agent has a stable identity. For agents appearing and disappearing dynamically, v1 works but requires additional engineering.

- Real-time multi-agent communication: v1 defines point-to-point conversations well; coordinating three or more agents remains the orchestrator’s responsibility.

- Long-term sustained relationships: an A2A conversation in v1 is naturally short. For month-long relationships with shared memory and accumulated context, you build that layer elsewhere.
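Since v1 stops at point-to-point conversations, the simplest multi-agent pattern is an orchestrator that treats each peer as its own task. The sketch assumes each peer call stands in for one A2A task; the `Peer` type and the three agents are hypothetical, and anything beyond fan-out and timeouts (ordering, shared state, consensus) is still for the orchestrator to build.

```python
import asyncio
from typing import Awaitable, Callable

# A peer is modeled as an async callable; in a real system each call
# would be one point-to-point A2A task.
Peer = Callable[[str], Awaitable[str]]


async def fan_out(peers: dict[str, Peer], request: str, timeout: float) -> dict[str, str]:
    """Coordinate N agents as N independent point-to-point tasks."""

    async def one(name: str, peer: Peer) -> tuple[str, str]:
        try:
            return name, await asyncio.wait_for(peer(request), timeout)
        except asyncio.TimeoutError:
            # wait_for cancels the pending call; with A2A, this is where the
            # orchestrator would also send an explicit cancel for the task.
            return name, "timed out"

    pairs = await asyncio.gather(*(one(name, peer) for name, peer in peers.items()))
    return dict(pairs)


# Hypothetical peers for illustration.
async def pricing(request: str) -> str:
    return f"price ok for {request}"


async def compliance(request: str) -> str:
    return f"needs review for {request}"


async def slow_inventory(request: str) -> str:
    await asyncio.sleep(1.0)
    return f"in stock for {request}"
```

With a 0.1-second timeout, `fan_out` returns the `pricing` and `compliance` answers and marks `inventory` as timed out, so one slow agent cannot stall the whole coordination.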

Adopt now or wait

V1 is ready for production in bounded cases, and there is little to gain by waiting. Implementations cover the main languages, interoperability between SDKs has been tested, and Linux Foundation governance guarantees orderly evolution.

The case where I recommend adopting without hesitation: when you’re already building a system with multiple agents and currently have custom integrations between them. Replacing those with A2A reduces debt, standardizes observability, and opens the door to collaboration with external agents. It’s a net-positive refactor.

The case where waiting still makes sense: when you have a single agent talking to tools (use MCP, not A2A) or when you’re in a high-regulation environment where every new protocol requires formal approval.

Conclusion

A2A v1 is the first open layer for agent communication with real critical mass behind it and healthy governance in the Linux Foundation. Combined with MCP, it forms the basic interoperability stack the industry needed: tools on one side, agents on the other, with open standards on both planes. The useful comparison is with HTTP in the nineties: when it consolidated, everything else organized around it. A2A v1 is at that point now.

Last reviewed: 2026-04-01.