Modern SCADA in Containers: Advantages and Risks

Updated: 2026-05-03

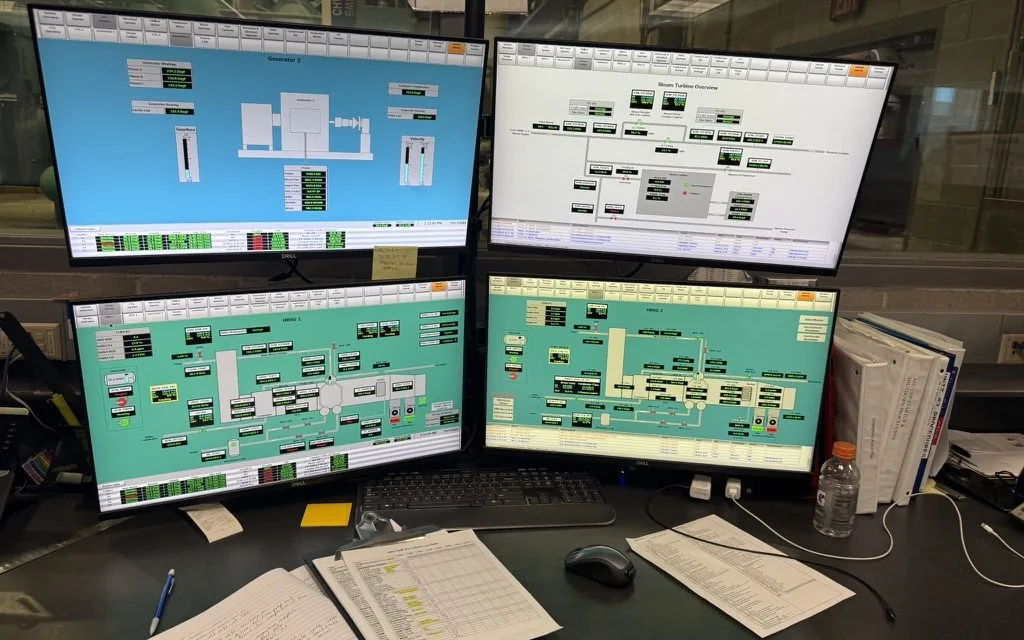

SCADA (Supervisory Control and Data Acquisition) systems were born as monolithic software on Windows Server with proprietary databases. Today the trend is to containerise those workloads to gain declarative deployment, horizontal scaling, and standard observability. The question isn’t whether it makes sense, but which layers it makes sense for and which must stay outside the container.

Key takeaways

- PLCs remain the foundation of deterministic control; containers modernise the upper layers.

- Products like Ignition 8 and PTC ThingWorx officially support containerisation.

- The biggest risk is not technical but cultural: applying DevOps patterns without respecting OT context produces incidents.

- Air-gap, strict policy enforcement, and OT-specific monitoring are requirements, not options.

- NIS2 demands compliance for critical infrastructure regardless of whether containers are used.

Why containerise SCADA

The concrete advantages sit in the upper layers of the architecture:

- Reproducible deployment: the same setup in dev, staging, and production eliminates the “works on my machine” problem endemic to historical OT environments.

- Incremental updates: a rolling update replaces the traditional “power off and migrate”, which in continuous plants can cost millions.

- HMI frontend scaling: multiple instances with load balancing without duplicating licences or hardware.

- Standard observability: Prometheus, Grafana, and Loki work on Kubernetes exactly as on any IT workload.

Products that support containerisation

Not all SCADA systems are equal when it comes to containers:

- Inductive Automation Ignition[1]: Ignition 8+ has official Docker images. The module ecosystem works in a container.

- PTC ThingWorx[2]: supports Kubernetes for enterprise deployments, with maintained Helm charts.

- FUXA[3] (open-source): natively containerised, ideal for small installations.

- AVEVA System Platform: emerging options with partial support.

The fundamental problem: OT is not IT

SCADA operates physical machinery. A failure doesn’t produce a 500 in a log — it produces a plant shutdown, a safety risk, or equipment damage. This difference invalidates most DevOps assumptions:

- A `kubectl rollout restart` is not acceptable if it affects a reactor’s control loop.

- The “always available with retries” model collides with PLCs’ temporal determinism.

- Automatic dependency upgrades can break functional safety invariants.

Consequence: the decision of what to containerise must start from criticality, not from technical fashion.
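As an illustration of starting from criticality, the decision can be written down as an explicit rule rather than left to case-by-case judgement. The following is a minimal sketch, not a standard: the `Workload` fields, the `decide_placement` function, and the thresholds are all assumptions made for this example, and a real assessment would come from the plant's own risk analysis.

```python
from dataclasses import dataclass
from enum import Enum


class Placement(Enum):
    CONTAINER = "container"
    BARE_METAL = "bare-metal"


@dataclass(frozen=True)
class Workload:
    # All fields are hypothetical attributes chosen for this sketch.
    name: str
    purdue_level: int      # 0 field, 1 control, 2 HMI, 3 operations, 4 enterprise
    in_control_loop: bool  # True if a restart can disturb a control loop
    safety_related: bool   # True if it is part of a functional safety function


def decide_placement(w: Workload) -> Placement:
    """Start from criticality: deterministic or safety-related work stays out."""
    if w.safety_related or w.in_control_loop or w.purdue_level <= 1:
        return Placement.BARE_METAL
    return Placement.CONTAINER


workloads = [
    Workload("reactor-plc-logic", 1, True, True),
    Workload("web-hmi", 2, False, False),
    Workload("historian", 3, False, False),
]
placements = {w.name: decide_placement(w).value for w in workloads}
print(placements)
```

Encoding the rule this way makes it reviewable: anyone proposing to containerise a new workload has to state its level and whether it touches a control loop before the question of tooling even comes up.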

Architectures that work

Edge layer with containers + real PLC

The most pragmatic architecture separates control from supervision:

- PLCs (Siemens, Rockwell, Omron) control hardware directly with hard determinism.

- A containerised gateway aggregates data via OPC UA and exposes it to the historian.

- The HMI runs in a container with web access: easy updates, no per-seat licences.

- The containerised historian accumulates historical data without depending on dedicated servers.
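One property the gateway in this architecture usually needs is store-and-forward: if the historian container restarts, samples read from the PLCs should be buffered rather than lost. The sketch below shows that idea with the standard library only. The `readers` callables stand in for OPC UA node reads and `send` stands in for a historian write; both are hypothetical placeholders, not a real OPC UA client API.

```python
import time
from collections import deque
from dataclasses import dataclass
from typing import Callable, Deque, Dict, List


@dataclass(frozen=True)
class Sample:
    tag: str
    value: float
    timestamp: float


class Gateway:
    """Polls tag readers and forwards samples, buffering while the historian is down."""

    def __init__(self, readers: Dict[str, Callable[[], float]],
                 send: Callable[[List[Sample]], None],
                 max_buffer: int = 10_000) -> None:
        self.readers = readers  # hypothetical stand-ins for OPC UA node reads
        self.send = send        # hypothetical historian write function
        # Bounded buffer: when full, the oldest samples are dropped first.
        self.buffer: Deque[Sample] = deque(maxlen=max_buffer)

    def poll_once(self) -> None:
        now = time.time()
        for tag, read in self.readers.items():
            self.buffer.append(Sample(tag, read(), now))

    def flush(self) -> bool:
        """Try to deliver everything buffered; keep the data if delivery fails."""
        if not self.buffer:
            return True
        batch = list(self.buffer)
        try:
            self.send(batch)
        except ConnectionError:
            return False
        self.buffer.clear()
        return True


# Simulated historian that is unreachable at first (assumption for the demo).
received: List[Sample] = []
historian = {"up": False}


def fake_send(batch: List[Sample]) -> None:
    if not historian["up"]:
        raise ConnectionError("historian unreachable")
    received.extend(batch)


gw = Gateway({"TT-101": lambda: 72.5, "PT-204": lambda: 3.1}, fake_send)
gw.poll_once()
first_flush = gw.flush()   # historian down: samples stay buffered
historian["up"] = True
gw.poll_once()
second_flush = gw.flush()  # historian back: buffered and new samples delivered
```

The bounded `deque` is a deliberate choice: on an edge device, dropping the oldest samples during a long outage is usually preferable to exhausting memory and taking the gateway down with it.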

Dedicated OT Kubernetes cluster

For larger installations:

- On-premise Kubernetes dedicated to OT workloads, physically separated from corporate IT Kubernetes.

- GitOps for HMI and historian deploys: every change traceable and reversible.

- Prometheus in the same cluster for application and operational metrics.

Hybrid transition

The safest sequence for modernising without stopping the plant:

- New HMIs in containers first.

- Historians migrate to containers while preserving the original write path.

- PLC interfaces remain bare-metal until a validated plan exists.

- Aggregated dashboard over everything from day one.
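The second step, migrating historians while preserving the original write path, can be approached as a dual write: the legacy historian stays authoritative and the containerised one receives a shadow copy. The following is a rough sketch under that assumption. `DualWriteHistorian`, `legacy_write`, and `shadow_write` are names invented for the example and do not correspond to any vendor API.

```python
from typing import Callable, List, Tuple

Point = Tuple[str, float, float]  # (tag, value, unix timestamp)


class DualWriteHistorian:
    """Writes to the legacy historian first, then shadows to the new one."""

    def __init__(self, legacy_write: Callable[[Point], None],
                 shadow_write: Callable[[Point], None]) -> None:
        self.legacy_write = legacy_write  # original write path, unchanged
        self.shadow_write = shadow_write  # containerised historian (hypothetical)
        self.shadow_errors = 0

    def write(self, point: Point) -> None:
        # Legacy path first: its errors propagate exactly as before the migration.
        self.legacy_write(point)
        try:
            self.shadow_write(point)
        except Exception:
            # A failing shadow must never affect the plant's existing data flow.
            self.shadow_errors += 1


# Demo with in-memory stores; the shadow is unavailable for the first write.
legacy_store: List[Point] = []
shadow_store: List[Point] = []
shadow_state = {"up": False}


def shadow_write(point: Point) -> None:
    if not shadow_state["up"]:
        raise ConnectionError("new historian not ready")
    shadow_store.append(point)


historian = DualWriteHistorian(legacy_store.append, shadow_write)
historian.write(("TT-101", 72.5, 1700000000.0))
shadow_state["up"] = True
historian.write(("TT-101", 72.8, 1700000001.0))
```

Tracking `shadow_errors` gives a simple cutover criterion: the new historian only becomes the primary once it has run for an agreed period with no missed writes and its contents match the legacy store.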

OT security risks

It pays to be honest about what containerising opens up:

- An exposed Docker socket equals full host control; in OT that means control of the operational network.

- A Kubernetes API made reachable by a network policy error gives cluster-wide access.

- Unsigned images open the door to supply chain attacks, which is critical in infrastructure that operates machinery.

- A poorly segmented overlay network enables lateral movement from IT into OT.

ISA/IEC 62443 structures OT networks into zones and conduits, commonly mapped onto the levels of the Purdue model (L0 field, L1 control, L2 HMI, L3 operations, L4 enterprise). Containers can erase those boundaries without network and policy discipline.
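One way to keep those boundaries visible is to check declared network flows against the levels before any network policy is written. The sketch below flags flows that skip a level. The rule that traffic may only cross between adjacent levels is a common segmentation convention assumed here for illustration, and the `Flow` type and workload names are hypothetical.

```python
from typing import Iterable, List, NamedTuple


class Flow(NamedTuple):
    source: str
    source_level: int  # Purdue level of the sender (0 to 4)
    dest: str
    dest_level: int    # Purdue level of the receiver (0 to 4)


def level_skipping_flows(flows: Iterable[Flow]) -> List[Flow]:
    """Return flows that jump more than one level (assumed rule: adjacent only)."""
    return [f for f in flows if abs(f.source_level - f.dest_level) > 1]


declared = [
    Flow("web-hmi", 2, "line-plc", 1),      # adjacent levels: allowed
    Flow("historian", 3, "web-hmi", 2),     # adjacent levels: allowed
    Flow("erp-connector", 4, "line-plc", 1) # enterprise straight to control: flagged
]
violations = level_skipping_flows(declared)
for v in violations:
    print(f"{v.source} (L{v.source_level}) -> {v.dest} (L{v.dest_level}) skips a level")
```

A check like this can run in the same review pipeline as the manifests, so a flat overlay network never silently connects an enterprise workload to a controller.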

Essential mitigations

Five practices that reduce risk to operationally acceptable levels:

- Real air-gap between the OT cluster and any IT network or the internet.

- Immutable infrastructure: no hot changes; always rebuild and redeploy.

- Strict policy enforcement with Kyverno or Gatekeeper: which images may run, which capabilities they hold, which namespaces may communicate.

- OT-specific monitoring with tools like Claroty or Nozomi layered over standard Prometheus.

- Degradation runbook: a written protocol for operating manually if there is a cluster incident.
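To make the policy enforcement item concrete, here is a sketch of the kind of checks an admission policy would apply to a pod spec. It is plain Python over a dictionary, not Kyverno or Gatekeeper syntax, and the registry name `registry.ot.example.internal/` is a made-up placeholder. Note also that requiring a pinned digest is only a stand-in here: it is not the same as verifying an image signature.

```python
from typing import Any, Dict, List

# Hypothetical internal registry; replace with the plant's own.
ALLOWED_REGISTRY = "registry.ot.example.internal/"


def audit_pod_spec(spec: Dict[str, Any]) -> List[str]:
    """Return a list of findings; an empty list means the spec passes."""
    findings: List[str] = []

    for volume in spec.get("volumes", []):
        path = (volume.get("hostPath") or {}).get("path", "")
        if path.endswith("docker.sock"):
            findings.append(f"volume {volume.get('name')}: mounts the Docker socket")

    for container in spec.get("containers", []):
        name = container.get("name", "<unnamed>")
        image = container.get("image", "")
        if not image.startswith(ALLOWED_REGISTRY):
            findings.append(f"{name}: image not from the allowed registry")
        if "@sha256:" not in image:
            findings.append(f"{name}: image not pinned by digest")
        security = container.get("securityContext") or {}
        if security.get("privileged"):
            findings.append(f"{name}: runs privileged")
        if (security.get("capabilities") or {}).get("add"):
            findings.append(f"{name}: adds Linux capabilities")

    return findings


bad_spec = {
    "volumes": [{"name": "sock", "hostPath": {"path": "/var/run/docker.sock"}}],
    "containers": [{
        "name": "hmi",
        "image": "docker.io/vendor/hmi:latest",
        "securityContext": {"privileged": True, "capabilities": {"add": ["NET_ADMIN"]}},
    }],
}
good_spec = {
    "containers": [{
        "name": "hmi",
        "image": ALLOWED_REGISTRY + "hmi@sha256:" + "0" * 64,
    }],
}
bad_findings = audit_pod_spec(bad_spec)
good_findings = audit_pod_spec(good_spec)
```

In a real cluster these rules belong in the admission controller, where they block the deployment. A script like this is more useful earlier, as a pre-merge check in the GitOps repository.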

NIS2 compliance

In the EU, NIS2 requires operators of critical infrastructure to manage vulnerabilities, maintain asset inventories, have an incident response plan, and secure the supply chain. Containerising does not exempt an operator from compliance: the container is one more asset in the inventory, and the image registry is part of the supply chain.

Conclusion

Containerising SCADA makes sense in the right layers: HMI, historians, and data gateways. Critical control still belongs to PLCs until mature standards and proven cases exist. The journey is worth it for most organisations with a modern plant, but requires respecting OT context in every design decision.