Constrained Decoding for Structured LLM Outputs

Updated: 2026-05-03

Getting an LLM to return a JSON object that matches a schema is one of those tasks that looks trivial until you push it to production at thousands of documents per hour. As soon as the prompt gets complex, the model starts making the usual mistakes: a missing comma, a brace that closes too early, a friendly preamble, a Markdown fence wrapping the answer, or simply a field not in the schema. With careful prompting and low temperature you can reach 98% success, but that remaining 2%, multiplied by volume, ends up eating more engineering time than anyone admits.

Key takeaways

- Constrained decoding guarantees that the output matches the schema by construction, not statistically.

- Outlines (Python) is the reference library: it works with Hugging Face Transformers, llama.cpp and vLLM, and lets you express constraints as a Pydantic model, a JSON Schema, a regex or a Lark grammar.

- Instructor isn’t strictly a decoder — it wraps the OpenAI or Anthropic API and uses Pydantic to validate and retry — but it’s the pragmatic path for cloud models without access to the inference runtime.

- Constrained decoding pays its tax within the same generation step: masking can make each token 10-30% slower, but there is never a second round. For batches of thousands of documents, the arithmetic clearly favours it.

- The guarantee is syntactic, not semantic: the JSON will be valid and match the schema, but if the model misunderstood the document, the extracted values will still be wrong.

What Constrained Decoding Is

At every generation step, the model produces a probability distribution over its entire vocabulary, typically tens of thousands of tokens (32,000 for Llama 2, about 128,000 for Llama 3). Instead of sampling from that distribution as-is, a mask is applied: tokens that cannot extend the text so far into a valid string of the target grammar get zero probability (in practice their logits are set to negative infinity before the softmax), and sampling then happens only over the renormalised legal subset.
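To make the masking step concrete, here is a minimal pure-Python sketch of a single decoding step. The function name `masked_distribution`, the toy logits and the five-token vocabulary are illustrative assumptions; a real runtime applies the same idea to a tensor of logits over the full vocabulary.

```python
import math


def masked_distribution(logits: dict[str, float], allowed: set[str]) -> dict[str, float]:
    """Mask illegal tokens, then renormalise the softmax over the legal ones."""
    if not allowed.intersection(logits):
        raise ValueError("the grammar allows no token in this vocabulary")
    # Illegal tokens get a logit of -inf, so exp(-inf) == 0.0: probability exactly zero.
    masked = {tok: (score if tok in allowed else -math.inf) for tok, score in logits.items()}
    # Softmax over the masked logits (subtracting the max for numerical stability).
    top = max(masked.values())
    exps = {tok: math.exp(score - top) for tok, score in masked.items()}
    total = sum(exps.values())
    return {tok: value / total for tok, value in exps.items()}


# Toy step: the text so far is '{"total": ', so the grammar only allows a digit next.
logits = {"Sure": 3.0, '"': 2.0, "}": 1.0, "1": 0.5, "2": 0.3}
probs = masked_distribution(logits, allowed={"1", "2"})
```

The model's favourite token ("Sure", the friendly preamble) ends up with probability zero, and the two digits share all of the probability mass in the same ratio as their original scores.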

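The output therefore complies with the grammar as a mathematical guarantee, not a statistical one. No branch of the generation tree can produce broken JSON: the end-of-sequence token stays masked until every open brace is closed, and a brace that would close too early is never a legal token. The one practical caveat is length: if generation hits the token limit before the grammar reaches a valid end state, the output is cut off.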
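The guarantee is easiest to see with a toy. The sketch below assumes a character-level vocabulary, a hypothetical grammar for prices (one to three digits, a dot, then exactly two decimals) and a "model" that emits random logits; every sample still matches the pattern because illegal tokens are never candidates. Real tokenizers have multi-character tokens, which is why libraries such as Outlines compile the grammar into a finite-state machine and precompute the legal tokens for each state; `allowed_tokens` and `generate` are hypothetical stand-ins for that machinery.

```python
import math
import random
import re

EOS = "<eos>"
DIGITS = [str(d) for d in range(10)]
# Toy vocabulary: digits and "." plus distractors a chatty model might prefer.
VOCAB = DIGITS + [".", "{", "}", " ", "Sure", "$", EOS]


def allowed_tokens(prefix: str) -> set[str]:
    """Legal next tokens for the toy grammar: 1-3 digits, a '.', then exactly 2 digits."""
    if "." not in prefix:
        allowed = set(DIGITS) if len(prefix) < 3 else set()
        if prefix:  # at least one integer digit must precede the '.'
            allowed.add(".")
        return allowed
    decimals = len(prefix.split(".", 1)[1])
    # EOS stays masked until the grammar reaches its accepting state.
    return set(DIGITS) if decimals < 2 else {EOS}


def generate(seed: int) -> str:
    """Sample from a random 'model' under the mask; the result always fits the grammar."""
    rng = random.Random(seed)
    out = ""
    while True:
        # Stand-in for the LLM: arbitrary logits over the whole vocabulary.
        logits = {tok: rng.gauss(0.0, 2.0) for tok in VOCAB}
        legal_set = allowed_tokens(out)
        legal = [tok for tok in VOCAB if tok in legal_set]
        # Sampling with weights exp(logit) over the legal subset is the renormalised softmax.
        tok = rng.choices(legal, weights=[math.exp(logits[t]) for t in legal])[0]
        if tok == EOS:
            return out
        out += tok


PATTERN = re.compile(r"[0-9]{1,3}\.[0-9]{2}")
samples = [generate(seed) for seed in range(1000)]
assert all(PATTERN.fullmatch(s) for s in samples)
```

Because this toy grammar is finite, generation always terminates within six tokens plus EOS; an unbounded grammar is where the token-limit caveat above starts to matter.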

The Three Tool Families

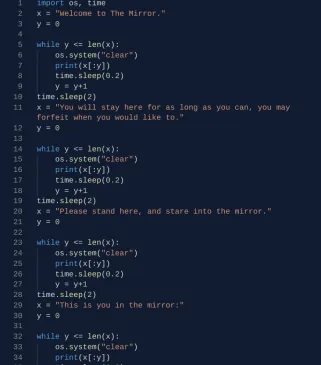

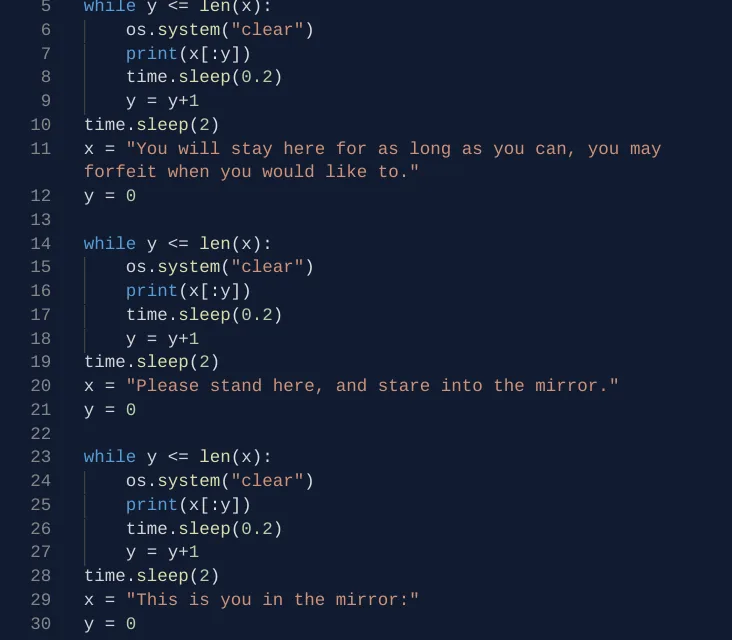

Outlines is the Python reference library. It works with Hugging Face Transformers, llama.cpp and vLLM:

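```python
from outlines import models, generate
from pydantic import BaseModel


# Target schema: Outlines turns it into a constraint applied at every generation step.
class Invoice(BaseModel):
    number: str
    total: float
    lines: list[str]


# Load a Hugging Face model through Transformers (weights download on first use).
model = models.transformers("meta-llama/Meta-Llama-3-8B-Instruct")

# Build a generator whose output is constrained to the Invoice JSON schema.
generator = generate.json(model, Invoice)

# The result comes back parsed as an Invoice instance.
invoice = generator("Extract data from: Invoice A-0012, total 128.50, two lines")
```

Microsoft’s Guidance takes a more template-oriented approach: it interleaves fixed text with constrained-generation regions. It shines when the output is not one structured blob but a conversation with partial structure.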
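The template idea can be sketched without any dependencies. This is not Guidance’s API: `Choice`, `fill_template` and `toy_score` are hypothetical names, and the scorer is a keyword heuristic standing in for a model’s log-probabilities. Fixed text is copied verbatim and each slot may only take one of its listed options.

```python
from dataclasses import dataclass
from typing import Callable, Union


@dataclass
class Choice:
    """A constrained slot: the model may only produce one of these options."""
    name: str
    options: list[str]


Part = Union[str, Choice]


def fill_template(parts: list[Part], score: Callable[[str, str], float]) -> tuple[str, dict[str, str]]:
    """Copy fixed text verbatim and fill each slot with its best-scoring legal option."""
    text, captured = "", {}
    for part in parts:
        if isinstance(part, str):
            text += part  # fixed text is written by the template, never generated
        else:
            # Only the listed options compete; anything else is unreachable.
            best = max(part.options, key=lambda option: score(text, option))
            captured[part.name] = best
            text += best
    return text, captured


def toy_score(context: str, option: str) -> float:
    """Hypothetical stand-in for a model's log-probability: crude keyword matching."""
    hints = {"billing": "charged", "shipping": "delivery", "high": "twice"}
    keyword = hints.get(option)
    return 1.0 if keyword and keyword in context else 0.0


template = [
    "Ticket: my invoice was charged twice.\nCategory: ",
    Choice("category", ["billing", "shipping", "other"]),
    "\nPriority: ",
    Choice("priority", ["low", "high"]),
]
text, slots = fill_template(template, toy_score)
```

Scoring whole options is a simplification chosen for brevity; a real runtime constrains each slot token by token, but the shape is the same: structure lives in the template, and the model only fills the gaps it is allowed to fill.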

Instructor wraps the OpenAI or Anthropic client and uses Pydantic to validate the response and retry on failure. It is not strictly a decoder, but it tackles the same pain with a different philosophy and is the pragmatic path for cloud models.

What You’re Competing Against

The classic alternative is a retry loop: ask for JSON, try to parse it, and if parsing fails, call the model again with the error as context. It works reasonably well, but every retry is a full call to the model. In bulk-extraction workloads, the time lost to retries dominates the budget.
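A minimal sketch of that loop, assuming a hypothetical `call_model` callable that takes a chat-style message list and returns text; `extract_with_retries` and the scripted fake model are illustrations, not any library’s API. The returned call count makes the cost visible: each failed parse costs another full model call with a longer prompt.

```python
import json
from typing import Callable


def extract_with_retries(
    call_model: Callable[[list[dict]], str], document: str, max_attempts: int = 3
) -> tuple[dict, int]:
    """Ask for JSON, try to parse it, and re-ask with the parser error on failure.

    Returns the parsed object and the number of model calls it took.
    """
    messages = [{"role": "user", "content": f"Return the invoice as JSON.\n\n{document}"}]
    for attempt in range(1, max_attempts + 1):
        reply = call_model(messages)  # every attempt is a full model call
        try:
            return json.loads(reply), attempt
        except json.JSONDecodeError as err:
            # Feed the broken output and the error back as context for the next call.
            messages.append({"role": "assistant", "content": reply})
            messages.append(
                {"role": "user", "content": f"That was not valid JSON ({err}). Return only the JSON object."}
            )
    raise ValueError(f"no valid JSON after {max_attempts} calls")


# Hypothetical stand-in for an LLM: a friendly preamble first, clean JSON on the retry.
replies = iter(['Sure! Here is the JSON: {"number": "A-0012"}', '{"number": "A-0012", "total": 128.5}'])
data, calls = extract_with_retries(lambda messages: next(replies), "Invoice A-0012, total 128.50")
# data == {"number": "A-0012", "total": 128.5}, calls == 2
```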

OpenAI’s JSON mode (November 2023) guarantees that the output parses as JSON, but not that it matches your schema. Function calling goes one step further, but it can still drift on optional fields and does not always respect enums.
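The difference between "parses" and "matches the schema" fits in a few lines. The schema, field names and reply below are hypothetical: `json.loads` accepts the reply, while a schema check (the kind of validation Instructor delegates to Pydantic) still finds a string where a number belongs and an enum value in the wrong case.

```python
import json

# Hypothetical invoice schema for illustration: required fields, their types, and an enum.
REQUIRED = {"number": str, "total": float, "status": str}
STATUS_VALUES = {"paid", "pending"}


def schema_errors(obj: dict) -> list[str]:
    """List schema violations; an empty list means the object matches the schema."""
    errors = [f"missing field: {key}" for key in REQUIRED if key not in obj]
    errors += [
        f"{key} should be {typ.__name__}"
        for key, typ in REQUIRED.items()
        if key in obj and not isinstance(obj[key], typ)
    ]
    status = obj.get("status")
    if isinstance(status, str) and status not in STATUS_VALUES:
        errors.append(f"status must be one of {sorted(STATUS_VALUES)}")
    return errors


# Hypothetical reply: valid JSON, so json.loads accepts it, yet it breaks the schema twice.
reply = '{"number": "A-0012", "total": "128.50", "status": "Paid"}'
obj = json.loads(reply)
print(schema_errors(obj))  # total is a string, and "Paid" is not one of the enum values
```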

Constrained decoding pays its tax within the same generation step: masking can make each token 10-30% slower, but there is never a second round. For batches of thousands of documents, the arithmetic clearly favours it.

Where It’s Worth Adopting

In large-scale structured extraction — invoices, resumes, clinical results, contracts — the difference between 98% and 100% valid output is enormous because it removes the error queue that otherwise needs to be reviewed or reprocessed by hand.

In agents that emit tool calls, guaranteeing the argument JSON is always parseable prevents a formatting error from tearing down an entire chain of reasoning.

In synthetic data generation with a fixed schema, the guarantee lets you chain steps without intermediate safety nets.

Conversely, for conversational chat with semi-free outputs, or when a frontier model with well-written prompts already delivers over 99%, the integration cost won’t amortise.

Two Misconceptions Worth Pushing Back On

The first: thinking that constrained decoding improves the semantic quality of the answer. It does not. The JSON will be valid and match the schema, but if the model misunderstood the document, the extracted values will still be wrong. The guarantee is syntactic, not about content.

The second: assuming integration is trivial. Moving from the OpenAI API to a local runtime with Outlines means managing GPUs, model versions, KV caches, and the whole inference-serving apparatus. For small teams without their own ML platform, Instructor on top of OpenAI remains the pragmatic path even if you give up the formal guarantee.

The Near Horizon

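vLLM integrates Outlines natively as of v0.4. llama.cpp has exposed a --grammar option (GBNF grammars) since 2023. TGI ships its own constrained-generation feature, which it calls "guidance" but which is built on Outlines rather than on Microsoft’s Guidance. Inference runtimes are treating constrained decoding as a first-class citizen.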

Conclusion

The message for anyone building on LLMs is that format validation should move out of application code and into the generation layer, because that is where the marginal cost is lowest and the guarantee strongest. Continuing to patch over failures with retries and json.loads wrapped in try/except is a form of technical debt that ages poorly. For bulk extraction, agents with tool calling, or synthetic data generation, constrained decoding turns a probabilistic problem into a guarantee, and that changes the system design.