containerd is probably the most-used container runtime on the planet and, at the same time, the least known. When Kubernetes 1.20 announced the deprecation of dockershim, thousands of clusters quietly migrated to containerd as their runtime, and most operators didn’t notice because, done well, the change is invisible. This article covers what containerd is, how it fits with Docker and Kubernetes, and the basic commands for operating it directly.

What containerd Is, and What It Isn’t

containerd is a high-level container runtime — it manages the complete lifecycle of containers on a system:

- Pulling images from registries.

- Storing images on disk.

- Creating containers from images.

- Running, pausing, stopping containers.

- Managing network namespaces.

- Filesystem snapshots.

What it doesn’t do:

- Build images (it has no Dockerfile).

- Multi-container composition (no compose).

- UI or developer experience (it’s a daemon, not an interactive tool).

- Orchestration (Kubernetes does that on top).

containerd started as an internal component of Docker. In 2017 it was donated to the CNCF and became an independent project, and in 2019 it reached CNCF graduated status.

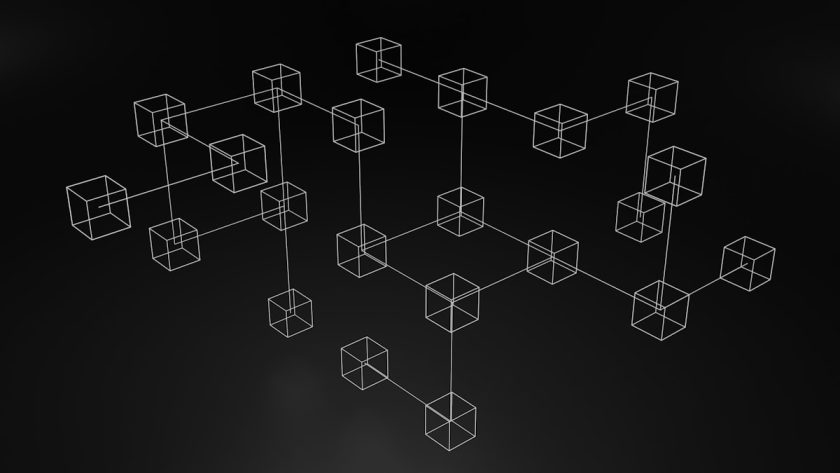

The Layered Architecture

To understand containerd it helps to see the full container stack on Linux:

Kubernetes (orchestration)

│

▼ via CRI (Container Runtime Interface)

containerd (high-level runtime)

│

▼ via OCI runtime spec

runc (low-level runtime — or crun, gVisor, kata)

│

▼ namespaces, cgroups, capabilities

Linux kernel

- Kubernetes orchestrates: it decides which pods go where.

- containerd runs them: it speaks CRI with Kubernetes and OCI with runc.

- runc is the reference implementation that creates the actual container using kernel primitives.

This separation lets you swap pieces: you can change runc to gVisor for more isolation, or containerd to CRI-O without touching Kubernetes.
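One way to see which high-level runtime sits under Kubernetes is to ask the API server what each node reports. The sketch below wraps a standard kubectl query; the helper names list_node_runtimes and count_node_runtimes are illustrative, and it assumes kubectl is already configured for the cluster.

```shell
# Print "<node>\t<runtime>" for every node. The kubelet reports the runtime
# as "<name>://<version>", for example containerd://... or cri-o://...
# list_node_runtimes is a hypothetical helper name, not a kubectl feature.
list_node_runtimes() {
  kubectl get nodes -o jsonpath='{range .items[*]}{.metadata.name}{"\t"}{.status.nodeInfo.containerRuntimeVersion}{"\n"}{end}'
}

# Count nodes per runtime by stripping everything from "://" onward.
count_node_runtimes() {
  list_node_runtimes |
    awk -F'\t' 'NF == 2 { sub(/:\/\/.*/, "", $2); n[$2]++ }
                END { for (r in n) print r, n[r] }' |
    sort
}
```

Calling count_node_runtimes on a mixed cluster gives one line per runtime type, which is a quick check before or after a runtime migration.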

containerd vs Docker: The Real Relationship

There is a lot of confusion on this point. The short version:

- Docker Engine has used containerd internally as its runtime for years.

- When you run docker run, Docker calls containerd internally.

- In Kubernetes 1.24+, the “Docker shim” (dockershim) was removed: Kubernetes talks directly to containerd, with no Docker Engine in between.

- Almost all Kubernetes clusters said to “use Docker” were in fact running containerd underneath. Migrating meant changing the adapter layer, not the runtime.

For standalone servers, Docker Engine remains valid — it’s Docker + containerd + additional tools. For Kubernetes, direct containerd removes an unnecessary layer.
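On a host running Docker Engine, the relationship can be observed directly. The sketch below assumes that Docker uses the system containerd on its default socket and keeps its containers in the containerd namespace named moby (the usual setup with the packaged Docker Engine, but worth verifying on your host). The function name is illustrative.

```shell
# Show that containers started by Docker are containerd tasks.
# Assumption: Docker Engine talks to the system containerd and stores its
# containers in the "moby" namespace; adjust if your installation differs.
docker_vs_containerd() {
  echo "== Containers as Docker reports them =="
  sudo docker ps --no-trunc --format '{{.ID}}  {{.Names}}'
  echo "== containerd namespaces =="
  sudo ctr namespaces list
  echo "== Tasks in the moby namespace =="
  sudo ctr -n moby tasks list
}
```

If the assumption holds, the full container IDs printed by Docker should match the task IDs listed under moby.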

containerd vs CRI-O

CRI-O is the main alternative — a lightweight runtime designed specifically for Kubernetes. Practical differences:

- containerd is more general purpose, used outside Kubernetes (Docker, dev environments).

- CRI-O is exclusively for Kubernetes, with no extra features.

- Both implement CRI and OCI. Performance is comparable.

- containerd has wider global adoption; CRI-O is standard in Red Hat OpenShift-style distributions.

Choosing between them is more about ecosystem and support than technical capability in 2023.

Working With containerd Directly

When something goes wrong on a Kubernetes node, it helps to know how to operate outside kubectl. Three tools let you talk to containerd directly:

ctr — The Basic CLI

Comes with containerd. Useful but not very friendly:

# List images

sudo ctr -n k8s.io images list

# List containers in Kubernetes namespace

sudo ctr -n k8s.io containers list

# View tasks (active container processes)

sudo ctr -n k8s.io tasks list

# Get container logs (use journalctl or crictl instead)

The -n k8s.io flag selects the namespace Kubernetes uses; without it you don’t see the pods.
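To try the lifecycle steps listed earlier (pull, create, run, remove) without touching anything Kubernetes owns, ctr can work in a scratch namespace. This is a minimal sketch: the namespace demo, the container ID hello, and the function name are arbitrary choices, and the default image is only an example.

```shell
# Pull an image and run a short-lived container with ctr in a scratch
# namespace, leaving the k8s.io namespace untouched.
# "demo" and "hello" are arbitrary names chosen for this example.
ctr_lifecycle_demo() {
  # ctr expects a fully qualified image reference (registry/repo:tag)
  image=${1:-docker.io/library/alpine:latest}
  sudo ctr -n demo images pull "$image" || return 1
  # --rm deletes the container once its process exits
  sudo ctr -n demo run --rm "$image" hello echo "hello from containerd"
  # -q prints only image references
  sudo ctr -n demo images list -q
}
```

Unlike docker run, ctr does not expand short names such as alpine, which is one reason it feels less friendly for everyday use.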

crictl — The Official Tool for Kubernetes

More useful in Kubernetes clusters:

# List pods

sudo crictl pods

# List containers

sudo crictl ps

# Container logs

sudo crictl logs <container-id>

# Exec into one

sudo crictl exec -it <container-id> sh

# Inspect image

sudo crictl inspecti <image>

Config at /etc/crictl.yaml:

runtime-endpoint: unix:///run/containerd/containerd.sock

image-endpoint: unix:///run/containerd/containerd.sock

nerdctl — Docker Syntax for containerd

If you miss docker run and friends, nerdctl offers a Docker-compatible command line but talks to containerd directly:

sudo nerdctl run -d -p 80:80 nginx

sudo nerdctl ps

sudo nerdctl exec -it <id> sh

For developers coming from Docker, it’s the most comfortable transition.
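One detail that surprises newcomers: nerdctl works in containerd's default namespace unless told otherwise, so containers created by the kubelet do not show up in a plain nerdctl ps. A small sketch of the difference, assuming nerdctl is installed separately on the node (the function name is illustrative):

```shell
# Compare what nerdctl sees in its default namespace with what it sees in
# the namespace Kubernetes uses.
nerdctl_namespaces_demo() {
  echo "== default namespace (containers started with nerdctl run) =="
  sudo nerdctl ps -a
  echo "== k8s.io namespace (containers created by the kubelet) =="
  sudo nerdctl --namespace k8s.io ps -a
}
```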

Cases Where Touching containerd Directly Helps

In day-to-day Kubernetes operations, you normally don’t touch containerd. Where it does help:

- Debug pods that won’t start. containerd logs in journalctl -u containerd often have detail kubelet doesn’t propagate.

- Cleanup of orphan images. crictl rmi --prune frees space when Kubernetes GC is slow.

- Image pull problems. crictl pull directly reveals credentials or network issues without going through the Kubernetes abstraction.

- Verify active runtime. kubectl get nodes -o wide shows runtime; auditing it takes 30 seconds.
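For the first case, debugging a pod that won't start, the crictl commands shown earlier can be chained on the node. The helper below is a hypothetical sketch (pod_logs is not a crictl feature); it only combines crictl pods, crictl ps, and crictl logs, and assumes crictl is already pointed at containerd through /etc/crictl.yaml.

```shell
# Print the last log lines of every container in a pod, including
# containers that already exited (useful for crash loops).
# pod_logs is a hypothetical helper name.
pod_logs() {
  pod_name=$1
  [ -n "$pod_name" ] || { echo "usage: pod_logs <pod-name>" >&2; return 2; }

  # --name filters pod sandboxes by name; -q prints only IDs
  pod_ids=$(sudo crictl pods --name "$pod_name" -q)
  [ -n "$pod_ids" ] || { echo "no pod sandbox matches '$pod_name'" >&2; return 1; }

  for pod_id in $pod_ids; do
    # -a includes exited containers; --pod restricts to one sandbox
    for ctr_id in $(sudo crictl ps -a --pod "$pod_id" -q); do
      echo "== pod $pod_id container $ctr_id =="
      sudo crictl logs --tail 20 "$ctr_id" 2>&1
    done
  done
}
```

If the function finds a sandbox but no containers, the failure probably happened before any container was created, and journalctl -u containerd is the next place to look.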

Relevant Configuration

Main config lives at /etc/containerd/config.toml. Typical changes:

- Registry mirror (speeds up and reduces Docker Hub usage).

- Configure a different runtime (gVisor for more isolation in multi-tenancy).

- Logging plugin and rotation.

- systemd cgroup driver (must match kubelet — common installation error).
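The cgroup driver mismatch in the last item can be checked with a few lines of shell. This sketch assumes the containerd setting appears as SystemdCgroup = true under the runc runtime options in config.toml, and that the kubelet configuration is a YAML file with a top-level cgroupDriver key at /var/lib/kubelet/config.yaml (the kubeadm default). Both paths can be overridden, and the function name is illustrative.

```shell
# Compare containerd's runc cgroup setting with the kubelet's cgroupDriver.
# Assumed default paths; pass your own as arguments if they differ.
check_cgroup_driver() {
  containerd_cfg=${1:-/etc/containerd/config.toml}
  kubelet_cfg=${2:-/var/lib/kubelet/config.yaml}

  # Treat containerd as "systemd" only if SystemdCgroup = true is present;
  # otherwise assume it falls back to cgroupfs.
  if grep -Eq '^[[:space:]]*SystemdCgroup[[:space:]]*=[[:space:]]*true' "$containerd_cfg"; then
    containerd_driver=systemd
  else
    containerd_driver=cgroupfs
  fi

  # Read the top-level cgroupDriver key, dropping quotes and whitespace
  kubelet_driver=$(sed -n 's/^cgroupDriver:[[:space:]]*//p' "$kubelet_cfg" |
    head -n 1 | tr -d '"[:space:]')
  if [ -z "$kubelet_driver" ]; then
    echo "cgroupDriver not set in $kubelet_cfg, cannot compare" >&2
    return 2
  fi

  echo "containerd=$containerd_driver kubelet=$kubelet_driver"
  # Exit status 0 only when both sides agree
  [ "$containerd_driver" = "$kubelet_driver" ]
}
```

Running check_cgroup_driver on a node prints both values and returns a non-zero status when they differ, so it can be dropped into a node health script.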

Changes require systemctl restart containerd and, in production clusters, doing it node by node with cordon/drain.
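The node-by-node procedure can be scripted. The following is a sketch under stated assumptions rather than a production tool: it assumes kubectl has sufficient rights, that each node name is reachable over SSH with sudo, and that a 300 second timeout is acceptable. The RUN variable is a convention invented for this example: left unset, the function only prints the commands; set to an empty string, it executes them.

```shell
# Restart containerd on one node at a time, draining each node first.
# By default (RUN unset) every command is echoed instead of executed.
# Call with RUN= (empty) to actually run the commands.
rolling_containerd_restart() {
  for node in "$@"; do
    ${RUN-echo} kubectl cordon "$node" || return 1
    ${RUN-echo} kubectl drain "$node" --ignore-daemonsets --delete-emptydir-data --timeout=300s || return 1
    ${RUN-echo} ssh "$node" sudo systemctl restart containerd || return 1
    # Wait until the kubelet reports the node Ready again
    ${RUN-echo} kubectl wait --for=condition=Ready "node/$node" --timeout=300s || return 1
    ${RUN-echo} kubectl uncordon "$node" || return 1
  done
}
```

For example, rolling_containerd_restart node-1 node-2 prints the ten commands it would run, and RUN= rolling_containerd_restart node-1 node-2 runs them. The function stops at the first failure, which leaves the current node cordoned for manual inspection.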

Conclusion

containerd is the foundational piece on which most modern Kubernetes runs. Knowing its architecture helps with debugging, alternative-runtime decisions, and understanding what your cluster does. For daily operations, it remains a layer you normally don’t touch, but knowing your way around it when needed turns an hours-long problem into a matter of minutes.

Follow us on jacar.es for more on Kubernetes, container runtimes, and cluster operations.