AI-assisted code review: an honest adoption story

Updated: 2026-05-03

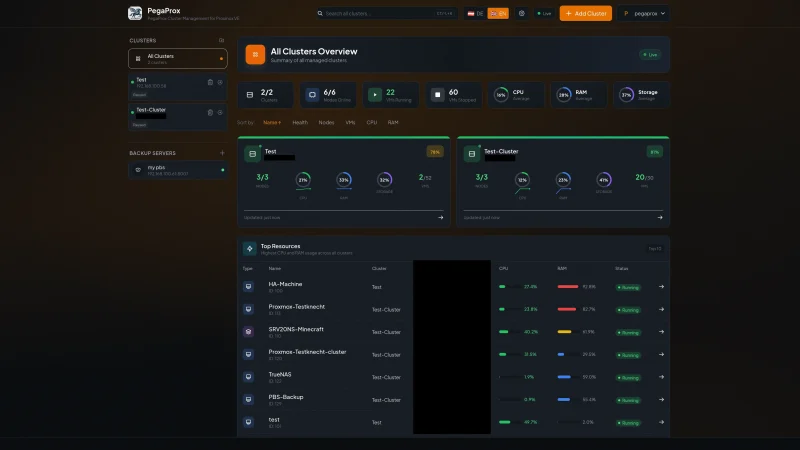

In early 2023 we started experimenting with AI-assisted code review in the team. First with a bot commenting on pull requests using GPT-3.5, then with more sophisticated integrations: Copilot for Pull Requests, CodeRabbit, and specialized tools like Qodo. Two years in, habits have settled and I have reasonably firm opinions on what works and what doesn’t.

This isn’t a product roundup or a “best tools” list. It’s closer to the assessment I would have liked to read when starting out: where AI in code review adds real value and where it just adds noise the team has to filter.

Key takeaways

- AI covers mechanical oversights well (unused imports, orphaned TODOs, stale docstrings) with more context than a traditional linter.

- Automatic PR summaries reduce friction for reviewers without prior context.

- False-positive rates on subtle bugs are high (≈80%); filtering them costs real time.

- Not blocking merges on automated comments is the single decision with the most team impact.

- One well-configured tool outperforms three overlapping bots.

What we expected

The initial expectation was that AI would end up replacing the first pass of human review. Reviewers spend a lot of time pointing out style issues, obvious oversights, trivial errors. If a tool could cover all that automatically, human time could go to architecture and judgment.

What happened in practice is partly that and partly something quite different.

What has worked

AI is useful for detecting several very specific patterns:

- Mechanical oversights: a TODO without a reference, an import added but not used, a variable declared and never read, a modified test that no longer runs. LLM-based tools phrase the warning in natural language and with context, making it much harder to ignore than “unused import.” In large teams where linters become background noise, this helps.

- Code-documentation inconsistencies: if you change a function but the docstring still says something else, the AI catches it quite reliably. Humans do too, but it’s the classic case where we skip the detail.

- Summaries for the reviewer: an automatic summary of what the PR does, generated from the diff, is a reasonable entry point. If the summary doesn’t match what the PR actually does, that’s already a useful signal.

- Suggested tests: tools that propose test cases deliver a modest but consistent benefit. They don’t replace human-designed tests, but they catch forgotten edge cases, especially in functions with many parameters.

What hasn’t worked

On the flip side, there are several things we’ve stopped expecting:

- Detecting subtle bugs: when a tool says “possible race condition” or “possible null dereference”, the false-positive rate is still very high. We soon found that roughly 80% of these warnings described situations that could not occur in the real context. Today we filter them out unless the code is clearly risky.

- Architectural judgment: “this function should be a class,” “this module should be elsewhere.” Without an understanding of project context, these suggestions become permanent noise. The AI doesn’t know that a function has a deliberately narrow purpose.

- Deep security reviews: tools detect obvious patterns (hardcoded credentials, SQL via concatenation), and that’s valuable. But they don’t replace a real audit, and we’ve seen tools approve diffs with clear problems because the pattern didn’t match their training.

The pattern that emerged

After trying several configurations, the pattern that worked best is:

- AI makes a first automatic pass when the PR opens: summary, mechanical oversights, untested paths, known risk patterns.

- The PR author processes that pass: fixes oversights, answers questions, dismisses false positives with a brief note.

- The human reviewer focuses on what matters: architecture, trade-off choices, consistency, long-term readability.

An important detail: AI comments don’t have blocking authority in our process. They’re explicit suggestions. No one has to justify ignoring an automated comment.
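As a rough illustration of that non-blocking rule, here is a minimal sketch of a merge check in which only unresolved human comments hold up a PR. The `ReviewComment` type, the `can_merge` function, and the requirement of at least one human approval are all hypothetical; they are not taken from any specific tool or from our actual setup.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewComment:
    """Hypothetical shape of a review comment, for illustration only."""
    author: str     # login of whoever left the comment
    is_bot: bool    # True for automated (AI) comments
    resolved: bool  # whether the thread has been marked resolved


def can_merge(comments: list[ReviewComment], human_approvals: int) -> bool:
    """Return True when the PR is mergeable under a non-blocking policy.

    Assumption: at least one human approval is required, and only
    unresolved human comments block. Automated comments are advisory.
    """
    if human_approvals < 1:
        return False
    blocking = [c for c in comments if not c.is_bot and not c.resolved]
    return not blocking
```

With this policy, a PR with ten open bot comments and one human approval can still merge, while a single unresolved human comment keeps it open.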

The decisions that made a difference

Three concrete decisions make the difference between teams that integrate AI well into code review and those that abandon it:

- Not requiring all automated comments to be resolved. If you do, noise becomes unbearable within months.

- Picking one tool and using it consistently. Having three bots commenting on the same PR is counterproductive: they overlap, contradict each other, and raise cognitive cost.

- Periodically reviewing which comment types add value and tuning configuration. Most tools let you disable categories or raise confidence thresholds. We’ve silenced several categories that produced noise.
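The periodic tuning in the last point can be approximated with a small script. The sketch below assumes you can export past automated comments with a category label and a flag saying whether the author acted on them; `BotComment`, the function names, and the thresholds are hypothetical choices for illustration, not the API or defaults of any real tool.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class BotComment:
    """Hypothetical export record for one automated comment."""
    category: str   # e.g. "unused-import", "race-condition"
    accepted: bool  # True if the author acted on the comment


def acceptance_by_category(history: list[BotComment]) -> dict[str, float]:
    """Fraction of comments in each category that authors acted on."""
    totals: dict[str, int] = defaultdict(int)
    accepted: dict[str, int] = defaultdict(int)
    for comment in history:
        totals[comment.category] += 1
        if comment.accepted:
            accepted[comment.category] += 1
    return {cat: accepted[cat] / totals[cat] for cat in totals}


def categories_to_silence(
    history: list[BotComment],
    min_acceptance: float = 0.3,  # assumed cut-off, tune per team
    min_samples: int = 20,        # avoid judging a category on few comments
) -> list[str]:
    """Categories with enough samples and a low acceptance rate."""
    counts: dict[str, int] = defaultdict(int)
    for comment in history:
        counts[comment.category] += 1
    rates = acceptance_by_category(history)
    return sorted(
        cat for cat, rate in rates.items()
        if counts[cat] >= min_samples and rate < min_acceptance
    )


def should_post(category: str, confidence: float,
                silenced: set[str], min_confidence: float = 0.7) -> bool:
    """Post a comment only if its category is active and it is confident enough."""
    return category not in silenced and confidence >= min_confidence
```

The output of `categories_to_silence` is a candidate list for discussion with the team rather than something to apply blindly; a category with a low acceptance rate may still be worth keeping if its rare hits are expensive bugs.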

Looking ahead

What I see coming is a deepening of what already works: better summaries, more reliable oversight detection, integration with incident history so a comment might say “this pattern is similar to what caused last quarter’s incident.”

What I don’t expect is AI replacing human review in architectural decisions or judgment calls. That part remains human work, probably better assisted but not delegated.

My advice for anyone thinking of adopting these tools today is pragmatic: start with one, disable noisy categories aggressively, don’t block merges on automated comments, and revisit every six months whether the tool contributes more than it costs. That modest approach delivers a real improvement without wasting time on oversized promises.