Agent OS in production: real cases without the marketing

Updated: 2026-05-15

The Agent OS concept, a layer designed specifically to run AI agents rather than traditional applications, had been in the air since mid-2024 but stayed on slides for quite a while. During 2025 several platforms moved from announcement to real deployment, and by April 2026, with six full months of production in some cases, patterns are visible. This article sets vendor marketing aside and focuses on what has been deployed, what works and what doesn’t, and whether the concept has substance of its own or is just classical orchestration with a fresh coat of paint.

Key takeaways

- Deployments with a differentiated agent stack had a slower start but show more long-term stability.

- Deployments on Kubernetes with agent orchestration on top gained initial velocity but are hitting ceilings on observability and policy granularity.

- An Agent OS pays back its adoption cost once an organisation runs more than five active production agents.

- Model cost savings (30-50% over raw API) come from systematic prompt caching and complexity routing.

- Five traits separate a platform with substance from a repainted one.

What’s promised and what’s observed

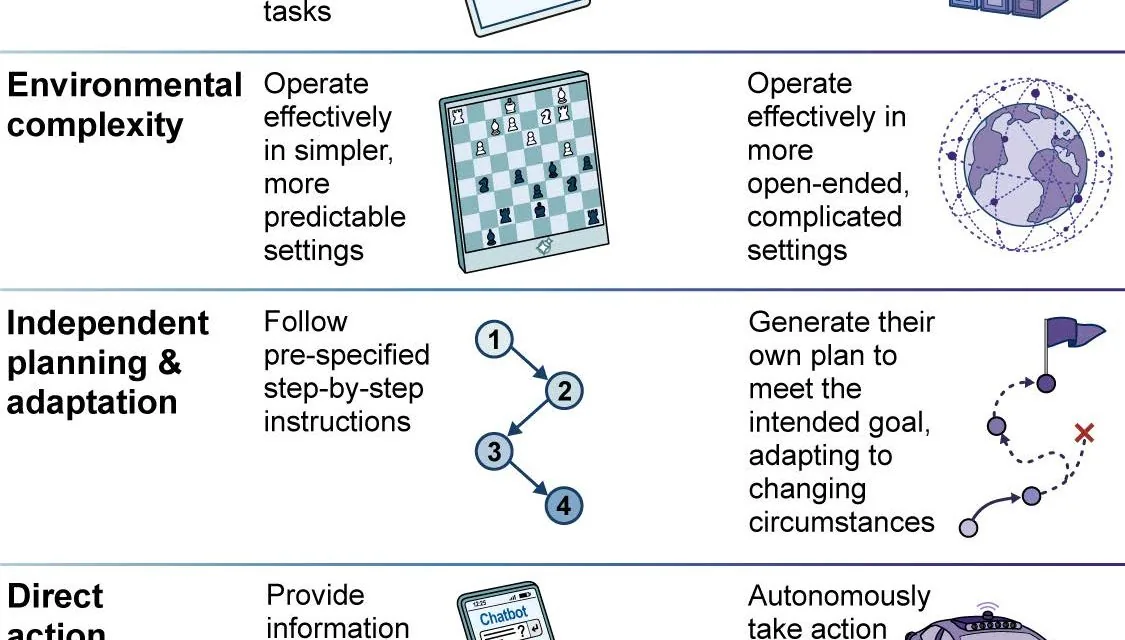

The Agent OS promise has several components:

- A specialised runtime where an agent is a lighter unit with persisted state that allows suspend, migrate, and resume.

- An identity and permission model designed for non-human entities with dynamic scope.

- A uniform tool layer where exposed capabilities are traceable, versionable, and auditable.

- Observability with native agent concepts: reasoning trace, tool calls, budgets, human-decision points.

Six months of production leave a clear first read. Deployments that leaned on a differentiated agent stack (proprietary runtime, dedicated event bus, new identity model) had a slower start but are showing more long-term stability. Deployments that reused Kubernetes with agent orchestration on top gained initial velocity but are hitting ceilings on observability and policy granularity that force them to rewrite layers.

Architectures That Survived

The most repeated architecture in successful cases separates three planes:

- Execution plane: where the agent runs. The orchestrator calls the LLM, maintains conversation state, controls the reasoning loop, and executes tools.

- Control plane: where agent inventory, policies, budgets, change approval, and identity management live.

- Data plane: where traces, results, and auditable events are persisted.

This separation has avoided the classical anti-pattern where the orchestrator accumulates control responsibilities and stops being able to scale.

An architectural pattern that has gained traction is the suspendable-process runtime. Instead of running each agent as a persistent container or process, the runtime serialises agent state between steps, stores it in fast storage, and rehydrates only when there is work to do. This allows thousands of nominally active agents with compute cost proportional to actual use, not to the number of existing agents.
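The suspend and rehydrate cycle can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration: the `AgentState` shape, the `SuspendableRuntime` class, and the in-memory dict standing in for fast storage do not come from any specific platform.

```python
import json
from dataclasses import dataclass, field, asdict


@dataclass
class AgentState:
    """Everything needed to resume an agent between steps (hypothetical shape)."""
    agent_id: str
    step: int = 0
    history: list = field(default_factory=list)


class SuspendableRuntime:
    """Keeps no live agent objects between steps; state lives only in `store`."""

    def __init__(self):
        # Stand-in for fast storage such as a key-value store.
        self.store = {}

    def register(self, agent_id):
        self._suspend(AgentState(agent_id))

    def _suspend(self, state):
        self.store[state.agent_id] = json.dumps(asdict(state))

    def _rehydrate(self, agent_id):
        return AgentState(**json.loads(self.store[agent_id]))

    def handle(self, agent_id, event):
        """Rehydrate, run exactly one step, then suspend again."""
        state = self._rehydrate(agent_id)
        state.step += 1
        state.history.append(event)
        self._suspend(state)
        return state.step


runtime = SuspendableRuntime()
for i in range(1000):
    runtime.register(f"agent-{i}")  # a thousand nominally active agents
runtime.handle("agent-7", "invoice received")
runtime.handle("agent-7", "ledger updated")
```

Only `agent-7` consumed any compute after registration; the other 999 agents exist purely as serialised records, which is the property the pattern relies on.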

Another consolidated pattern is the separation between external and internal capabilities. External capabilities (third-party APIs, email, corporate databases) are exposed via an MCP gateway that applies policies, approvals, and audit. Internal capabilities (agent memory, reasoning tools, specialised sub-agents) run inside the runtime without a gateway. This distinction has proven crucial because policies for actions with external side effects are not the same as those for internal reasoning.
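As a rough sketch of that split, the Python below routes calls by URI scheme: anything starting with `mcp://` goes through a gateway that checks policy, applies an approval threshold, and writes an audit record, while other capabilities run in-process. The `Gateway` class, the decision strings, and the tool names are hypothetical and do not reflect a real MCP implementation.

```python
from dataclasses import dataclass, field


@dataclass
class Gateway:
    """Hypothetical gateway: policy check, approval threshold, audit trail."""
    allowed: dict                    # agent_id -> set of permitted tool URIs
    approval_threshold_usd: float
    audit_log: list = field(default_factory=list)

    def call(self, agent_id, tool, value_usd=0.0):
        if tool not in self.allowed.get(agent_id, set()):
            decision = "denied"
        elif value_usd > self.approval_threshold_usd:
            decision = "pending_approval"
        else:
            decision = "executed"
        # Every external attempt is recorded, whatever the outcome.
        self.audit_log.append((agent_id, tool, value_usd, decision))
        return decision


def call_capability(gateway, agent_id, tool, internal_tools, value_usd=0.0):
    """Route by URI scheme: mcp:// goes through the gateway, the rest stays in-runtime."""
    if tool.startswith("mcp://"):
        return gateway.call(agent_id, tool, value_usd)
    return internal_tools[tool]()    # internal: no gateway, no approval step


gateway = Gateway(
    allowed={"reconciliation-finance": {"mcp://gateway/ledger-read",
                                        "mcp://gateway/email-draft"}},
    approval_threshold_usd=1000,
)
internal = {"memory.recall": lambda: "recalled"}
```

Calling `call_capability(gateway, "reconciliation-finance", "mcp://gateway/email-draft", internal, value_usd=2500)` returns `"pending_approval"` and leaves an audit entry, whereas `"memory.recall"` runs directly and leaves none.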

    # Minimal agent descriptor in a mature runtime, 2026
    apiVersion: agent.os/v1
    kind: Agent
    metadata:
      name: reconciliation-finance
      owner: finance-platform
    spec:
      model:
        provider: anthropic
        name: claude-opus-4-7
        pinned_revision: "2026-02-14"
      memory:
        type: persistent
        retention_days: 90
      tools:
        - mcp://gateway/sap-invoice-read
        - mcp://gateway/ledger-read
        - mcp://gateway/email-draft
      budgets:
        calls_per_hour: 200
        usd_per_day: 40
      approval:
        on_action_value_usd_gt: 1000

Where the Model Breaks

Burst scaling is the first real breaking point. A popular agent can receive a thousand concurrent requests, and if the runtime isn’t prepared to multiply instances while maintaining state coherence, problems appear: race conditions over shared memory, queues growing unbounded. More mature runtimes address this with explicit “single instance per session” or “instance per partition” primitives.
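A “single instance per session” primitive can be approximated in-process with one lock per session. The sketch below assumes a single Python process and uses `asyncio.Lock`; a distributed runtime would need a lease or partition assignment instead, and the `SessionSerializer` name is invented for this example.

```python
import asyncio
from collections import defaultdict


class SessionSerializer:
    """At most one in-flight step per session; different sessions run concurrently."""

    def __init__(self):
        self._locks = defaultdict(asyncio.Lock)

    async def run(self, session_id, step):
        async with self._locks[session_id]:
            return await step()


async def demo():
    serializer = SessionSerializer()
    memory = {"s1": 0, "s2": 0}      # shared per-session memory

    async def increment(session_id):
        current = memory[session_id]
        await asyncio.sleep(0)       # yield point: without the lock, updates get lost
        memory[session_id] = current + 1

    # A burst of a thousand concurrent requests spread over two sessions.
    burst = [serializer.run(sid, lambda sid=sid: increment(sid))
             for sid in ("s1", "s2") for _ in range(500)]
    await asyncio.gather(*burst)
    return memory


result = asyncio.run(demo())
```

With the lock in place each session ends with a count of 500. The sketch never evicts idle locks and does not bound the waiting queue, so a real runtime would add both.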

Tracing across delegation to sub-agents is the second point. If each sub-agent runs in its own context with its own identity, maintaining a full trace from original intent to executed action requires explicit context propagation. When this fails, incidents become much harder to explain; it is the same problem documented in AI incident postmortems.

Model evolution is the third point. LLM providers update models with some frequency and behaviour changes even when the model name stays the same. Runtimes that allow pinning exact model versions and that facilitate shadow testing when the provider publishes a new version protect agents from silent regressions.
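Shadow testing a new model revision can be sketched as follows: the pinned revision keeps serving, the candidate runs on the same inputs, and only the disagreements are collected. The two revision functions are toy stand-ins rather than real provider calls, and `shadow_compare` is a hypothetical helper.

```python
def shadow_compare(cases, pinned, candidate, same=lambda a, b: a == b):
    """Serve from `pinned`; run `candidate` in the shadow and collect disagreements."""
    served, diffs = [], []
    for case in cases:
        live = pinned(case)
        served.append(live)          # only the pinned output reaches users
        shadow = candidate(case)
        if not same(live, shadow):
            diffs.append({"input": case, "pinned": live, "candidate": shadow})
    rate = len(diffs) / len(cases) if cases else 0.0
    return served, diffs, rate


# Stand-ins for two revisions published under the same model name.
def revision_pinned(text):
    return "approve" if "matched" in text else "escalate"


def revision_new(text):
    return "approve" if "match" in text else "escalate"


cases = ["invoice matched ledger", "no match found", "amount missing"]
served, diffs, rate = shadow_compare(cases, revision_pinned, revision_new)
```

Here the candidate silently flips one of three decisions ("no match found" goes from escalate to approve), which is the kind of regression that pinning plus a shadow run surfaces before promotion. For free-text outputs, `same` would need a semantic comparison rather than equality.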

Real operating cost

The business question is whether an Agent OS pays its cost versus classical orchestration. Numbers from mature deployments are more nuanced than announcements:

- On model cost: savings appear when the runtime systematically applies prompt caching, complexity-based routing, and retries with small models before escalating. On platforms where this ships by default, cost reduction over an “agent running on raw API” is typically 30% to 50% without touching code. This connects to the complexity-routing architecture with Haiku 4.5.

- On infrastructure cost: a well-sized Agent OS is comparable to Kubernetes for equivalent load; not cheaper, not more expensive.

- Where savings are clear: in the human cost of operating. Having a living agent inventory, uniform traceability, dashboards you don’t have to build, and approvals with integrated flow saves weeks per production agent. On teams with ten or twenty active agents, this economy of scale justifies the initial investment.
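The routing part of the model-cost point can be sketched in a few lines: try a small model when the request looks simple, and escalate to a large one only if the request is complex or the small answer is rejected. The `Router` class, the word-count heuristic, and the lambda "models" are placeholders. Provider-side prompt caching is a separate mechanism and is not shown.

```python
class Router:
    """Hypothetical cost-aware router: small model first, large model on escalation."""

    def __init__(self, small, large, is_complex, accept):
        self.small, self.large = small, large
        self.is_complex = is_complex  # cheap heuristic on the prompt
        self.accept = accept          # validates a small-model answer
        self.calls = {"small": 0, "large": 0}

    def ask(self, prompt):
        if not self.is_complex(prompt):
            self.calls["small"] += 1
            answer = self.small(prompt)
            if self.accept(answer):
                return answer
            # Small model failed validation: fall through and escalate.
        self.calls["large"] += 1
        return self.large(prompt)


router = Router(
    small=lambda p: "" if "ambiguous" in p else f"small:{p}",
    large=lambda p: f"large:{p}",
    is_complex=lambda p: len(p.split()) > 8,
    accept=lambda a: bool(a),
)
answers = [router.ask(p) for p in (
    "classify invoice 17",
    "classify ambiguous invoice 18",
    "reconcile these twelve invoices against the ledger and explain every mismatch found",
)]
```

In this run the large model is called twice out of three requests: once after a rejected small-model attempt and once for the long prompt. Actual savings depend on how many requests the small model can absorb, which this toy heuristic says nothing about.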

What separates a useful platform

After six months observing, the traits that separate a platform with substance from a repainted one are concrete:

- Agent identity as a native primitive, not as a label on a service account.

- Observability where the logical agent trace, not the structured process log, is the main analysis object.

- Approval mechanisms integrated in the runtime language, not bolted on as external middleware.

- Per-agent cost management broken down by tokens, external actions, and compute time.

- Shadow-run capability, where a new agent runs in parallel with the human for a period and its behaviour is compared before real autonomy is authorised.

If an Agent OS platform does not ship these five pieces integrated, you’ll end up building them on top, and at that point the difference from Kubernetes plus agent libraries blurs.
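To make the per-agent cost trait concrete, here is a minimal ledger that breaks spend down by tokens, external actions, and compute time and compares the total with a daily budget. The unit prices in `Rates` are invented for the example, and `CostLedger` is a hypothetical name.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Rates:
    """Hypothetical unit prices; real ones depend on provider and platform."""
    usd_per_1k_tokens: float = 0.01
    usd_per_external_action: float = 0.002
    usd_per_compute_second: float = 0.0001


class CostLedger:
    def __init__(self, rates, usd_per_day):
        self.rates = rates
        self.usd_per_day = usd_per_day  # per-agent daily budget
        self.usage = defaultdict(lambda: {"tokens": 0, "actions": 0, "seconds": 0.0})

    def record(self, agent_id, tokens=0, actions=0, seconds=0.0):
        u = self.usage[agent_id]
        u["tokens"] += tokens
        u["actions"] += actions
        u["seconds"] += seconds

    def breakdown(self, agent_id):
        u, r = self.usage[agent_id], self.rates
        return {
            "tokens": u["tokens"] / 1000 * r.usd_per_1k_tokens,
            "actions": u["actions"] * r.usd_per_external_action,
            "compute": u["seconds"] * r.usd_per_compute_second,
        }

    def over_budget(self, agent_id):
        return sum(self.breakdown(agent_id).values()) > self.usd_per_day


ledger = CostLedger(Rates(), usd_per_day=40)
ledger.record("reconciliation-finance", tokens=2_000_000, actions=500, seconds=3600)
costs = ledger.breakdown("reconciliation-finance")
```

With these assumed rates the agent has spent about 20 USD on tokens, 1 USD on external actions, and 0.36 USD on compute, so `over_budget` returns `False` against a 40 USD daily limit.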

When It’s Worth It

Agent OS is more real in April 2026 than its detractors admit and less revolutionary than vendors sell. What has consolidated is not a new technology but a platform profile with five well-delimited responsibilities; serious implementations stand out because they treat these as platform primitives, not as add-ons.

When adoption pays off: when the organisation already has or will have more than five production agents and the cost of operating each starts to dominate. Below that threshold, reusing Kubernetes with agent libraries and judgement is more pragmatic. Above it, the integration economics flip.

The decision is a matter of scale, not ideology: below the threshold, dedicated infrastructure is ceremony; above it, operational savings and error reduction from uniformity comfortably justify the investment. The same scale logic applies to enterprise agent governance: rigour paid upfront is always cheaper than emergency remediation.