Cerebras-GPT: 7 Open-Source LLM Models Ready to Use

Actualizado: 2026-05-03

Cerebras-GPT demonstrated that specialised hardware can change the training equation for large language models. While the community debated whether open-source LLMs could match proprietary performance, Cerebras Systems published a complete family of seven models — from the smallest (111M parameters) to the largest (13B) — efficiently trained on their CS-2 processors.

Key takeaways

- Cerebras-GPT is a family of 7 open-source language models, available on Hugging Face and GitHub.

- Models range from 111 million to 13 billion parameters, all trained with the same scalable methodology.

- The Cerebras CS-2 hardware enables training large models without the model fragmentation conventional GPUs require.

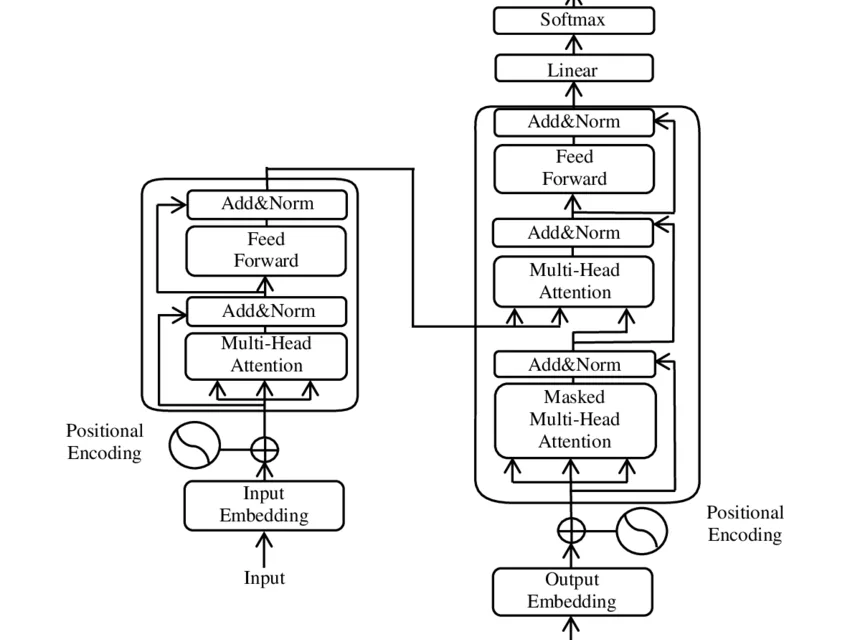

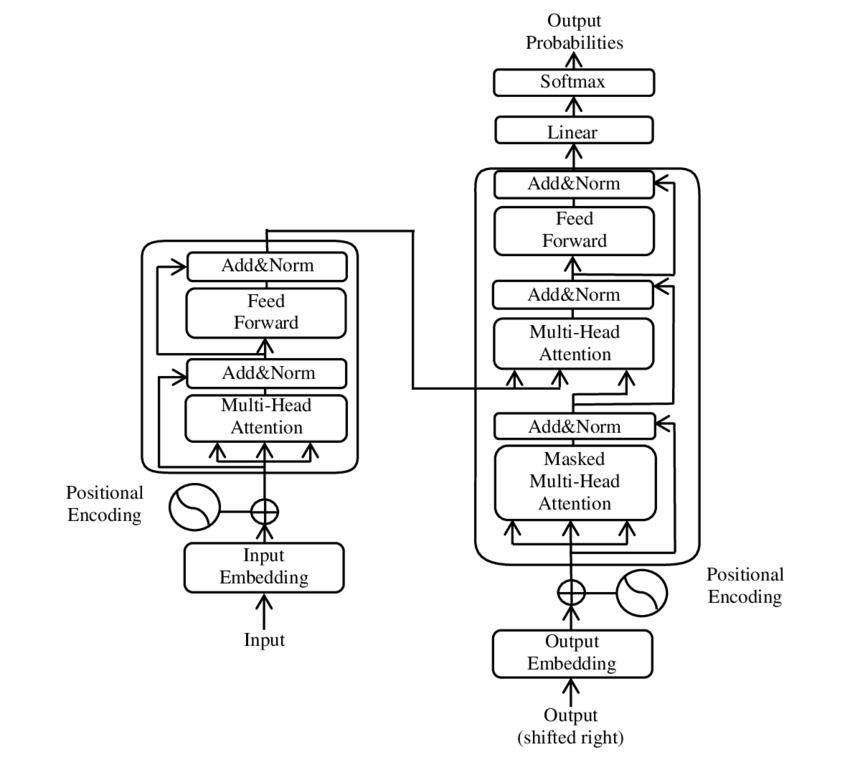

- The models follow the standard GPT-3 architecture and are compatible with the Hugging Face ecosystem.

- Known limitation at launch: trained only on English using the Pile dataset.

The 7 Cerebras-GPT Models

Cerebras Systems[1] published the following models on Hugging Face, ranging from 111M to 13B parameters. All models can be downloaded from:

The Hardware Behind It: CS-2 and the WSE-2 Chip

The Cerebras proposition is not just the model — it is the training infrastructure. The Wafer-Scale Engine 2 (WSE-2) chip is the largest AI processor ever manufactured on a single silicon die: 2.6 trillion transistors and 850,000 AI cores on a single wafer-sized chip.

This architecture solves a fundamental problem with GPU LLM training: the need to fragment the model across multiple devices (model parallelism) and manage inter-device communication, which becomes a bottleneck at scale.

Practical Uses of Cerebras-GPT

As open-source models with published weights, Cerebras-GPT can be used for:

- Supervised fine-tuning: adapting the base model to a specific domain with own datasets.

- NLP research: studying model behaviour at different scales using the same family.

- Local inference: running small models (111M-590M) on conventional hardware for applications with strict privacy or latency requirements.

- Scaling comparisons: simultaneous publication of 7 sizes with the same methodology facilitates scaling law study.

Known Limitations

- Language: all models are trained exclusively on English.

- Training dataset: the Pile includes inherent biases from internet text, books, and code.

- Alignment: base models are not instruction-aligned (RLHF). Production use as conversational assistants requires additional alignment fine-tuning.

- Context window: 2048 tokens limit applications requiring long document processing.

Conclusion

Cerebras-GPT contributed two valuable things to the AI ecosystem: quality open-source models and evidence that GPU-alternative hardware can change training efficiency. For teams needing controllable, auditable, and tuneable LLMs without depending on external APIs, this model family represents a real alternative. The future of LLMs is not only about making them larger but making them more efficient — and Cerebras demonstrated there is more than one path to achieve that.