Vector Database Comparison: Qdrant, Pinecone, and Weaviate

Updated: 2026-05-03

Vector databases went from an academic curiosity to a fundamental piece of generative AI infrastructure in under two years. Any application using embeddings — semantic search, RAG (Retrieval-Augmented Generation), similarity-based recommendation — needs to store and query them efficiently. Qdrant, Pinecone, and Weaviate are three of the most mature options on the market, with distinct approaches.

Key takeaways

- Vector databases store high-dimensional embeddings and retrieve them by semantic similarity, not exact equality.

- Qdrant is open-source, self-hostable, written in Rust and optimised for performance.

- Pinecone is a fully managed SaaS: minimal setup, but no self-hosting option.

- Weaviate is open-source with native vectorisation modules: it can turn raw text into embeddings at import time, with no separate embedding step in your application.

- The choice depends primarily on three factors: deployment control, cost at scale, and need for native semantic modules.

What Makes Vector Databases Special

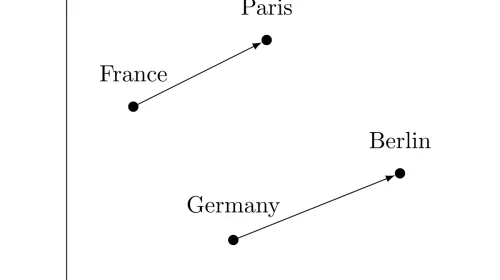

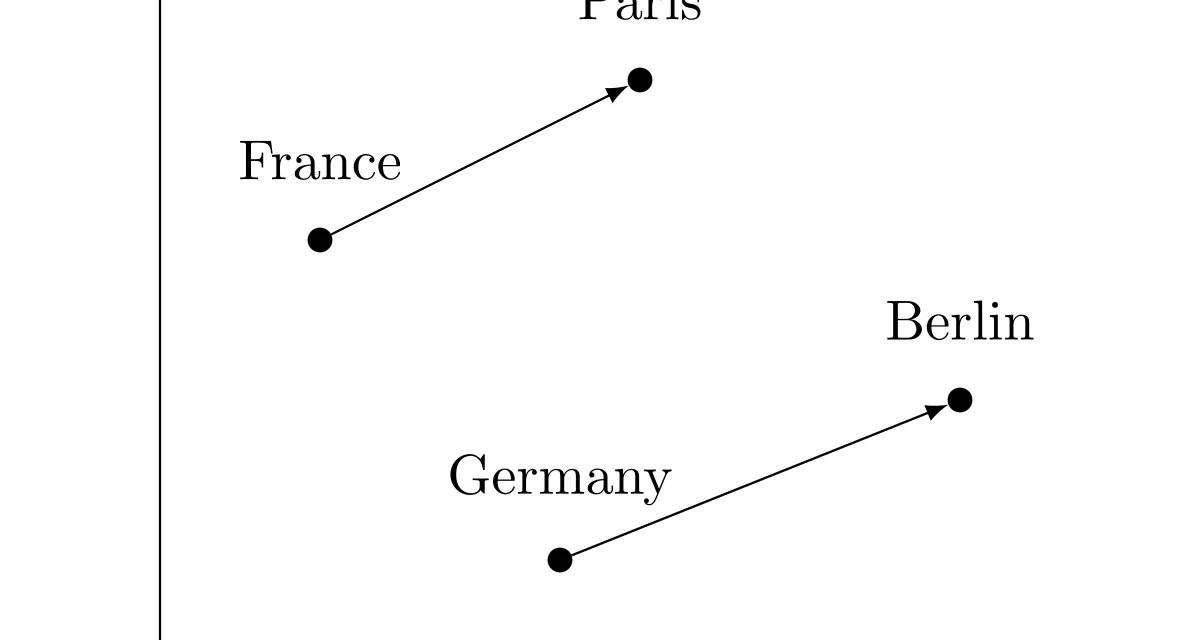

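Instead of testing for exact equality, a vector database returns the stored vectors closest to a query vector under a distance metric such as cosine similarity, dot product, or Euclidean distance. The algorithm that makes this efficient at scale is HNSW (Hierarchical Navigable Small World): a graph index that enables approximate nearest neighbour (ANN) search with a tunable trade-off between recall and speed, returning results in milliseconds even for collections of millions of vectors.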
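
To make the idea concrete, here is a minimal sketch of exact nearest-neighbour search by brute force: it scores every stored vector with cosine similarity and keeps the top k. This full scan is the result that ANN indexes such as HNSW approximate while skipping most of the comparisons. The function names and the three-dimensional toy vectors are illustrative assumptions, not any database's API.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def exact_knn(query: list[float], vectors: dict[str, list[float]], k: int) -> list[tuple[str, float]]:
    """Brute-force k-NN: score every stored vector, keep the k most similar.

    Cost is one similarity computation per stored vector (O(N * d) per query);
    HNSW avoids this full scan by walking a proximity graph instead.
    """
    scored = [(vid, cosine_similarity(query, vec)) for vid, vec in vectors.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:k]


# Toy 3-dimensional "embeddings" (real ones have hundreds or thousands of dimensions).
store = {
    "cat": [0.9, 0.1, 0.0],
    "dog": [0.8, 0.2, 0.1],
    "car": [0.0, 0.1, 0.9],
}
print(exact_knn([1.0, 0.15, 0.0], store, k=2))
```

Exact search grows linearly with the collection; an ANN index accepts a small loss of recall in exchange for visiting only a small fraction of the stored vectors.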

The typical usage cycle:

- Generate embeddings for documents, images, or entities using a model (OpenAI text-embedding-ada-002, Cohere, local models).

- Store the embeddings, together with their metadata, in the vector database.

- At query time: generate the embedding for the query with the same model used for the documents, and find the k nearest vectors.

- Filter and rerank results combining semantic similarity with metadata filters.
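
The cycle above can be sketched end to end in plain Python. This is a minimal offline illustration under loud assumptions: `toy_embed` is a hypothetical hashed bag-of-words function standing in for a real embedding model (it captures word overlap, not meaning), and the in-memory `store` list stands in for the database.

```python
import math
import zlib
from typing import Optional

DIM = 64  # toy size; real embedding models produce hundreds or thousands of dimensions


def toy_embed(text: str) -> list[float]:
    """HYPOTHETICAL stand-in for an embedding model: hashed bag of words, L2-normalised.

    It captures word overlap only, not meaning; it exists so the sketch runs offline.
    """
    vec = [0.0] * DIM
    for word in text.lower().split():
        vec[zlib.crc32(word.encode("utf-8")) % DIM] += 1.0
    norm = math.sqrt(sum(x * x for x in vec)) or 1.0
    return [x / norm for x in vec]


def dot(a: list[float], b: list[float]) -> float:
    # Both vectors are L2-normalised, so the dot product equals cosine similarity.
    return sum(x * y for x, y in zip(a, b))


# Steps 1-2: embed each document and store the vector together with its metadata.
documents = [
    {"id": 1, "text": "qdrant is written in rust", "source": "docs"},
    {"id": 2, "text": "pinecone is a managed vector service", "source": "docs"},
    {"id": 3, "text": "weaviate has native vectorisation modules", "source": "blog"},
]
store = [
    {"id": d["id"], "vector": toy_embed(d["text"]), "meta": {"source": d["source"]}}
    for d in documents
]


def search(query: str, k: int, source: Optional[str] = None) -> list[tuple[int, float]]:
    # Step 3: embed the query with the same model used for the documents.
    q = toy_embed(query)
    # Step 4: keep records matching the metadata filter, then rank them by similarity.
    candidates = [r for r in store if source is None or r["meta"]["source"] == source]
    scored = [(r["id"], dot(q, r["vector"])) for r in candidates]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:k]


print(search("which database is written in rust", k=1, source="docs"))
```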

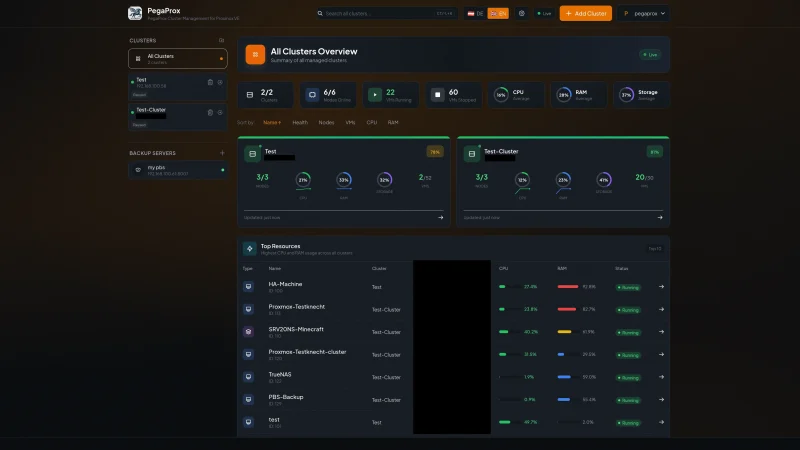

Qdrant: Open-Source, Rust Performance

Qdrant[1] is written in Rust, which gives it memory efficiency and search speed that are hard to match in Python implementations. It is open-source (Apache 2.0) and can be self-hosted or used as a managed service through Qdrant Cloud.

Standout features:

- Combined filter + search: Qdrant applies metadata filters during the vector search itself rather than post-filtering the results.

- Rich payloads: each vector can carry a structured JSON payload whose fields can be indexed.

- Vector quantisation: scalar, product, and binary quantisation reduce the memory footprint; scalar (int8) quantisation cuts raw vector storage by 4x, and binary quantisation (one bit per float32 component) by 32x.

- Multi-vector collections: store several vector representations of the same object.
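
The quantisation ratios are plain arithmetic on raw vector storage. The sketch below computes them for an assumed collection of one million 1536-dimensional float32 vectors, and shows a generic min-max scalar quantiser plus sign-based binary quantisation. It illustrates the idea only; Qdrant's own quantisation differs in its details and is configured through its API rather than implemented like this.

```python
def raw_vector_bytes(num_vectors: int, dim: int, bits_per_component: int) -> int:
    """Storage for the vectors alone; the index graph and payloads are extra."""
    return num_vectors * dim * bits_per_component // 8


def scalar_quantize(vec: list[float]) -> tuple[list[int], float, float]:
    """Generic min-max scalar quantisation of one vector to 8-bit codes in [0, 255]."""
    lo, hi = min(vec), max(vec)
    scale = (hi - lo) / 255 or 1.0  # guard against constant vectors
    codes = [round((x - lo) / scale) for x in vec]
    return codes, lo, scale


def dequantize(codes: list[int], lo: float, scale: float) -> list[float]:
    # Each reconstructed value lies within scale / 2 of the original.
    return [lo + c * scale for c in codes]


def binary_quantize(vec: list[float]) -> list[int]:
    """Keep only the sign of each component: 1 bit instead of 32."""
    return [1 if x > 0 else 0 for x in vec]


n, d = 1_000_000, 1536  # ASSUMED collection size and embedding dimension
full = raw_vector_bytes(n, d, 32)
print(full, full // raw_vector_bytes(n, d, 8), full // raw_vector_bytes(n, d, 1))
# -> 6144000000 4 32
```

The trade-off is precision: quantised vectors give approximate distances, which is why quantised search is commonly paired with rescoring against the original vectors.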

Choose Qdrant when you need full control over deployment and data, when complex filters must be combined with vector search, and when the team can operate container infrastructure.
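
Why filtering during the search matters can be shown with a toy example. The sketch below assumes similarity scores have already been computed (a hypothetical `points` dict of score and payload) and compares post-filtering, which takes the k best results and then drops non-matching ones, with filtering during the search, where only matching points compete for the k slots. It uses a linear scan for clarity; Qdrant does the filtering inside its index instead.

```python
from typing import Callable

# HYPOTHETICAL data: point id -> (similarity to the current query, payload).
points: dict[int, tuple[float, dict]] = {
    1: (0.95, {"lang": "en"}),
    2: (0.93, {"lang": "en"}),
    3: (0.90, {"lang": "en"}),
    4: (0.70, {"lang": "es"}),
    5: (0.65, {"lang": "es"}),
}

Predicate = Callable[[dict], bool]


def _top_k(candidates: list[tuple[int, float]], k: int) -> list[tuple[int, float]]:
    return sorted(candidates, key=lambda item: item[1], reverse=True)[:k]


def post_filter_search(pts: dict[int, tuple[float, dict]], predicate: Predicate, k: int):
    # Take the k most similar points first, then discard those failing the filter.
    # Matching points ranked below position k are never seen, so fewer than k may remain.
    best = _top_k([(pid, score) for pid, (score, _) in pts.items()], k)
    return [(pid, score) for pid, score in best if predicate(pts[pid][1])]


def filtered_search(pts: dict[int, tuple[float, dict]], predicate: Predicate, k: int):
    # Apply the filter during the search: only matching points compete for the k slots.
    matching = [(pid, score) for pid, (score, payload) in pts.items() if predicate(payload)]
    return _top_k(matching, k)


def is_spanish(payload: dict) -> bool:
    return payload["lang"] == "es"


print(post_filter_search(points, is_spanish, k=2))  # -> []
print(filtered_search(points, is_spanish, k=2))  # -> [(4, 0.7), (5, 0.65)]
```

Post-filtering returns nothing here because the two most similar points are both English; the more selective the filter, the worse this gets.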

Pinecone: Managed SaaS, Zero Ops

Pinecone[2] is the fully managed option: no infrastructure to operate, no index parameters to tune, no servers to maintain.

Standout features:

- Serverless indexes that scale automatically, plus pod-based indexes with dedicated resources.

- Namespaces for multi-tenant data isolation.

- Hybrid search combining dense embeddings with sparse BM25-style vectors.

- Native integrations with LangChain, LlamaIndex, and Haystack.
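
In Pinecone's single-index hybrid setup, each record holds a dense and a sparse vector and is scored with a dot product. A common way to balance the two parts is a convex combination: scale the query's dense values by alpha and its sparse values by 1 - alpha before querying. The sketch below reproduces that weighting and the resulting score locally; the helper names and the dict-based sparse format are assumptions, not the Pinecone client API.

```python
SparseVector = dict[int, float]  # feature index -> weight (e.g. BM25-style term weights)


def weight_query(dense: list[float], sparse: SparseVector, alpha: float) -> tuple[list[float], SparseVector]:
    """Scale dense values by alpha and sparse values by (1 - alpha)."""
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be in [0, 1]")
    return [v * alpha for v in dense], {i: w * (1 - alpha) for i, w in sparse.items()}


def hybrid_dot(q_dense: list[float], q_sparse: SparseVector,
               d_dense: list[float], d_sparse: SparseVector) -> float:
    """Dot product over the dense part plus the sparse indices both vectors share."""
    dense_part = sum(a * b for a, b in zip(q_dense, d_dense))
    sparse_part = sum(w * d_sparse.get(i, 0.0) for i, w in q_sparse.items())
    return dense_part + sparse_part


# HYPOTHETICAL query and record.
query_dense, query_sparse = [1.0, 0.0], {7: 2.0}
doc_dense, doc_sparse = [0.5, 0.5], {7: 1.0, 9: 3.0}

for alpha in (1.0, 0.5, 0.0):
    qd, qs = weight_query(query_dense, query_sparse, alpha)
    print(alpha, hybrid_dot(qd, qs, doc_dense, doc_sparse))
```

With alpha = 1 only the dense similarity counts (0.5 here), with alpha = 0 only the keyword overlap counts (2.0), and values in between blend the two linearly.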

Choose Pinecone when the team lacks the capacity or the desire to operate its own infrastructure, for prototypes where speed to market is the priority, and for variable workloads where automatic scaling has real value.

Main limitation: no self-hosting option.

Weaviate: Native Embeddings and Knowledge Graphs

Weaviate[3] takes a different approach: rather than being just a vector store, it is an object-oriented database with native vector capabilities.

Standout features:

- Native vectorisation modules (text2vec-openai, text2vec-cohere, text2vec-transformers).

- Hybrid search (BM25 + vectors) with a controllable alpha parameter.

- Knowledge graph with explicit cross-object relationships.

- Native RAG via generative search modules.
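
Weaviate's alpha parameter blends keyword and vector relevance. The sketch below shows a min-max normalised weighted fusion, similar in spirit to Weaviate's relative-score fusion: each result list's scores are rescaled to [0, 1], then combined as alpha * vector + (1 - alpha) * keyword, so alpha = 1 is pure vector search and alpha = 0 is pure BM25. The input scores are made up, and this is not Weaviate's implementation.

```python
def min_max(scores: dict[str, float]) -> dict[str, float]:
    """Rescale one result list so its best score is 1.0 and its worst is 0.0."""
    if not scores:
        return {}
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:
        return {doc_id: 1.0 for doc_id in scores}
    return {doc_id: (s - lo) / (hi - lo) for doc_id, s in scores.items()}


def hybrid_fuse(vector_scores: dict[str, float], keyword_scores: dict[str, float],
                alpha: float) -> list[tuple[str, float]]:
    """alpha=1.0 gives pure vector ranking, alpha=0.0 pure keyword (BM25) ranking."""
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be in [0, 1]")
    v, b = min_max(vector_scores), min_max(keyword_scores)
    # A document missing from one result list contributes 0 for that list.
    fused = {doc_id: alpha * v.get(doc_id, 0.0) + (1 - alpha) * b.get(doc_id, 0.0)
             for doc_id in v.keys() | b.keys()}
    return sorted(fused.items(), key=lambda item: item[1], reverse=True)


# HYPOTHETICAL scores for one query: cosine similarities and raw BM25 scores.
vector_hits = {"a": 0.90, "b": 0.70, "c": 0.50}
bm25_hits = {"b": 12.0, "c": 3.0, "d": 6.0}
print(hybrid_fuse(vector_hits, bm25_hits, alpha=0.5))
```

Normalising first matters because raw BM25 scores and cosine similarities live on different scales; without it, the keyword side would dominate any blend.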

Choose Weaviate when the semantic schema and the relationships between objects matter, for RAG use cases where search and generation are tightly integrated, and for teams that prefer a unified pipeline.

RAG Context: The Primary Use Case

The most common application today is RAG: documents are split into chunks, the chunks are embedded and indexed in a vector database, and for each query only the most relevant chunks are retrieved. The LLM receives the retrieved fragments as context and generates a response grounded in them.
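
The indexing and prompting halves of RAG can be sketched without any database. The example below assumes fixed-size word windows with overlap as the chunking strategy (one common choice among many) and a hypothetical prompt template; the retrieval step in between would be the vector search, so here the retrieved chunks are simply passed in.

```python
def chunk_words(text: str, size: int, overlap: int) -> list[str]:
    """Split text into windows of `size` words, each sharing `overlap` words with the previous one."""
    if not 0 <= overlap < size:
        raise ValueError("need 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):  # last window already reaches the end
            break
    return chunks


def build_prompt(question: str, retrieved: list[str]) -> str:
    """HYPOTHETICAL template: numbered context chunks first, then the question."""
    context = "\n\n".join(f"[{i + 1}] {chunk}" for i, chunk in enumerate(retrieved))
    return (
        "Answer using only the context below and cite chunk numbers.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )


chunks = chunk_words("Qdrant is written in Rust and supports payload filters during search", size=6, overlap=2)
print(chunks)
print(build_prompt("Which database is written in Rust?", chunks[:1]))
```

The overlap keeps a sentence that straddles a chunk boundary retrievable from at least one chunk, at the cost of indexing some words twice.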

Conclusion

Qdrant, Pinecone, and Weaviate solve the same core problem, efficient retrieval of vectors by similarity, with distinct product philosophies. Qdrant is the option when control and performance are the priority. Pinecone is the option when speed to market and freedom from operations matter more than control. Weaviate wins when an integrated semantic pipeline (embedding, search, and generation) is worth more than the flexibility to choose each component separately.