Inference routers: choosing a model based on the request

Updated: 2026-05-03

An inference router decides, for each request arriving at your application, which specific model to send it to. Throughout 2023 and 2024 the dominant architecture was an application pointing to a single model, and that simplicity felt reassuring. Serious teams have since realized a single model is not optimal for cost, latency or fit to request type, and inference routers have become a standard piece of production deployments.

Key takeaways

- A well-designed router cuts total token cost by 30–70 % while preserving perceived quality.

- Requests are not homogeneous: deciding which go to the cheap model and which to the large one is the core of the problem.

- Four patterns cover 90 % of cases: length, task type, auxiliary classifier, and learned routing.

- Without observability a router can make bad decisions for months without anyone noticing.

- The added complexity only pays off when volume is significant and requests are heterogeneous.

The problem they solve

Language-model requests are not homogeneous. A simple classification, a short entity extraction or a known support query can be resolved perfectly well by a cheap, fast model. A long code analysis, exhaustive technical writing or multi-step reasoning benefits from a large model. Sending everything to the big one is expensive; sending everything to the small one hurts quality where it matters. The middle ground is routing.
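To make the trade-off concrete, here is a minimal sketch of the blended cost when a share of the traffic goes to the small model. The prices are hypothetical (the small model is assumed to cost one tenth of the large one per request), and the function names are illustrative only.

```python
def blended_cost(share_small: float, cost_small: float, cost_large: float) -> float:
    """Average cost per request when `share_small` of traffic goes to the small model."""
    if not 0.0 <= share_small <= 1.0:
        raise ValueError("share_small must be between 0 and 1")
    return share_small * cost_small + (1.0 - share_small) * cost_large


def savings_vs_large_only(share_small: float, cost_small: float, cost_large: float) -> float:
    """Fraction of cost saved compared with sending every request to the large model."""
    return 1.0 - blended_cost(share_small, cost_small, cost_large) / cost_large


# Hypothetical numbers: 60 % of requests are simple, small model costs 0.1 units, large 1.0.
print(round(savings_vs_large_only(0.6, cost_small=0.1, cost_large=1.0), 2))  # 0.54
```

Under these assumed numbers the saving depends almost entirely on how much traffic can safely go to the small model, which is why the routing decision itself is the core of the problem.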

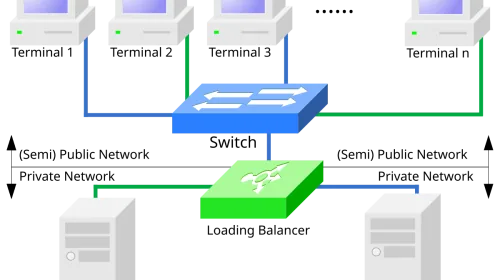

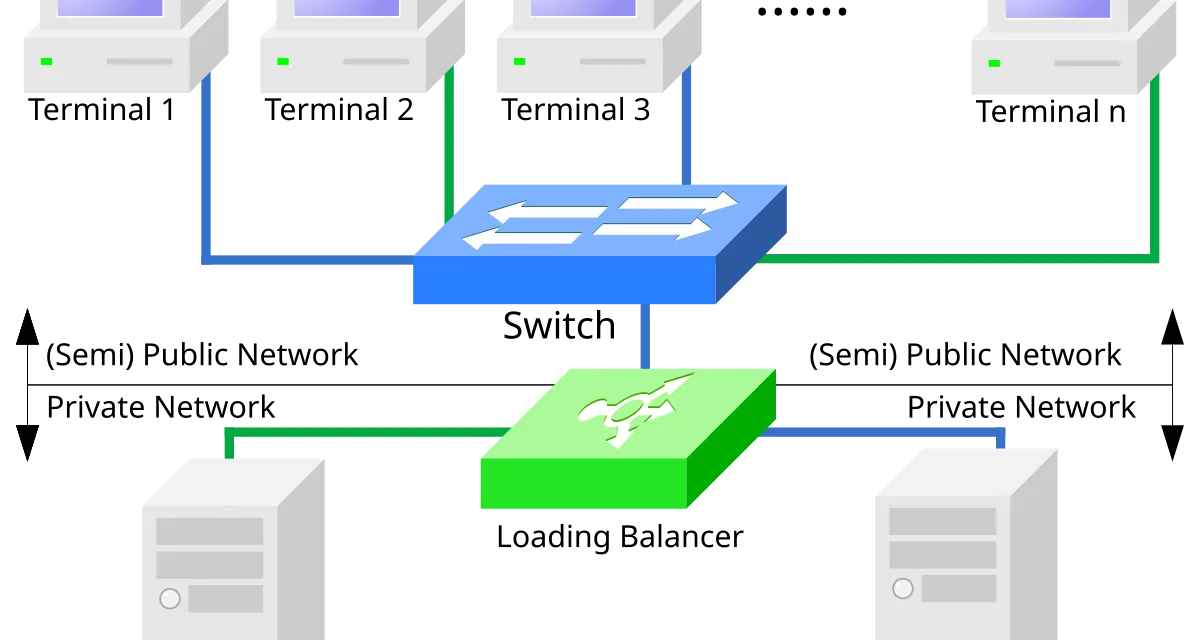

The typical router receives the request, evaluates it, decides which model should handle it, forwards the request, and returns the answer to the client. That decision can use simple heuristics, a trained classifier, a small model acting as triage, or a combination of all three.

Decision patterns

By length. Short request, small model; long request, big model. This heuristic captures a lot of value with minimal complexity because simple tasks tend to be short and complex ones tend to be long. It’s the natural starting point.

By task type. The application knows which function is invoked: summarization, extraction, creative generation, reasoning. Each function has a preconfigured optimal model. When the structure allows it, the decision is deterministic and auditable.
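Routing by task type can be as small as a lookup table. The sketch below assumes the application passes a task name with each call; the task names, the mapping, and the fallback choice are illustrative assumptions, and the model identifiers are the ones used in this article's example.

```python
# Hypothetical mapping from application function to model.
MODEL_BY_TASK = {
    "extraction": "haiku-3-5",
    "summarization": "haiku-3-5",
    "creative_generation": "sonnet-4-5",
    "reasoning": "sonnet-4-5",
}

# Assumption: unknown tasks fall back to the large model to protect quality.
DEFAULT_MODEL = "sonnet-4-5"


def route_by_task(task: str) -> str:
    """Return the preconfigured model for a task, or the default if the task is unknown."""
    return MODEL_BY_TASK.get(task, DEFAULT_MODEL)


print(route_by_task("extraction"))   # haiku-3-5
print(route_by_task("translation"))  # sonnet-4-5 (not in the table, falls back)
```

Because the table is plain data, every routing decision can be audited by reading the configuration.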

Auxiliary classifier. A very cheap small model decides whether the request is simple or complex and routes to the corresponding executor. If it’s right 90 % of the time, the savings more than offset the few hundred milliseconds of added latency.
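A triage step might look like the sketch below. The classifier is passed in as a plain callable so that any small model can sit behind it; `fake_classifier` is a hypothetical stand-in for that call, and falling back to the large model when triage fails is an assumption, not a rule from any particular library.

```python
from typing import Callable

# A classifier takes the prompt and returns the label "simple" or "complex".
Classifier = Callable[[str], str]


def route_with_triage(prompt: str, classify: Classifier) -> str:
    """Ask a cheap classifier for a label and pick the executor model from it."""
    try:
        label = classify(prompt).strip().lower()
    except Exception:
        # Assumption: if triage itself fails, prefer quality over cost.
        label = "complex"
    return "haiku-3-5" if label == "simple" else "sonnet-4-5"


def fake_classifier(prompt: str) -> str:
    """Stand-in for a call to a small model; here just a word-count rule."""
    return "simple" if len(prompt.split()) < 20 else "complex"


print(route_with_triage("What is the capital of France?", fake_classifier))  # haiku-3-5
```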

Learned routing. You collect production logs, label which requests the cheap model handled well and which needed the large one, and train a specialized classifier on those labels. This cuts the most cost but demands the most operational effort: you have to retrain periodically and watch for distribution drift.
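As a deliberately tiny illustration of learning from labeled logs, the sketch below fits a single token threshold (a one-feature decision stump) that misroutes the fewest logged requests. A real learned router would use more features and a proper classifier; the `LoggedRequest` structure and the sample data are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class LoggedRequest:
    tokens: int         # estimated size of the request
    needed_large: bool  # label: the cheap model was not good enough


def fit_token_threshold(logs: list[LoggedRequest]) -> int:
    """Fit the rule "use the large model when tokens > threshold" to labeled logs."""
    if not logs:
        raise ValueError("need at least one labeled request")
    sizes = sorted({r.tokens for r in logs})
    # Candidate thresholds: just below the smallest size, then each observed size.
    candidates = [sizes[0] - 1] + sizes
    best_threshold, best_errors = candidates[0], len(logs) + 1
    for threshold in candidates:
        errors = sum((r.tokens > threshold) != r.needed_large for r in logs)
        if errors < best_errors:
            best_threshold, best_errors = threshold, errors
    return best_threshold


logs = [
    LoggedRequest(50, False),
    LoggedRequest(120, False),
    LoggedRequest(300, False),
    LoggedRequest(900, True),
    LoggedRequest(1500, True),
]
print(fit_token_threshold(logs))  # 300
```

Refitting this on fresh logs at regular intervals is the smallest version of the retraining loop described above.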

Providers and options

- LiteLLM[1] has established itself as the reference abstraction layer: a local proxy that speaks to most providers through a uniform interface, allowing model switching without touching application code.

- OpenRouter[2] offers routing as a service with optimization policies for cost, latency or balance.

- Portkey[3] and Helicone[4] focus on observability and governance: traces, aggregated metrics, and budget control.

For teams wanting full control, building on LiteLLM or directly on official SDKs is reasonable. The core logic isn’t complex; the complexity lives in observability, controlled failure, and retry policy.

Minimal working example

    def estimate_tokens(text: str) -> int:
        # Rough placeholder (assumption): about four characters per token.
        return len(text) // 4


    def route_request(prompt: str, history: list[str]) -> str:
        # Size of the prompt plus the last six messages of the conversation.
        tokens = estimate_tokens(prompt) + sum(
            estimate_tokens(m) for m in history[-6:]
        )
        # A code fence in the prompt or in recent history suggests a code task.
        has_code = "```" in prompt or any("```" in m for m in history[-3:])
        complexity_keywords = ["analyze", "explain why", "compare", "design"]
        is_complex = any(k in prompt.lower() for k in complexity_keywords)
        if tokens > 2000 or has_code or is_complex:
            return "sonnet-4-5"
        return "haiku-3-5"

This twenty-line router captures useful patterns: length, code presence, and complexity keywords. In real applications it can cut cost by around 40 % without additional sophistication. More complex variants are only worth it when the marginal savings justify the work.

Common traps

- Testing only with synthetic data. Real requests have a different distribution: more diversity, more atypical cases, more unexpected formats. A router tuned on a hundred examples can behave very differently against ten thousand real requests.

- Not measuring quality degradation. The router may be saving money at the cost of worse experience without anyone noticing. Automated evaluations on production samples are the only reliable way to detect this.

- No failure escalation. When the cheap model returns something inadequate there must be an escalation flow to the large one. Correct pattern: try cheap model → if criteria met use it → if not, retry with large model.

- Ignoring conversation context. In a chat, if the user has asked six complex questions, the seventh is probably complex too even if it looks brief.
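The escalation flow from the list above (try the cheap model, accept the answer if it meets the criteria, otherwise retry with the large model) can be sketched as follows. `call_model` and `is_acceptable` are hypothetical callables supplied by the application; treating an error from the cheap model as a reason to escalate is an assumption.

```python
from typing import Callable

ModelCall = Callable[[str, str], str]  # (model, prompt) -> answer
Check = Callable[[str], bool]          # answer -> meets the acceptance criteria?


def answer_with_escalation(
    prompt: str,
    call_model: ModelCall,
    is_acceptable: Check,
    cheap: str = "haiku-3-5",
    large: str = "sonnet-4-5",
) -> tuple[str, str, bool]:
    """Return (answer, model_used, escalated)."""
    try:
        answer = call_model(cheap, prompt)
        if is_acceptable(answer):
            return answer, cheap, False
    except Exception:
        # Assumption: a failure of the cheap model is handled like an inadequate answer.
        pass
    return call_model(large, prompt), large, True
```

Returning the `escalated` flag alongside the answer makes it easy to log how often the cheap model falls short.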

Minimal observability

A blind router is dangerous. For each request you must log which model was chosen, by what rule, what latency it took, what cost it incurred and what outcome it produced. Without that telemetry the router can make bad decisions for months without anyone noticing.
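One possible shape for that per-request record is sketched below, serialized as one JSON line per decision. The field names and units are assumptions chosen for illustration, not a standard schema.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class RoutingRecord:
    request_id: str
    model: str         # which model was chosen
    rule: str          # which rule made the decision, e.g. "tokens>2000"
    latency_ms: float  # end-to-end latency of the model call
    cost_usd: float    # cost attributed to this request
    outcome: str       # e.g. "ok", "escalated", "error"


def to_log_line(record: RoutingRecord) -> str:
    """Serialize a routing decision as a single JSON line."""
    return json.dumps(asdict(record), sort_keys=True)


record = RoutingRecord("req-1", "haiku-3-5", "default", 420.0, 0.0004, "ok")
print(to_log_line(record))
```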

It’s also worth exposing aggregate metrics to the team: model distribution, average cost per request, proportion of escalations to the large model, and latency distribution. A simple alert on the escalation proportion catches drift before it becomes a perceived problem. This connects directly with what a good LLM observability strategy covers and with the tools reviewed in the 2026 observability stack.
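A minimal sketch of those aggregates and of the escalation alert, computed over a batch of logged decisions. The record keys (`model`, `cost`, `escalated`, `latency_ms`) and the 20 % alert threshold are assumptions to adapt to your own telemetry.

```python
from collections import Counter
from statistics import mean, median


def summarize(records: list[dict]) -> dict:
    """Aggregate routing records into team-level metrics."""
    if not records:
        raise ValueError("no records to summarize")
    total = len(records)
    counts = Counter(r["model"] for r in records)
    return {
        "model_share": {model: n / total for model, n in counts.items()},
        "avg_cost": mean(r["cost"] for r in records),
        "escalation_rate": sum(1 for r in records if r["escalated"]) / total,
        "median_latency_ms": median(r["latency_ms"] for r in records),
    }


def escalation_alert(summary: dict, threshold: float = 0.2) -> bool:
    """True when the share of escalated requests exceeds the threshold."""
    return summary["escalation_rate"] > threshold


records = [
    {"model": "haiku-3-5", "cost": 0.001, "escalated": False, "latency_ms": 300},
    {"model": "haiku-3-5", "cost": 0.001, "escalated": False, "latency_ms": 350},
    {"model": "sonnet-4-5", "cost": 0.010, "escalated": True, "latency_ms": 1200},
    {"model": "sonnet-4-5", "cost": 0.010, "escalated": False, "latency_ms": 900},
]
summary = summarize(records)
print(summary["escalation_rate"], escalation_alert(summary))  # 0.25 True
```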

When it pays off

A router makes no sense if the application uses a single model with low volume. It makes sense when:

- Request volume is meaningful.

- Requests are heterogeneous in complexity.

- The team can invest time in measuring and tuning.

In that combination savings are consistent and degradation risk is managed with proper observability. The operational recommendation is to start simple, with clear heuristics from day one, iterate with real data, and only move to learned approaches when heuristics have been exhausted. A fifty-line router with good telemetry almost always beats a trained classifier without metrics, because the first adapts and the second rusts. This same logic applies when combining the router with LLM caches to maximize savings.