Community MCP servers: which ones are worth it

Updated: 2026-05-03

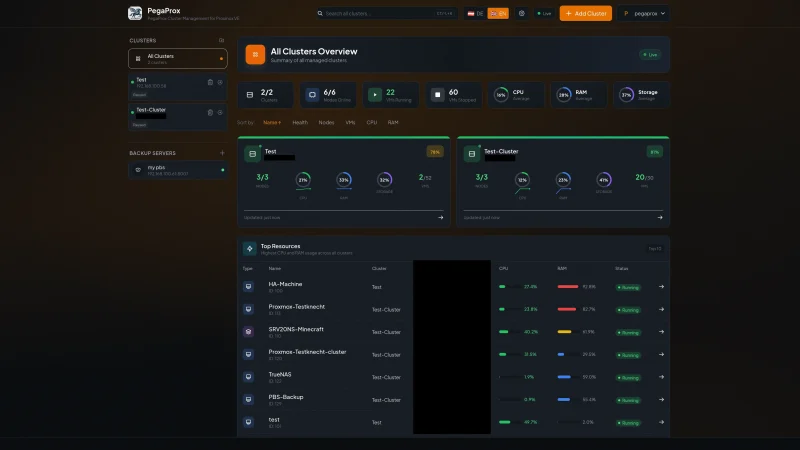

Six months after Model Context Protocol (MCP) became the common protocol for connecting agents to external tools, the community server catalog has comfortably passed a thousand entries. The problem is no longer finding an MCP server for a specific task; it’s deciding which of the six on the first page of GitHub deserves trust. This post covers the servers I use daily in my Claude Desktop and Claude Code workflow, the ones I installed and then removed, and the criteria I apply to separate the wheat from the chaff.

Key takeaways

- The five official Anthropic servers I use daily are: filesystem, git, Postgres (read-only), fetch, and memory.

- Slack, email, and broadly-scoped SaaS API servers present attack surfaces too large for the real benefit they bring.

- The tool-chain risk (a malicious server directing the agent to invoke another legitimate server with data-leaking arguments) remains unresolved in current MCP clients.

- Five criteria before installing any new server: provenance, scope, traceability, revocability, and update model.

- Don’t mix official servers with community servers you don’t know well in the same session.

What has changed since November

When Anthropic announced MCP in late 2024, the protocol had a dozen official servers and a handful of experiments. Six months later the adoption question is answered: the catalog grows quickly, clients exist beyond Claude Desktop, and enterprise integrations already appear that assume MCP as the default mechanism.

The part that hasn’t aged well is catalog governance. There’s no central registry in the style of npm with even minimal review. Servers live in individual repositories, are installed with shell commands that pull and run third-party code, and the only guarantee is the maintainer’s reputation. That model works for mature projects and doesn’t work for three-day-old novelties.

The five I use every day

- Filesystem MCP server, maintained by Anthropic: lets you give the agent bounded access to specific local directories with controlled permissions. I use it so Claude reads and writes files in a specific project without roaming the rest of the system.

- Git MCP server, also official: lets the agent read repository state, see diffs and consult commit history. Instead of copying and pasting terminal output into the conversation, the agent can ask for information when it needs it.

- Postgres MCP server, also maintained by Anthropic and focused on read-only queries: connects Claude with a development database for schema exploration. I wouldn’t connect it to a production database.

- Fetch MCP server, basic but indispensable: lets the agent download web pages and return them in processable markdown. The official implementation respects robots.txt and has reasonable size limits.

- Memory MCP server, also official: provides persistent memory the agent can consult and update across sessions. It’s the server where I’m most careful with privacy: what gets saved can leak through unexpected channels when it’s mixed with other servers. It can also serve as the persistence layer behind knowledge graphs for LLMs.
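As a rough sketch of how these five might be wired into a client, the snippet below builds a `claude_desktop_config.json`-style structure in Python. The `mcpServers` layout and the package names are written from memory of the reference servers and should be checked against their current READMEs. The project path and database URL are placeholders, not values from my setup.

```python
import json
from pathlib import Path

# Hypothetical project directory and development database; replace with your own.
PROJECT_DIR = Path("/home/me/projects/demo")
DEV_DATABASE_URL = "postgresql://readonly@localhost:5432/devdb"


def build_config(project_dir: Path, database_url: str) -> dict:
    """Return a client config that scopes each server as narrowly as possible."""
    return {
        "mcpServers": {
            # Only the listed directory is exposed to the agent.
            "filesystem": {
                "command": "npx",
                "args": ["-y", "@modelcontextprotocol/server-filesystem", str(project_dir)],
            },
            # Git access is limited to a single repository.
            "git": {
                "command": "uvx",
                "args": ["mcp-server-git", "--repository", str(project_dir)],
            },
            # Point this at a development database, never production.
            "postgres": {
                "command": "npx",
                "args": ["-y", "@modelcontextprotocol/server-postgres", database_url],
            },
            "fetch": {"command": "uvx", "args": ["mcp-server-fetch"]},
            "memory": {
                "command": "npx",
                "args": ["-y", "@modelcontextprotocol/server-memory"],
            },
        }
    }


if __name__ == "__main__":
    print(json.dumps(build_config(PROJECT_DIR, DEV_DATABASE_URL), indent=2))
```

Generating the file from code rather than editing it by hand makes the scope of each server explicit: the only directory and the only database the agent can reach are the two arguments passed in.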

What I tried and ended up removing

I tried the community Slack MCP server and removed it within days. The functionality worked, but the permission model was poorly designed: once connected, the agent could read any channel the user had access to, including private ones with sensitive information. The risk of exfiltration through indirect prompt injection was too high for the real benefit.

I also tried several email servers and retired them all. The pattern is similar: the attack surface of a server that reads and acts on email is enormous, and none of the community servers had the security reviews that would justify the risk.

The other group I removed is servers for broadly-scoped SaaS APIs. A server that gives the agent full access to the Stripe or AWS API sounds useful until you review the code and see credentials passed as environment variables without rotation, without fine-grained permission control, and without audit logging.
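A quick first pass before reading a server’s code is to check which entries in the client config receive credentials through the environment at all. The sketch below assumes the config uses an `mcpServers` map with an optional `env` block per server. The name pattern is a rough heuristic, and the example server name and key are invented for illustration.

```python
import re

# Heuristic pattern for environment variable names that usually hold credentials.
SECRET_NAME = re.compile(r"(KEY|TOKEN|SECRET|PASSWORD)", re.IGNORECASE)


def servers_with_inline_secrets(config: dict) -> dict[str, list[str]]:
    """Map each server name to the credential-like env vars set in its config."""
    findings = {}
    for name, spec in config.get("mcpServers", {}).items():
        hits = sorted(k for k in spec.get("env", {}) if SECRET_NAME.search(k))
        if hits:
            findings[name] = hits
    return findings


# Hypothetical config: the server package name and env value are made up.
example = {
    "mcpServers": {
        "stripe": {
            "command": "npx",
            "args": ["some-stripe-server"],
            "env": {"STRIPE_API_KEY": "sk_live_..."},
        },
        "fetch": {"command": "uvx", "args": ["mcp-server-fetch"]},
    }
}

if __name__ == "__main__":
    print(servers_with_inline_secrets(example))
```

A hit is not a verdict, only a prompt to ask the questions above: can the key be rotated, can it be scoped down, and does the server log what it does with it.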

Criteria for deciding what to install

Over time I’ve ended up applying five criteria before installing any new MCP server:

- Provenance. Official Anthropic servers, servers from recognised organisations, or repositories with an identifiable maintainer and sustained activity. Anonymous repositories with little activity are rejected outright.

- Scope. What the server does and over what. A server that reads a specific local directory is very different from one with arbitrary network permission. Applying least privilege here saves future headaches.

- Traceability. What the server logs when it acts. A well-built MCP server logs each tool invocation with its arguments, which lets you audit behaviour later if something goes wrong.

- Revocability. How it’s removed if you decide you don’t want it. Verifying that uninstallation is clean is part of the evaluation.

- Update model. How you learn about a security update. Follow repositories with regular releases and distrust those that don’t publish them.
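The five criteria can be written down as a small checklist so that each review leaves a record. This is only a sketch: the field names mirror the list above, each answer is a manual yes/no judgement, and the all-or-nothing install rule is my simplification rather than a formal policy.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class ServerReview:
    """One manual review of a candidate server; every field is a yes/no judgement."""

    provenance: bool    # identifiable maintainer, sustained activity
    scope: bool         # least privilege: narrow, well-defined access
    traceability: bool  # logs each tool invocation with its arguments
    revocability: bool  # uninstalls cleanly
    update_model: bool  # regular releases, security fixes are announced


def failed_criteria(review: ServerReview) -> list[str]:
    """Return the names of the criteria the server does not meet."""
    return [f.name for f in fields(review) if not getattr(review, f.name)]


def should_install(review: ServerReview) -> bool:
    """Install only when every criterion passes (an assumed, strict rule)."""
    return not failed_criteria(review)


if __name__ == "__main__":
    candidate = ServerReview(
        provenance=True,
        scope=False,
        traceability=True,
        revocability=True,
        update_model=False,
    )
    print(failed_criteria(candidate))  # ['scope', 'update_model']
    print(should_install(candidate))   # False
```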

The risk no one is looking at

There’s a cross-cutting risk I haven’t seen discussed enough in the community. When an MCP client has several active servers in the same conversation, servers implicitly share context through the agent. A malicious server can insert instructions in its responses that direct the agent to invoke another legitimate server with arguments that leak information.

This tool-chain attack is hard to detect because each individual server behaves correctly. What fails is the composition. Mitigating it requires isolating contexts across untrusted servers, something current MCP clients don’t do by default.
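To make the composition problem concrete, here is a toy guard a client could apply. It is not real context isolation, only a weaker stand-in: once any untrusted server has put output into the conversation, calls to a different server are flagged for human confirmation. The class and method names are hypothetical and do not correspond to any existing MCP client API.

```python
from dataclasses import dataclass, field


@dataclass
class SessionGuard:
    """Toy policy: flag cross-server calls once untrusted output is in context."""

    trusted: frozenset
    tainted_by: set = field(default_factory=set)

    def record_response(self, server: str) -> None:
        """Remember that this server's output has entered the conversation."""
        if server not in self.trusted:
            self.tainted_by.add(server)

    def needs_confirmation(self, server: str) -> bool:
        """True when context already holds output from a different untrusted server."""
        return bool(self.tainted_by - {server})


if __name__ == "__main__":
    guard = SessionGuard(trusted=frozenset({"filesystem", "fetch"}))
    guard.record_response("filesystem")
    print(guard.needs_confirmation("fetch"))  # False: only trusted output so far
    guard.record_response("community-notes")  # hypothetical community server
    print(guard.needs_confirmation("fetch"))  # True: untrusted output is in context
```

The guard is deliberately coarse. It cannot tell whether a given call was actually steered by injected instructions, so it trades extra confirmation prompts for not having to inspect the content at all.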

My recommendation while this isn’t resolved: don’t mix official servers with community servers you don’t know well in the same session.
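That rule is simple enough to enforce mechanically before a session starts. The following sketch assumes you maintain your own list of trusted server names; the list below simply reuses the five servers from earlier in this post, and the function is illustrative rather than part of any client.

```python
# Hypothetical trust list: names of servers you treat as official or fully vetted.
TRUSTED = frozenset({"filesystem", "git", "postgres", "fetch", "memory"})


def check_session(active_servers: set[str], trusted: frozenset[str] = TRUSTED) -> None:
    """Raise ValueError if a session combines trusted servers with unvetted ones."""
    unvetted = active_servers - trusted
    vetted = active_servers & trusted
    if unvetted and vetted:
        raise ValueError(
            f"session mixes trusted {sorted(vetted)} with unvetted {sorted(unvetted)}"
        )


if __name__ == "__main__":
    check_session({"filesystem", "git"})   # fine: trusted servers only
    check_session({"community-slack"})     # fine: unvetted server on its own
    try:
        check_session({"filesystem", "community-slack"})
    except ValueError as err:
        print(err)
```

Running an unvetted server alone still carries its own risk; the check only prevents it from sharing a conversation with servers that hold data worth stealing.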

My read

MCP has won the adoption bet in only six months. It has achieved for agent integrations something similar to what OAuth achieved for delegated authorization: establishing itself as the default mechanism for connecting to external services.

The unresolved problem is the community catalog’s trust model. npm learned the hard way that an unreviewed registry is a supply-chain attack vector, and MCP is walking the same path at an accelerated pace. Anthropic has started signing official servers and there’s conversation about a formal registry, but at the catalog’s growth rate, each month of delay means hundreds of new servers without a verification framework.

My practical recommendation to any team adopting MCP: start with official servers only, add community servers one at a time after a manual code review, and remove any that don’t provide measurable value within a month. The catalog is broad and tempting, but the attack surface grows with each install.