AI agent observability: what to instrument first

Updated: 2026-05-03

A poorly instrumented AI agent is a black box that spends money. Model calls are expensive, tool calls can be expensive too, and the decision flow is usually non-deterministic. Without instrumentation designed for this kind of system, when something fails or the bill comes in higher than expected, the team ends up reading loose logs and trying to reconstruct the sequence by hand.

Key takeaways

- Agents break the traditional observability mold in three ways: a single user input can trigger dozens of chained model calls, each call carries an explicit economic cost in tokens, and input/output content is relevant for debugging.

- During 2025, OpenTelemetry consolidated a set of semantic conventions for generative AI that define how to name spans, which attributes to use, and how to represent agent-tool relationships.

- The five aggregated metrics to always instrument: cost per conversation, end-to-end latency, successful completion rate, mean steps per conversation, tool-use rate per step.

- The most expensive failure in production is having no way to answer “why did this conversation cost thirty euros?”.

- Recommended minimum path: OpenTelemetry in the model SDK, a Langfuse-type backend, five basic business metrics on a visible dashboard, and a simple automatic evaluation over a traffic sample.

Why classical systems aren’t enough

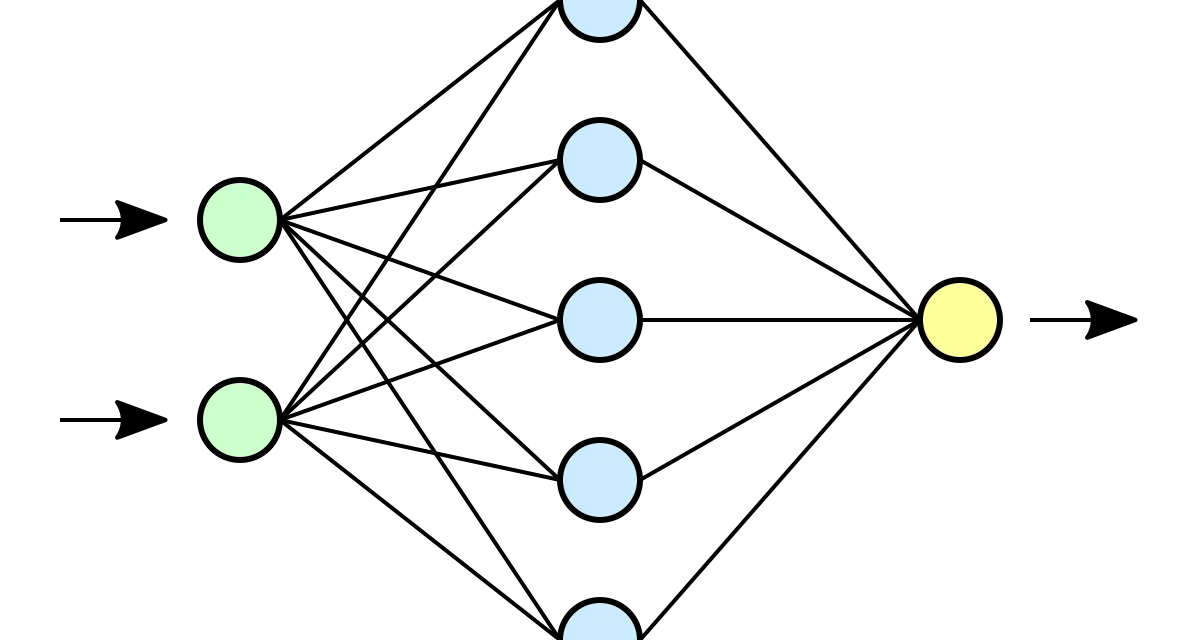

Traditional observability was built around synchronous HTTP services. Agents break the mold in three ways:

- Dozens of chained calls: a single user input can trigger dozens of chained model calls with branches and loops only known after execution.

- Explicit economic cost: each call carries a token cost that matters as much as latency.

- Content relevant for debugging: unlike the JSON payload of a typical API, the input and output content is needed to understand why the agent made one decision over another.

First layer: one trace per run

The first thing to have is a trace per agent run, with each model call and each tool call as a nested span. During 2025, OpenTelemetry consolidated semantic conventions for generative AI, and automatic instrumentation that emits these spans is available for the major LLM SDKs (OpenAI, Anthropic, Google, Azure).

What to capture on each model-call span: model name, sampling parameters, input and output token counts, estimated cost, time-to-first-token if streaming, total time, and critically, input messages and output text or function calls. The last point is sensitive because those contents may include personal data.
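As a rough illustration of the attribute list above, the sketch below builds a flat attribute dictionary for one model-call span and masks email addresses before content is attached. The `gen_ai.*` names follow the OpenTelemetry GenAI semantic conventions as commonly published; the `app.*` names, the `PRICE_PER_1K` table, and the `redact` helper are assumptions made for this example, not part of any library.

```python
import re

# Illustrative per-1K-token prices in provider currency (assumption; check current pricing).
PRICE_PER_1K = {"gpt-4o-mini": {"input": 0.00015, "output": 0.0006}}

# Deliberately simple pattern; real PII handling needs more than an email regex.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def redact(text: str) -> str:
    """Mask email addresses before content is attached to a span."""
    return EMAIL_RE.sub("[email]", text)


def model_call_attributes(model, temperature, input_tokens, output_tokens,
                          ttft_s, total_s, prompt, completion):
    """Build a flat attribute dict for one model-call span."""
    price = PRICE_PER_1K[model]
    cost = (input_tokens * price["input"] + output_tokens * price["output"]) / 1000
    return {
        # Names taken from the OpenTelemetry GenAI semantic conventions.
        "gen_ai.request.model": model,
        "gen_ai.request.temperature": temperature,
        "gen_ai.usage.input_tokens": input_tokens,
        "gen_ai.usage.output_tokens": output_tokens,
        # Hypothetical application-level names (not part of the conventions).
        "app.cost.estimated": round(cost, 6),
        "app.time_to_first_token_s": ttft_s,
        "app.duration_s": total_s,
        "app.input.redacted": redact(prompt),
        "app.output.redacted": redact(completion),
    }
```

The resulting dictionary can be passed to `span.set_attributes(...)` on an OpenTelemetry span. Whether to store content at all, redacted or not, is a policy decision that depends on the data involved.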

Second layer: aggregated metrics

The five metrics to always instrument:

- Cost per conversation in provider currency.

- End-to-end latency as seen by the user.

- Successful completion rate versus abandonment or error.

- Mean number of steps per conversation.

- Tool-use rate per step.
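The five metrics above can be sketched as a single aggregation over per-conversation records. The `Conversation` record and its field names are hypothetical; in practice the values would come from the trace backend, and latency is usually better reported as percentiles than as a mean.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Conversation:
    cost: float        # total cost in provider currency
    latency_s: float   # end-to-end latency as seen by the user
    completed: bool    # True if it finished successfully (not abandoned or errored)
    steps: int         # number of agent steps
    tool_steps: int    # steps that included at least one tool call


def aggregate(conversations):
    """Compute the five aggregated metrics over a non-empty list of conversations."""
    n = len(conversations)
    total_steps = sum(c.steps for c in conversations)
    tool_steps = sum(c.tool_steps for c in conversations)
    return {
        "cost_per_conversation": mean(c.cost for c in conversations),
        "latency_s_mean": mean(c.latency_s for c in conversations),
        "completion_rate": sum(c.completed for c in conversations) / n,
        "steps_per_conversation": total_steps / n,
        "tool_use_rate_per_step": tool_steps / total_steps if total_steps else 0.0,
    }
```

An empty input raises `statistics.StatisticsError`, so a dashboard job would need to handle windows with no traffic.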

Third layer: production evaluations

Agents need one more layer: evaluations running on real conversations or samples to measure quality. Knowing a conversation ended isn’t enough; you need to know whether it ended well. A pyramid approach works: a small fraction of conversations evaluated by humans, a larger fraction by a judge model, and the rest with cheap heuristic metrics.
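One way to implement the pyramid is to assign each conversation to a tier deterministically from its identifier, so the same conversation always lands in the same tier. This is a minimal sketch: the fractions and tier names are assumptions chosen for illustration, and should be tuned to reviewer capacity and judge-model budget.

```python
import hashlib

# Hypothetical sampling fractions (assumption, not a recommendation).
HUMAN_FRACTION = 0.01
JUDGE_FRACTION = 0.10


def evaluation_tier(conversation_id: str) -> str:
    """Deterministically assign a conversation to an evaluation tier."""
    digest = hashlib.sha256(conversation_id.encode("utf-8")).digest()
    # Map the first 8 bytes of the hash to a float in [0, 1).
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    if bucket < HUMAN_FRACTION:
        return "human"
    if bucket < HUMAN_FRACTION + JUDGE_FRACTION:
        return "judge_model"
    return "heuristic"
```

Hash-based assignment avoids storing sampling decisions and keeps the sample stable when the evaluation job is re-run.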

The most common failure pattern

The most expensive failure is having no way to answer “why did this conversation cost thirty euros?”. Without that traceability the team can’t prevent it from recurring, and the monthly LLM bill becomes a black box that grows without explanation.
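With per-span cost recorded, answering that question reduces to a grouping query. The sketch below assumes spans have been exported as dictionaries with hypothetical `conversation_id`, `name`, and `cost` keys; a real backend would expose the same information through its own query interface.

```python
from collections import defaultdict


def cost_breakdown(spans, conversation_id):
    """Sum estimated cost per span name for one conversation, most expensive first."""
    totals = defaultdict(float)
    for span in spans:
        if span["conversation_id"] == conversation_id:
            totals[span["name"]] += span["cost"]
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)


# Hypothetical exported spans for two conversations.
spans = [
    {"conversation_id": "c-42", "name": "chat gpt-4o-mini", "cost": 0.5},
    {"conversation_id": "c-42", "name": "execute_tool search", "cost": 0.25},
    {"conversation_id": "c-42", "name": "chat gpt-4o-mini", "cost": 1.5},
    {"conversation_id": "c-7", "name": "chat gpt-4o-mini", "cost": 9.0},
]

breakdown = cost_breakdown(spans, "c-42")
# breakdown == [("chat gpt-4o-mini", 2.0), ("execute_tool search", 0.25)]
```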

The cure is to instrument from day one, even if it feels like overhead. Agents start small and grow fast.

A minimum path

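```python
from opentelemetry import trace
from opentelemetry.instrumentation.openai_v2 import OpenAIInstrumentor
import openai

# Patch the OpenAI SDK so each model call emits a span automatically.
OpenAIInstrumentor().instrument()

tracer = trace.get_tracer("agent.bookings")

# uid, messages, and tools are assumed to be defined by the surrounding application.
with tracer.start_as_current_span("conversation", attributes={"user.id": uid}) as span:
    response = openai.chat.completions.create(
        model="gpt-4o-mini",
        messages=messages,
        tools=tools,
    )
    # Record how many tool calls the model requested in this turn.
    span.set_attribute("agent.steps", len(response.choices[0].message.tool_calls or []))
```

That quartet (OpenTelemetry in the model SDK, a Langfuse-type backend, five basic business metrics on a visible dashboard, and a simple automatic evaluation over a traffic sample) covers eighty percent of the value.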

Conclusion

Instrument early, instrument with open conventions, and revisit the instrumentation as more mature versions of those conventions appear. Observability is not an add-on built when the agent is already in production; it is part of the design from day one. There is no excuse for running agents blind.