SGLang: Fine Control Over LLM Execution

Actualizado: 2026-05-03

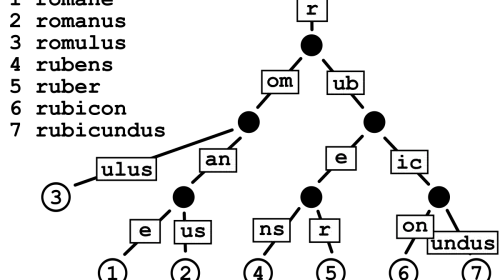

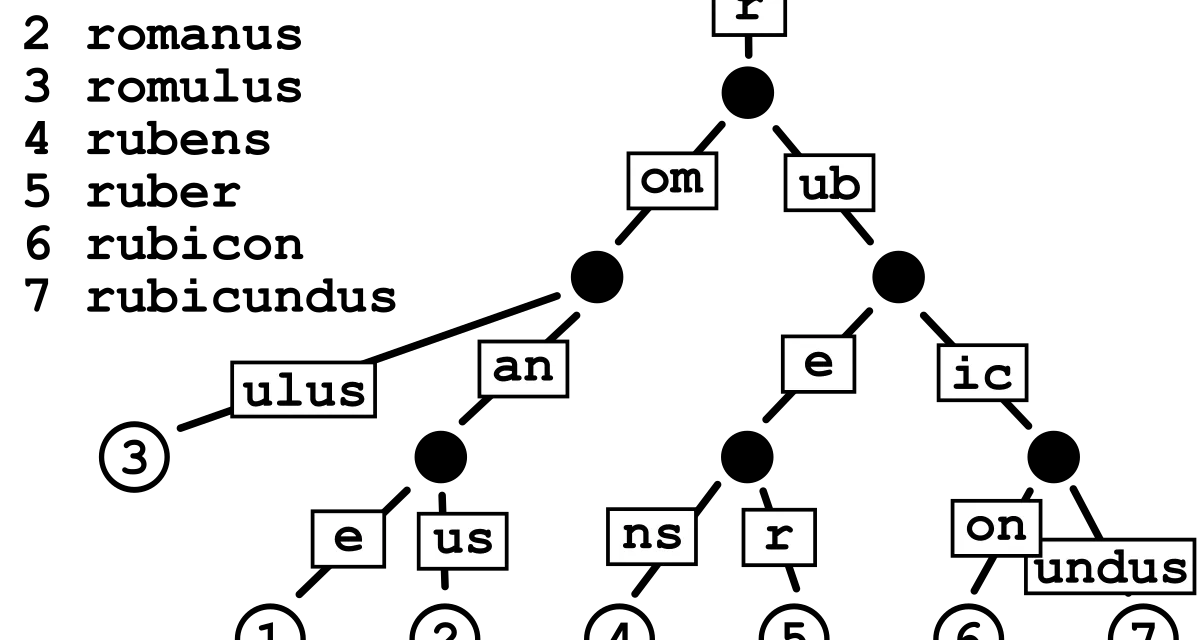

SGLang[1] —Structured Generation Language— showed up in early 2024 as an alternative to vLLM and TGI in the LLM inference layer, but its ambition goes beyond “serve tokens fast”. It proposes a small Python-embedded language for describing programs over LLMs, with explicit branching, constrained decoding, and aggressive cache reuse. Its differentiating contribution is RadixAttention: a data structure indexing the KV cache in a radix trie so distinct requests can share prefixes without recomputing them.

Key takeaways

- RadixAttention indexes the KV cache as a radix trie: shared prefixes are computed once.

- On workloads with long shared prefixes (thousand-token system prompts, few-shot, repetitive RAG), speedups vs vLLM sit between 3x and 5x.

- The DSL enables parallel branching and constrained decoding without HTTP round-trips.

- Where no shared prefix exists, SGLang behaves similarly to vLLM with additional overhead.

- For a basic chatbot behind a public API, vLLM remains the correct default.

The problem it actually solves

Modern LLM traffic often has shared prefixes: multi-step agents reuse a system prompt of thousands of tokens on each loop hop; few-shot pipelines prepend the same examples to every query; chatbots with memory accumulate context that grows over the session but changes only at the tail; RAG flows inject retrieved documents that repeat across users. In all these cases, the shared prefix is not a curiosity — it’s most of the prompt. Recomputing the KV for those tokens every time is work thrown away.

RadixAttention as a value proposition

The practical consequence: the amortised cost of a ten-thousand-token prefix shared by a hundred requests approaches the cost of generating it once. In workloads like agent loops, benchmark evaluations where all items share the template, or iterative tool-calling, reported speedups vs vLLM sit between 3x and 5x. Not marketing numbers: they come from work that genuinely no longer happens.

The DSL and why it matters

SGLang embeds in Python primitives (gen, select, fork, user, assistant) that the runtime interprets with semantic awareness. The scheduler sees the program’s dependency graph and decides what to execute in parallel, where to reuse cache, and how to apply decoding constraints — all in-process, without HTTP round-trips. Constrained decoding: an automaton recognising the desired grammar filters logits at every sampling step. Output is valid by construction, not by hope.

SGLang vs vLLM

vLLM remains the sensible choice for a generic inference service — wider model catalogue, lower learning curve, mature ecosystem. SGLang enters when the problem changes shape: when orchestration and serving are the same piece, when prefixes are long and shared, when structured output with in-generation validation matters.

Honest limitations

SGLang is younger, its API shifts between releases, the model catalogue with optimised kernels is narrower, and the documentation is what you’d expect from a project at that stage. The runtime consumes a CUDA GPU just like vLLM. And the DSL adds a layer your team has to learn.

Conclusion

SGLang deserves serious attention if your workload has long shared prefixes, parallel branching, or structured output requirements with in-generation validation. In those cases the benefit is not marginal. If your workload doesn’t have that shape, vLLM will remain the correct default and SGLang will be a tool worth knowing for when the problem actually fits.