How to Install Ollama on macOS with Apple Silicon

Updated: 2026-05-03

Ollama[1] is the most direct way to run large language models on an Apple Silicon Mac. A single command is enough to have Llama 3.1 8B or Mistral 7B answering from inside your laptop, with no accounts, no API keys, and not a single word of your conversation leaving the disk. This guide covers installation from scratch, model choice by available RAM, and integrating the service with the applications you already use.

Key takeaways

- Apple Silicon has an architectural advantage over traditional PCs: unified memory eliminates the PCIe transfer bottleneck; llama.cpp exploits Metal for GPU acceleration.

- Model selection rule: a 4-bit quantized model takes up roughly half as many GB as it has billions of parameters; reserve 2–4 GB for the OS.

- The OpenAI-compatible endpoint at localhost:11434/v1/chat/completions makes any GPT-oriented client work without code changes beyond the base URL.

- Ollama is not the answer for serious production (vLLM or TGI live there); it is the best entry point for development and personal use.

- For lawyers, doctors or journalists handling sensitive documents, the guarantee that nothing leaves the device is a verifiable system property, not a provider promise.

Why Ollama works so well on Apple Silicon

The advantage is not anecdotal, it is architectural. M1, M2 and M3 chips share memory between CPU and GPU instead of keeping it separate as a PC with a discrete graphics card does. That unified memory means an 8B-parameter Llama 3.1 does not need to be copied across the PCIe bus for the GPU to process it: the same bytes are visible to both and inference accelerates without transfer tolls. Add Metal, Apple’s graphics layer, on top of which llama.cpp has had a very polished backend since 2023.

The upshot: a silent, fanless MacBook Air M2 can serve a 7–8B parameter model at speeds perfectly usable for real work, while power draw barely exceeds that of a browser with a handful of tabs. Memory bandwidth is the bottleneck that dominates inference for a quantized LLM, and it is precisely where these chips are strong: around 100 GB/s on base models and up to 800 GB/s on an M2 Ultra.
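To make the bandwidth argument concrete, here is a back-of-the-envelope sketch. It rests on a simplifying assumption that is not from the measurements in this guide: each generated token has to read every weight from memory once, so bandwidth divided by model size gives a ceiling on tokens per second. The function name is illustrative, and the bandwidth and file-size figures are the ones quoted in this article.

```python
def max_tokens_per_second(bandwidth_gb_s: float, model_size_gb: float) -> float:
    """Rough ceiling on decode speed for a memory-bound model.

    Assumption: each generated token reads every weight once, so the
    ceiling is bandwidth divided by model size. KV-cache traffic and
    compute time are ignored, so real throughput is lower.
    """
    if model_size_gb <= 0:
        raise ValueError("model_size_gb must be positive")
    return bandwidth_gb_s / model_size_gb


# Figures taken from this article: a ~4.7 GB Llama 3.1 8B Q4_K_M file,
# 100 GB/s on a base chip and 800 GB/s on an M2 Ultra.
for label, bandwidth in [("base chip", 100.0), ("M2 Ultra", 800.0)]:
    ceiling = max_tokens_per_second(bandwidth, 4.7)
    print(f"{label}: at most ~{ceiling:.0f} tokens/s")
```

Treat the result as an order-of-magnitude guide rather than a prediction; it only explains why faster memory translates so directly into faster generation.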

Installation

Two paths to the same background service:

Graphical installer: download from the official site and drag to Applications; on first run it asks permission to launch as a background service.

Homebrew (preferred for technical users):

    brew install ollama
    brew services start ollama

Verification:

    ollama --version
    curl http://localhost:11434
    # Should return: Ollama is running

The daemon listens on port 11434 and any ollama command talks to it.
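The same check can be scripted, for instance at the start of a tool that depends on the daemon. The following is a minimal sketch using only the standard library; the function name is hypothetical, and it relies solely on the banner text shown above.

```python
import urllib.error
import urllib.request


def ollama_is_running(base_url: str = "http://localhost:11434", timeout: float = 2.0) -> bool:
    """Return True if the root endpoint answers with the expected banner."""
    try:
        with urllib.request.urlopen(base_url, timeout=timeout) as response:
            body = response.read().decode("utf-8", errors="replace")
    except (urllib.error.URLError, OSError):
        # Connection refused, timeout, DNS failure: treat all as "not running".
        return False
    return "Ollama is running" in body


if __name__ == "__main__":
    print("daemon up" if ollama_is_running() else "daemon not reachable")
```

Returning a boolean instead of raising keeps the caller simple: it can print a hint such as "run brew services start ollama" and exit.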

Picking a model by available RAM

Simple rule: a 4-bit quantized model takes up roughly half as many GB as it has billions of parameters, plus 2–4 GB for the OS and whatever application you are using.
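The rule can be written down as a small sketch. The function names and the default 4 GB reserve (the upper end of the range above) are illustrative choices, and the 0.5 GB per billion parameters is the rule of thumb itself, which slightly underestimates real files: the Llama 3.1 8B Q4_K_M download listed below is about 4.7 GB rather than 4 GB.

```python
def estimate_q4_size_gb(params_billions: float) -> float:
    """Rule of thumb from the text: ~0.5 GB per billion parameters at 4 bits."""
    return params_billions * 0.5


def fits_in_ram(params_billions: float, ram_gb: float, reserve_gb: float = 4.0) -> bool:
    """True if the estimated model size fits after reserving RAM for the OS and apps."""
    return estimate_q4_size_gb(params_billions) <= ram_gb - reserve_gb


for params, ram in [(8, 16), (70, 32), (70, 64)]:
    verdict = "fits" if fits_in_ram(params, ram) else "does not fit"
    print(f"{params}B on {ram} GB: ~{estimate_q4_size_gb(params):.0f} GB, {verdict}")
```

Because the estimate is optimistic, a model that only just "fits" will probably swap in practice; leave a margin.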

8 GB (MacBook Air M1/M2 base):

- Phi-3 mini (~2.3 GB): useful for rewriting, summarisation, translation. Limited on complex reasoning.

- Gemma 2B (~1.5 GB): similar.

16 GB (MacBook Pro M2 Pro, Air M3):

- Llama 3.1 8B instruct Q4_K_M (~4.7 GB): the best general-purpose balance available. Leaves headroom for the rest of your workflow.

- Mistral 7B instruct: strong alternative, especially for code.

- Code Llama 7B: for coding-specific use.

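32 GB (MacBook Pro Max):

- Mixtral 8x7B (~26 GB): strong in multilingual work. A tight fit once the OS reserve is counted.

64+ GB (top-spec M3 Max, Mac Studio M2 Ultra):

- Llama 3.1 70B quantized (~40 GB): quality close to closed frontier models, noticeably higher latency. At ~40 GB it does not fit on a 32 GB machine.

- Llama 3.1 405B quantized: only viable on M2 Ultra with 192 GB, at exploration rather than production speed. By the rule above, 4 bits would need roughly 200 GB, so expect a more aggressive quantization.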

Interactive use and the local API

Conversational mode:

    ollama run llama3.1:8b

Useful internal commands: /set parameter temperature 0.7, /set parameter num_ctx 8192 to widen the context window, /show info, /bye.

The most interesting part is the HTTP API on localhost:11434. The OpenAI-compatible endpoint at /v1/chat/completions makes any GPT-oriented client work by simply pointing it at the Mac:

    from openai import OpenAI

    # Point the standard OpenAI client at the local Ollama daemon.
    client = OpenAI(
        base_url="http://localhost:11434/v1",
        api_key="not-needed",  # required by the client, ignored by Ollama
    )

    response = client.chat.completions.create(
        model="llama3.1:8b",
        messages=[{"role": "user", "content": "Explain RAG in three sentences."}],
    )

    print(response.choices[0].message.content)

The same pattern works from Node, from a VS Code extension, or from any utility you previously pointed at OpenAI. For local RAG pipelines, Ollama can serve both the embedding model and the generation model, eliminating external pipeline dependencies.
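As a sketch of the retrieval half of such a local RAG pipeline, the snippet below requests embeddings over the OpenAI-compatible API and ranks documents by cosine similarity. Several things here are assumptions not stated in this guide: that your Ollama version exposes /v1/embeddings, that an embedding model has been pulled (nomic-embed-text is used as an example name), and the helper names themselves. It uses only the standard library to show that any HTTP client will do.

```python
import json
import math
import urllib.request


def embed(texts, model="nomic-embed-text", base_url="http://localhost:11434/v1"):
    """Request embeddings from the OpenAI-compatible endpoint (needs a running daemon)."""
    payload = json.dumps({"model": model, "input": texts}).encode("utf-8")
    request = urllib.request.Request(
        f"{base_url}/embeddings",
        data=payload,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=60) as response:
        data = json.load(response)["data"]
    return [item["embedding"] for item in data]


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def rank(query_vec, doc_vecs):
    """Return document indices ordered from most to least similar to the query."""
    scores = [cosine(query_vec, vec) for vec in doc_vecs]
    return sorted(range(len(doc_vecs)), key=lambda i: scores[i], reverse=True)
```

A typical call would embed the query together with the documents, `vectors = embed([query] + docs)`, then take `rank(vectors[0], vectors[1:])` and pass the top few documents to the chat model as context.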

Modelfiles: customising behaviour

When you repeat the same system prompt across several projects, pin it down in a Modelfile:

    FROM llama3.1:8b
    PARAMETER temperature 0.7
    PARAMETER num_ctx 8192
    SYSTEM "You are a technical assistant, concise and direct."

Then build and run it:

    ollama create my-assistant -f Modelfile
    ollama run my-assistant

It is the local equivalent of a “custom GPT”.
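If you maintain several such assistants, the Modelfile text can be generated rather than hand-edited. This is a minimal sketch: render_modelfile is a hypothetical helper, and it deliberately covers only the three directives used above (FROM, PARAMETER, SYSTEM) with a single-line system prompt.

```python
def render_modelfile(base: str, system: str, **parameters) -> str:
    """Build Modelfile text from a base model, a system prompt and PARAMETER values."""
    if '"' in system or "\n" in system:
        raise ValueError("this sketch only handles single-line prompts without double quotes")
    lines = [f"FROM {base}"]
    lines += [f"PARAMETER {name} {value}" for name, value in parameters.items()]
    lines.append(f'SYSTEM "{system}"')
    return "\n".join(lines) + "\n"


text = render_modelfile(
    "llama3.1:8b",
    "You are a technical assistant, concise and direct.",
    temperature=0.7,
    num_ctx=8192,
)
print(text)
```

Writing `text` to a file named Modelfile and running ollama create against it reproduces the example above.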

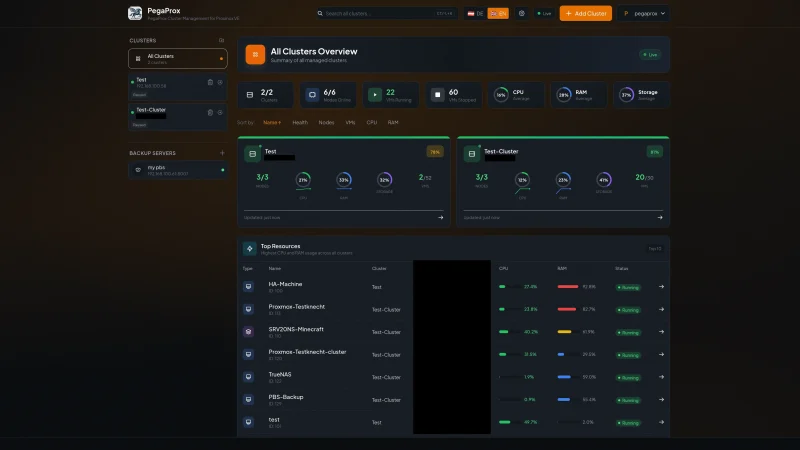

Integration ecosystem

Tools that speak to the same local Ollama service:

- OpenWebUI: ChatGPT-style web interface connecting to local Ollama.

- Continue (VS Code): code assistance with the local model.

- Aider: terminal refactoring with --model ollama/llama3.1:70b.

- Raycast: quick queries from the menu bar.

- Obsidian Copilot plugin: reasoning over your notes.

Operation

- RAM at idle: ~100 MB.

- LAN exposure: set OLLAMA_HOST=0.0.0.0 before starting.

- Stop the service: brew services stop ollama.

- Downloaded models: ~/.ollama/models/, managed with ollama list, ollama pull, ollama rm.
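The list of downloaded models is also available over HTTP, which is handy for scripts that need to check disk usage. The sketch below assumes the native /api/tags endpoint and its "models" array with "name" and "size" (bytes) fields; the helper names and the sample payload are illustrative, not captured output.

```python
import json
import urllib.request


def summarize_models(tags_payload: dict) -> list[tuple[str, float]]:
    """Turn an /api/tags response into (name, size in GB) pairs, largest first."""
    pairs = [(m["name"], m["size"] / 1e9) for m in tags_payload.get("models", [])]
    return sorted(pairs, key=lambda pair: pair[1], reverse=True)


def fetch_tags(base_url: str = "http://localhost:11434") -> dict:
    """Fetch the list of local models (needs a running daemon)."""
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=5) as response:
        return json.load(response)


# Hand-written sample in the shape the endpoint returns; the sizes are illustrative.
sample = {"models": [{"name": "phi3:mini", "size": 2_300_000_000},
                     {"name": "llama3.1:8b", "size": 4_700_000_000}]}
for name, size_gb in summarize_models(sample):
    print(f"{name}: {size_gb:.1f} GB")
```

With the daemon running, `summarize_models(fetch_tags())` gives the same view for the models actually on disk.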

Indicative throughput:

- M1 base with Phi-3: ~30 tokens/s.

- M2 Pro with Llama 3.1 8B: 40–50 tokens/s.

- M3 Max with Llama 3.1 70B Q4: ~15 tokens/s (conversationally fine).
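To measure throughput on your own machine rather than rely on indicative figures, you can compute it from the timing fields that the native API includes in a completed response. This sketch assumes the eval_count and eval_duration fields (the latter in nanoseconds) of a non-streamed /api/generate or /api/chat reply; the sample values are made up for illustration.

```python
def tokens_per_second(response: dict) -> float:
    """Decode speed from a native /api/generate or /api/chat response.

    eval_count is the number of generated tokens and eval_duration the
    time spent generating them, in nanoseconds.
    """
    return response["eval_count"] / response["eval_duration"] * 1e9


# Illustrative numbers, not a measurement: 450 tokens in 10 seconds.
sample = {"eval_count": 450, "eval_duration": 10_000_000_000}
print(f"{tokens_per_second(sample):.1f} tokens/s")
```

For a quick interactive check, ollama run with the --verbose flag prints comparable timing statistics after each reply.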

Conclusion

That a laptop without a discrete graphics card can run models equivalent to last year’s GPT-3.5 with full privacy, no network connection and a familiar API is still one of the more surprising facts of 2024. Ollama is not the answer for serious production — vLLM or TGI live there, designed for concurrency and throughput — but it is the best entry point for development and personal use. For any professional handling sensitive documents, the guarantee that nothing is shipped to a third party stops being a promise and becomes a verifiable property of the system.