Development and Advances in Artificial Intelligence

Quick summary

- Machine learning improves without anyone writing explicit rules — the algorithm finds patterns in labelled data on its own.

- Deep networks (CNNs, transformers) have rewritten what computers can do with images, speech, and text; large language models are the most visible result.

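- In reinforcement learning, an agent learns by trial and error from reward signals, in some cases by playing against itself: that is how DeepMind's AlphaGo beat the world's best Go players, and related machine learning methods helped Google cut the energy used to cool its data centres.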

- Algorithmic bias, explainability, and privacy are not future problems: they are already affecting real decisions in medicine, justice, and hiring.

Key concepts

- Machine learning and neural networks: You train the model with examples, not rules; CNNs pushed top-5 error on ImageNet below 3%, and transformers brought comparable gains to language tasks.

- Pattern recognition and NLP: In some studies these systems detect tumours in mammograms as well as or better than the average radiologist; the transformer (2017) brought the same pattern-learning approach to text, code, and speech.

- Reinforcement learning: The agent learns by trying things and collecting reward signals, with no hand-written strategy, which is how AlphaZero reached superhuman level at chess and Go within hours of self-play training.

Updated: 2026-05-16

In two decades, artificial intelligence has moved from a niche research field to the infrastructure on which products, processes, and decisions are built across virtually all sectors. Three factors came together to drive this leap: more powerful deep learning algorithms, massive data volumes, and specialised computing hardware at accessible prices.

Key takeaways

- Machine learning allows systems to improve their performance with experience, without explicit programming for each case.

- Deep neural networks have revolutionised image, speech, and text recognition.

- Natural language processing is one of the most visible advances, with models capable of generating coherent text and translating between languages.

- AI does not work alone: it requires quality data, adequate infrastructure, and continuous human oversight.

- Ethical challenges — algorithmic bias, transparency, responsible use — are as important as technical ones.

Machine learning and neural networks

Machine learning is the branch of AI that develops algorithms capable of learning from data without being explicitly programmed for each situation. Instead of manually coding rules, the model is trained with labelled examples and the algorithm finds the patterns itself.
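As a minimal sketch of "examples instead of rules", the snippet below trains a perceptron, one of the simplest learning algorithms, on six labelled points. The data, the function names `train_perceptron` and `predict`, and the hyperparameters are all invented for illustration; nothing here comes from a specific library.

```python
# Toy illustration: the decision rule is learned from labelled examples,
# not written by hand. All data and names are hypothetical.

def train_perceptron(examples, epochs=20, lr=1.0):
    """Fit weights and a bias with the classic perceptron update rule."""
    n_features = len(examples[0][0])
    weights = [0.0] * n_features
    bias = 0.0
    for _ in range(epochs):
        for features, label in examples:
            activation = sum(w * x for w, x in zip(weights, features)) + bias
            predicted = 1 if activation > 0 else 0
            error = label - predicted  # 0 when the guess is already right
            weights = [w + lr * error * x for w, x in zip(weights, features)]
            bias += lr * error
    return weights, bias


def predict(weights, bias, features):
    """Apply the learned linear rule to a new point."""
    activation = sum(w * x for w, x in zip(weights, features)) + bias
    return 1 if activation > 0 else 0


# Labelled examples: (feature_1, feature_2) -> class 0 or class 1.
training_data = [
    ((1.0, 4.0), 0),
    ((2.0, 5.0), 0),
    ((3.0, 4.0), 0),
    ((6.0, 7.0), 1),
    ((7.0, 6.0), 1),
    ((8.0, 8.0), 1),
]

weights, bias = train_perceptron(training_data)
print(weights, bias)
print(predict(weights, bias, (9.0, 7.0)))
```

Nobody tells the program where the boundary between the two classes lies; the update rule moves the weights only when a labelled example is misclassified. Real systems use far larger datasets and more expressive models, but the training loop has the same shape.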

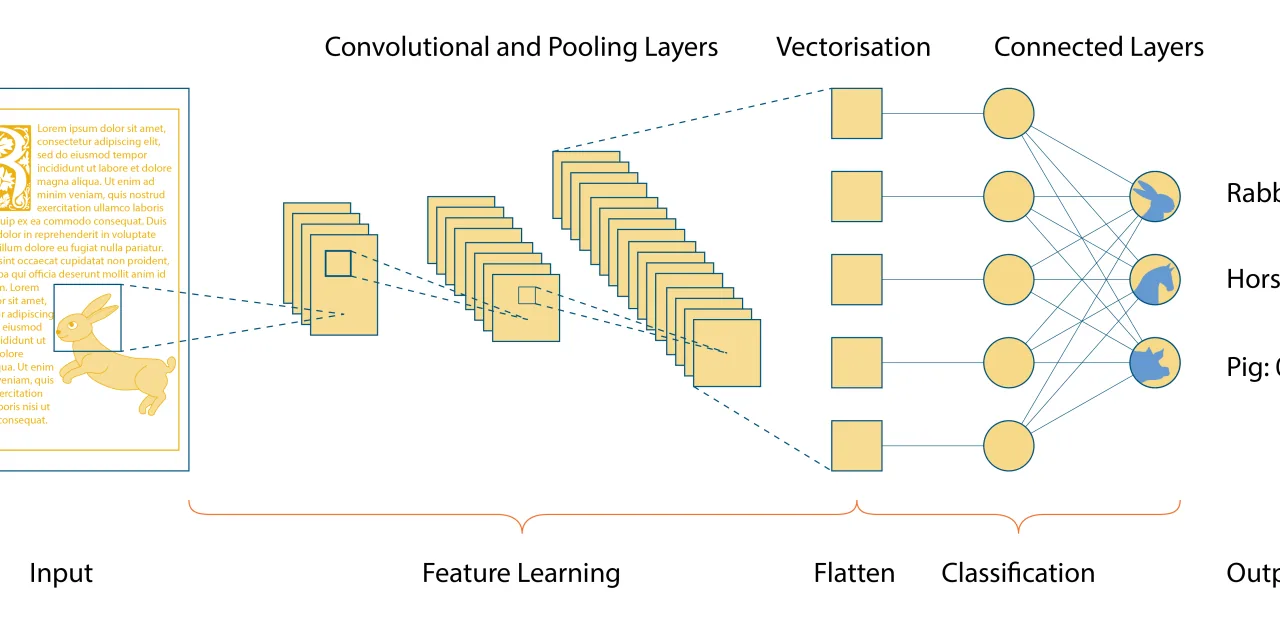

Artificial neural networks are the computational model that has best captured this approach. Organised in layers — input, hidden, output — they vaguely mimic the structure of the biological brain. The more layers a network has (deep networks), the more abstract representations it can learn.
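To make the layer structure concrete, here is a small forward pass through an input layer, one hidden layer, and an output layer. The weights are hand-picked for the example rather than trained, and the names (`dense`, `forward`, `HIDDEN_W`, and so on) are hypothetical.

```python
import math


def relu(values):
    """Hidden-layer activation: negative values become zero."""
    return [max(0.0, v) for v in values]


def sigmoid(v):
    """Squash a real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-v))


def dense(inputs, weights, biases):
    """One fully connected layer: each row of `weights` feeds one output unit."""
    return [
        sum(w * x for w, x in zip(row, inputs)) + b
        for row, b in zip(weights, biases)
    ]


# Hand-picked (not trained) parameters, assumed for this sketch only.
HIDDEN_W = [[1.0, -1.0], [-1.0, 1.0]]
HIDDEN_B = [0.0, 0.0]
OUTPUT_W = [[1.0, 1.0]]
OUTPUT_B = [-0.5]


def forward(inputs):
    """Input layer -> hidden layer (ReLU) -> output layer (sigmoid)."""
    hidden = relu(dense(inputs, HIDDEN_W, HIDDEN_B))
    (logit,) = dense(hidden, OUTPUT_W, OUTPUT_B)
    return sigmoid(logit)


for point in ([0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0]):
    print(point, round(forward(point), 3))
```

With these weights the network outputs a value above 0.5 only when the two inputs differ, an XOR-like pattern that a single linear unit cannot represent. That is a small-scale version of what the hidden layers add.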

The most significant advances in this area include:

- Image recognition: convolutional networks (CNNs) achieved top-5 error rates below 3% on standard benchmarks like ImageNet, below the roughly 5% error estimated for a human annotator on the same task.

- Large-scale data processing: the combination of GPUs, TPUs, and frameworks like PyTorch or TensorFlow has made it possible to train models with billions of parameters.

- Transfer learning: a model pre-trained on millions of images can be fine-tuned with few examples for a new task, drastically reducing data requirements.

Figure: diagram of a convolutional neural network showing convolution, pooling, and classification layers.
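The convolution and pooling stages of a CNN can be sketched in a few lines of plain Python. The 5x5 image and the edge-detecting kernel below are made up for illustration; in a real network the kernel values are learned during training rather than fixed by hand.

```python
def conv2d(image, kernel):
    """Valid (no padding) 2-D cross-correlation, the operation used in CNN layers."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(
                image[i + a][j + b] * kernel[a][b]
                for a in range(kh)
                for b in range(kw)
            )
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


def max_pool(feature_map, size=2):
    """Non-overlapping max pooling; rows/columns that do not fill a window are dropped."""
    rows = len(feature_map) // size
    cols = len(feature_map[0]) // size
    return [
        [
            max(
                feature_map[r * size + a][c * size + b]
                for a in range(size)
                for b in range(size)
            )
            for c in range(cols)
        ]
        for r in range(rows)
    ]


# Hypothetical 5x5 image: dark on the left (0), bright on the right (1).
image = [[0, 0, 1, 1, 1] for _ in range(5)]

# Hand-written kernel that responds to dark-to-bright vertical edges.
kernel = [[-1, 1], [-1, 1]]

feature_map = conv2d(image, kernel)  # 4x4 map, non-zero only at the edge
pooled = max_pool(feature_map)       # 2x2 summary of the map
print(feature_map)
print(pooled)
```

The feature map is non-zero only in the column where the image switches from dark to bright, and pooling keeps that response while shrinking the map. A classification layer would then operate on such pooled features.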

Pattern recognition and natural language processing

Pattern recognition is a system’s ability to identify regularities in complex data. Its applications are broad:

- Text classification: determining the topic, sentiment, or intent of a message with accuracy exceeding 90% in many domains.

- Anomaly detection: in cybersecurity, models detect anomalous behaviour in network logs that would be invisible to manual analysis.

- Medical diagnosis: in some studies, computer vision systems match or outperform radiologists in detecting certain types of cancer in mammography or CT images.
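As a rough illustration of the anomaly detection idea, the sketch below flags values that sit far from a baseline using a z-score. This is a simple statistical stand-in, not the learned models the list refers to, and the login counts, function name, and threshold are assumptions chosen for the example.

```python
from statistics import mean, stdev


def find_anomalies(baseline, observed, threshold=3.0):
    """Return (index, value) pairs in `observed` that lie more than
    `threshold` standard deviations from the baseline mean."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly constant baseline: anything different is unusual.
        return [(i, v) for i, v in enumerate(observed) if v != mu]
    return [
        (i, v)
        for i, v in enumerate(observed)
        if abs(v - mu) / sigma > threshold
    ]


# Hypothetical failed-login counts per hour taken from network logs.
baseline_logins = [4, 5, 6, 5, 4, 6, 5, 5, 4, 6]
todays_logins = [5, 6, 4, 48, 5]

anomalies = find_anomalies(baseline_logins, todays_logins)
print(anomalies)
```

Only the hour with 48 failed logins is reported. Production systems usually learn what "normal" looks like across many signals at once, but the principle of scoring distance from expected behaviour is the same.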

Natural language processing (NLP) deserves special mention. The transformer architecture, introduced in 2017, transformed the field: models like BERT, GPT, and their successors learn rich language representations and enable:

- Professional-quality automatic translation between dozens of languages.

- Code generation from natural language descriptions (see GitHub Copilot).

- Chatbots capable of maintaining coherent conversations in complex contexts (see ChatGPT).

- Voice assistants with sustained contextual understanding.

For a deeper exploration of NLP, our article on NLP advances covers the technical evolution in more detail.
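The core operation of the transformer is scaled dot-product attention, softmax(QK^T / sqrt(d_k))V. The sketch below implements it in plain Python for three made-up token vectors. It deliberately omits the learned query, key, and value projections and the multiple heads that real models use, so treat it as an illustration of the formula only.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that sum to 1 (shifted for numerical stability)."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
            for k in keys
        ]
        weights = softmax(scores)
        # Each output is a weighted average of the value vectors.
        mixed = [
            sum(w * v[dim] for w, v in zip(weights, values))
            for dim in range(len(values[0]))
        ]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights


# Three hypothetical 2-dimensional token vectors (self-attention: Q = K = V).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = attention(tokens, tokens, tokens)
for row in weights:
    print([round(w, 3) for w in row])
```

Each row of weights shows how much one token draws on every other token. The first token attends more to the first and third tokens, which share its non-zero dimension, than to the second.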

Reinforcement learning: AI that learns from its mistakes

Beyond supervised learning, reinforcement learning (RL) allows an agent to learn optimal strategies by interacting with an environment and receiving reward or penalty signals. Its best-known achievements are:

- DeepMind's AlphaGo, which defeated top human Go professionals, and AlphaZero, which learned Go, chess, and shogi through self-play and beat the strongest programs of the time.

- Data centre optimisation systems that reduced the energy Google uses for cooling by up to 40%.

- Industrial robots that learn complex assembly movements without explicit programming.

RL is also the technique behind fine-tuning large language models with human feedback (RLHF). For more technical context, see the article on reinforcement learning.
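The reward-driven loop described in this section can be sketched with tabular Q-learning on a toy problem. The five-cell corridor, the reward of 1 at the right-hand end, and every hyperparameter below are assumptions made for the example; systems such as AlphaZero combine the same idea with deep networks and tree search at a vastly larger scale.

```python
import random

N_STATES = 5            # corridor cells 0..4; the agent starts in cell 0
GOAL = N_STATES - 1     # reaching the last cell ends the episode
ACTIONS = (-1, +1)      # index 0 = step left, index 1 = step right


def step(state, action):
    """Hypothetical environment: move, clip to the corridor, reward 1 at the goal."""
    next_state = min(max(state + action, 0), GOAL)
    reward = 1.0 if next_state == GOAL else 0.0
    return next_state, reward, next_state == GOAL


def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Tabular Q-learning with epsilon-greedy exploration."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Explore at random with probability epsilon, or when the values tie.
            if rng.random() < epsilon or q[state][0] == q[state][1]:
                a = rng.randrange(2)
            else:
                a = 0 if q[state][0] > q[state][1] else 1
            next_state, reward, done = step(state, ACTIONS[a])
            # Move the estimate towards reward + discounted best future value.
            target = reward + (0.0 if done else gamma * max(q[next_state]))
            q[state][a] += alpha * (target - q[state][a])
            state = next_state
    return q


q_table = train()
policy = ["left" if left > right else "right" for left, right in q_table[:GOAL]]
print(policy)
```

The agent is never told that the goal is to the right. It discovers this because actions that eventually lead to reward accumulate higher value estimates, which is the "reward or penalty signals" mechanism in miniature.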

Ethical challenges and considerations

Technical advances come with challenges that are not purely technological:

- Algorithmic bias: models learn from historical data that may reflect social biases. A personnel selection system trained on past hiring data may perpetuate discrimination.

- Explainability: many deep learning models are black boxes. In critical domains (medicine, justice), lack of explainability is unacceptable.

- Privacy: training models on personal data requires robust legal frameworks (GDPR in Europe) and techniques like federated learning.

- Labour impact: automation of cognitive tasks is transforming entire labour markets. Managing this transition is a social responsibility, not just a technological one.
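One simple way to put a number on the algorithmic bias point is to compare how often a model selects candidates from different groups. The sketch below computes per-group selection rates and the gap between them (often called the demographic parity difference). The group labels and outcomes are invented, and a real audit would use several metrics along with domain and legal review.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Share of positive decisions per group; `decisions` holds (group, selected) pairs."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in decisions:
        totals[group] += 1
        selected[group] += int(was_selected)
    return {group: selected[group] / totals[group] for group in totals}


def demographic_parity_gap(decisions):
    """Difference between the highest and lowest group selection rates."""
    rates = selection_rates(decisions)
    return max(rates.values()) - min(rates.values())


# Made-up screening outcomes from a hypothetical hiring model.
decisions = (
    [("group_a", True)] * 6 + [("group_a", False)] * 4
    + [("group_b", True)] * 3 + [("group_b", False)] * 7
)

rates = selection_rates(decisions)
gap = demographic_parity_gap(decisions)
print(rates, round(gap, 2))
```

A large gap does not prove discrimination on its own, but it is a cheap signal that a model trained on past hiring data deserves closer inspection before it is used.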

The same mass data processing capacity that drives AI is at the heart of Big Data and business decision-making.

Conclusion

Artificial intelligence has completed the transition from experimental technology to productive infrastructure. Deep learning, transformers, and reinforcement learning are the engines of this transformation. What separates organisations that get real value from AI from those that don’t is the quality of their data, the clarity of their objectives, and the seriousness with which they address ethical challenges.