Industry 4.0 and data sovereignty: the 2026 tension

Updated: 2026-05-03

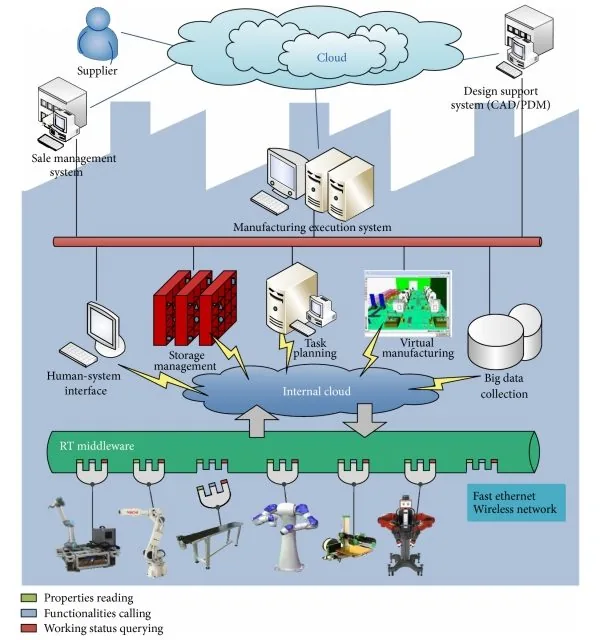

For ten years, the Industry 4.0 narrative was a straight line: connect the plant, push telemetry to the hyperscaler of the moment, run machine-learning models over history, and close the loop with orders going back down to the PLC. That straight line has bent. Not because technology failed, but because political, regulatory, and accounting reality shifted under the feet of those who signed contracts in 2018 or 2020.

Key takeaways

- Three simultaneous changes: regulatory (EU Data Act, NIS2), geopolitical (tariffs, executive orders, international data transfers), and economic (sustained cloud price increases, expiry of adoption discounts).

- Workloads returning to the edge: near-real-time control and supervision, raw telemetry filtered at source, and ML models deployed in hybrid mode (controlled training, edge inference).

- “Theatrical sovereignty” is a real risk: a provider headquartered in the EU but with infrastructure and technical staff outside it doesn’t offer the protection the marketing suggests.

- Effective sovereignty requires three aligned layers: operator’s legal jurisdiction, physical location of infrastructure, and operational control of the human team.

- The winning pattern is neither all-cloud nor all on-premises: it’s a decision map by workload type, where each piece sits where it belongs.

What has changed on the surface

The first visible change is regulatory. Full entry into force of the EU Data Act and new NIS2-derived obligations have raised the cost of keeping critical operational data on platforms whose ultimate operator answers to foreign jurisdictions. Keeping a factory’s production history, recipes, quality parameters, and stock movements in a non-European provider’s cloud is no longer a minor technical decision; it’s a legal, contractual, and reputational exposure the risk committee reviews with a magnifying glass.

The second change is geopolitical. Reciprocal tariffs, currency volatility, and uncertainty about international data transfer agreements have pushed the sovereignty factor into meetings where only performance and monthly cost used to be discussed. A plant director no longer asks only about latency to the nearest region; they ask what happens if tomorrow an executive order forces the provider to cut service or hand over data to a foreign government.

The third change is economic. Sustained price increases at global clouds through 2024 and 2025, along with the expiry of many adoption discounts signed in the golden era, have swung spreadsheets back toward mixed architectures.

Which workloads return to the edge

Not everything gets repatriated, but patterns are clear:

Real-time or near-real-time control and supervision workloads, which should never have been fully in public cloud, have come back to the edge most forcefully. Lightweight containers running anomaly detection, predictive maintenance, and parameter optimization stay at the plant, on servers or small edge Kubernetes clusters, and only aggregates go to the central layer.
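
As a rough illustration of that split, the sketch below keeps anomaly detection next to the process and exposes only an aggregate for the central layer. The `EdgeAnomalyDetector` class, its rolling z-score rule, and the sample readings are all assumptions made for this example; a real plant would use whatever model suits the signal.

```python
from collections import deque
from statistics import mean, pstdev


class EdgeAnomalyDetector:
    """Rolling z-score detector that runs next to the process (illustrative)."""

    def __init__(self, window: int = 20, threshold: float = 3.0, warmup: int = 5):
        self.history = deque(maxlen=window)  # recent normal readings only
        self.threshold = threshold
        self.warmup = warmup
        self.samples_seen = 0
        self.anomalies = 0

    def observe(self, value: float) -> bool:
        """Return True when `value` is far from the recent baseline."""
        self.samples_seen += 1
        if len(self.history) >= self.warmup:
            mu = mean(self.history)
            sigma = pstdev(self.history)
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                self.anomalies += 1
                return True  # outliers are not added to the baseline
        self.history.append(value)
        return False

    def aggregate(self) -> dict:
        """The only payload meant to leave the plant in this sketch."""
        return {"samples_seen": self.samples_seen, "anomalies": self.anomalies}


detector = EdgeAnomalyDetector()
for reading in [10.0, 10.1, 9.9, 10.2, 9.8, 10.0, 10.1, 25.0, 10.0]:
    detector.observe(reading)
print(detector.aggregate())
```

The raw readings never leave the detector; the central layer would only receive the two counters returned by `aggregate()`.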

Raw telemetry, previously uploaded whole to a remote data lake, is filtered and compressed at source. What travels is the analytical summary and labeled samples for training, not the full sensor stream. This cuts egress cost, storage cost, and exposure surface. It also aligns better with the new data-minimization obligations auditors are starting to scrutinize.
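
One possible shape for filtering at source, as a minimal sketch: a deadband filter drops readings that barely move, and a window summary replaces the raw stream. The function names, the deadband value, and the sample readings are hypothetical and chosen only for illustration.

```python
from statistics import mean


def deadband_filter(readings, deadband):
    """Keep a reading only when it differs from the last kept one by more than `deadband`."""
    kept = []
    for value in readings:
        if not kept or abs(value - kept[-1]) > deadband:
            kept.append(value)
    return kept


def summarize_window(readings):
    """Analytical summary that travels upstream instead of the full window."""
    return {
        "count": len(readings),
        "min": min(readings),
        "max": max(readings),
        "mean": mean(readings),
    }


window = [20.0, 20.1, 20.05, 21.0, 21.05, 19.0]
print(deadband_filter(window, deadband=0.5))  # [20.0, 21.0, 19.0]
print(summarize_window(window))
```

Here six raw readings shrink to three kept values or to a single four-field summary; which of the two travels depends on what the central layer actually needs.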

Machine-learning models run in hybrid mode: training on synthetic or anonymized data in controlled environments, inference at the edge where the process lives, and periodic supervised updates. More and more teams prefer smaller, task-specific models in exchange for keeping control over source data.

Where cloud still makes sense

It would be dishonest to present 2026 as a year of full return to on-prem. Global cloud remains the best option for:

- Workloads without sensitive data.

- Aggregated historical analysis.

- Collaborative development with external partners.

- Any scenario where peak scale clearly exceeds internal capacity.

What has changed is the opening conversation. Three years ago, the default starting point was public cloud and you had to justify why anything stayed at home. Today the starting point is a serious question about where the data lives, who has legal access to it, and what impact a contractual interruption has. Only after answering those questions does infrastructure get chosen, and the increasingly common answer is a mixed architecture that tilts toward European, controlled infrastructure.

The theatrical-sovereign trap

A necessary warning: not every European cloud is equally sovereign. A provider headquartered in the European Union but with physical infrastructure and technical staff fully in another jurisdiction doesn’t offer the real protection the marketing suggests. Effective sovereignty requires three aligned layers:

- Operator’s legal jurisdiction — under what law does the company managing the system operate.

- Physical location of infrastructure — where the data is physically stored and processed.

- Operational control of the human team — who can access the system and under what mandate.

Through 2024 and 2025 several supposedly sovereign providers turned out, on closer inspection, to depend critically on non-European components or support, which practically voided the initial promise. Serious due diligence asks for complete dependency maps, not just flashy certifications. An ISO seal or a logo next to a flag isn’t sovereignty; it’s marketing.
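
A dependency map can be checked mechanically against the three layers. The sketch below is only an assumption about how such a check might look: the `Provider` fields, the `sovereignty_gaps` function, the provider names, and the set of accepted country codes are all invented for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Provider:
    name: str
    legal_jurisdiction: str            # layer 1: law the operator answers to
    infrastructure_locations: list     # layer 2: where data is stored/processed
    ops_team_locations: list           # layer 3: where people with access sit
    dependencies: list = field(default_factory=list)  # nested Provider objects


def sovereignty_gaps(provider, accepted, path=""):
    """Walk the dependency map and list every layer outside `accepted`."""
    label = f"{path}{provider.name}"
    gaps = []
    if provider.legal_jurisdiction not in accepted:
        gaps.append(f"{label}: legal jurisdiction {provider.legal_jurisdiction}")
    for loc in provider.infrastructure_locations:
        if loc not in accepted:
            gaps.append(f"{label}: infrastructure in {loc}")
    for loc in provider.ops_team_locations:
        if loc not in accepted:
            gaps.append(f"{label}: operations team in {loc}")
    for dep in provider.dependencies:
        gaps.extend(sovereignty_gaps(dep, accepted, f"{label} -> "))
    return gaps


# Illustrative subset of accepted jurisdictions, not a complete EU list.
accepted = {"DE", "FR", "ES"}
support = Provider("SupportCo", "US", [], ["US"])
cloud = Provider("EuroCloud", "DE", ["DE"], ["IN"], [support])
for gap in sovereignty_gaps(cloud, accepted):
    print(gap)
```

In this made-up case the provider passes on headquarters and data centre location but fails on its operations team and on a support dependency, which is the pattern the paragraph above warns about.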

How to design now

For a team starting an Industry 4.0 initiative, or reviewing an existing one, the sensible path begins by classifying data before infrastructure:

| Data type | Where it can live |
|---|---|
| Purely operational with no regulatory implication | Global or own cloud |
| With employee or customer personal data | European jurisdiction, GDPR |
| Critical industrial property (recipes, processes) | Own or European infrastructure with explicit contractual clauses |
| Aggregate metrics useful to share with partners | Global cloud with data agreement |
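
The classification above can be encoded so that a deployment plan is checked before anything is provisioned. This is a minimal sketch: the `DataClass` enum, the target labels, and the workload names are assumptions that mirror the table rows, not an established schema.

```python
from enum import Enum


class DataClass(Enum):
    OPERATIONAL = "purely operational, no regulatory implication"
    PERSONAL = "employee or customer personal data"
    INDUSTRIAL_IP = "critical industrial property (recipes, processes)"
    PARTNER_METRICS = "aggregate metrics shared with partners"


# Hypothetical target labels, one set per table row.
ALLOWED_TARGETS = {
    DataClass.OPERATIONAL: {"global_cloud", "own_cloud"},
    DataClass.PERSONAL: {"european_jurisdiction"},
    DataClass.INDUSTRIAL_IP: {"own_infrastructure", "european_with_clauses"},
    DataClass.PARTNER_METRICS: {"global_cloud_with_data_agreement"},
}


def placement_violations(plan):
    """`plan` is an iterable of (workload, DataClass, target) tuples."""
    return [
        f"{workload}: {data_class.name} may not live on {target}"
        for workload, data_class, target in plan
        if target not in ALLOWED_TARGETS[data_class]
    ]


plan = [
    ("oee-dashboard", DataClass.OPERATIONAL, "global_cloud"),
    ("recipe-store", DataClass.INDUSTRIAL_IP, "global_cloud"),
]
print(placement_violations(plan))
```

Keeping the map as data rather than scattered conditionals makes it easy for legal and technical teams to review the same artifact.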

Next comes network and flow design. Sensitive workloads must have a path that doesn’t pass through managed third-party services without very concrete jurisdiction and access clauses. The edge, with lightweight Kubernetes or even well-operated Docker Swarm, covers much more than it seems if the team is willing to take on some operations.
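
The flow rule can also be expressed as a check over each hop a sensitive workload crosses. The `Hop` fields, the `uncovered_hops` function, and the hop names below are hypothetical; the sketch assumes each hop has already been annotated with the clauses its contract contains.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hop:
    name: str
    managed_by_third_party: bool
    jurisdiction_clause: bool = False
    access_clause: bool = False


def uncovered_hops(path):
    """Return third-party hops that lack either a jurisdiction or an access clause."""
    return [
        hop.name
        for hop in path
        if hop.managed_by_third_party
        and not (hop.jurisdiction_clause and hop.access_clause)
    ]


sensitive_path = [
    Hop("plant-edge-cluster", managed_by_third_party=False),
    Hop("managed-message-queue", managed_by_third_party=True, jurisdiction_clause=True),
    Hop("eu-object-store", True, jurisdiction_clause=True, access_clause=True),
]
print(uncovered_hops(sensitive_path))  # ['managed-message-queue']
```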

Contracts matter as much as architecture. They need clear clauses on jurisdiction, on data handling in case of external data requests, on migration windows, and on real, not theoretical, technical portability. In 2026 legal and technical teams must talk from day one; an elegant design without contractual backing is worthless the day a crisis hits.

Conclusion

Industry 4.0 isn’t dead; what’s dead is its naive version, the one that assumed connecting everything to global cloud was always the best idea. Data sovereignty has stopped being an empty political slogan and become a practical requirement that shows up in tenders, risk committees, and contract renewals. Ignoring it gets expensive, and it does so faster every year.

Whoever designs a connected plant today must integrate three dimensions: technical, regulatory, and strategic. None alone is enough:

- Technical without regulatory yields elegant deployments an inspector can dismantle.

- Regulatory without technical yields expensive, inefficient architectures.

- Strategic without the other two yields pretty speeches and deliveries that don’t work.

Teams that manage to integrate all three will be well-positioned for the next decade. Those treating sovereignty as a decorative add-on will keep paying more and exposing themselves to more risk than they can measure.