Explaining AI Through XAI

Updated: 2026-05-03

Artificial intelligence models make decisions that affect people — in medical diagnoses, court sentences, credit approvals — yet for years those decisions have been black boxes. XAI (Explainable Artificial Intelligence) is the collection of techniques and frameworks that allow us to open that box and answer the most important question: why did the model make this decision?

Key takeaways

- XAI is not a single technique but a collection of methods for making AI model decisions understandable.

- The most widely adopted approaches are LIME (local linear approximations), SHAP (Shapley values), and activation map visualisation.

- Explainability is necessary to detect bias, comply with regulations such as the GDPR, and build trust with end users.

- The sectors with the highest adoption are healthcare, justice, and financial services.

- There is a real tension between model performance and explainability: the most accurate models tend to be the least interpretable.

Why AI opacity is a problem

Modern AI systems — deep neural networks, transformers, gradient boosting models with thousands of trees — achieve exceptional performance on specific tasks. But that capability comes from the composition of millions of parameters that no human can read intuitively.

Opacity creates three concrete problems:

- Trust: users are reluctant to act on decisions from systems they don’t understand, especially when the cost of an error is high.

- Hidden bias: if the variables influencing a decision can’t be seen, indirect discrimination based on race, gender, or postcode is impossible to detect.

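- Regulatory compliance: Article 22 of the GDPR gives people the right not to be subject to decisions based solely on automated processing that produce legal or similarly significant effects, and Articles 13 to 15 require “meaningful information about the logic involved” in such decisions. Sector-specific regulations in banking and insurance add further requirements.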

XAI is the technical response to all three problems.

Main XAI methods

Explainable AI is not a single tool: it is a family of approaches with different trade-offs between fidelity, computation, and comprehensibility.

LIME (Local Interpretable Model-agnostic Explanations)

LIME builds a simple model, usually linear, that locally approximates the behaviour of the complex model around a specific prediction. The idea is that even if the global model is opaque, its behaviour in the neighbourhood of a concrete example can be approximated in a comprehensible way: LIME perturbs the input, queries the model on the perturbed samples, weights each sample by its proximity to the original, and fits the simple model to those weighted predictions. This makes it well suited to instance-level explanations such as “this email was flagged as spam because it contained words X and Y.”
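The procedure above can be sketched without any dependencies. This is a minimal illustration in the spirit of LIME, not the `lime` package’s API (which handles perturbation sampling and regularisation differently): it assumes a hypothetical two-feature `black_box` scorer, Gaussian perturbations around the instance, and an exponential proximity kernel, and fits a weighted least-squares surrogate. The names `black_box`, `solve`, and `lime_explain` are illustrative.

```python
import math
import random


def black_box(x):
    # Hypothetical opaque model: a nonlinear score over two features.
    return 1 / (1 + math.exp(-(3 * x[0] ** 2 - 2 * x[1] + x[0] * x[1])))


def solve(a, b):
    # Solve the square system a @ w = b by Gaussian elimination with partial pivoting.
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    w = [0.0] * n
    for i in reversed(range(n)):
        w[i] = (m[i][n] - sum(m[i][j] * w[j] for j in range(i + 1, n))) / m[i][i]
    return w


def lime_explain(model, x, n_samples=2000, scale=0.5, width=0.75, seed=0):
    """Fit a proximity-weighted linear surrogate to `model` around instance `x`.

    Returns (intercept, slopes); slopes[i] is the local effect of feature i.
    """
    rng = random.Random(seed)
    d = len(x)
    # Accumulate the weighted normal equations (Z^T W Z) w = Z^T W y,
    # where each row of Z is [1, z_1, ..., z_d] (intercept column first).
    ztz = [[0.0] * (d + 1) for _ in range(d + 1)]
    zty = [0.0] * (d + 1)
    for _ in range(n_samples):
        z = [xi + rng.gauss(0.0, scale) for xi in x]  # perturbed neighbour of x
        dist2 = sum((zi - xi) ** 2 for zi, xi in zip(z, x))
        weight = math.exp(-dist2 / width ** 2)  # exponential proximity kernel
        row = [1.0] + z
        y = model(z)  # query the black box on the perturbed sample
        for i in range(d + 1):
            zty[i] += weight * row[i] * y
            for j in range(d + 1):
                ztz[i][j] += weight * row[i] * row[j]
    coef = solve(ztz, zty)
    return coef[0], coef[1:]


intercept, slopes = lime_explain(black_box, [1.0, 0.5])
```

Around the point `[1.0, 0.5]` the first slope comes out positive and the second negative, matching the direction in which each feature moves the black-box score locally. If the model passed in is itself linear, the surrogate recovers its coefficients, which is a useful sanity check on the fitting code.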

SHAP (SHapley Additive exPlanations)

SHAP uses cooperative game theory, specifically Shapley values, to assign each variable a fair share of the difference between the model’s prediction and a baseline prediction. Unlike LIME, SHAP has solid mathematical properties (efficiency: the contributions sum exactly to that difference; consistency), and because the attributions are additive and on a common scale they can be compared and aggregated across many examples. It has become a de facto standard for explaining tabular models.
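For a handful of features, exact Shapley values can be computed by brute force, which makes the “fair contribution” idea concrete. This sketch assumes a hypothetical three-feature `model` and a single all-zeros baseline standing in for “feature absent”; the `shap` library instead uses background datasets and model-specific algorithms (such as TreeSHAP for tree ensembles) precisely to avoid this exponential enumeration.

```python
from itertools import combinations
from math import factorial


def model(x):
    # Hypothetical tabular model: additive terms plus an interaction between features 0 and 2.
    return 2.0 * x[0] + 1.0 * x[1] + 0.5 * x[0] * x[2]


def coalition_value(model, x, baseline, coalition):
    # Features in the coalition keep their real values; the others are set to the baseline.
    z = [x[i] if i in coalition else baseline[i] for i in range(len(x))]
    return model(z)


def shapley_values(model, x, baseline):
    """Exact Shapley values by enumerating every coalition (O(2^n) model calls)."""
    n = len(x)
    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            for subset in combinations(others, size):
                s = set(subset)
                # Shapley weight: |S|! (n - |S| - 1)! / n!
                weight = factorial(size) * factorial(n - size - 1) / factorial(n)
                gain = coalition_value(model, x, baseline, s | {i}) - coalition_value(model, x, baseline, s)
                phi[i] += weight * gain
    return phi


x = [1.0, 2.0, 3.0]
baseline = [0.0, 0.0, 0.0]
phi = shapley_values(model, x, baseline)
# Efficiency: the contributions sum to f(x) - f(baseline).
print(phi, sum(phi), model(x) - model(baseline))
```

Here `phi` is approximately `[2.75, 2.0, 0.75]`: feature 1 receives its whole linear term, while the interaction term between features 0 and 2 (worth 1.5 at this point) is split equally between them, and the three values add up to `model(x) - model(baseline) = 5.5`.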

Activation visualisation

In computer vision models, techniques like Grad-CAM (Gradient-weighted Class Activation Mapping) generate heatmaps showing which regions of an image contributed most to a given class prediction. They are the standard tool for validating that the model looks where it should: if a pneumonia classifier focuses on a hospital marker in the corner of an X-ray rather than on lung tissue, it has learned a spurious shortcut present in the training data rather than the pathology itself.
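The core Grad-CAM computation is short once the gradients are available: each feature map k gets a weight equal to the spatial average of the class score’s gradient with respect to it, and the heatmap is the ReLU of the weighted sum of the maps. In this sketch the activations and gradients are supplied as toy nested lists; in practice they would come from a framework’s autodiff (for example PyTorch or TensorFlow), and the resulting map is usually upsampled to the input resolution, which is omitted here.

```python
def grad_cam(activations, gradients):
    """Combine feature maps into a Grad-CAM heatmap.

    activations[k][i][j]: value of feature map k at spatial position (i, j).
    gradients[k][i][j]: d(class score) / d(activations[k][i][j]), assumed to be
    supplied by a deep-learning framework's autodiff (not computed here).
    """
    h, w = len(activations[0]), len(activations[0][0])
    # Channel weight alpha_k: gradient averaged over all spatial positions.
    alphas = [sum(sum(row) for row in g) / (h * w) for g in gradients]
    # Weighted sum of the feature maps, then ReLU to keep only positive evidence.
    cam = [
        [max(0.0, sum(a * act[i][j] for a, act in zip(alphas, activations))) for j in range(w)]
        for i in range(h)
    ]
    # Scale to [0, 1] for display; an all-zero map is returned unchanged.
    peak = max(max(row) for row in cam)
    if peak > 0:
        cam = [[v / peak for v in row] for row in cam]
    return cam


# Toy 2x2 example with two channels: channel 0 supports the class, channel 1 opposes it.
acts = [[[1.0, 0.0], [0.0, 0.0]], [[0.0, 0.0], [0.0, 1.0]]]
grads = [[[1.0, 1.0], [1.0, 1.0]], [[-1.0, -1.0], [-1.0, -1.0]]]
heatmap = grad_cam(acts, grads)  # -> [[1.0, 0.0], [0.0, 0.0]]
```

Only the top-left cell lights up: the bottom-right activation belongs to a channel whose gradient is negative, so the ReLU suppresses it rather than showing it as evidence for the class.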

Intrinsically interpretable models

Sometimes the best XAI solution is not to use an opaque model at all. Shallow decision trees, logistic regressions with few variables and, with some caveats, attention-based architectures designed for transparency offer direct interpretability without needing an additional explanation layer.
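A small logistic regression shows why no extra layer is needed: each term `coef * value` is exactly that feature’s additive contribution to the log-odds. The feature names and coefficients below are hypothetical, chosen only for illustration and not fitted to any real data.

```python
import math

# Hypothetical coefficients for a small logistic regression (illustrative, not fitted to real data).
INTERCEPT = -1.0
COEFS = {"income_k": 0.03, "debt_ratio": -2.5, "late_payments": -0.8}


def explain_logistic(features):
    """Return the predicted probability and each feature's contribution to the log-odds."""
    # In a logistic regression, coef * value is exactly that feature's additive
    # contribution to the log-odds, so the model is its own explanation.
    contributions = {name: coef * features[name] for name, coef in COEFS.items()}
    log_odds = INTERCEPT + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-log_odds))
    return probability, contributions


prob, contrib = explain_logistic({"income_k": 60, "debt_ratio": 0.4, "late_payments": 1})
```

For this input the contributions are about +1.8, -1.0 and -0.8, giving log-odds of about -1.0 and a probability of roughly 0.27; anyone can check the arithmetic by hand, which is the point.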

Practical applications of XAI

Healthcare

Clinical decision support systems that use AI models to predict risks (sepsis, hospital readmission, tumour progression) must be able to explain their recommendations to clinicians. Without explainability, clinicians either don’t trust the system or can’t detect when it fails. SHAP is widely used here to show which laboratory values or vital signs most influenced the risk prediction.

Justice and risk assessment

Systems like COMPAS, used in the US to estimate the probability of recidivism, have been criticised for lack of transparency and racial bias. Regulatory and academic pressure has driven XAI adoption in this domain to audit the variables that determine risk scores.

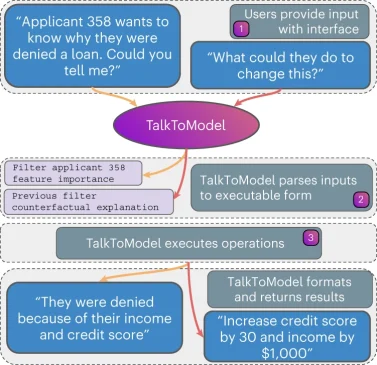

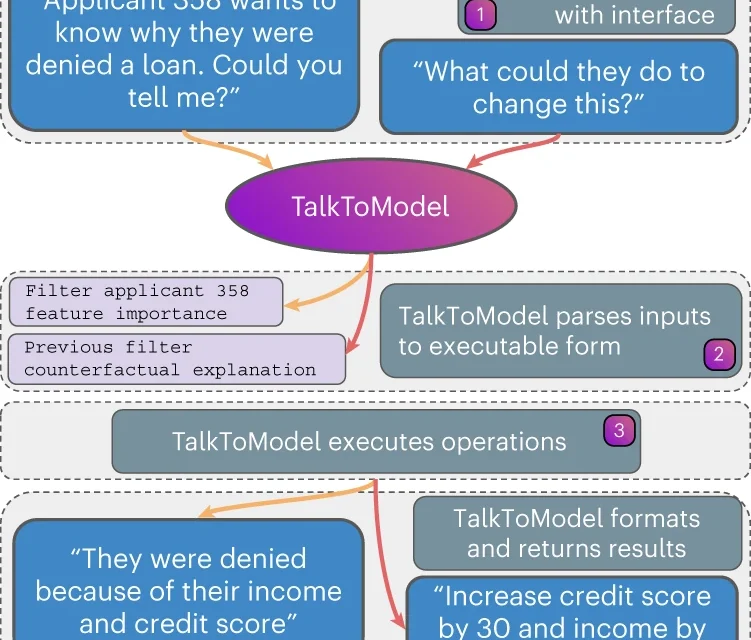

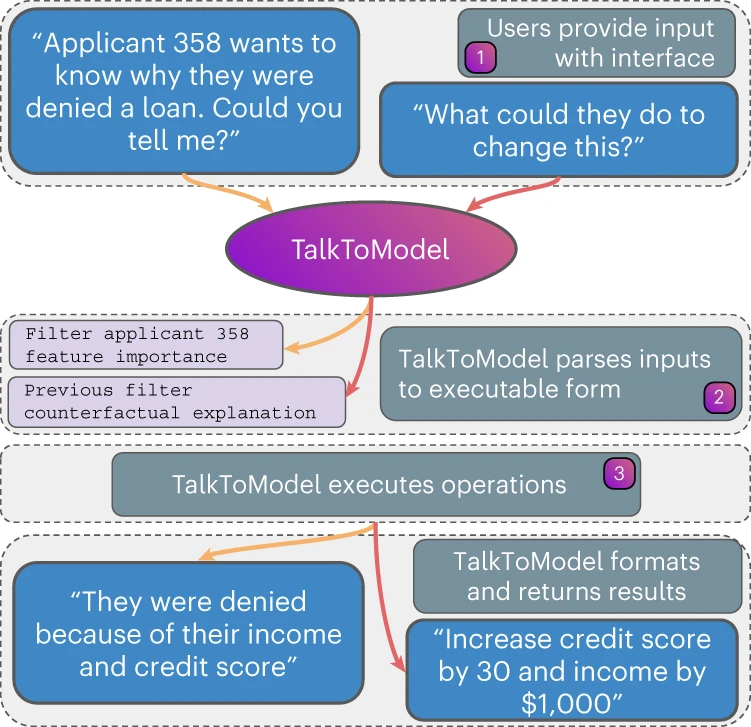

Financial services

Approvals and denials of credit and insurance are subject to regulations requiring individualised explanations. Banks and insurers use XAI to generate the reasons stated in the required denial letters and to detect proxy variables that may act as indirect discriminators.
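Both uses can be sketched in a few lines, assuming per-feature contributions are already available (for example from SHAP or from a logistic regression’s terms). `reason_codes` picks the features that pushed hardest toward denial, and `flag_proxies` is a simple correlation screen for candidate proxy variables. All names, data and the 0.5 threshold are hypothetical; mapping codes to the wording of an actual letter is institution- and regulator-specific and not shown, and a correlation screen only catches linear, single-variable proxies.

```python
def reason_codes(contributions, top_k=2):
    """Return up to top_k features that pushed the score most strongly toward denial.

    contributions: feature -> signed contribution to an approval score (for example
    SHAP values or logistic-regression terms); negative values push toward denial.
    """
    adverse = sorted((c, name) for name, c in contributions.items() if c < 0)
    return [name for _, name in adverse[:top_k]]


def pearson(xs, ys):
    # Pearson correlation; assumes neither column is constant.
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(xs, ys))
    sx = sum((a - mx) ** 2 for a in xs) ** 0.5
    sy = sum((b - my) ** 2 for b in ys) ** 0.5
    return cov / (sx * sy)


def flag_proxies(columns, protected, threshold=0.5):
    # Flag features whose absolute correlation with the protected attribute exceeds the threshold.
    return [name for name, values in columns.items() if abs(pearson(values, protected)) > threshold]


codes = reason_codes({"income_k": 1.8, "debt_ratio": -1.0, "late_payments": -0.8, "account_age": -0.1})
# -> ["debt_ratio", "late_payments"]

protected = [0, 0, 1, 1]  # hypothetical binary protected attribute
columns = {"postcode_zone": [1, 1, 2, 2], "income_k": [50, 60, 55, 65]}
proxies = flag_proxies(columns, protected)
# -> ["postcode_zone"]
```

In the toy data `postcode_zone` tracks the protected attribute perfectly (correlation 1.0), while `income_k` correlates at about 0.45 and stays below the threshold.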

Marketing and personalisation

Recommendation and segmentation systems benefit from XAI because it lets marketing teams understand why the model groups certain customers together or suggests certain products. This connects to the recommendation and collaborative filtering techniques detailed elsewhere.

The tension between accuracy and explainability

The most fundamental problem in XAI, and one worth stating plainly, is that there is a real trade-off between performance and interpretability. A gradient boosting model with 5,000 trees will typically outperform a depth-4 decision tree on most tasks. If the most accurate model is needed, explainability must be added as a post-hoc layer (LIME, SHAP); if direct interpretability is the priority, a performance penalty may have to be accepted.
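A rough count of leaves, each one an if-then rule a reviewer would have to read, illustrates the gap in scale. The depth of the boosted trees is not stated above; depth 6 is an assumption used only for this arithmetic. A boosted prediction also sums one leaf value from every tree, so no single rule accounts for it.

```latex
\underbrace{2^{4}}_{\text{one depth-4 tree}} = 16 \text{ leaves at most}
\qquad\text{vs.}\qquad
\underbrace{5000 \times 2^{6}}_{\text{5,000 depth-6 trees}} = 320{,}000 \text{ leaves at most}
```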

This tension parallels the argument, made elsewhere in the context of writing readable code with tools like GitHub Codespaces, that readability is a value in itself and not just a convenience. The same applies to models: explainability is not decoration but part of system quality.

Recent research trends point to models that are both accurate and more interpretable — transformers with more transparent attention mechanisms, neural rule models — though none has definitively resolved the tension yet.

Conclusion

XAI responds to the legitimate demand that AI systems justify their decisions. Post-hoc methods like LIME and SHAP allow the black box to be inspected without giving up the best-performing models, although their explanations summarise the model’s behaviour rather than expose its full internal logic. Adopting XAI is not optional in regulated or high-stakes environments: it is the difference between an AI system that builds trust and one that generates resistance.