Parca, Beyla and Grafana: a sidecar-free observability stack

Updated: 2026-05-03

Observability in 2025 has a recurring promise: see what is happening inside your processes without touching code. The combination of Parca for continuous profiling, Beyla for eBPF auto-instrumentation, and Grafana for visualisation has been one of the stacks drawing the most conference interest for a year and a half. This post gathers what I learned integrating the three pieces in a couple of test clusters, and what still does not quite fit for production.

Key takeaways

- Parca captures CPU profiles from all node processes continuously via eBPF — you do not turn profiling on when you suspect a problem.

- Beyla generates RED metrics, distributed traces, and logs from network syscalls, without modifying the binary or injecting a library.

- All three pieces share eBPF as a foundation, which eliminates sidecars: one agent per node instead of one container per pod.

- Correlation between Beyla traces and Parca profiles still has gaps in async processes: up to 40% of profiles may arrive without a linked trace_id.

- The stack requires hostPID and hostNetwork privileges, so the trust model changes and security advisories are worth watching.

What we are talking about

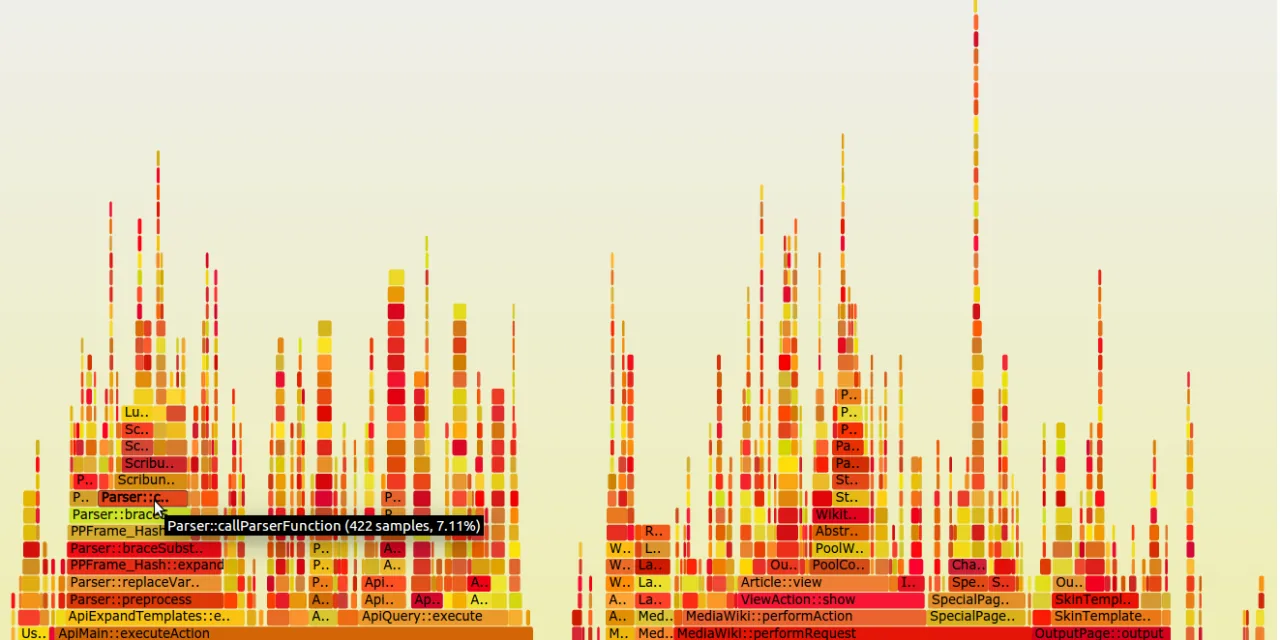

Parca is a Polar Signals project that does continuous profiling. It captures CPU stack samples from every process on the system via eBPF, aggregates them with configurable criteria, and persists them in its own columnar storage. The idea is to have profiles available all the time, not only when a manual tool is started. When a CPU spike hits at 3 in the morning, you can see exactly which function caused it without reproducing the incident.

Beyla is Grafana Labs’s auto-instrumentation agent released in 2023. It also uses eBPF, but with a different goal: generating RED metrics, distributed traces, and logs by observing the process’s network calls and user-space functions. It does not require binary modification or library injection, and it supports HTTP, gRPC, SQL, and several messaging systems. The big advantage is that you get standard OpenTelemetry telemetry without developers having to touch the code.

Grafana is the familiar piece: dashboards, alerts, PromQL, LogQL, and TraceQL queries. In this stack it acts as a single layer where Beyla metrics, logs, traces, and Parca profiles are queried. The Parca Grafana plugin integration is the most recent piece and the one that deserves evaluation.

What they share and why it matters

The three pieces share a foundation: eBPF. This is not decorative; it defines the stack’s properties. eBPF lets you attach probes to the kernel without modifying the kernel itself and at low overhead, and the in-kernel verifier checks every program before it loads, which is what keeps probes isolated and safe by design. This contrasts with older models where instrumentation required modifying the observed process or injecting a library via LD_PRELOAD.

The practical consequence is that the stack needs no sidecars. There is no extra container per pod to maintain, version, and monitor. The eBPF agent runs once per node, observes all processes, and sends data to the backend. In my environment I went from 120 trace sidecars to 3 eBPF agents, and cluster simplicity improved noticeably.

Parca in detail

Parca captures CPU profiles via the perf_event eBPF probe, which samples stacks every 10 or 20 milliseconds. Samples are aggregated in memory and compressed into the pprof format. The agent sends aggregates to the Parca server every minute, where they are stored in a custom columnar database built on FrostDB.
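The aggregation step is easier to picture with a toy example. The sketch below is not Parca's code: it assumes each sample is just a list of function names from root to leaf, and the function names `aggregate_samples` and `self_time_share` are made up for illustration. It collapses identical stacks into counts and then attributes samples to the leaf frame, which is roughly what a flame graph visualises.

```python
from collections import Counter

def aggregate_samples(samples):
    """Collapse raw stack samples into {stack: count}.

    Each sample is a list of frames ordered root -> leaf (an assumption
    made for this sketch, not Parca's wire format).
    """
    counts = Counter()
    for stack in samples:
        counts[tuple(stack)] += 1
    return counts

def self_time_share(counts):
    """Fraction of all samples whose leaf frame is each function."""
    total = sum(counts.values())
    if total == 0:
        return {}
    leaf_counts = Counter()
    for stack, n in counts.items():
        leaf_counts[stack[-1]] += n
    return {fn: n / total for fn, n in leaf_counts.items()}

# Four hypothetical samples taken during one aggregation window.
samples = [
    ["main", "handle_request", "json_encode"],
    ["main", "handle_request", "json_encode"],
    ["main", "handle_request", "db_query"],
    ["main", "gc"],
]
counts = aggregate_samples(samples)
shares = self_time_share(counts)
print(shares)
```

Because identical stacks collapse into a single counter, the data shipped to the server grows with the number of distinct stacks rather than with the number of samples, which is part of why continuous sampling stays cheap.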

What differentiates Parca from a classic profiler is continuity. You do not turn profiling on when you suspect a problem; it was already on when the problem happened. In my tests I found two performance issues that would have been impossible to capture with manual profiling: a JSON serialisation regression appearing on a rare branch, and a Java GC spike lasting 200 ms every 6 hours.

The cost of running Parca is surprisingly low. On a 32-core node with 150 active processes, the agent consumes between 0.5% and 1% CPU. Storage grows about 30 MB per node per day at default sampling. Parca supports tiered retention with hot data on local disk and cold on S3.
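To turn the storage figure into a sizing estimate, a back-of-the-envelope calculation is enough. This sketch assumes the roughly 30 MB per node per day I measured at default sampling holds for your workload and that growth is linear in nodes and days; the helper name `profile_storage_gb` is hypothetical, and real usage will vary with process count and stack diversity.

```python
def profile_storage_gb(nodes, retention_days, mb_per_node_per_day=30.0):
    """Estimate profile storage in GB (1 GB = 1000 MB), assuming linear growth."""
    return nodes * retention_days * mb_per_node_per_day / 1000.0

# A 3-node test cluster keeping 30 days of profiles.
small = profile_storage_gb(nodes=3, retention_days=30)

# A 50-node cluster keeping 90 days.
large = profile_storage_gb(nodes=50, retention_days=90)

print(f"3 nodes, 30 days:  {small:.1f} GB")
print(f"50 nodes, 90 days: {large:.1f} GB")
```

Even the larger case stays in the low hundreds of gigabytes, which is why tiering only the cold portion to object storage is usually enough.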

Beyla in detail

Beyla takes a complementary approach. Instead of sampling stacks, it observes the syscalls associated with network operations (accept, connect, send, recv) and maps them to HTTP, gRPC, and SQL protocols. With that information it automatically generates three signals: rate, error, and duration metrics per service; trace spans with request flow; and structured logs of each call.
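As a rough illustration of what rate, error, and duration mean once requests have been observed, here is a minimal sketch. It is not how Beyla computes its metrics: the `ObservedRequest` type, its fields, and the choice to count HTTP 5xx as errors are all assumptions made for the example.

```python
from dataclasses import dataclass
from statistics import median

@dataclass
class ObservedRequest:
    service: str        # hypothetical service name derived from the process
    status: int         # HTTP status code seen on the wire
    duration_ms: float  # time between request and response

def red_metrics(requests, window_seconds):
    """Compute per-service rate, error ratio, and median duration."""
    by_service = {}
    for req in requests:
        by_service.setdefault(req.service, []).append(req)

    result = {}
    for service, reqs in by_service.items():
        errors = sum(1 for r in reqs if r.status >= 500)  # assumption: 5xx = error
        result[service] = {
            "rate_rps": len(reqs) / window_seconds,
            "error_ratio": errors / len(reqs),
            "median_ms": median(r.duration_ms for r in reqs),
        }
    return result

# Four hypothetical requests seen for one service in a 2-second window.
observed = [
    ObservedRequest("checkout", 200, 10.0),
    ObservedRequest("checkout", 200, 20.0),
    ObservedRequest("checkout", 500, 30.0),
    ObservedRequest("checkout", 200, 40.0),
]
metrics = red_metrics(observed, window_seconds=2)
print(metrics["checkout"])
```

Everything in `ObservedRequest` is visible from outside the process, which is the point: none of these numbers needs cooperation from the application.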

The magic is that it does not require process cooperation. A binary compiled two years ago without observability in mind gets, when run next to Beyla, standard OpenTelemetry traces. I tested this with a Django app that was nearly impossible to instrument manually and with an old Go binary whose source we no longer had. In both cases RED metrics appeared within minutes.

Beyla’s limit is that it only observes what flows through the network or through well-known syscalls. It cannot add spans inside code, it cannot read local variables, and it cannot follow logical flow inside a process. For deep business logic observability, manual instrumentation is still required. Beyla is excellent for getting 70% of the value with no work, but the remaining 30% demands explicit work.

Where integration still rubs

The stack works well together, but I hit two points of friction.

First is signal correlation. Grafana lets you jump from a trace to its associated Parca profiles, but correlation depends on the trace carrying the correct trace_id and on Parca tagging the profile with that trace_id. In theory Beyla emits trace_id and Parca receives it, but in practice context propagation in async processes still has gaps. In a Python service using aiohttp I saw profiles with no linked trace_id 40% of the time.
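One way to quantify this gap in your own environment is to export both signals and check how many profiles can actually be joined to a trace. The sketch below assumes profiles and traces are available as plain dictionaries with a `trace_id` key; that shape and the function names are hypothetical, not an export format of Parca or Tempo.

```python
def split_by_link(profiles, traces):
    """Separate profiles that match a known trace from those that do not."""
    known_ids = {t["trace_id"] for t in traces}
    linked, orphaned = [], []
    for profile in profiles:
        trace_id = profile.get("trace_id")  # may be missing or None
        if trace_id is not None and trace_id in known_ids:
            linked.append(profile)
        else:
            orphaned.append(profile)
    return linked, orphaned

def unlinked_ratio(profiles, traces):
    """Fraction of profiles that cannot be joined to any trace."""
    if not profiles:
        return 0.0
    _, orphaned = split_by_link(profiles, traces)
    return len(orphaned) / len(profiles)

# Hypothetical export: five profiles, two of which lost their trace context.
traces = [{"trace_id": "a1"}, {"trace_id": "b2"}, {"trace_id": "c3"}]
profiles = [
    {"id": 1, "trace_id": "a1"},
    {"id": 2, "trace_id": "b2"},
    {"id": 3, "trace_id": None},
    {"id": 4, "trace_id": "c3"},
    {"id": 5},
]
ratio = unlinked_ratio(profiles, traces)
print(f"{ratio:.0%} of profiles have no linked trace")
```

Tracking this ratio per service over time makes it easy to see which runtimes lose context and whether an agent upgrade actually helped.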

Second is language support. Parca captures profiles from all processes equally, but the ability to symbolise stacks — translating memory addresses to function names — depends on the binary. For Go symbolisation works out of the box. For C and C++ it depends on having debug symbols available. For Java and the JVM an additional agent is needed to export the JIT compile map. For Node.js and Python, support has improved in 2025 but is not perfect.
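Symbolisation itself is conceptually a range lookup: given an address, find the function whose address range contains it. A minimal sketch, assuming a symbol table of `(start_address, size, name)` entries such as one could extract from debug symbols; the `SymbolTable` class and the addresses are invented for illustration and this is not Parca's symboliser.

```python
import bisect

class SymbolTable:
    """Maps instruction addresses to function names via binary search."""

    def __init__(self, symbols):
        # symbols: iterable of (start_address, size_in_bytes, name)
        self._symbols = sorted(symbols)
        self._starts = [start for start, _, _ in self._symbols]

    def resolve(self, address):
        """Return the function containing `address`, or None if unknown."""
        index = bisect.bisect_right(self._starts, address) - 1
        if index < 0:
            return None  # address is below the first known symbol
        start, size, name = self._symbols[index]
        if address < start + size:
            return name
        return None  # address falls in a gap between symbols

table = SymbolTable([
    (0x1000, 0x40, "main"),
    (0x1040, 0x80, "handle_request"),
    (0x2000, 0x20, "json_encode"),
])

# A sampled stack, leaf first; the last address has no symbol.
raw_stack = [0x2004, 0x1050, 0x1010, 0x3000]
symbolised = [table.resolve(addr) or hex(addr) for addr in raw_stack]
print(symbolised)
```

When the table is missing, as with a stripped C++ binary or JIT-compiled code whose map was never exported, every frame ends up like the last one here: a bare address that tells you little.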

Configuring the stack

Setting up the three pieces in a test cluster is not hard, but order matters:

- Parca agent as a DaemonSet with privileged permissions for eBPF, and the Parca server as a Deployment with persistent storage.

- Beyla as a DaemonSet, or as a sidecar next to individual pods if you prefer to scope it per service, with configuration for the services to instrument.

- Grafana with Parca plugins and datasources for Tempo, Loki, and Mimir or Prometheus.

The complication lies in permissions and networking. The eBPF agents require hostPID, hostNetwork, and several Linux capabilities. In clusters with a strict Pod Security policy, explicit exceptions are needed. The trust model changes: agents have very wide visibility over the node.
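Before asking for those exceptions, it helps to list exactly what each agent manifest requests. The sketch below walks a pod spec, represented as a plain dictionary, and reports the host-level settings it finds. The field names (`hostPID`, `hostNetwork`, `securityContext.privileged`, `capabilities.add`) are standard Kubernetes pod spec fields; the example spec and the capabilities it lists are illustrative, not the documented requirements of Parca or Beyla.

```python
def host_level_settings(pod_spec):
    """List the host-level privileges a pod spec asks for."""
    findings = []
    for field in ("hostPID", "hostNetwork"):
        if pod_spec.get(field):
            findings.append(field)
    for container in pod_spec.get("containers", []):
        name = container.get("name", "<unnamed>")
        security = container.get("securityContext", {})
        if security.get("privileged"):
            findings.append(f"{name}: privileged")
        for capability in security.get("capabilities", {}).get("add", []):
            findings.append(f"{name}: capability {capability}")
    return findings

# Illustrative agent pod spec; check each project's manifests for the real list.
agent_spec = {
    "hostPID": True,
    "hostNetwork": True,
    "containers": [
        {
            "name": "ebpf-agent",
            "securityContext": {"capabilities": {"add": ["BPF", "PERFMON"]}},
        }
    ],
}

for finding in host_level_settings(agent_spec):
    print(finding)
```

Running a check like this against every agent manifest gives security reviewers a concrete list to approve instead of a blanket "privileged" exception.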

Where this stack is not the answer

There are cases where the sidecar-free stack is not appropriate:

- Very old kernels (earlier than 5.4): eBPF support is limited. In 2025 this affects mainly vSphere clusters or legacy systems.

- Internal business logic instrumentation: deciding which part of price calculation is slow still requires manual OpenTelemetry instrumentation.

- Teams that lack observability culture: having continuous profiles and automatic RED metrics is of little use if no one looks at them.

When it is worth it

My practical rule is that the Parca, Beyla, Grafana stack pays off mainly in two scenarios:

- Teams with many heterogeneous applications where manual instrumentation is unworkable by volume. With thirty services in different languages, the saving from not adding the OTel SDK to each is enormous.

- Teams already using Grafana who want depth without switching tools. Native integration reduces friction and the learning curve is short.

In homogeneous environments with few services, manual OpenTelemetry instrumentation is comparable in effort and gives more control.

My take

What I find interesting about the stack is that it represents a mental shift about observability. Before, instrumentation was the responsibility of developers, who had to add code to make their services observable. Now observability can be an infrastructure layer, external to code, that the operator activates. This separation of responsibilities lets platform teams deliver observability as a service without depending on each product team doing its part.

Stack integration still has rough edges. Signal correlation, JVM stack symbolisation, and async context propagation are areas lacking polish. I do not think these will be resolved before 2026, but the direction is clear and quarterly progress is visible.

What does not change is that observability remains a discipline, not a tool. If a team already watches metrics and has a mature on-call chain, this stack gives a real depth jump. If not, first build the discipline and then add the tools.