Federated Learning and Privacy: Data Protection

Actualizado: 2026-05-03

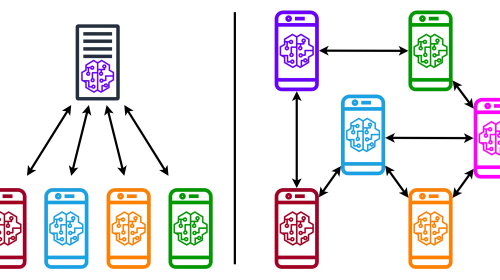

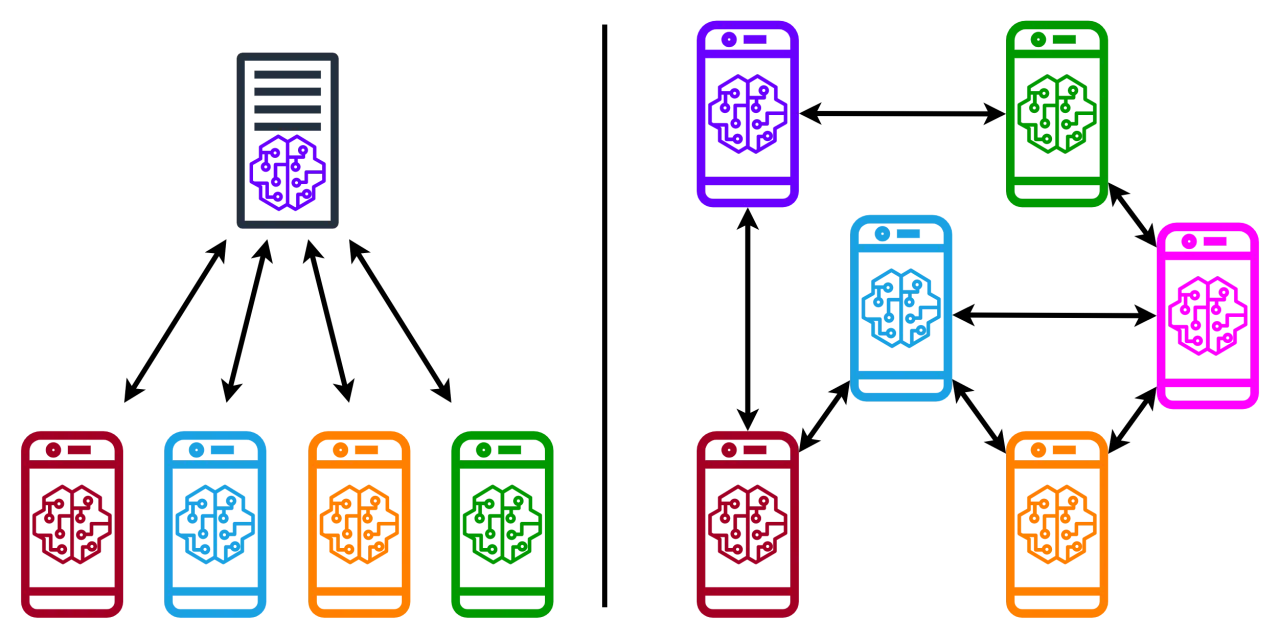

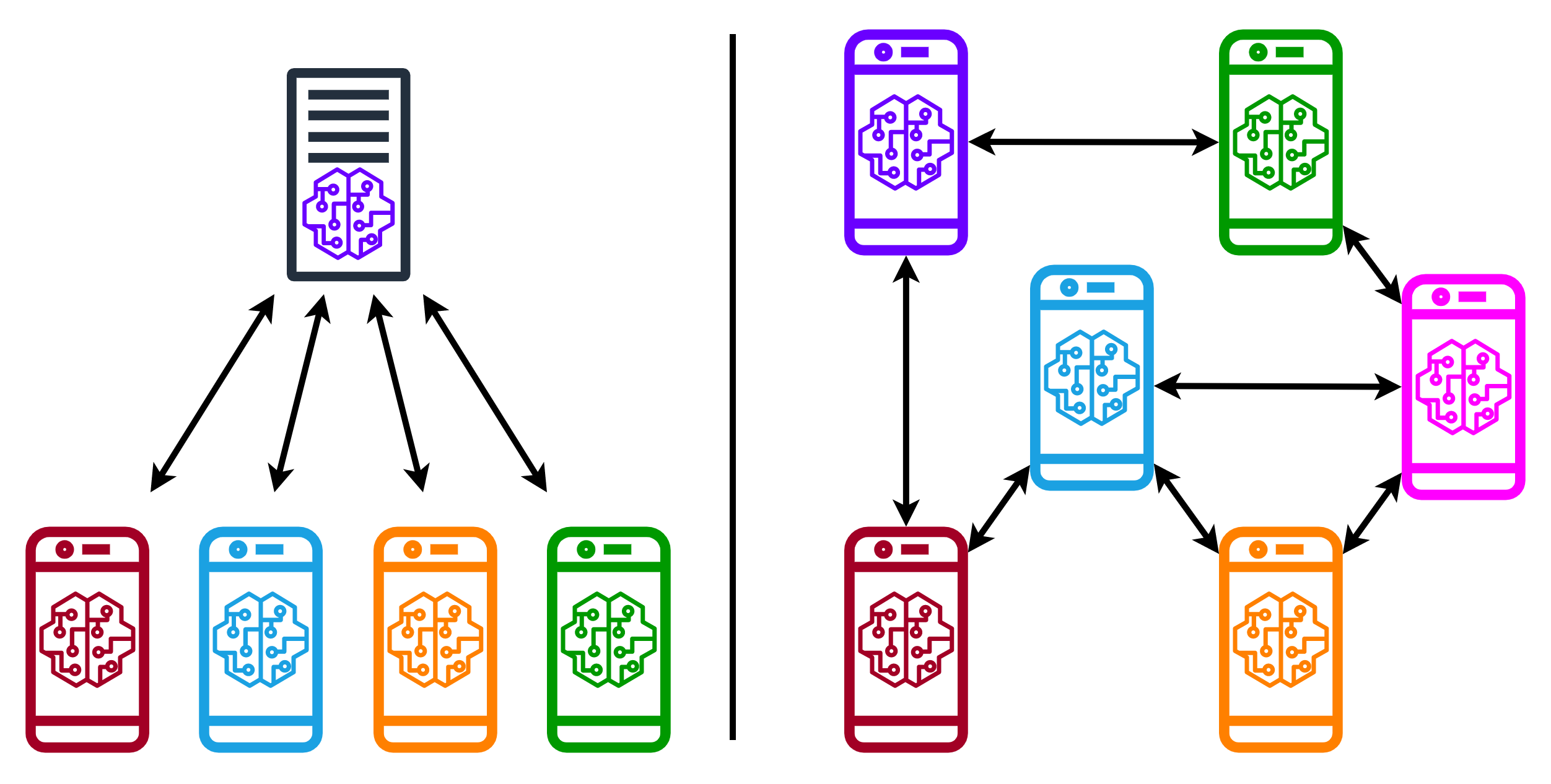

Federated learning is a distributed machine learning technique that lets multiple organisations collaborate on building AI models without sharing their private data. Instead of centralising data on a common server, each participant locally trains a model on their own data and sends only the model updates (gradients or weights) to the central server, where they are combined. This preserves data privacy at source while enabling more robust models thanks to the diversity of distributed data.

Key takeaways

- Federated learning separates data from the model: organisations retain their data locally and only share model updates.

- Privacy is not guaranteed by default — model updates can leak information about training data without additional defences.

- The main protection techniques are differential privacy (adding noise to gradients) and secure aggregation (combining updates without the server seeing individual ones).

- Federated poisoning attacks are a risk specific to this paradigm: malicious participants can corrupt the global model.

- Federated learning is compatible with regulations like GDPR because data never leaves the owner’s environment.

What is federated learning?

Federated learning was introduced by Google in 2016 to train text prediction models on mobile devices without sending typing history to servers. The basic process is:

- The central server distributes a version of the global model to participants.

- Each participant locally trains the model on their data.

- Each participant sends the updates (gradients or weight differences) to the server.

- The server aggregates the updates — typically via FedAvg (Federated Averaging) — and updates the global model.

- The cycle repeats until the model converges.

Data never leaves the owner’s environment, making the paradigm compatible with regulations like the GDPR (General Data Protection Regulation) and data sovereignty requirements in sectors such as health, finance, and energy.

Privacy challenges in federated learning

Although raw data is not shared, federated learning does not guarantee privacy by default. The main risks are:

- Gradient inference attacks: by analysing the gradients sent by a participant, an adversary can partially reconstruct the original training data. This is especially problematic when training batches are small.

- Membership inference attacks: determining whether a specific example was part of a participant’s training set, based on model behaviour.

- Untrusted central server: the server aggregating models can be compromised or act maliciously, accessing individual updates.

Additionally, federated environments present data heterogeneity (participants’ datasets have different distributions) that can make training unstable or inefficient.

Data protection techniques

Differential privacy

Differential privacy (DP) adds calibrated random noise to gradients before sending them to the server, making it impossible for the server to deduce whether a specific example was in the training data. The parameter ε (epsilon) controls the amount of privacy guaranteed: the lower ε, the greater the privacy but the lower the model utility. The DP-SGD (Differentially Private Stochastic Gradient Descent) variant is the most widely used implementation in practice.

Secure aggregation

Secure Aggregation uses cryptographic techniques so the server can compute the sum of individual updates without seeing any of them individually. The server only obtains the final aggregate, not individual contributions. This protects against a curious or compromised central server.

Homomorphic encryption

Homomorphic encryption allows performing mathematical operations — such as summing gradients — directly on encrypted data without decrypting it. The decrypted result is equivalent to what would be obtained by operating on plain data. Its main practical limitation is high computational cost, making it difficult to scale to large models.

Secure multi-party computation

Secure multi-party computation (MPC) lets multiple parties jointly compute a function over their combined data without any party learning the others’ data. It is more general than secure aggregation and can be combined with other defences.

Attacks specific to the federated paradigm

Federated learning introduces attack vectors that don’t exist in centralised training:

- Federated poisoning: a malicious participant sends false updates designed to degrade the global model or insert backdoor behaviours. The standard defence is outlier detection in updates (Byzantine-robust aggregation) and the use of robust aggregation functions such as RFA or Krum.

- Free-riding: a participant sending random updates to benefit from the global model without contributing. Detectable with update verification techniques.

These risks overlap with those studied in the field of adversarial machine learning, which more broadly addresses attacks on AI systems.

Applications and future

Federated learning has established and emerging applications in:

- Healthcare: hospitals training imaging diagnosis models by sharing gradients without exposing patient records.

- Finance: collaborative fraud detection between competing banks without revealing private transactions.

- Mobile and edge computing: Google’s Gboard keyboard uses federated learning to improve text predictions without sending data to the server.

- Industrial IoT: sensors from multiple plants training predictive maintenance models without centralising proprietary production data — an Industry 4.0 use case.

For environments where visual data is part of training, such as distributed computer vision systems, federated learning enables building quality models without exposing sensitive images.

Conclusion

Federated learning redefines the balance between utility and privacy in AI model training: it enables collaboration without data centralisation. However, real privacy requires additional defences — differential privacy, secure aggregation, or homomorphic encryption — because model updates alone don’t guarantee protection of the underlying data. Combining these techniques with strong regulations is the path toward collaborative and responsible AI.