SLMs at the industrial edge: when the small model is better

Updated: 2026-05-03

Small language models have stopped being laboratory curiosities. Phi-3.5, Gemma 2, Llama 3.2, and the first compact Qwen 2.5 variants have demonstrated that a 2 to 8 billion parameter model, well trained, can solve concrete tasks with production-quality output. That opens real space at the industrial edge where latency, data sovereignty, and connectivity cost make local deployment worth it.

Key takeaways

- SLMs of 2024–2025 outperform large models from two years ago on bounded tasks, thanks to better training corpora and quality tuning.

- The industrial edge — plants, warehouses, point-of-sale — has three constraints favoring local deployment: limited connectivity, demanding latency, and data sovereignty.

- Tasks where SLMs already work in production include structured text extraction, incident classification, shift summaries, and internal chatbots with limited RAG.

- The dominant deployment pattern in 2025 is hybrid: SLM at the edge for 90% of routine requests, large cloud model as fallback for the hard cases.

- Llama 3.2 and Gemma 2 usually outperform Phi-3.5 on Spanish-language text; an in-house evaluation set is worth more than any public benchmark.

What has changed for small models

Until 2023 the term SLM was almost derogatory. Models of 1 to 3 billion parameters were toys compared to GPT-3.5 or Llama 1. Two things have changed that perception.

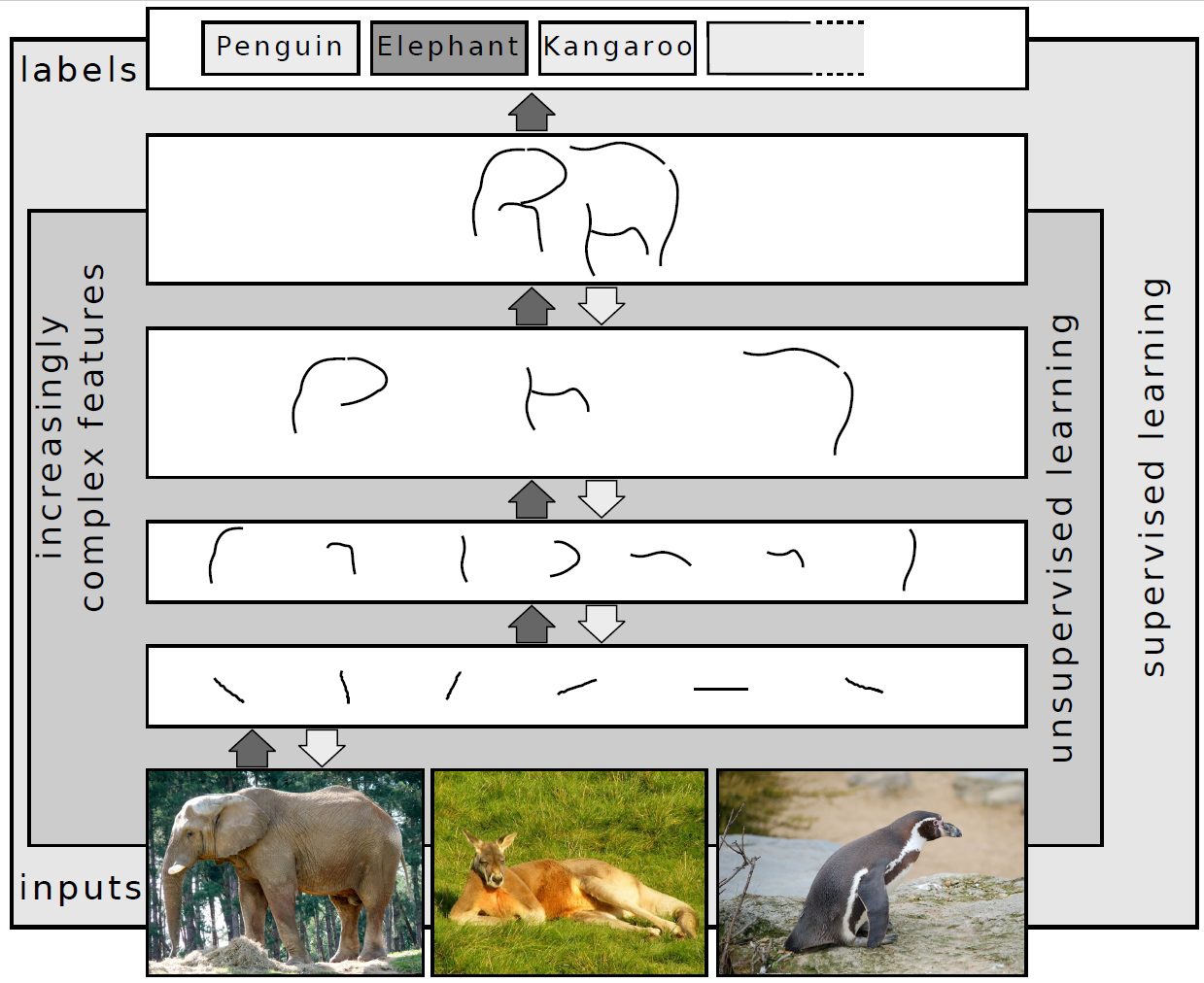

The first is training corpus quality: the small models of 2024 and 2025 are trained on carefully filtered data and synthetic reasoning examples, followed by high-quality post-training. A 3.8 billion parameter model like Phi-3.5-mini performs comparably to the GPT-3.5 of two years ago on reasoning tasks.

The second is execution environment maturity. Tools like llama.cpp, Ollama, and vLLM have polished quantization, efficient weight loading, and batching. A model that previously required a 24 GB GPU now runs on a decent CPU with 16 GB RAM at acceptable latencies.
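As a rough illustration of why quantization changes the hardware picture, the sketch below estimates the memory taken by the weights alone. The figure of about 4.5 bits per weight for a 4-bit scheme is an assumption (it stands in for the per-block scale factors such formats carry), and the estimate ignores the KV cache, activations, and runtime overhead, so real usage will be higher.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate memory for the model weights alone, in GB (10^9 bytes)."""
    # params_billions * 1e9 weights, bits_per_weight / 8 bytes each, divided by 1e9
    return params_billions * bits_per_weight / 8


# Illustrative sizes only; 4.5 bits/weight is an assumed average for a 4-bit format.
for name, params in [("3.8B model", 3.8), ("8B model", 8.0)]:
    fp16 = weight_memory_gb(params, 16)
    q4 = weight_memory_gb(params, 4.5)
    print(f"{name}: fp16 ~ {fp16:.1f} GB, 4-bit ~ {q4:.1f} GB")
```

Under these assumptions an 8 billion parameter model drops from roughly 16 GB of weights at 16-bit precision to under 5 GB, which is what lets it fit in ordinary system RAM.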

The fundamental point is that a small model used well on a bounded task outperforms a large model directed poorly at the same task. If what you need is to classify text, extract fields, or generate short summaries, a tuned small model with good prompting is enough. Bringing a large model to the edge to do the same is, in most cases, wasteful.

Why the industrial edge suits them

Plants, warehouses, and points of sale have three constraints that the edge serves better than the cloud:

- Connectivity: there is not always a stable internet link, and when there is one it is often expensive or slow. A local model removes the connectivity dependency for the tasks it can solve.

- Latency: for an industrial process that depends on a reading before it can act, a two-second response because the packet had to cross the ocean is a problem. A local model avoids that round trip and responds in milliseconds.

- Data sovereignty: many plants have sensitive information — diagrams, recipes, customer data, production figures — that cannot leave the perimeter for compliance with NIS2, GDPR, or sector-specific requirements.

In a typical office environment, where connectivity is good and sovereignty does not press as hard, the balance tilts more easily toward the cloud.

Tasks that already work well

There is a set of tasks where SLMs at the edge already work with production quality:

- Structured extraction from free text (invoices, delivery notes, incident reports, OCR from labels): a small model with a well-designed prompt extracts fields with accuracy rates above 95% on most industrial documents.

- Text classification and routing: an operator writes a report, the model decides whether it is a critical incident, which team it belongs to, or whether it is a duplicate.

- Short summary generation: a supervisor receives dozens of shift readings and the model synthesizes them into a daily summary with important findings.

- Bounded conversational assistance: an internal chatbot about procedures or manuals, with RAG over a limited document corpus. Quality is not at GPT-4 level, but for standard operational queries it is more than enough.
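For the structured extraction case, much of the reliability comes from the code around the model: a prompt that pins down the output shape, and a validator that rejects anything else. The sketch below shows that wrapper only. The field names are a hypothetical schema for a delivery note, and the model call itself is left out.

```python
import json
from typing import Optional

# Hypothetical schema for illustration; replace with the fields your documents carry.
REQUIRED_FIELDS = ("supplier", "document_number", "date", "total")


def build_extraction_prompt(document_text: str) -> str:
    """Build a prompt that asks for one JSON object with a fixed set of keys."""
    fields = ", ".join(REQUIRED_FIELDS)
    return (
        "Extract the following fields from the document and reply with a single "
        f"JSON object containing exactly these keys: {fields}. "
        "Use null for any field that is not present.\n\n"
        f"Document:\n{document_text}"
    )


def parse_extraction(reply: str) -> Optional[dict]:
    """Return the extracted fields, or None if the reply is not usable."""
    try:
        data = json.loads(reply)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    if any(key not in data for key in REQUIRED_FIELDS):
        return None
    # Keep only the expected keys so extra output from the model is dropped.
    return {key: data[key] for key in REQUIRED_FIELDS}
```

A reply that fails validation can be retried or sent to a human queue, which also gives a simple signal for which documents the model finds hard.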

Where SLMs still fall short

SLMs fall short on:

- Multi-step reasoning over complex problems: carrying out long chains of calculation, debugging complicated code, analyzing legal text with nuance.

- Long conversations with a lot of context: context windows are smaller and coherence degrades as the history lengthens.

- Broad and up-to-date knowledge: answering questions about current events, translating cultural slang, interpreting recent references.

- Creative free generation: for marketing material, literary text, or original code from open specifications, large models still produce clearly better results.

The deployment pattern in 2025

The pattern I see working in 2025 has three layers:

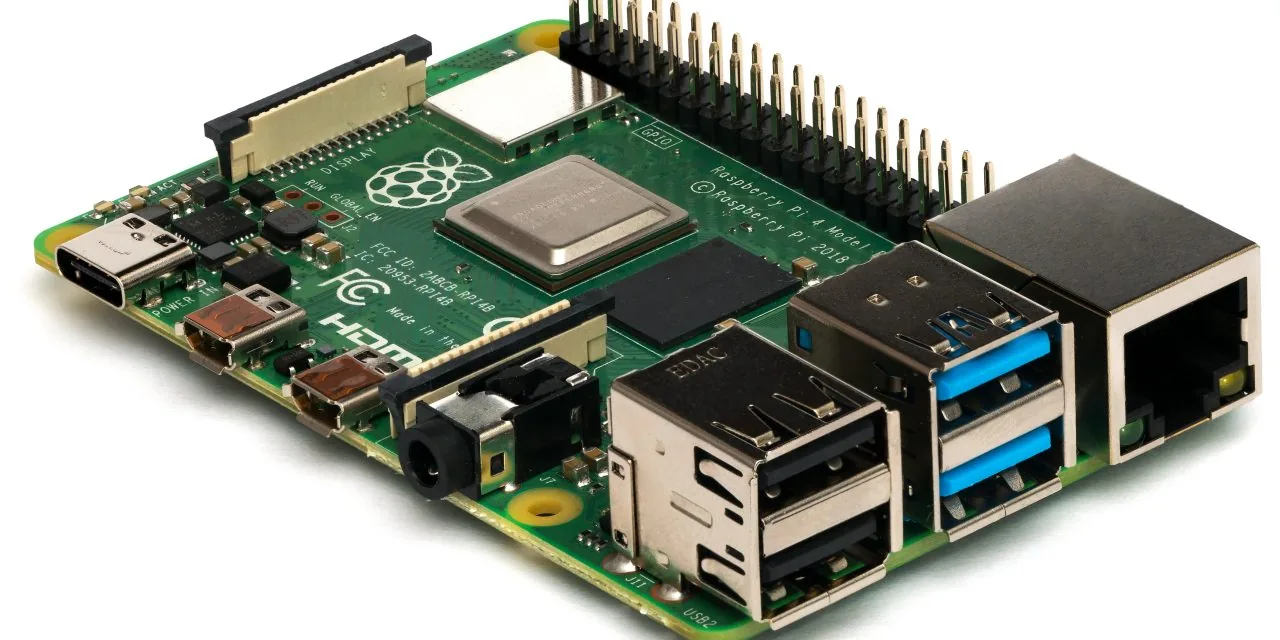

- Hardware: a machine with a powerful CPU or a modest GPU (RTX 4060 or equivalent) and 32 to 64 GB of RAM, at between 1,500 and 3,000 euros per edge node. For low-concurrency tasks without strict latency requirements, a recent integrated GPU from AMD or Intel is enough.

- Execution: Ollama or a server based on vLLM. Ollama is a convenient starting point because it handles model downloads, works with quantized weights out of the box, and serves an OpenAI-compatible API. vLLM scales better for high concurrency.

- Application: the model is consumed as a local API with REST calls, just as it would be against the cloud. The only difference is the URL, which simplifies migration between the two.
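The application layer can be sketched with the standard library alone. The example assumes an OpenAI-compatible chat completions endpoint on both sides. The local URL uses Ollama's default port. The cloud URL, the model tag, and the function names are placeholders chosen for illustration.

```python
import json
import urllib.request

LOCAL_BASE_URL = "http://localhost:11434/v1"   # Ollama default port, OpenAI-compatible path
CLOUD_BASE_URL = "https://api.example.com/v1"  # hypothetical cloud endpoint


def build_chat_request(base_url: str, model: str, prompt: str,
                       api_key: str = "") -> urllib.request.Request:
    """Build a chat completions request; only base_url differs between edge and cloud."""
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    headers = {"Content-Type": "application/json"}
    if api_key:
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(
        f"{base_url}/chat/completions", data=body, headers=headers, method="POST"
    )


def chat(base_url: str, model: str, prompt: str,
         api_key: str = "", timeout: float = 30.0) -> str:
    """Send the request and return the text of the first choice."""
    request = build_chat_request(base_url, model, prompt, api_key)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        payload = json.load(response)
    return payload["choices"][0]["message"]["content"]
```

With a local server running, a call such as `chat(LOCAL_BASE_URL, "llama3.2", "Summarize this shift log: ...")` would go to the edge node, and swapping in `CLOUD_BASE_URL` plus an API key would send the same request to the cloud.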

Model selection

- Phi-3.5-mini: performs well on reasoning and structured tasks; ideal for CPU-only machines.

- Gemma 2 (2B and 9B): good general quality with stable instruction format.

- Llama 3.2: excels at multilingual tasks and has very good support in Ollama.

- Qwen 2.5: shines in translation nuance and broad knowledge.

For Spanish, Llama 3.2 and Gemma 2 usually beat Phi-3.5, which is optimized for English. The difference between models in the same size band is small; investment in prompt engineering and evaluation is what truly moves quality. It is worth having an in-house evaluation set with fifty or a hundred real examples; that set is worth more than any public benchmark.
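An in-house evaluation set does not need tooling to be useful. The sketch below assumes a classification-style task where each example is an input text with one expected label, and where `predict` is whatever function wraps the model under test. Both the function names and the exact-match scoring are illustrative choices, not a standard.

```python
from typing import Callable, Dict, Iterable, List, Tuple


def evaluate(predict: Callable[[str], str],
             examples: Iterable[Tuple[str, str]]) -> Dict[str, object]:
    """Run predict over (text, expected_label) pairs and report exact-match accuracy."""
    total = 0
    failures: List[Tuple[str, str, str]] = []
    for text, expected in examples:
        total += 1
        got = predict(text).strip().lower()
        if got != expected.strip().lower():
            # Keep the failing cases: reading them is usually more useful than the score.
            failures.append((text, expected, got))
    accuracy = (total - len(failures)) / total if total else 0.0
    return {"total": total, "accuracy": accuracy, "failures": failures}
```

Running the same set against two or three candidate models, or two versions of a prompt, turns model selection into a direct comparison on your own data.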

My practical criterion

If the task is bounded, high-volume, and latency matters, an SLM at the edge is the right option. If the task requires complex reasoning or broad world knowledge, or the volume is low, a large model in the cloud is still better. The key is to treat large and small not as a dichotomy but as different tools for different cases.

The architecture I see working most often is hybrid: an SLM at the edge for 90% of routine requests, and a fallback to a large cloud model for the hard cases the local model flags as uncertain. This pattern combines the best of both worlds: low cost, low latency, and sovereignty for most requests, with an ace up the sleeve for the rare ones.
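A minimal sketch of that routing logic follows. It assumes the local pipeline can attach a confidence score between 0 and 1 to each answer; how that score is produced (token probabilities, a validation step, a self-check prompt) is left open, and the `LocalAnswer` type, the `route` function, and the 0.7 threshold are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass
class LocalAnswer:
    text: str
    confidence: float  # assumed score in [0, 1] reported by the local pipeline


def route(prompt: str,
          local: Callable[[str], LocalAnswer],
          cloud: Callable[[str], str],
          threshold: float = 0.7) -> Tuple[str, str]:
    """Answer at the edge when the local model is confident, else fall back to the cloud.

    Returns (answer_text, source) where source is "edge" or "cloud".
    """
    answer = local(prompt)
    if answer.confidence >= threshold:
        return answer.text, "edge"
    # The cloud model is only called for requests flagged as uncertain.
    return cloud(prompt), "cloud"
```

Logging the `source` value per request shows what share of traffic actually stays at the edge, and the threshold can then be tuned against the in-house evaluation set. Where sovereignty rules forbid sending some data out, the fallback would need a filter in front of it.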

The cultural shift still missing is to stop treating the large model as the default answer. For a large fraction of industrial cases, a small local model is better: faster, cheaper, more private, and with fewer dependencies. Learning to distinguish which cases fall on each side is a skill worth developing, especially as SLMs keep improving.