LoRA and QLoRA: efficient fine-tuning within reach of a single laptop

Updated: 2026-05-03

LoRA (Low-Rank Adaptation) and QLoRA (Quantized LoRA) democratized fine-tuning of large language models by solving the central problem that made it prohibitive: GPU memory. The idea behind LoRA is elegantly simple: instead of updating every parameter of the model during training, it adds low-rank adaptation matrices that learn the delta between the base model's behavior and the desired behavior. QLoRA takes the idea further by combining quantization of the base model with LoRA adapters, which makes fine-tuning 70B models feasible on consumer hardware.

Key points

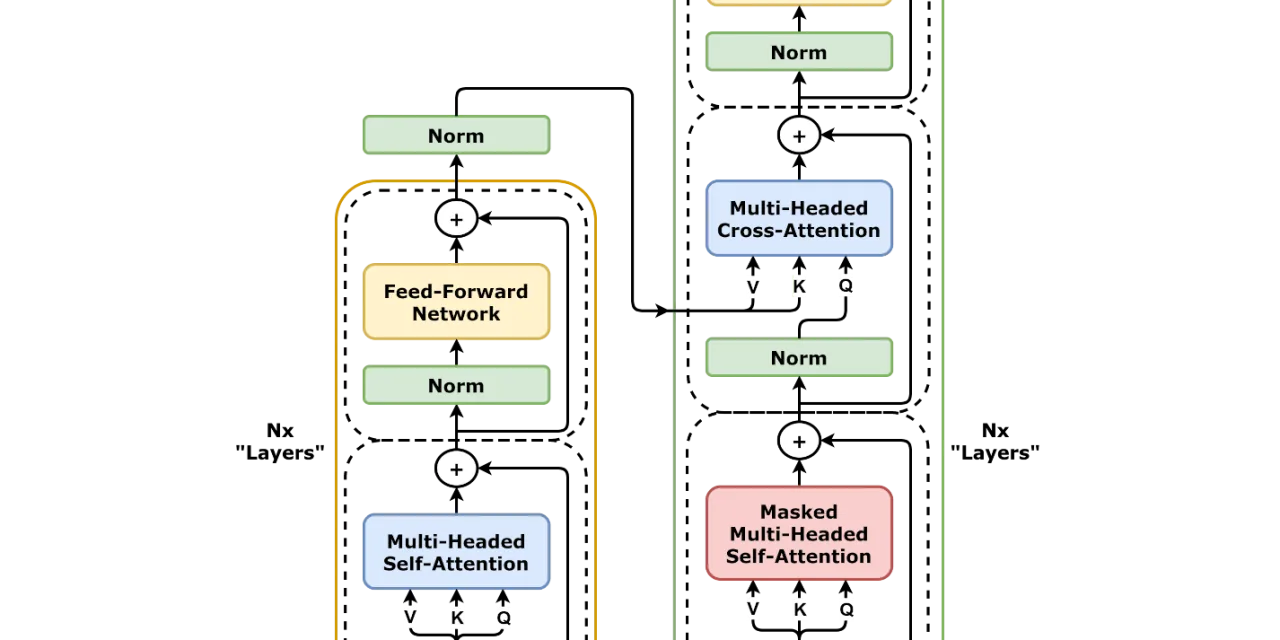

- LoRA adds low-rank matrices (typically rank 4-64) to the attention layers; the base model stays frozen.

- QLoRA quantizes the base model to 4 bits (NF4) and keeps only the LoRA adapters in 16-bit precision, reducing the memory needed by 65-75%.

- A Llama 3 8B needs about 128 GB of VRAM for full fine-tuning in FP32 (weights, gradients, and Adam state); with QLoRA it fits on a 24 GB RTX 3090.

- The quality loss relative to full fine-tuning is 1-3% on standard benchmarks, which is acceptable for most use cases.

- The choice between LoRA/QLoRA and prompt engineering depends on the volume of data available and how specific the domain is.

The problem they solve

Traditional fine-tuning of an 8B Llama 3 model requires holding all of the following in memory at once:

- Model parameters: 8B × 4 bytes (FP32) = 32 GB for the weights alone.

- Training gradients: another 32 GB.

- Adam optimizer state: an additional 64 GB (two moments per parameter).

- Total: 128 GB of VRAM before counting activations, which already takes at least two 80 GB A100s.

At the 70B scale the cost is multiplied by 8.75x, to roughly 1,120 GB, which is beyond any reasonable budget for experimentation.
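
The arithmetic above can be reproduced with a few lines of Python. This is a rough sketch under the same assumptions as the list (FP32 everywhere, Adam with two moments per parameter, activations ignored); the function name is illustrative, not part of any library.

```python
def full_finetune_memory_gb(n_params_billion, bytes_per_param=4):
    """Rough memory estimate for full fine-tuning with Adam, ignoring activations."""
    weights = n_params_billion * bytes_per_param   # billions of params x bytes = GB
    gradients = weights                            # one gradient per weight
    adam_state = 2 * weights                       # first and second moments
    return {
        "weights": weights,
        "gradients": gradients,
        "adam_state": adam_state,
        "total": weights + gradients + adam_state,
    }

for size in (8, 70):
    estimate = full_finetune_memory_gb(size)
    print(f"{size}B model: {estimate['total']:.0f} GB")
# 8B model: 128 GB
# 70B model: 1120 GB
```

Real training runs need more than this, since activations and framework overhead come on top of the three terms counted here.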

LoRA solves the problem with a theoretical observation: the changes that fine-tuning induces in the model's weights have low intrinsic rank. You don't need to update a dense matrix with millions of parameters; you can approximate the change with the product of two much smaller matrices:

ΔW ≈ A × B

where A ∈ ℝ^(d×r) and B ∈ ℝ^(r×k), with r << d and r << k.

If the rank r is 8 and the original dimension is 4096, the number of trainable parameters drops from 4096² ≈ 16.8M to 2 × 4096 × 8 ≈ 65.5k. That is a 256x reduction in trainable parameters, which implies a proportional reduction in gradients and optimizer state.
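
A small pure-Python sketch can make the shapes and the parameter count concrete. The helper names are illustrative, and the tiny matrices are there only to check that a (d × r) by (r × k) product has the full (d × k) shape.

```python
def matmul(X, Y):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*Y)] for row in X]

def lora_param_counts(d, k, r):
    """Return (dense params, low-rank params, reduction factor) for a d x k matrix."""
    dense = d * k
    low_rank = d * r + r * k
    return dense, low_rank, dense / low_rank

# Tiny shape check: A is d x r, B is r x k, so A x B is d x k.
d, k, r = 6, 5, 2
A = [[0.1] * r for _ in range(d)]
B = [[0.2] * k for _ in range(r)]
delta_W = matmul(A, B)
print(len(delta_W), len(delta_W[0]))      # 6 5

# The numbers from the text: d = k = 4096, r = 8.
print(lora_param_counts(4096, 4096, 8))   # (16777216, 65536, 256.0)
```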

QLoRA: quantization plus adapters

QLoRA adds one more step: it quantizes the base model to NF4 (4-bit NormalFloat), a quantization format that preserves the weight distribution of LLMs better than standard integer quantization. On top of that quantized model, the LoRA adapters are trained in BFloat16.

The combination makes it possible to:

- Load a 70B model across four 24 GB GPUs (instead of ten 80 GB GPUs).

- Load a 7B model on a single 8 GB GPU.

- Fine-tune 13B models on a laptop with a 16 GB RTX 3080.

The quality loss of NF4 relative to FP16 is well documented: between 1% and 3% on reasoning and coding benchmarks, which is acceptable for most domain-specific use cases.
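
To see why these configurations become feasible, it helps to estimate the size of the frozen weights alone at 16 bits and at 4 bits. The sketch below is a back-of-the-envelope calculation with an illustrative function name; it ignores quantization constants, the adapters themselves, activations, and framework overhead, so real usage is higher.

```python
def weight_memory_gb(n_params_billion, bits_per_param):
    """Approximate size of the model weights alone, in GB."""
    return n_params_billion * bits_per_param / 8

for size in (7, 13, 70):
    fp16 = weight_memory_gb(size, 16)
    nf4 = weight_memory_gb(size, 4)
    print(f"{size}B: {fp16:.1f} GB at 16 bits, {nf4:.1f} GB at 4 bits")
```

A 7B model drops to about 3.5 GB of weights and a 70B model to about 35 GB, which leaves room on the GPUs listed above for adapters and activations.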

Workflow with PEFT and Hugging Face

The Hugging Face ecosystem makes the workflow surprisingly straightforward. The PEFT (Parameter-Efficient Fine-Tuning) library manages the LoRA adapters; transformers loads the base model; trl (Transformer Reinforcement Learning) provides SFTTrainer for supervised fine-tuning:

    from transformers import AutoModelForCausalLM, BitsAndBytesConfig
    from peft import LoraConfig, get_peft_model
    from trl import SFTTrainer  # used for the supervised training step (not shown)

    # Load the quantized base model (QLoRA)
    bnb_config = BitsAndBytesConfig(
        load_in_4bit=True,
        bnb_4bit_quant_type="nf4",
        bnb_4bit_compute_dtype="bfloat16",
    )
    model = AutoModelForCausalLM.from_pretrained(
        "meta-llama/Meta-Llama-3-8B-Instruct",
        quantization_config=bnb_config,
        device_map="auto",
    )

    # Configure the LoRA adapters
    lora_config = LoraConfig(
        r=16,                                 # rank of the matrices
        lora_alpha=32,                        # scaling factor
        target_modules=["q_proj", "v_proj"],  # layers to adapt
        lora_dropout=0.05,
        bias="none",
        task_type="CAUSAL_LM",
    )
    model = get_peft_model(model, lora_config)
    model.print_trainable_parameters()
    # trainable params: 5,242,880 || all params: 8,035,885,056 || trainable%: 0.0653

The result: less than 0.07% of the parameters are trainable, with a proportional memory reduction in gradients and optimizer state.

Choosing hyperparameters

The LoRA hyperparameters with the most impact on the result:

- Rank (r): low values (4-8) for simple style or format tasks; medium values (16-32) for domain adaptation; high values (64-128) for deeper behavioral changes. A higher rank means more trainable parameters and more capacity, but also a higher risk of overfitting.

- lora_alpha: typically 2× the rank. It controls the scaling factor of the adapters.

- target_modules: the query and value projection layers (q_proj, v_proj) are the minimum; adding the key (k_proj) and output (o_proj) projections improves quality at the cost of more parameters.

- Learning rate: higher than in full fine-tuning, typically in the 1e-4 to 3e-4 range.
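
The interaction between rank, lora_alpha, and target_modules can be sketched numerically. In PEFT's default configuration the adapter output is scaled by lora_alpha / r, so keeping lora_alpha at 2× the rank holds that factor at 2 regardless of r. The shapes below are a simplifying assumption (every projection treated as 4096 × 4096); real models with grouped-query attention have smaller key and value projections, and the helper names are illustrative.

```python
def lora_scale(r, lora_alpha):
    """Scaling applied to the adapter output in the default (non-rsLoRA) setup."""
    return lora_alpha / r

def adapter_params_per_layer(r, module_shapes):
    """Trainable LoRA parameters for one transformer layer.

    module_shapes maps a module name to its (d_in, d_out) shape.
    """
    return sum(r * (d_in + d_out) for d_in, d_out in module_shapes.values())

HIDDEN = 4096                    # assumed hidden size, for illustration only
square = (HIDDEN, HIDDEN)        # simplification: all projections treated as square
minimal = {"q_proj": square, "v_proj": square}
extended = {**minimal, "k_proj": square, "o_proj": square}

for r in (8, 16, 64):
    print(
        f"r={r}: scale={lora_scale(r, 2 * r)}, "
        f"q+v={adapter_params_per_layer(r, minimal):,}, "
        f"q+k+v+o={adapter_params_per_layer(r, extended):,}"
    )
```

Under these assumptions, doubling the rank or doubling the number of target modules each doubles the trainable parameters per layer, which is the capacity versus overfitting trade-off described in the list.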

For models you will later serve with vLLM in production, make sure the format of the LoRA adapters is compatible with the vLLM version you use; Multi-LoRA support lets you serve several adapters on top of the same base model.

LoRA/QLoRA versus prompt engineering

The choice between fine-tuning and prompt engineering comes down to how much data you have, how specific the domain is, and how much consistency and cost matter in production.

When fine-tuning pays off:

- You have more than 500-1,000 high-quality training examples.

- The domain has specific vocabulary, formats, or reasoning that the base model does not know.

- The task requires very consistent formatting (prompt engineering can produce unwanted variability).

- Token cost in production is significant; a fine-tuned model needs shorter prompts.

When prompt engineering is enough:

- Training data is scarce or hard to obtain.

- The task is reasonably general and the base model handles it well with instructions.

- You need to iterate quickly without training cycles.

- The base model's hallucination rate is acceptable for the use case.

The most common mistake is fine-tuning on insufficient data (fewer than 200 examples) and expecting large improvements. With little data, the model learns the format but loses generalization. LoRA/QLoRA leave little technical friction for experimenting, but that does not remove the requirement for quality data.

Deploying LoRA adapters

Once trained, the LoRA adapter is saved as a file separate from the base model, typically 10-200 MB compared with the several GB of the full model. The deployment options:

- Merging: merge_and_unload() merges the adapter weights into the base model, producing a standard model that any runtime can serve.

- Multi-LoRA with vLLM: serve several adapters on the same base model, switching per request. Very efficient when you have several specialized fine-tunes.

- Separate adapter with transformers: loads the base model plus the adapter dynamically; useful for experimentation.
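
What merging does mathematically can be shown with a toy example. The sketch below is not PEFT's implementation; it uses tiny hand-written matrices, an assumed scaling factor of 2.0, and illustrative helper names to show that folding the scaled product A × B into W gives the same output as running the base weights and the adapter as separate paths.

```python
def matmul(X, Y):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*Y)] for row in X]

def add_scaled(X, Y, scale=1.0):
    """Return X + scale * Y, element by element."""
    return [[a + scale * b for a, b in zip(rx, ry)] for rx, ry in zip(X, Y)]

W = [[1.0, 0.0, 2.0], [0.0, 1.0, -1.0]]   # frozen base weights, d=2, k=3
A = [[0.5], [-1.0]]                        # adapter factor, d x r with r=1
B = [[2.0, 0.0, 1.0]]                      # adapter factor, r x k
scale = 2.0                                # assumed lora_alpha / r
x = [[1.0], [2.0], [3.0]]                  # input as a column vector

# Separate adapter: base path plus scaled low-rank path.
y_separate = add_scaled(matmul(W, x), matmul(A, matmul(B, x)), scale)

# Merged: fold the adapter into the weights once, then do a single matmul.
W_merged = add_scaled(W, matmul(A, B), scale)
y_merged = matmul(W_merged, x)

print(y_separate, y_merged)   # [[12.0], [-11.0]] [[12.0], [-11.0]]
```

The merged form has no extra cost at inference time, while the separate form keeps the adapter swappable, which is the trade-off behind the options listed above.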

For observability of the fine-tuned model in production, the challenge specific to LoRA adapters is that tracking which adapter was used for each inference requires explicit instrumentation; most observability tools do not capture it by default.
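
One possible shape for that instrumentation is a thin wrapper that records the adapter name next to each request. Everything in this sketch is hypothetical: InferenceRecord, run_with_adapter_tracking, and the generate_fn callable are illustrative names, not part of vLLM, PEFT, or any observability tool.

```python
import json
import logging
import time
from dataclasses import asdict, dataclass
from typing import Callable, Optional

@dataclass
class InferenceRecord:
    """Hypothetical per-request record; field names are illustrative."""
    request_id: str
    base_model: str
    adapter_name: Optional[str]   # None means the base model was used directly
    latency_ms: float

def run_with_adapter_tracking(
    request_id: str,
    base_model: str,
    adapter_name: Optional[str],
    generate_fn: Callable[[str], str],
    prompt: str,
    logger: logging.Logger,
):
    """Call generate_fn and log which adapter served the request."""
    start = time.perf_counter()
    output = generate_fn(prompt)
    latency_ms = (time.perf_counter() - start) * 1000
    record = InferenceRecord(request_id, base_model, adapter_name, latency_ms)
    logger.info(json.dumps(asdict(record)))   # one JSON line per inference
    return output, record

# Usage with a stand-in generator in place of a real model call.
logging.basicConfig(level=logging.INFO)
output, record = run_with_adapter_tracking(
    "req-1", "base-model", "legal-v1", lambda p: p.upper(), "hello",
    logging.getLogger("inference"),
)
```

Emitting the adapter name as a structured field makes it possible to break down latency or quality metrics per adapter later on.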

Conclusion

LoRA and QLoRA removed the hardware barrier that made fine-tuning prohibitive for most teams. With QLoRA, a 7B model fits on an 8 GB GPU, and the quality loss relative to full fine-tuning is marginal in practice. The real bottleneck is no longer the hardware or the framework: it is quality training data and rigorous evaluation of whether fine-tuning actually improves what matters for your use case.